NEW PAPER from UK AISI Model Transparency team: Could we catch AI models that hide their capabilities? We ran an auditing game to find out. The red team built sandbagging models. The blue team tried to catch them. The red team won. Why? 🧵1/17

Joseph Bloom

@JBloomAus

White Box Evaluations Lead @ UK AI Safety Institute. Open Source Mechanistic Interpretability. MATS 6.0. ARENA 1.0.

At the #Neurips2025 mechanistic interpretability workshop I gave a brief talk about Venetian glassmaking, since I think we face a similar moment in AI research today. Here is a blog post summarizing the talk: davidbau.com/archives/2025/…

Imagine if ChatGPT highlighted every word it wasn't sure about. We built a streaming hallucination detector that flags hallucinations in real-time.

A simple AGI safety technique: AI’s thoughts are in plain English, just read them. We know it works, with OK (not perfect) transparency! The risk is fragility: RL training, new architectures, etc. threaten transparency. Experts from many orgs agree we should try to preserve it: 🧵

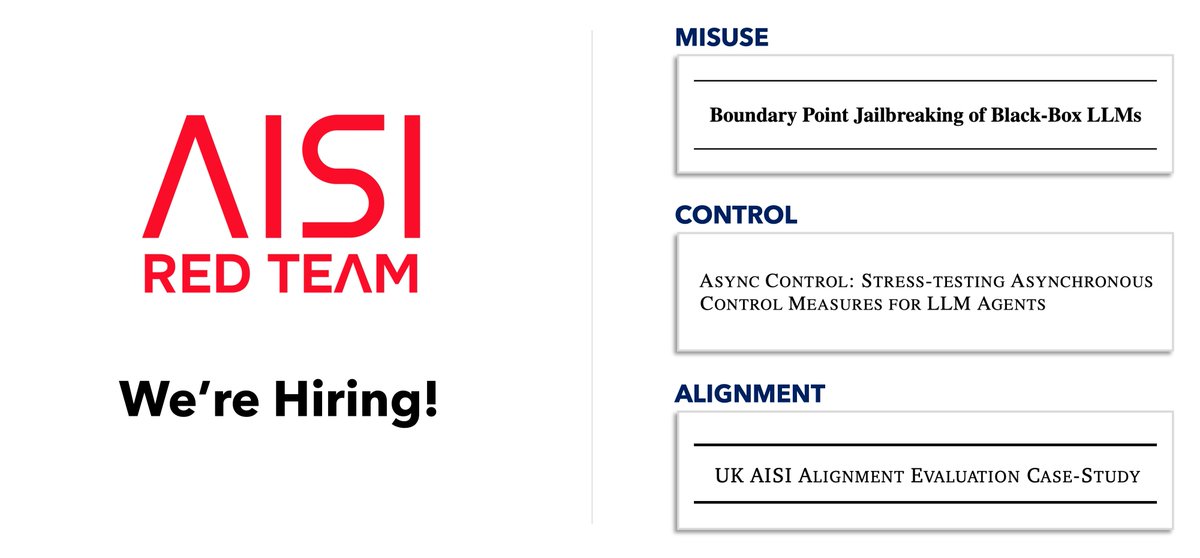

The AISI Whitebox Control Team is doing cool investigations into how well linear probes work, and has a new post sharing nuanced in-progress work. The results are mixed, in interesting ways! Please see Joseph's thread for details! I have only high-level observations. 🧵

We’ve released a detailed progress update on our white box control work so far! Read it here: alignmentforum.org/posts/pPEeMdgj…