James Ide

Expo SDK 55 is here😅 React Native 0.83. React 19.2. Legacy Architecture dropped. New Architecture is the default.
◆ 75% smaller update downloads with Hermes bytecode diffing
◆ Expo Router v7 🤯
◆ Brownfield isolation via expo-brownfield
◆ AI tooling: MCP + agent skills for Claude Code
◆ Expo UI much improved for both Swift and Jetpack Compose
There is so much more...it feels futile to try and isolate a few bullets...check out the full release notes and let us know what you think ↓

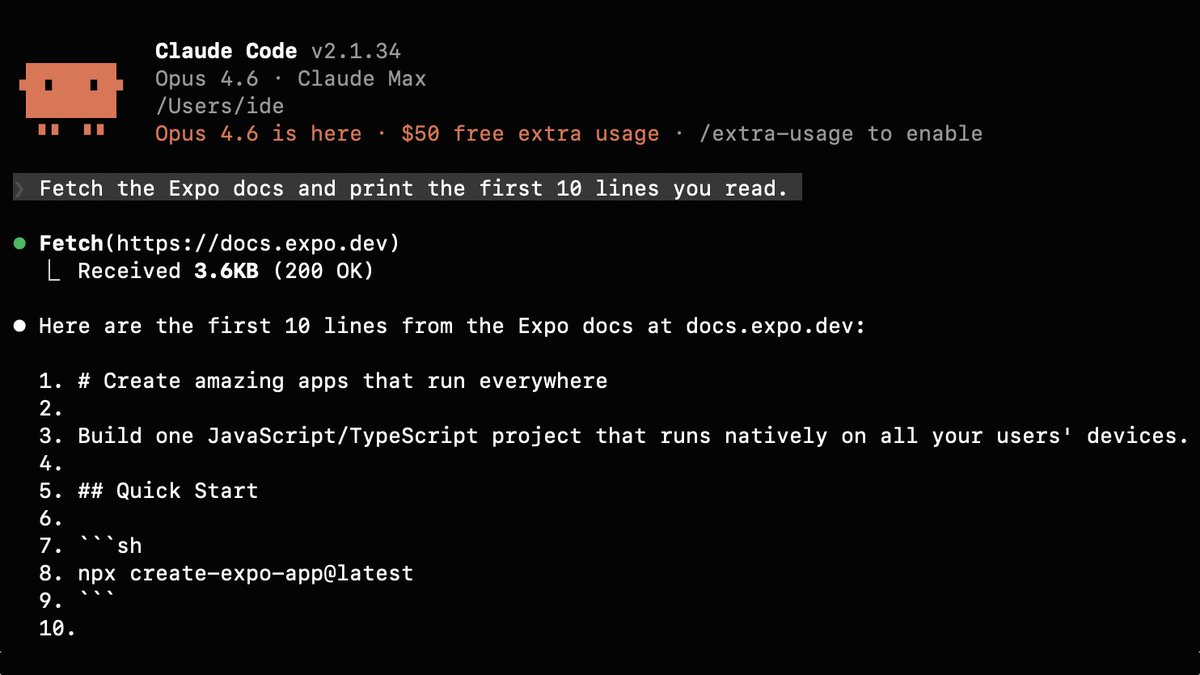

When reading the Expo docs: you get HTML, LLMs get Markdown. Fit whole docs in your context window, easily.

Did you know? You can append .md to the URL of any @expo blog or changelog post to get the content as Markdown (this also works with Accept headers).
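A minimal sketch of both approaches, for anyone scripting this. The helper names, the example URL, and the `text/markdown` media type are assumptions for illustration; the post only says to append `.md` or use "accept headers".

```typescript
// Hypothetical helper: builds the Markdown variant of a post URL
// by appending ".md" to the path, as the post describes.
function toMarkdownUrl(postUrl: string): string {
  const url = new URL(postUrl);
  // Drop any trailing slash so the result is "/post.md", not "/post/.md".
  const path = url.pathname.replace(/\/+$/, "");
  url.pathname = `${path}.md`;
  return url.toString();
}

// Alternative: keep the URL unchanged and ask for Markdown via the Accept header.
// "text/markdown" is an assumed media type, not confirmed by the post.
function markdownRequestInit(): { headers: Record<string, string> } {
  return { headers: { Accept: "text/markdown" } };
}

// Placeholder URL, not a real post.
const examplePost = "https://expo.dev/blog/some-post";
console.log(toMarkdownUrl(examplePost));

// Usage with fetch (not executed here):
// const res = await fetch(examplePost, markdownRequestInit());
// const markdown = await res.text();
```

Going through `URL` instead of plain string concatenation keeps any query string or fragment after the `.md` suffix.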

Your AI conversations aren't privileged. Yesterday, Judge Jed Rakoff ruled that 31 documents a defendant generated using an AI tool and later shared with his defense attorneys are not protected by attorney-client privilege or work product doctrine.

The logic is simple: an AI tool is not an attorney. It has no law license, owes no duty of loyalty, and its terms of service explicitly disclaim any attorney-client relationship. Sharing case details with an AI platform is legally no different from talking through your legal situation with a friend (which is not privileged).

You can't fix it after the fact, either. Sending unprivileged documents to your lawyer doesn't retroactively make them privileged. That's been settled law for years. It just hadn't been tested with AI until now.

And here's what really hurt the defendant: the AI provider's privacy policy (Claude), in effect when he used the tool, expressly permits disclosure of user prompts and outputs to governmental authorities. There was no reasonable expectation of confidentiality.

The core problem is the gap between how people experience AI and what's actually happening. The conversational interface feels private. It feels like talking to an advisor. But unless you negotiate for an enterprise agreement that says otherwise, you're inputting information into a third-party commercial platform that retains your data and reserves broad rights to disclose it.

Judge Rakoff also flagged an interesting wrinkle: the defendant reportedly fed information from his attorneys into the AI tool. If prosecutors try to use these documents at trial, defense counsel could become a fact witness, potentially forcing a mistrial. Winning on privilege doesn't make the evidentiary picture simple.

For anyone advising clients or managing legal risk, this is a wake-up call. AI tools are not a safe space for clients to process their counsel's advice and to regurgitate their legal strategy. Every prompt is a potential disclosure. Every output is a potentially discoverable document.

So what do we do about it?

First, attorneys need to be proactive. Advise clients explicitly that anything they put into an AI tool may be discoverable and is almost certainly not privileged. Put it in your engagement letters. Make it part of onboarding. Don't assume clients understand this, because most don't.

Second, if clients want to use AI to help process legal issues (and they clearly will, increasingly), then let's give them a way to do it inside the privilege. Collaborative AI workspaces shared between attorney and client, where the AI interaction happens under counsel's direction and within the attorney-client relationship, can change the analysis entirely. I'm excited to be planning this kind of approach, and I think it's where the industry needs to head.

storage.courtlistener.com/recap/gov.usco…

SimpleClaw launched 5 days ago. Today it hit $17k MRR, and the owner is selling this SaaS project. He is asking $2.25 million.

I fear the future of work with AI. If you look at where the technology got us with instant messaging, it's clear that the future of work with AI won't be that we work less. It will be that we work more, and are expected to contribute prompts at any time to move the agents forward.

I repeatedly tell GCP they erode customer trust with changes like these and I can't recommend them to others choosing cloud providers. In any case, does it make sense for Cloudflare to become a Verified Peering Provider, either now or as something to work towards? It's this thing: peering.google.com/#/options/veri…