Jack Wu


@JackTripleU

Building Products @Meta Superintelligence Labs. Managing AIs and engineers who manage AIs who manage AIs who (I think) write code.

As AI agents accelerate coding, what is the future of software engineering? Some trends are clear, such as the Product Management Bottleneck: the idea that we are more constrained by deciding what to build than by the actual building. But many implications, such as AI's impact on the job market and how software teams will be organized, are still being sorted out. The theme of our AI Developer Conference on April 28-29 in San Francisco is The Future of Software Engineering. I look forward to speaking about this topic there, hearing from other speakers on this theme, and chatting with attendees about it. We're shaping the future, and I hope you will join me there!

It is currently trendy in some technology and policy circles to forecast massive job losses due to AI. Even if they have not yet materialized, these losses certainly must be just over the horizon! I have a contrarian view: the AI jobpocalypse (the notion that AI will lead to massive unemployment, perhaps even rioting in the streets) won't be nearly as bad as pundits' dire forecasts suggest, especially pundits who are trying to paint a picture of how powerful their AI technology is.

Among professions, AI is accelerating software engineering the most, given the rise of coding agents. According to a new report by Citadel Research, software engineering job postings are rising rapidly. So if software engineering is a harbinger of the impact AI will have on other professions, this expansion of software engineering jobs is encouraging.

Yes, fresh college graduates are having a hard time finding jobs. And yes, there have been layoffs that CEOs have attributed to AI, even if a large fraction of these were "AI washing," in which businesses attribute layoffs to AI even though AI has not yet changed their internal operations much. And yes, a subset of job roles, such as call center operator, are more heavily impacted. Many people are feeling significant job insecurity, and I feel for everyone struggling with employment, whether or not the cause is AI-related. But many other factors, such as over-hiring during the pandemic and high interest rates, have also contributed to the slowdown in the labor market, so the notion that AI is leading to unemployment is oversimplified.

In software engineering, I see a lot of exciting work ahead to adapt our workflows. It is already clear that:
(i) As AI makes coding easier, a lot more people will be doing it.
(ii) Writing code by hand, and even reading (generated) code, is not that important, because we can ask an LLM about the code and operate at a higher level than the raw syntax (although how high we can or should go is rapidly changing).
(iii) There will be a lot more custom applications, because it's now economical to write software for smaller and smaller audiences.
(iv) Deciding what to build, more than the actual building, is becoming a bottleneck.
(v) The cost of paying down technical debt is decreasing, since AI can refactor for you.

At the same time, there are also a lot of open questions for our profession, such as:
- In the future, what will be the key skills of a senior software engineer? And for junior levels, what should the new Computer Science curriculum be?
- If everyone can build features, what skills, strategies, or resources create competitive advantage for individuals and for businesses?
- What are the new building blocks (libraries, SDKs, etc.) of software? How do we organize coding agents to create software?
- What should a software team look like? For example, how many engineers, product managers, designers, and so on? What tooling do we need to manage their workflow?
- How do AI agents change the workflow of machine learning engineers and data scientists? For example, how can we use agents to accelerate exploring data, identifying hypotheses, and testing them?

I'm excited to explore these and other questions about the future of software engineering at AI Dev, which I expect to be a great event. Please join us! [Original text: The Batch newsletter.] ai-dev.deeplearning.ai

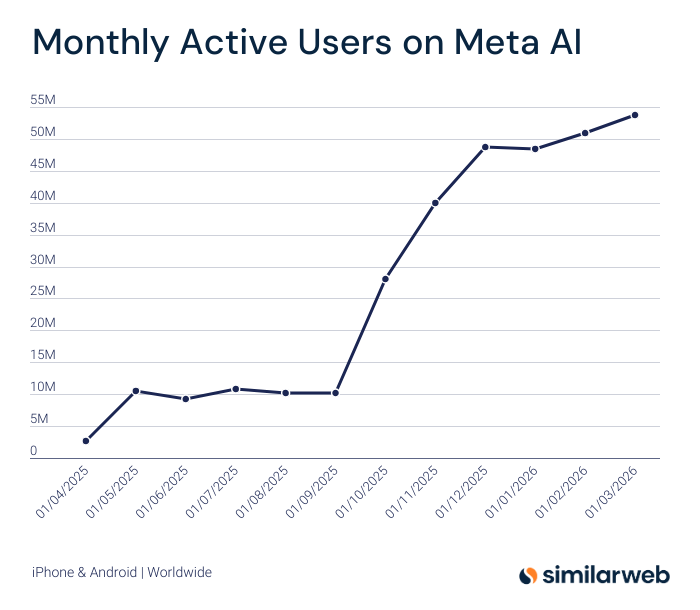

I tested $META's Muse Spark over the last few hours and came away net positive. 3 main takeaways:

1) Quality: It's a very good model. Not quite frontier, but good. It showed comparable performance to Opus 4.6 across web data search, PDF parsing, and general knowledge/conversation/writing. It's worse at coding: both models solved an easy coding task, but Muse Spark failed the hard one while Opus 4.6 one-shotted it. Its image generation is also worse than ChatGPT's. But all in all, it's a legitimate and usable general model. There's lots of room to develop the UI further (e.g., it should show a map when recommending local restaurants), but the underlying model itself is impressive.

2) Speed: Notably, Muse Spark answered almost instantly, while Opus 4.6 felt borderline unusable at times. I'm a huge Anthropic fan, but latency has become a major issue: simple answers take too long and multi-step agent flows break more often. Meta seems to have more available compute, which is a real factor going forward.

3) Scaling: Meta hasn't published a full model card, so we're working with limited disclosure. But the graphs below might be the most important part of the release. Their rearchitected pretraining stack shows a near-linear relationship between RL compute and accuracy. If that holds, Meta has a clear path to training much larger, more intelligent models. That's arguably more consequential than Muse Spark itself.

All in all, it's positive: Muse Spark is a good, usable model, it's being served smoothly, and Meta looks to be on an encouraging trajectory.

By far the coolest thing Meta AI's Muse Spark can do is count objects! As you can tell, it's far from perfect. They call it "visual grounding": it can count objects and draw bounding boxes.

I've been playing with the new model and here's what I think so far:

Good stuff:
– Incredible at vision. Its ability to read text in images is the best I've seen.
– Really high quality at web design. It's the only model I've seen that uses Unsplash, OpenLibrary, and other images by default.
– It's free! You don't pay to use Muse Spark Thinking.

Bad stuff:
– Meta's classic playbook of growth tactics is dodgy. They're sending Instagram notifs to people's friends without their consent. Their app ranking jump is not organic.
– Reasoning itself is pretty solid but not best in class. It can do pretty advanced math and science problems.

The long-term threat here is that Meta has distribution and can give its model away for free, which makes it a formidable threat to the big AI labs, particularly in consumer.

i find muse spark is very good at data analysis, both finding relevant open-source data and analyzing it. for example, here are my results for analyzing global share of GDP over the past century: meta.ai/share/cw54skLB…

Not usually a Meta AI user, but I wanted to give them a shot after the latest model release (it's free anyway). So I installed the app on my desktop and noticed "contemplating" mode (didn't see that on the mobile app, btw). When I asked a question, 16 agents simultaneously started working on it, which looks pretty cool!