James G. Beldock

3.3K posts

@jbeldock

Day Job: Facebook, formerly @ShotSpotter. Night Job: SF Bay Area Immigrant. Life Job: New Yorker and lesser half of @TatyanaDB. https://t.co/jF6JNGdp0c

Crazy to see the positive response to Muse Spark. I joined Meta in Dec and was surprised how startup-y MSL feels. Dec through now, through the holidays was literally nonstop building for me. Everyone on the team cares. People want to do great work

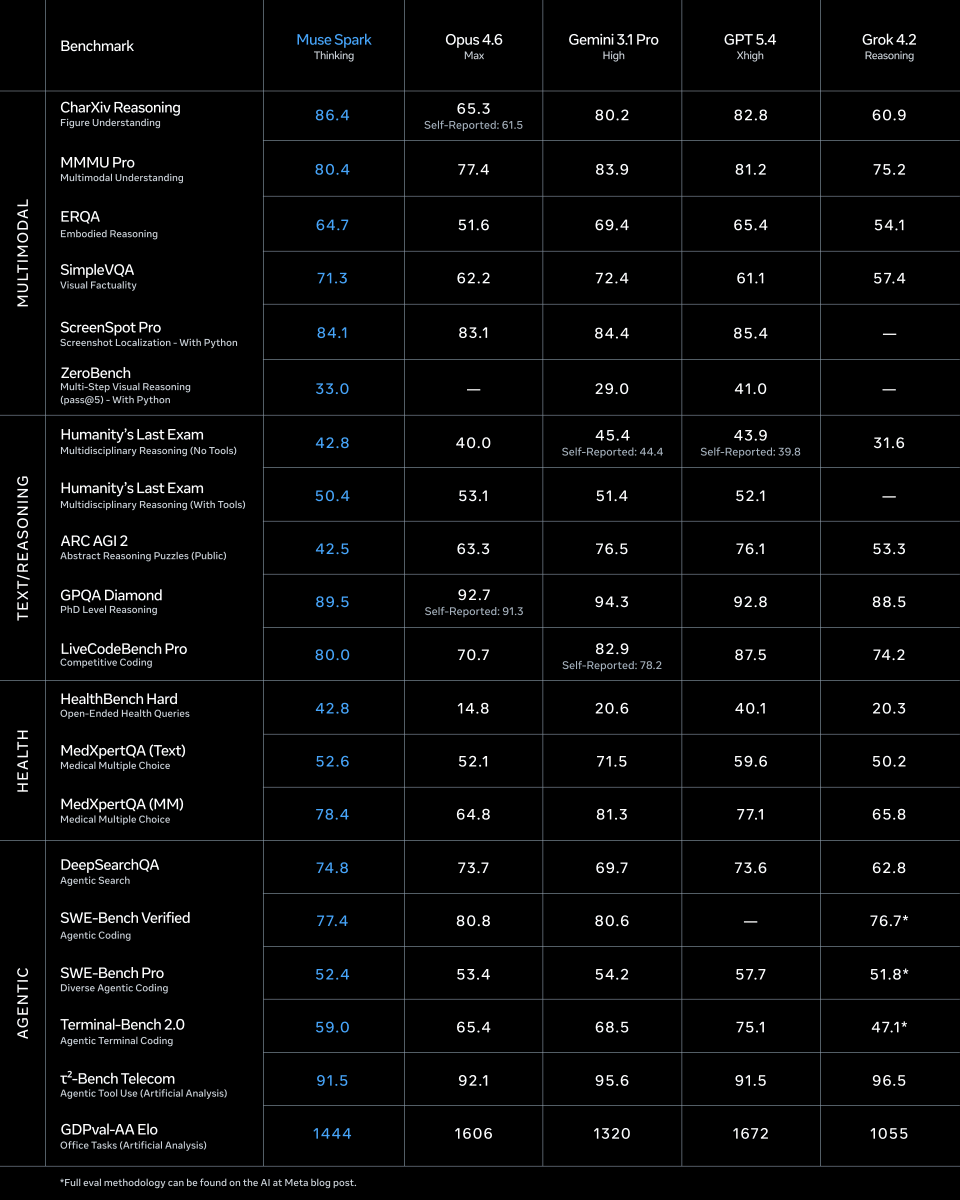

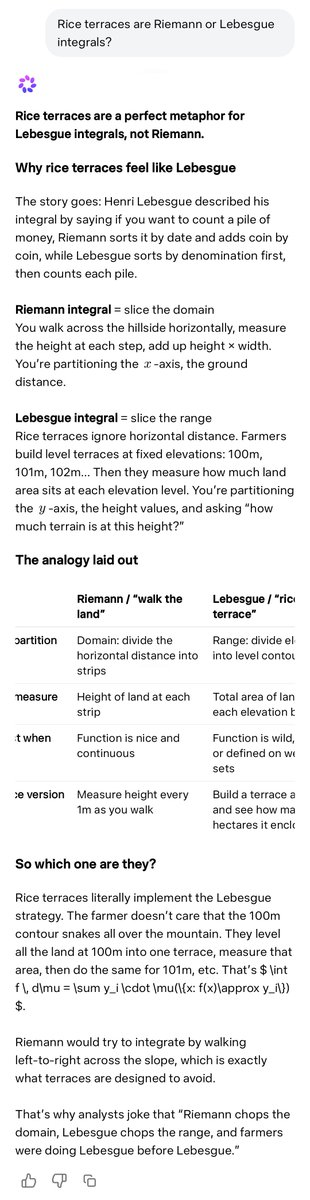

Meta is back. 🔥 It finally dropped its first model since Zuckerberg started writing checks like crazy: Muse Spark (originally codenamed Avocado). It's a natively multimodal reasoning model that can look, reason, use tools, and split hard work across multiple cooperating agents. Meta claims it can reach similar capability with over 10x less training compute than Llama 4 Maverick. Meta is not positioning Muse Spark as a top-of-the-line model, but is instead highlighting its efficiency and “competitive performance” on various tasks.
The old bottleneck in AI is that one model often has to read, plan, call tools, and solve everything in one stream, which wastes compute and slows hard tasks. The key idea here is multi-agent orchestration, where several copies of the model work on the same problem in parallel and then compare or merge results, which is closer to a small team than a single assistant. That changes the scaling story because better performance no longer comes only from making 1 model bigger, but also from spending compute more intelligently at run time.
So Muse is a stack built around 3 scaling axes: stronger pretraining for basic world and code understanding, steadier RL for improving answers after pretraining, and test-time reasoning so the model spends extra compute only when a problem is hard. The most interesting part is multi-agent orchestration, where several copies of the model reason in parallel and compare work, which raised Humanity’s Last Exam to 58% and FrontierScience Research to 38% in its heavier Contemplating mode. Meta also says the new pretraining recipe reaches similar capability with over 10x less compute than Llama 4 Maverick, which matters because cheaper training usually means faster iteration and more room to scale.
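As a rough illustration of the "several copies work in parallel, then compare or merge results" idea in the post above, here is a minimal sketch that fans a question out to several agents and keeps the majority answer. This is not Meta's implementation, which the post does not describe: `orchestrate` and `toy_agent` are hypothetical names, the agent is a stub standing in for a model call, and majority voting is only one assumed way to "compare or merge".

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def orchestrate(question: str,
                agent: Callable[[str, int], str],
                n_agents: int = 4) -> str:
    """Run n_agents copies of `agent` in parallel and return the majority answer."""
    with ThreadPoolExecutor(max_workers=n_agents) as pool:
        # Each copy gets the same question plus its own index (e.g. a sampling seed).
        answers = list(pool.map(lambda i: agent(question, i), range(n_agents)))
    # Merge step (assumed): keep the most frequent answer.
    winner, _count = Counter(answers).most_common(1)[0]
    return winner


def toy_agent(question: str, seed: int) -> str:
    # Hypothetical stand-in for a model call: one copy in four answers wrongly.
    return "41" if seed % 4 == 3 else "42"


if __name__ == "__main__":
    print(orchestrate("What is 6 * 7?", toy_agent, n_agents=8))
```

A real system would replace `toy_agent` with a call to a model and would likely merge answers with something richer than a vote, such as a judging pass over the candidates.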

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

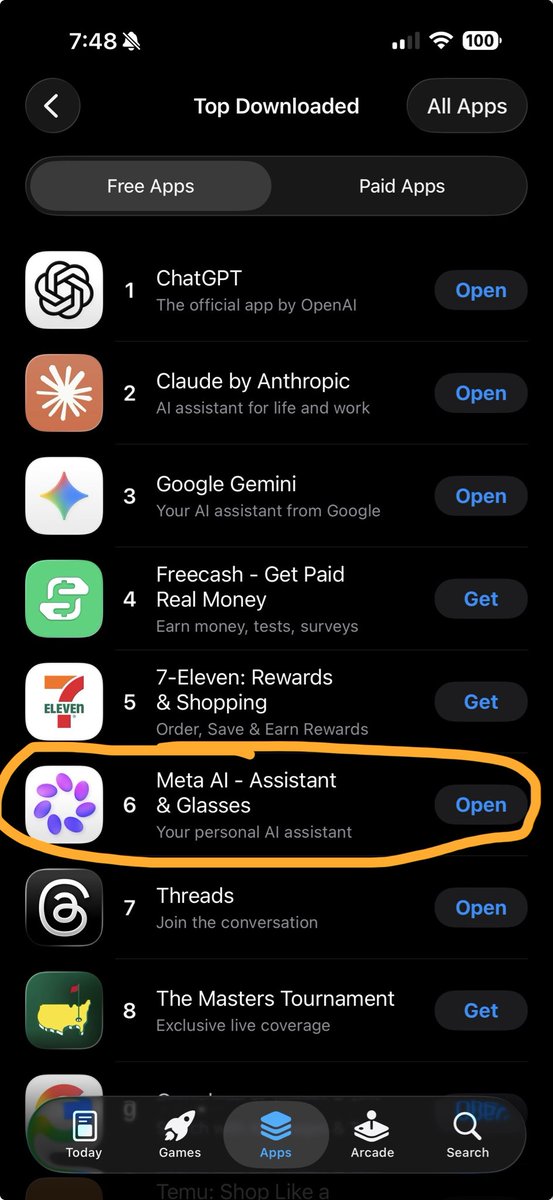

Not usually a Meta AI user, but wanted to give them a shot after the latest model release (it's free anyway). So I installed the app on my desktop, and noticed "contemplating" mode (didn't see that on the mobile app btw). When I asked a question, 16 agents simultaneously started working on the question which looks pretty cool!

Introducing Muse Spark, the first in the Muse family of models developed by Meta Superintelligence Labs. Muse Spark is a natively multimodal reasoning model with support for tool-use, visual chain of thought, and multi-agent orchestration. Muse Spark is available today at meta.ai and the Meta AI app. We’re also making it available in private preview via API to select partners, and we hope to open-source future versions of the model. Learn more: go.meta.me/43ea00

That clip is the part most people will underestimate. Image-to-code was already impressive. What Meta’s Muse Spark seems to be doing is one level higher: it’s not just recreating pixels, it’s inferring product logic. I gave it a calendar screenshot and I am blown

Excited to share what we’ve been building at Meta Superintelligence Labs! We just released Muse Spark, our first AI model. It's a natively multimodal reasoning model and the first step on our path to personal superintelligence. We've overhauled our entire stack to support scaling, and this is just the beginning. ai.meta.com/blog/introduci…

Meta is back! Muse Spark scores 52 on the Artificial Analysis Intelligence Index, behind only Gemini 3.1 Pro, GPT-5.4, and Claude Opus 4.6. Muse Spark is the first new release since Llama 4 in April 2025 and also Meta's first release that is not open weights.
Muse Spark is a new model from @Meta evaluated on Artificial Analysis. We were given early access by Meta to independently benchmark the model. It is the first frontier-class model from Meta since Llama 4 Maverick was released in April 2025, and notably the first @AIatMeta model that is not being released as open weights. The release follows Meta's reorganization of its AI efforts under Meta Superintelligence Labs, and signals that Meta is re-entering the frontier race after roughly a year of relative quiet.
For context, Llama 4 Maverick and Scout scored 18 and 13 respectively on the Artificial Analysis Intelligence Index as non-reasoning models at the time of their release, while Muse Spark scores 52. Muse Spark essentially closes the gap to the frontier in a single release.
The model is not open source and is not yet accessible via an API, but Meta has shared they expect this to come soon. Meta is also integrating Muse Spark into their first party products including their Meta AI chat product, Facebook, Instagram and Threads.
Key takeaways from our benchmarks:
➤ Muse Spark scores 52 on the Artificial Analysis Intelligence Index, placing it within the top 5 models we have benchmarked. It sits ahead of Claude Sonnet 4.6, GLM-5.1, MiniMax-M2.7, Grok 4.20 and behind Gemini 3.1 Pro Preview, GPT-5.4 and Claude Opus 4.6
➤ Muse Spark is notably token efficient for its intelligence level. It used 58M output tokens to run the Intelligence Index, comparable to Gemini 3.1 Pro Preview (57M) and notably lower than Claude Opus 4.6 (Adaptive Reasoning, max effort, 157M), GPT-5.4 (xhigh, 120M) and GLM-5 (110M)
➤ Muse Spark is the second-most capable vision model we have benchmarked. It scores 80.5% on MMMU-Pro, behind only Gemini 3.1 Pro Preview (82.4%)
➤ Muse Spark performs strongly on reasoning and instruction-following evaluations. It scores 39.9% on HLE, trailing only Gemini 3.1 Pro Preview (44.7%) and GPT-5.4 (xhigh, 41.6%). The model also achieved the 5th-highest score on CritPT, an eval focused on difficult physics research questions, with 11%. This is substantially above Gemini 3 Flash (9%) and Claude 4.6 Sonnet (3%)
➤ Agentic performance does not stand out. On GDPval-AA, our evaluation focused on real-world work tasks, Muse Spark scores 1427, behind both Claude Sonnet 4.6 at 1648 and GPT-5.4 at 1676, but ahead of Gemini 3.1 Pro Preview at 1320. On TerminalBench Hard, Muse Spark trails Claude Sonnet 4.6, GPT-5.4, and Gemini 3.1 Pro. Muse Spark joins others in achieving a high τ²-Bench Telecom score of 92%
Key model details:
➤ Modalities: Multimodal including text and vision input, text output
➤ License: Proprietary, Meta's first frontier model not released as open weights
➤ Availability: No public API at the time of publishing. Meta expects to provide API access soon. Meta has started integration into their first party AI offering Meta AI and inside Facebook, Instagram, and Threads