Zhihao Zhang


Excited to share that LessIsMore has been accepted to ICML 2026! 🚀 LessIsMore is a training-free sparse attention method for efficient long-horizon reasoning. By enforcing unified token selection across attention heads, it delivers up to 1.6x end-to-end (E2E) speedup while preserving reasoning accuracy under practical workloads. Huge thanks to my amazing co-authors and mentors @Jackfram2, @JiaZhihao, and Ravi! Paper: arxiv.org/abs/2508.07101 Code: github.com/DerrickYLJ/Les… #ICML2026 #LLM #EfficientAI

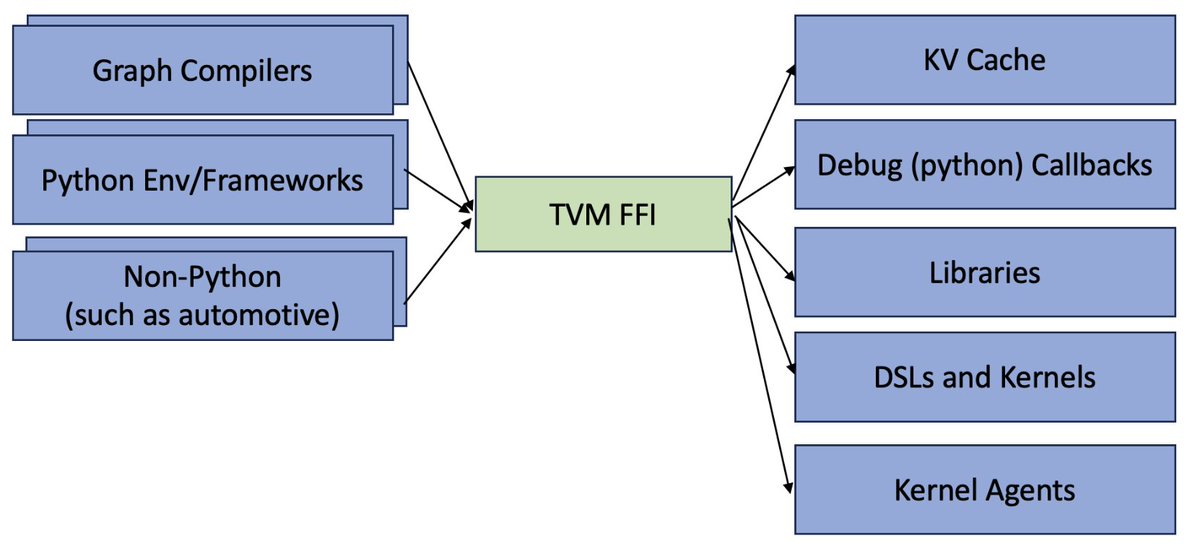

📢 Excited to introduce Apache TVM FFI, an open ABI and FFI for ML systems that lets compilers, libraries, DSLs, and frameworks interoperate naturally with each other. Ship one library that works across PyTorch, JAX, CuPy, etc., and runs from Python, C++, and Rust: tvm.apache.org/2025/10/21/tvm…

Calling industry researchers: MLSys 2026 launches its first Industrial Track! 🚀 We're excited to announce the inaugural Call for Industrial Track Papers at MLSys 2026! 🎉 👉 mlsys.org/Conferences/20… This is a unique opportunity for industry researchers and practitioners to share real-world innovations, system deployments, large-scale ML challenges, and lessons learned from practice with the MLSys community. 📌 Details & submission info: the paper submission deadline is Oct 30, 2025, 20:00 UTC. Full CFP: mlsys.org/Conferences/20… I’m honored to help launch this new track and look forward to seeing contributions that bridge cutting-edge research with impactful practice. #MLSys2026 #CFP #MLSystems #MLforSystems

[1/N] 🚀 Excited to introduce my first work at @Princeton: LessIsMore, a training-free sparse attention method tailored for efficient reasoning in LRMs. It achieves lossless accuracy at sparsity levels up to 87.5%, with a 1.1x average decoding speedup over full attention on reasoning tasks such as AIME-24. (More details in 🧵) 💻 Code: github.com/DerrickYLJ/Les… 📄 arXiv: huggingface.co/papers/2508.07… 🔍 HF Daily Paper: huggingface.co/papers/2508.07…

Excited to share Flow Matching Policy Gradients: expressive RL policies trained from rewards using flow matching. It’s an easy, drop-in replacement for Gaussian PPO on control tasks.

🚀 Excited to present TidalDecode, our work from CMU Catalyst, at #ICLR2025 tomorrow! TidalDecode, with Position Persistent Sparse Attention (PPSA), enables: 🔹 2.1× faster long-context decoding 🔹 High-quality generation (100% accuracy using only 0.1% of tokens on Needle-in-a-Haystack!) 🔹 Strong performance on reasoning tasks 🗓️ Poster Session: Hall 3 + Hall 2B #132 from 15:00 to 17:30 📖 Blog & Paper: sites.google.com/andrew.cmu.edu… 💻 GitHub: github.com/DerrickYLJ/Tid… Come chat about making LLMs faster and smarter!