Jd3d

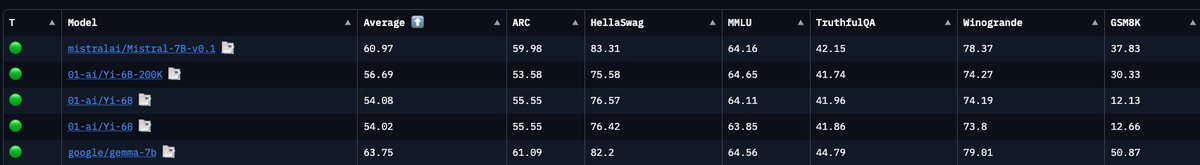

Context Arena Update: Added @MoonshotAI's Kimi K2.5 to the MRCR leaderboards (2-, 4-, 8-needle)!

K2.5 is a major step up from K2 and trades blows with @Google's Gemini 3 Flash (base) - beating it on 4 and 8-needle retrieval despite being half the cost.

moonshotai/kimi-k2.5:thinking results (@ 128k):

2-Needle Performance:

AUC: 81.9% (vs Gemini 3 Flash: 85.5%)

Pointwise: 81.9% (vs K2: 52.1%)

4-Needle Performance:

AUC: 55.6% (vs Gemini 3 Flash: 50.4%)

Pointwise: 56.3%

8-Needle Performance:

AUC: 30.4% (vs Gemini 3 Flash: 29.3%)

Pointwise: 26.9%

Full data: contextarena.ai

@Kimi_Moonshot

@GoogleDeepMind

@ben_burtenshaw @huggingface This is great! Can you add additional benchmarks, like MRCR v2, SWE-Bench Pro, ARC-AGI 2, OSWorld, GDPval-AA, Terminal-Bench Hard, SciCode, AA-Omniscience, and CritPt?

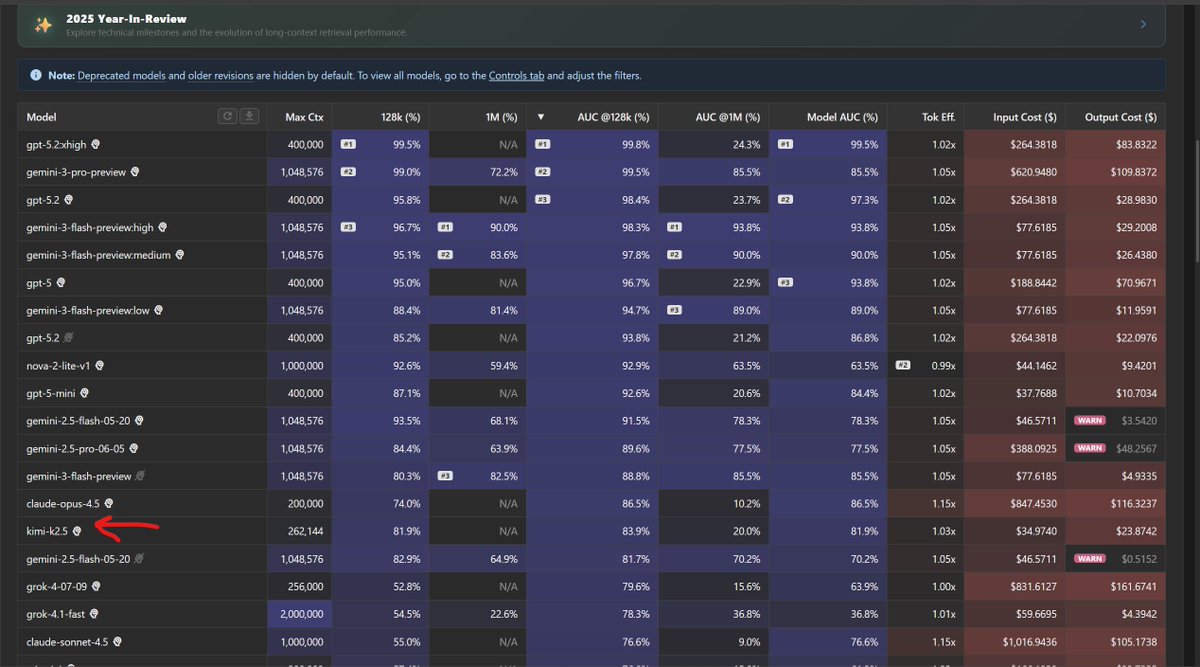

Eval scores in 2026 are broken. MMLU at 91%+, GSM8K at 94%+, yet models still can't handle basic multi-step tasks. And reported scores don't even agree across model cards, papers, and platforms.

We just shipped Community Evals on @huggingface:

- Benchmark datasets now host live leaderboards (MMLU-Pro, GPQA, HLE)

- Scores live in model repos as versioned YAML

- Anyone can submit evals to any model via PR without merging.

- Verified badges for reproducible runs via Inspect AI

This won't fix saturation or stop test set contamination. But it makes the game visible. What was evaluated, how, when, and by whom.

Done trusting black-box leaderboards. Time to decentralize evals.

AidanBench Scores 🥳

——

>some of these results might raise some eyebrows and we implore you to take a look at the data on our website:

aidanbench.com

>AidanBench is the brainchild of me, James, and Aidan & VERY soon we’ll be dropping officially on arXiv, stay tuned!

@AJamesMcCarthy @BRANSCOMBE_ Primer is so good. To this day it blows my mind that it was made on a $7,000 budget.

@BRANSCOMBE_ That one would require having a working brain 😭

i think it's worth taking a moment to put into perspective how cool this work is. GPT2 is really what the entire OpenAI empire was built on / was deemed too dangerous to release a few short years ago and it is now reproducible in less than 8 min on a single (large) machine

Keller Jordan@kellerjordan0

New NanoGPT training speed record: 3.28 FineWeb val loss in 7.23 minutes on 8xH100

Previous record: 7.8 minutes

Changelog:

- Added U-net-like connectivity pattern

- Doubled learning rate

This record is by @brendanh0gan

@ml_perception Can you clarify why the 8B version has a March, 2023 knowledge cutoff instead of December 2023?

Yes, both the 8B and 70B are trained way more than is Chinchilla optimal - but we can eat the training cost to save you inference cost! One of the most interesting things to me was how quickly the 8B was improving even at 15T tokens.

Felix@felix_red_panda

Llama3 8B is trained on almost 100 times the Chinchilla optimal number of tokens
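The "almost 100 times" figure can be checked with quick arithmetic. This is a rough sketch, not the authors' calculation: it assumes the commonly cited rule of thumb of about 20 training tokens per parameter for Chinchilla-optimal training, and takes the 8B parameter count and 15T token count from the posts above. The function names are made up for illustration.

```python
# Assumption: ~20 training tokens per parameter is a common rule of thumb
# for Chinchilla-optimal training. It is an approximation, not an exact law.
TOKENS_PER_PARAM = 20


def chinchilla_optimal_tokens(n_params: float) -> float:
    """Approximate compute-optimal token count for a model with n_params parameters."""
    return n_params * TOKENS_PER_PARAM


def overtraining_ratio(n_params: float, tokens_trained: float) -> float:
    """How many times more tokens were used than the rule-of-thumb optimum."""
    return tokens_trained / chinchilla_optimal_tokens(n_params)


# Figures from the posts: an 8B-parameter model trained on 15T tokens.
ratio = overtraining_ratio(8e9, 15e12)
print(f"Optimal tokens (rule of thumb): {chinchilla_optimal_tokens(8e9):.2e}")
print(f"Overtraining ratio: about {ratio:.0f}x")
```

Under that assumption the optimum is about 160B tokens, so 15T tokens is roughly 94 times the optimum, which is consistent with "almost 100 times".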

@mixedrealityTV Yes so good! You should read the books too, they are one of my favorite trilogies.

@mixedrealityTV Quest 2/3 have Auto Wake. You can turn it on or off in the settings.

@casper_hansen_ Keep in mind Gemma is a significantly larger model than Mistral 7B. Much closer to 8B. That makes a big difference.

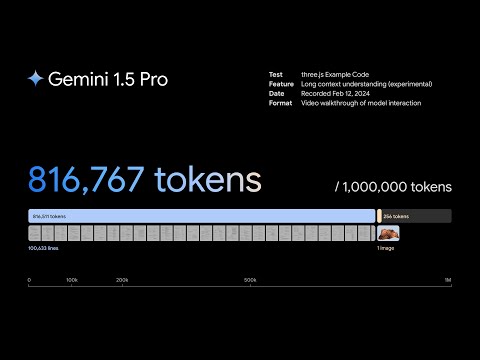

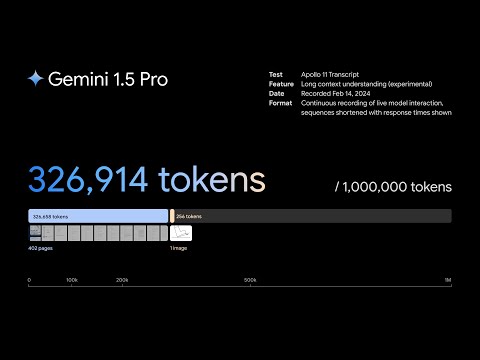

Gemini 1.5 Pro - A highly capable multimodal model with a 10M token context length

Today we are releasing the first demonstrations of the capabilities of the Gemini 1.5 series, with the Gemini 1.5 Pro model. One of the key differentiators of this model is its incredibly long context capabilities, supporting millions of tokens of multimodal input. The multimodal capabilities of the model mean you can interact in sophisticated ways with entire books, very long document collections, codebases of hundreds of thousands of lines across hundreds of files, full movies, entire podcast series, and more.

Gemini 1.5 was built by an amazing team of people from @GoogleDeepMind, @GoogleResearch, and elsewhere at @Google. @OriolVinyals (my co-technical lead for the project) and I are incredibly proud of the whole team, and we’re so excited to be sharing this work and what long context and in-context learning can mean for you today!

There’s lots of material about this, some of which is linked below.

Main blog post:

blog.google/technology/ai/…

Technical report:

“Gemini 1.5: Unlocking multimodal understanding across millions of tokens of context”

goo.gle/GeminiV1-5

Videos of interactions with the model that highlight its long context abilities:

Understanding the three.js codebase: youtube.com/watch?v=SSnsmq…

Analyzing a 45 minute Buster Keaton movie: youtube.com/watch?v=wa0MT8…

Apollo 11 transcript interaction: youtube.com/watch?v=LHKL_2…

Starting today, we’re offering a limited preview of 1.5 Pro to developers and enterprise customers via AI Studio and Vertex AI. Read more about this on these blogs:

Google for Developers blog:

developers.googleblog.com/2024/02/gemini…

Google Cloud blog:

cloud.google.com/blog/products/…

We’ll also introduce 1.5 Pro with a standard 128,000 token context window when the model is ready for a wider release. Coming soon, we plan to introduce pricing tiers that start at the standard 128,000 context window and scale up to 1 million tokens, as we improve the model.

Early testers can try the 1 million token context window at no cost during the testing period. We’re excited to see what developers’ creativity unlocks with a very long context window.

Let me walk you through the capabilities of the model and what I’m excited about!

@ylecun

Back in December I told the LocalLlama community on reddit that Llama3 was coming Feb 2024. My post was seen by 15k people and I don't want to let them down so could you see it in your heart to release maybe just the smallest Llama3 model before March? Thanks in advance!

🌱 Contrary to what people think: plants unfortunately do close to nothing to improve indoor air, they're nice to look at though

You'd need to fill your entire home full of plants so you can't even walk there anymore to have the SAME effect as just opening a window

Source: lots of studies like this one nature.com/articles/s4137…

Pascal Pixel@PascalPixel

we bought a co2 monitor 🌿🌳🌱🍃

Jd3d retweeted

This really feels like singularity

A new ultra-high-speed processor for AI, driverless vehicles, and more operates at a record 17 Terabits/s, more than 10,000 times faster than typical electronic processors, which operate in the Gigabyte/s range. The system processes 400,000 video signals concurrently, performing 34 functions simultaneously that are key to object edge detection, edge enhancement, and motion blur.

Photonic signal processor based on a Kerr microcomb for real-time video image processing

Dear journalists, it makes absolutely no sense to write:

"PaLM 2 is trained on about 340 billion parameters. By comparison, GPT-4 is rumored to be trained on a massive dataset of 1.8 trillion parameters."

It would make more sense to write:

"PaLM 2 possesses about 340 billion parameters and is trained on a dataset of 2 billion tokens (or words). By comparison, GPT-4 is rumored to possess a massive 1.8 trillion parameters trained on untold trillions of tokens."

Parameters are coefficients inside the model that are adjusted by the training procedure. The dataset is what you train the model on. Language models are trained with tokens that are subword units (e.g. prefix, root, suffix).

Saying "trained on a dataset of X billion parameters" reveals that you have absolutely no understanding of what you're talking about.
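To make the distinction concrete, here is a minimal sketch that counts the two quantities separately. Everything in it is hypothetical: the layer shapes describe a made-up toy model, the corpus is two invented sentences, and whitespace splitting stands in for a real subword tokenizer.

```python
# Hypothetical toy model: each entry is the (rows, cols) shape of one weight matrix.
layer_shapes = {
    "embedding": (1000, 64),
    "hidden": (64, 64),
    "output": (64, 1000),
}


def count_parameters(shapes: dict) -> int:
    """Parameters are the coefficients inside the model: one per weight-matrix entry."""
    return sum(rows * cols for rows, cols in shapes.values())


def count_tokens(corpus: list) -> int:
    """Tokens measure the dataset. Whitespace splitting is a crude stand-in
    for the subword tokenizers that real language models use."""
    return sum(len(doc.split()) for doc in corpus)


# Invented two-document corpus, for illustration only.
corpus = [
    "the model is trained on tokens",
    "parameters are adjusted by training",
]

n_params = count_parameters(layer_shapes)
n_tokens = count_tokens(corpus)
print(f"Model size: {n_params} parameters")
print(f"Dataset size: {n_tokens} tokens")
```

The two numbers describe different things: the first is a property of the model, the second a property of the data it is trained on.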
