Jianfei Yang

@Jianfei_AI

Assistant Professor @NTUsg | Prev. Researcher @Harvard @UCBerkeley @UTokyo_News | U30 @Forbes

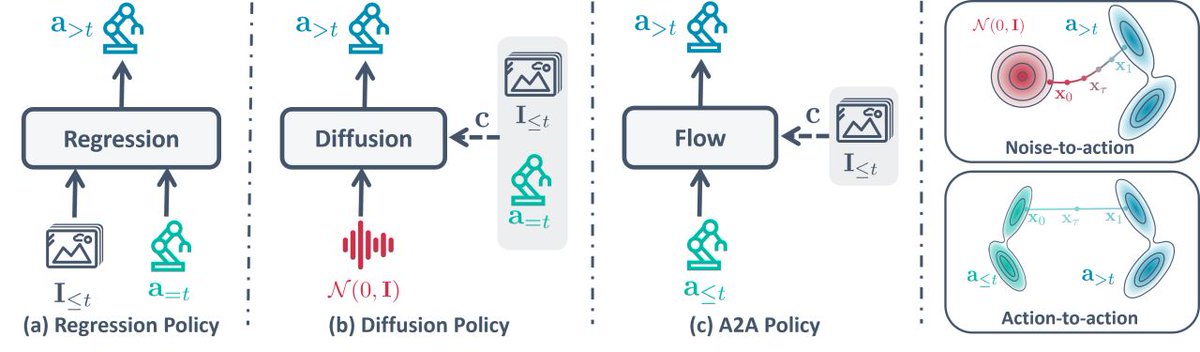

Diffusion/flow policies 🤖 sample a “trajectory of trajectories” — a diffusion/flow trajectory of action trajectories. Seems wasteful? Presenting Streaming Flow Policy that simplifies and speeds up diffusion/flow policies by treating action trajectories as flow trajectories! 🌐 streaming-flow-policy.github.io 🧵 1/15

📢 CVPR 2026 Workshop Call for Papers: ScaleBot @CVPR ! 🤖
Join the FIRST Workshop on Scalable Robot Learning Systems at #CVPR2026 in Denver, June 3/4! We’re bringing together researchers/engineers from CV, NLP, robotics & beyond to build scalable learning systems for general-purpose robots. Let’s unlock real-world robot generalization! 🚀
🔗 Website url: scalebot-workshop.github.io
🌟 Keynote Speakers (Tentative):
• Joel Jang (Nvidia GEAR) @jang_yoel
• Sergey Levine (UC Berkeley & Physical Intelligence) @svlevine
• Jason Ma (Dyna Robotics) @JasonMa2020
• Chuan Wen (Shanghai Jiao Tong University) @ChuanWen15
📌 Topics We Love (not limited to!):
• Robot data acquisition/strategies;
• Data pyramids;
• VLMs/VLAs;
• World models;
• Dual-system architectures;
• Fair evaluations & more.
⏰ Two Submission Tracks - Don’t Miss Out!
✅ Track 1 (Proceedings): Original research | DDL: March 1, 2026 (AoE)
✅ Track 2 (Non-Proceedings): WIPs, datasets, tech reports, recent work | DDL: April 14, 2026 (AoE)
📝 Submit via OpenReview, see scalebot-workshop.github.io for more detailed guidelines!
📧 Questions? Reach us at: scalebot@googlegroups.com
Retweet to tag robotics peers – let’s accelerate real-world general-purpose robots! 🤖✨
#Robotics #AI #MachineLearning #CVPR #ScaleBot2026 #ScalableAI

TL;DR: The Chinese are already building programmable robots at scale, and are looking for distributors to help them get the robots ready for specific tasks. They sacrifice some of the upside and share some of the risk. They wish to sell the robot to distributors, today. The Americans are trying to leapfrog the era of programmable robots by going straight for general intelligence robots that can do any job well without any integration. They want to do all hardware and software in-house, and handle distribution themselves. They wish to rent the robot directly to the end customer, tomorrow.