Jingyu Liu

30 posts

@Jingyu227

CS PhD @Uchicago. RS Intern @Nvidia, AI Resident @AIatMeta, MLE @ByteDanceTalk.

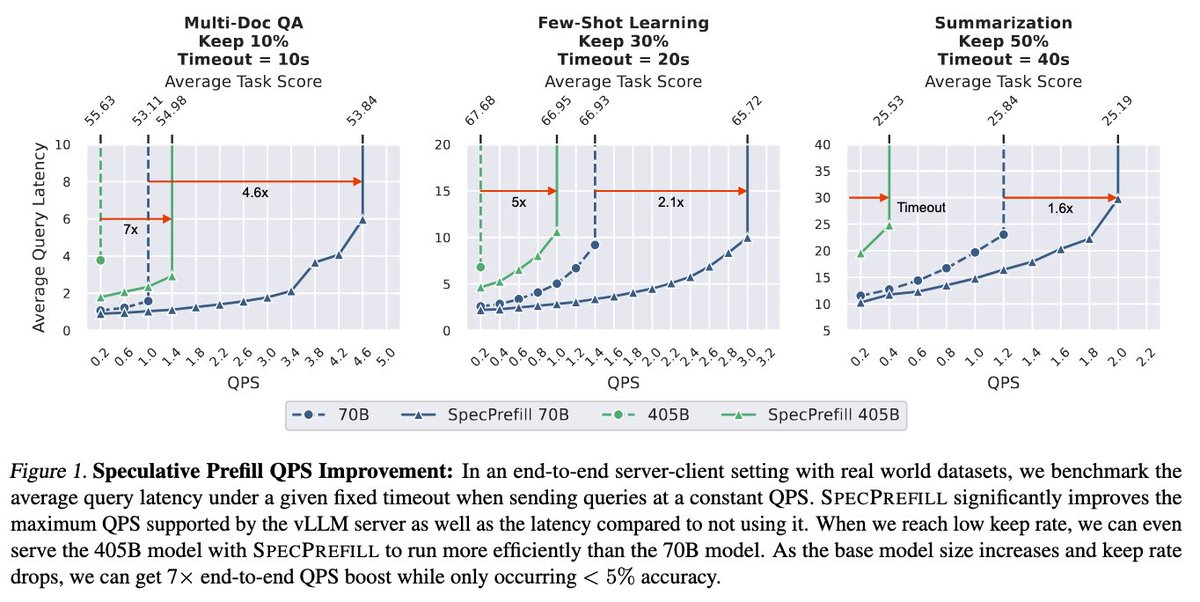

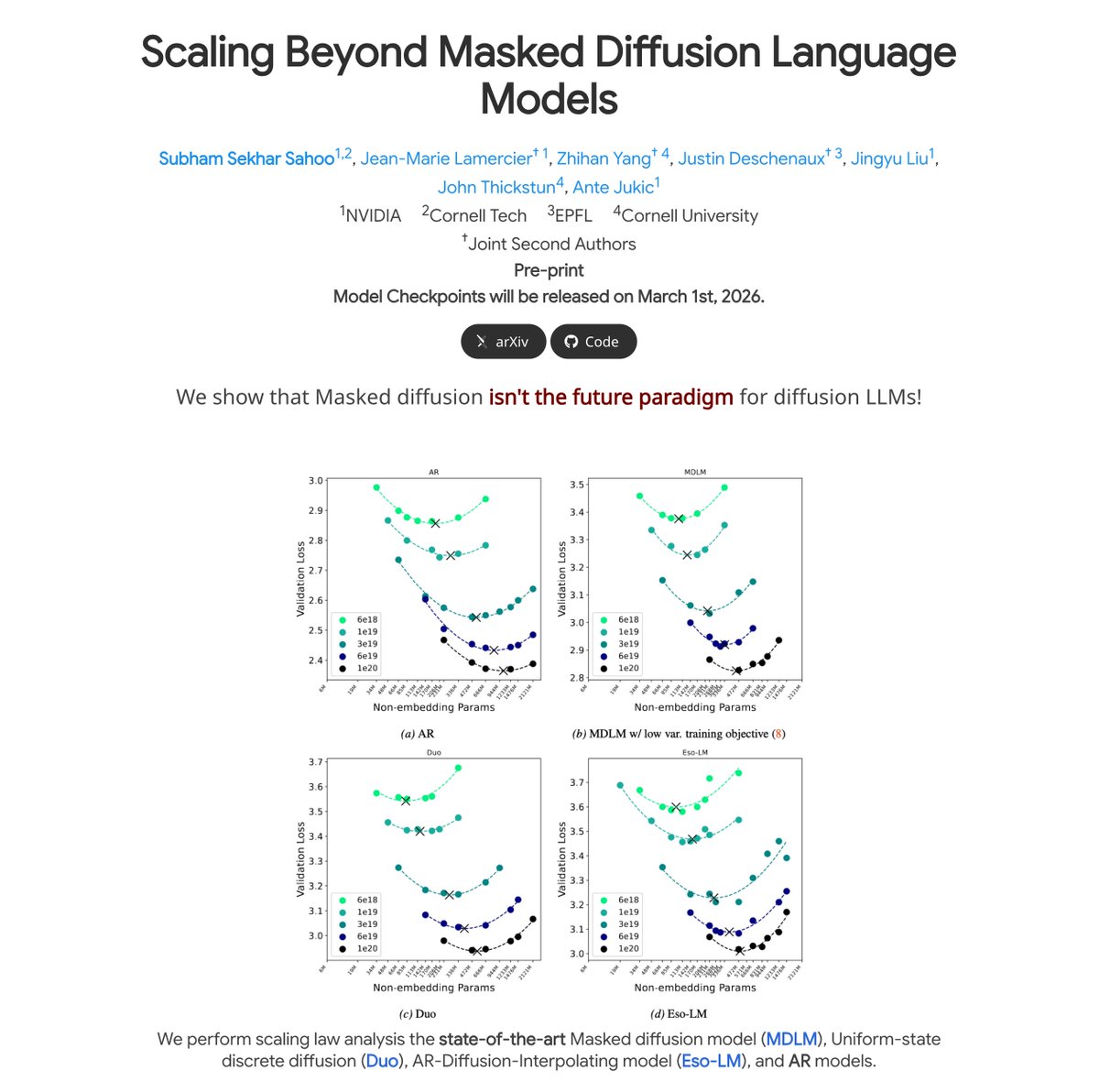

We're unveiling TiDAR, a new paradigm that's about to shake up how LLMs run. The wild part? You can get a 5x-8x decoding speedup by hitting the GPU's peak compute-to-memory ratio even at batch size 1 (bsz = 1). You pay for every bit of compute on your GPU, so don't waste any of it. TiDAR is a Diffusion + Autoregressive hybrid at the sequence level, unlocking a huge leap in self-speculative decoding and multi-token prediction. The demo is live: go take a look 👇
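Why does batch size 1 normally waste compute, and how does multi-token prediction help? The sketch below is a generic illustration under stated assumptions, not TiDAR's implementation. `matmul_arithmetic_intensity` estimates FLOPs per byte for one linear layer, counting only weight traffic (assumed dominant at small batch); `greedy_accept` shows standard greedy verification from speculative decoding, where one forward pass checks several drafted tokens at once. Both function names are hypothetical.

```python
def matmul_arithmetic_intensity(tokens: int, d_in: int, d_out: int,
                                bytes_per_param: int = 2) -> float:
    """FLOPs per byte for pushing `tokens` rows through a d_in x d_out weight matrix.

    Assumption: only weight reads are counted; activation traffic is ignored,
    which is a reasonable approximation when `tokens` is small.
    """
    flops = 2 * tokens * d_in * d_out             # one multiply + one add per weight per token
    weight_bytes = bytes_per_param * d_in * d_out  # every weight is read once per forward pass
    return flops / weight_bytes


def greedy_accept(draft: list[int], target_argmax: list[int]) -> list[int]:
    """Greedy verification step of speculative decoding (generic, hypothetical helper).

    draft:         k proposed tokens.
    target_argmax: the verifier's greedy token at each of the k + 1 positions;
                   entry i is the token it predicts after prefix + draft[:i].
    Returns the longest matching draft prefix plus one token from the verifier.
    """
    assert len(target_argmax) == len(draft) + 1
    accepted = []
    for proposed, verified in zip(draft, target_argmax):
        if proposed != verified:
            accepted.append(verified)  # first mismatch: keep the verifier's token and stop
            return accepted
        accepted.append(proposed)
    accepted.append(target_argmax[-1])  # every draft matched: take the bonus token too
    return accepted


if __name__ == "__main__":
    # One token per pass at fp16 gives ~1 FLOP/byte; 8 tokens per pass gives ~8.
    print(matmul_arithmetic_intensity(1, 4096, 4096))
    print(matmul_arithmetic_intensity(8, 4096, 4096))
    print(greedy_accept([5, 7, 9], [5, 7, 2, 4]))  # two drafts accepted, then the verifier's 2
```

With fp16 weights the estimate reduces to `tokens` FLOPs per byte, while recent data-center GPUs need well over 100 FLOPs per byte to become compute-bound. Scoring more candidate tokens per forward pass therefore adds FLOPs that are nearly free, because the weights were going to be loaded from memory anyway.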

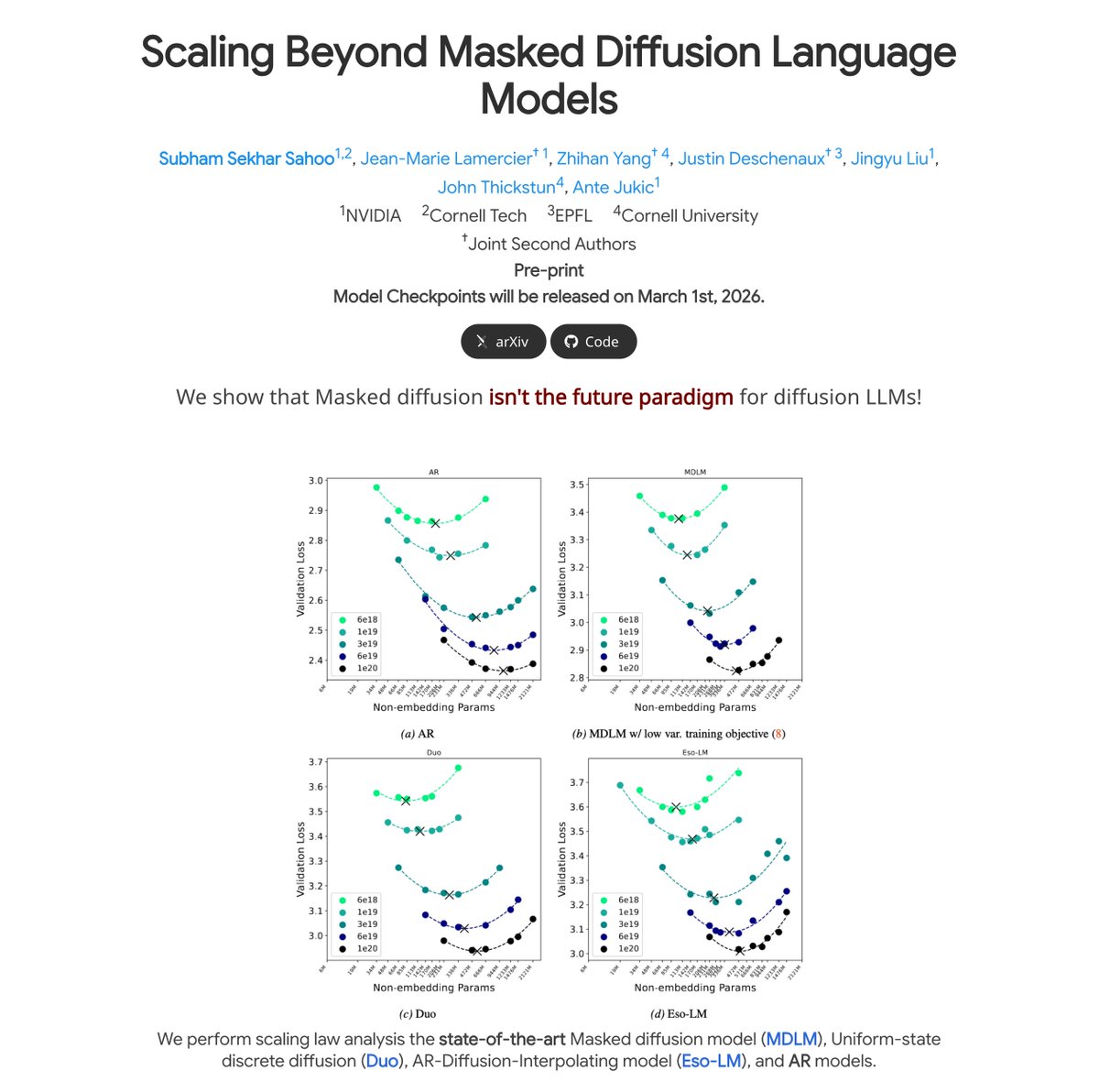

🧵 Glad to introduce LiteSys, the inference framework we used in 📄 Kinetics: Rethinking Test-Time Scaling Laws (arxiv.org/abs/2506.05333) to evaluate test-time scaling (32K+ generated tokens) at scale. If you are: ✅ looking for an inference framework that's easy to extend, 🐢 frustrated by how slow Hugging Face Transformers is, or 🎯 struggling to align performance on evaluation benchmarks, then LiteSys is built for you. 🔗 GitHub: github.com/Infini-AI-Lab/…