Jobless

@Jobless0x

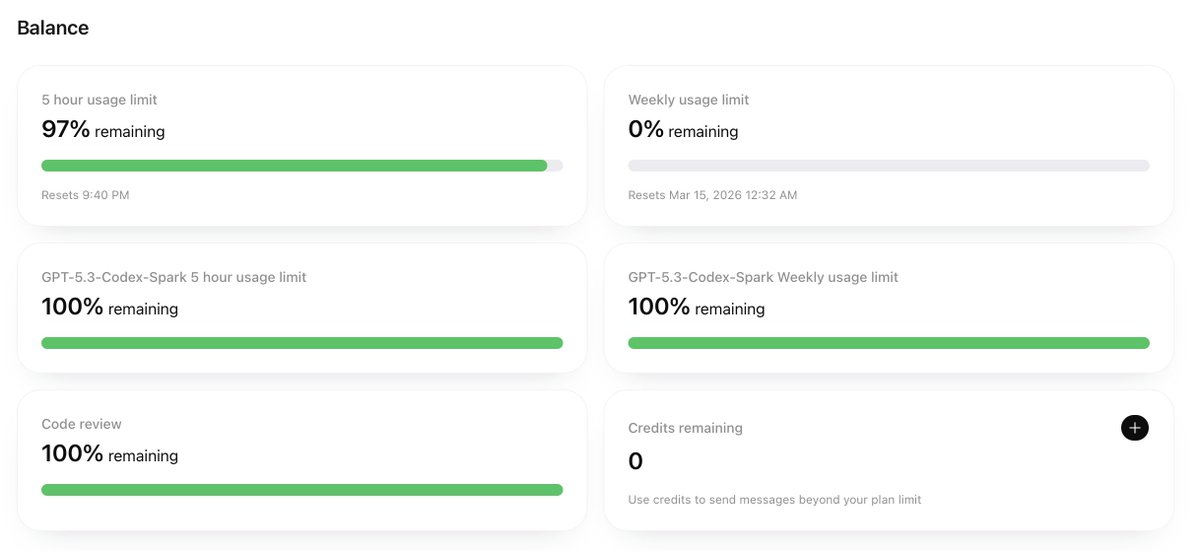

The last few days have been wild. Here's what we shipped over the weekend.

But first, we're giving away free Nous Portal subscriptions to the first 250 people who claim code AGENTHERMES01 at portal.nousresearch.com. There's a lot of exciting new stuff to use it on:

-> Pokemon Player 🎮
Hermes can now play Pokemon Red/FireRed autonomously via headless emulation. The new pokemon-agent package (github.com/NousResearch/p…) and its built-in skill provide a REST API game server, and Hermes drives it through its native tools: reading game state from RAM, making strategic battle decisions, navigating the overworld, and saving progress to memory across sessions. It just plays Pokemon, from your terminal, with no display server needed.

-> Self-Evolution 🧬
We shipped hermes-agent-self-evolution (github.com/NousResearch/h…) and an optional skill: an evolutionary self-improvement system that uses DSPy + GEPA to optimize Hermes's own skills, prompts, and code. It maintains populations of candidate solutions, applies LLM-driven mutations targeted at specific failure cases, and selects survivors by fitness. It is inspired by Imbue's Darwinian Evolver research, which achieved 95.1% on ARC-AGI-2.

-> OBLITERATUS 🔓
The abliteration skill got a major update. Hermes can now uncensor any open-weight LLM (Llama, Qwen, Mistral, etc.) by surgically removing refusal directions from the model weights, with 9 CLI methods, 116 model presets, and tournament evaluation. Just say "abliterate this model" and it handles the rest.

-> Signal, iMessage + 7-Platform Gateway 📱
Hermes now runs on iMessage and Signal alongside Telegram, Discord, WhatsApp, Slack, and the CLI, with full feature parity: voice messages, image handling, and DM pairing. Your agent is reachable on every one of these platforms.

-> Automatic Provider Failover 🔄
When your primary model goes down (rate limits, outages), Hermes now automatically switches to a configured fallback model. It supports all providers, including Codex OAuth and Nous Portal. One line of config, zero downtime.

-> Secret Redaction Everywhere 🔒
All tool outputs now redact API keys, tokens, and passwords before they reach the LLM. 22+ patterns cover AWS, Stripe, HuggingFace, GitHub, SSH private keys, database connection strings, and more. Your secrets never leak into context.
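The evolutionary loop described under Self-Evolution (a population of candidates, mutations, selection by fitness) can be illustrated with a minimal sketch. This is not DSPy, GEPA, or the hermes-agent-self-evolution code: `evolve`, `toy_fitness`, and `toy_mutate` are hypothetical names, and the random single-character mutation stands in for the LLM-driven, failure-targeted mutations the post describes.

```python
import random
from typing import Callable, List


def evolve(
    seed_population: List[str],
    fitness: Callable[[str], float],
    mutate: Callable[[str, random.Random], str],
    generations: int = 50,
    survivors: int = 4,
    children_per_parent: int = 3,
    seed: int = 0,
) -> str:
    """Toy (mu + lambda) loop: select the fittest, mutate them, repeat."""
    rng = random.Random(seed)
    population = list(seed_population)
    for _ in range(generations):
        # Selection: keep the `survivors` highest-fitness candidates.
        parents = sorted(population, key=fitness, reverse=True)[:survivors]
        # Variation: each parent yields mutated children. The post describes this
        # step as LLM-driven and aimed at failure cases; here it is a plain callback.
        children = [mutate(p, rng) for p in parents for _ in range(children_per_parent)]
        # Elitism: parents carry over, so the best fitness never decreases.
        population = parents + children
    return max(population, key=fitness)


# Hypothetical toy problem: evolve a 6-letter string toward TARGET.
TARGET = "hermes"
ALPHABET = "abcdefghijklmnopqrstuvwxyz"


def toy_fitness(candidate: str) -> float:
    # Number of positions that already match TARGET.
    return float(sum(a == b for a, b in zip(candidate, TARGET)))


def toy_mutate(candidate: str, rng: random.Random) -> str:
    # Replace one randomly chosen character with a random letter.
    i = rng.randrange(len(candidate))
    return candidate[:i] + rng.choice(ALPHABET) + candidate[i + 1:]


if __name__ == "__main__":
    best = evolve(["aaaaaa"], toy_fitness, toy_mutate, generations=300)
    print(best, toy_fitness(best))
```

Running the module prints the best string found and its score. In a real system the fitness function would be an evaluation over tasks, which is where almost all of the cost lives.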
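"Removing refusal directions from model weights" is commonly done by directional ablation: estimate a unit direction `r_hat` in the residual stream (often the difference between mean activations on refused and non-refused prompts), then project it out of each matrix that writes into the residual stream, `W' = W - r_hat (r_hat^T W)`. The sketch below shows only that linear-algebra step on toy matrices in plain Python. It assumes this standard formulation and is not how OBLITERATUS's 9 methods are actually implemented; all function names are hypothetical.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # rows index the residual-stream (d_model) dimension


def mean(vectors: List[Vector]) -> Vector:
    # Element-wise mean of equally sized vectors.
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def refusal_direction(harmful_acts: List[Vector], harmless_acts: List[Vector]) -> Vector:
    """Difference of mean activations, normalized to unit length."""
    diff = [a - b for a, b in zip(mean(harmful_acts), mean(harmless_acts))]
    norm = math.sqrt(sum(x * x for x in diff))
    return [x / norm for x in diff]


def orthogonalize(W: Matrix, r_hat: Vector) -> Matrix:
    """Return W - r_hat (r_hat^T W): outputs W' x have no component along r_hat."""
    d_in = len(W[0])
    # c[j] = r_hat . (column j of W): how strongly input j writes along r_hat.
    c = [sum(r_hat[i] * W[i][j] for i in range(len(W))) for j in range(d_in)]
    return [[W[i][j] - r_hat[i] * c[j] for j in range(d_in)] for i in range(len(W))]


def matvec(W: Matrix, x: Vector) -> Vector:
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]
```

Because the projection is applied to the weights once, the edited model needs no runtime hooks: every forward pass through `W'` already lacks the `r_hat` component.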
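The Automatic Provider Failover item amounts to trying providers in priority order and moving on when a call fails. A minimal sketch, assuming each provider is a plain callable that raises a hypothetical `ProviderError` on rate limits or outages; Hermes's actual config key and provider interface are not shown in the post, and `complete_with_failover` is an illustrative name.

```python
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Hypothetical error a provider call raises on rate limits or outages."""


Provider = Tuple[str, Callable[[str], str]]


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, completion)."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and fall through to the next configured provider.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


if __name__ == "__main__":
    def primary(prompt: str) -> str:
        raise ProviderError("429 rate limited")

    def fallback(prompt: str) -> str:
        return f"fallback answer to {prompt!r}"

    print(complete_with_failover("hello", [("primary", primary), ("fallback", fallback)]))
```

Because only `ProviderError` is caught, a programming error inside a provider still surfaces immediately instead of silently triggering the fallback.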
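Pattern-based redaction like the one described under Secret Redaction Everywhere can be sketched as a list of regular expressions applied to every tool output before it enters the context. The six patterns below are illustrative assumptions covering some of the categories the post names, not Hermes's actual 22+ patterns; `redact` is a hypothetical name.

```python
import re
from typing import List, Tuple

# Illustrative (pattern, replacement) pairs; assumptions, not Hermes's real list.
_REDACTIONS: List[Tuple[re.Pattern, str]] = [
    # AWS access key IDs.
    (re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"), "[REDACTED:aws_key]"),
    # GitHub tokens (ghp_, gho_, ghu_, ghs_, ghr_ prefixes).
    (re.compile(r"\bgh[pousr]_[A-Za-z0-9]{36,}\b"), "[REDACTED:github_token]"),
    # Stripe secret / restricted keys.
    (re.compile(r"\b[sr]k_(?:live|test)_[A-Za-z0-9]{16,}\b"), "[REDACTED:stripe_key]"),
    # HuggingFace access tokens.
    (re.compile(r"\bhf_[A-Za-z0-9]{30,}\b"), "[REDACTED:hf_token]"),
    # PEM / OpenSSH private key blocks, possibly spanning many lines.
    (re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----.*?-----END [A-Z ]*PRIVATE KEY-----", re.DOTALL),
     "[REDACTED:private_key]"),
    # Password inside a connection string: scheme://user:PASSWORD@host
    (re.compile(r"\b([a-z][a-z0-9+.-]*://[^:/\s@]+:)[^@\s/]+@"), r"\1[REDACTED:password]@"),
]


def redact(text: str) -> str:
    """Replace every match of every pattern before the text reaches the LLM."""
    for pattern, replacement in _REDACTIONS:
        text = pattern.sub(replacement, text)
    return text
```

The connection-string rule keeps the scheme, user, and host and replaces only the password, so the redacted output stays useful for debugging.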