Joseph Jerome

@joejerome

Public Policy @DuckDuckGo. Used to spend too much time thinking about my privacy, and then gave most of it away on whatever this platform is.

An incredible run for such a small studio. 4 huge RPGs (2 of them remasters) and assistance on most of the biggest Switch games. I’d love for gaming journalism to do an in-depth piece on how they accomplish it.

🚨 Holy shit… Stanford just exposed that every major AI company is using your private conversations to train their models by default.

They analyzed the privacy policies of OpenAI, Google, Meta, Anthropic, Microsoft, and Amazon. Reviewed 28 separate documents across all 6 companies. The findings are worrisome.

Every prompt you type. Every file you upload. Every personal detail you share. All of it feeds directly into model training the moment you hit send.

That health question you asked ChatGPT at 2am? Training data. Legal situation you described to Claude? Training data. The photo you uploaded to Gemini? Training data.

Some companies retain your conversations INDEFINITELY. Amazon, Meta, and OpenAI have no confirmed deletion timeline for certain chat data. Your most private conversations could sit on their servers forever.

It gets worse for kids. Four out of six companies allow children aged 13-18 to use their chatbots, and most don’t treat children’s data any differently. Kids’ conversations are likely getting fed into model training by default. Kids who can’t legally consent to it.

Here’s something most people missed: enterprise customers are opted OUT of training by default. You, the consumer paying $20/month? Opted IN. Companies paying thousands? Protected automatically. There’s a two-tiered privacy system and you’re on the wrong side of it.

OpenAI even frames the opt-in with guilt. Their settings page says “Improve the model for everyone.” Stanford’s researchers flagged this as a textbook dark pattern designed to make you feel bad for protecting your own data.

Meta’s contractors told reporters they routinely see identifiable personal information in the chat data they review. Journalists were able to positively identify at least one real person from chat transcripts shared with them.

The privacy policies themselves? Stanford had to dig through 6 separate documents just for OpenAI alone. Most real disclosures were buried in sub-policies no normal person would ever find. The researchers said it was challenging for THEM to piece it together. For consumers? “Practically impossible.”

Only Microsoft explicitly stated they try to remove personal data like names, phone numbers, and addresses before training. The rest are either vague about it or completely silent.

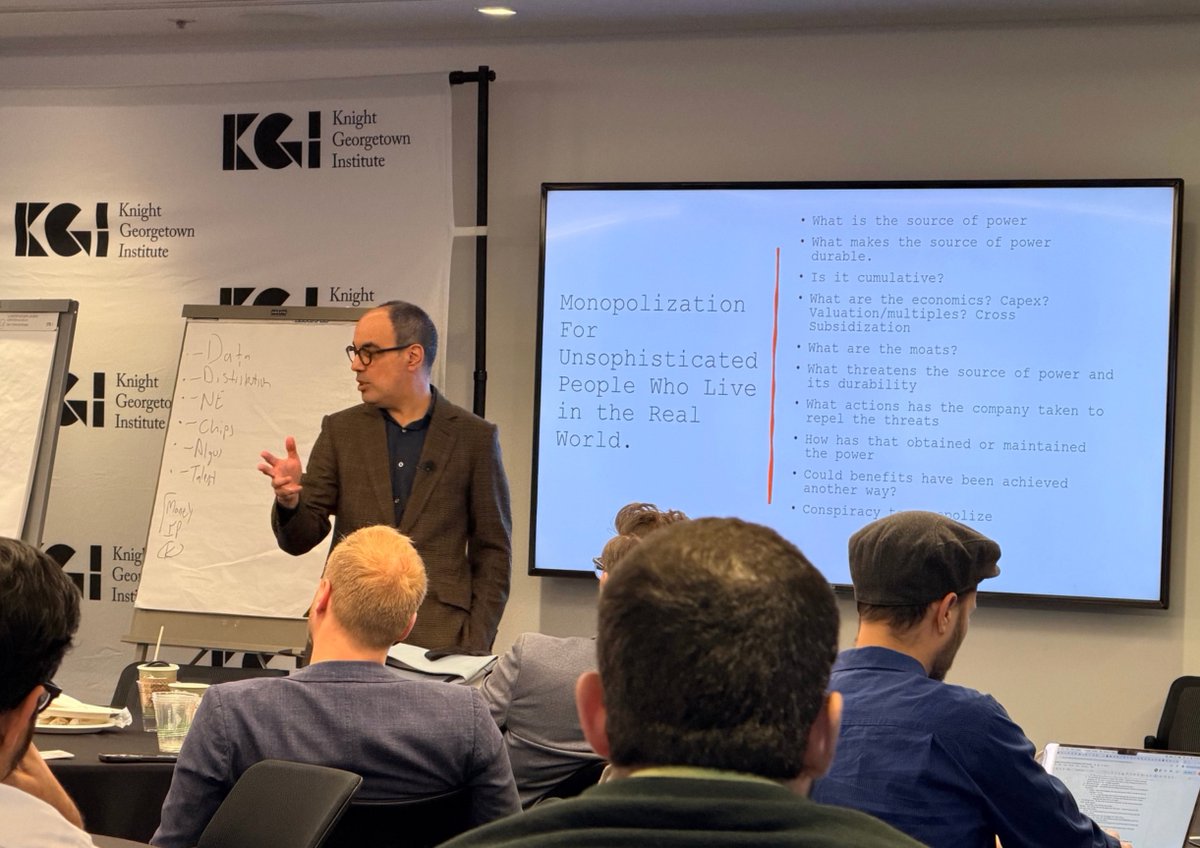

If you ask narrow questions, you get narrow answers. Starting with defining a market isn't living in the real world. Look at how competition works and harms.

NOTEWORTHY: Pennsylvania Supreme Court rules that there are no 4th Amendment rights in your Google search terms. When you search on Google, you tell them your search terms; the government can get those queries without a warrant. The third-party doctrine applies.

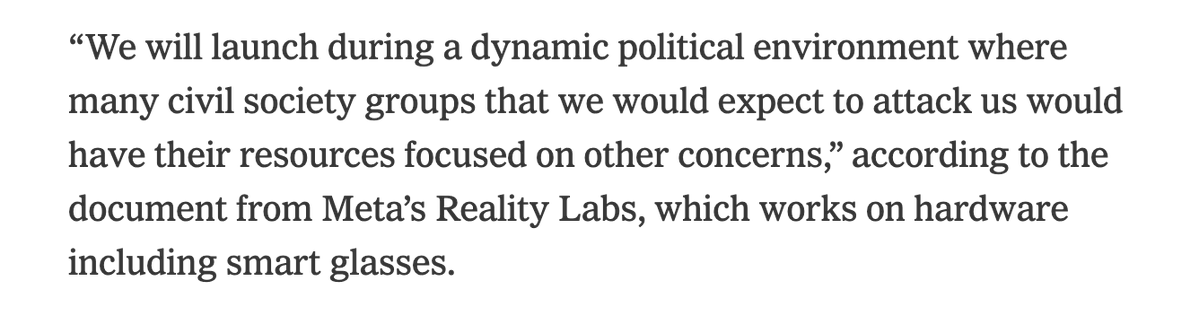

Stripping states of jurisdiction to regulate AI is a subsidy to Big Tech and will prevent states from protecting against online censorship of political speech, predatory applications that target children, violations of intellectual property rights and data center intrusions on power/water resources. The rise of AI is the most significant economic and cultural shift occurring at the moment; denying the people the ability to channel these technologies in a productive way via self-government constitutes federal government overreach and lets technology companies run wild. Not acceptable.

News: House Republican leaders are searching for a legislative vehicle they could attach language to that would effectively ban state regulation of artificial intelligence. More from @JakeSherman, @BenBrodyDC and @Dareasmunhoz: punchbowl.news/article/tech/h…