JoshXT

6.6K posts

@JoshXT

Founder, CTO & Software Engineer @ https://t.co/psDIioAvYS, https://t.co/q7ENklCk4W, https://t.co/AAgBOldMaL, https://t.co/142r1MAKUa . Forever curious & building open source.

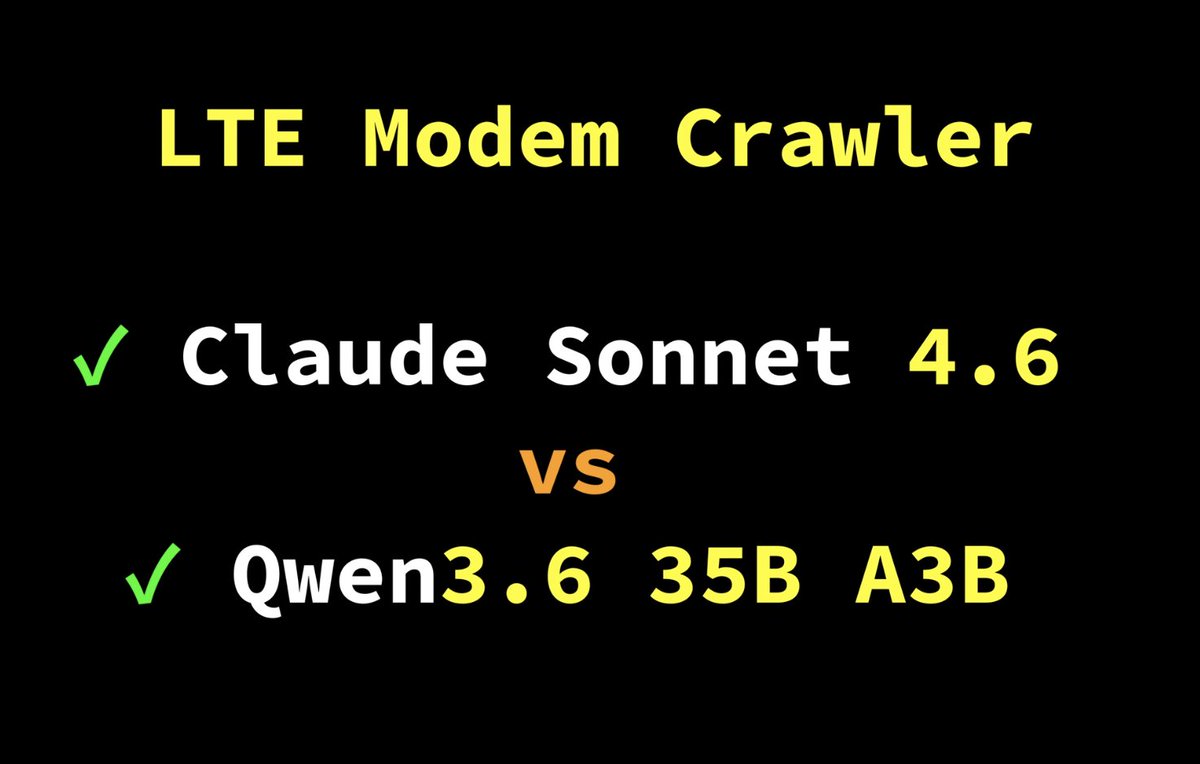

if you are starting local ai from zero, i'll drop the full guide in the open source release, but here's the short version: any modern desktop with a single rtx 3090 or 4090 (24gb vram class), llama.cpp built with cuda, qwen 3.6 27b dense at q4_k_m gguf from unsloth, and hermes agent or opencode as the harness driving it. -ngl 99 to offload everything to gpu, -c 262144 for 256k context, -fa on for flash attention, and a q4_0 kv cache (-ctk q4_0 -ctv q4_0) to fit it all in 21gb. the rest is the prompt. that is the whole stack.
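A rough sketch of how those settings might be assembled into a `llama-server` command line, written in Python so the pieces are easy to tweak. The model path is a hypothetical placeholder, not something from the post, and the `-fa on` form assumes a recent llama.cpp build (older builds took `-fa` as a bare switch).

```python
import shlex


def build_llama_server_cmd(model_path, n_gpu_layers=99, ctx_size=262144, kv_type="q4_0"):
    """Assemble a llama-server argument list from the settings in the post."""
    return [
        "llama-server",
        "-m", model_path,           # path to the GGUF file (hypothetical here)
        "-ngl", str(n_gpu_layers),  # offload all layers to the GPU
        "-c", str(ctx_size),        # context window in tokens (262144 = 256k)
        "-fa", "on",                # flash attention
        "-ctk", kv_type,            # quantized K cache
        "-ctv", kv_type,            # quantized V cache
    ]


# Hypothetical model filename, used only for illustration.
cmd = build_llama_server_cmd("models/model-q4_k_m.gguf")
print(shlex.join(cmd))
```

Passing the list to `subprocess.run(cmd)` would launch the server, assuming `llama-server` is on the PATH and the model file exists.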

mcp was a mistake.

I'm shilling Codex so much at work, and OpenAI is not even paying me for it. It's so early; most people don't even know about the Codex app.

/goal also lands in Codex CLI 0.128.0. Our take on the Ralph loop: keep a goal alive across turns. Don't stop until it's achieved. Built by my co-worker and OpenAI mentor Eric Traut, aka the Pyright guy. One of the GOATs I get to work with daily.
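The general idea of "keep a goal alive across turns" can be sketched as a plain loop. This is only a toy illustration, not how Codex CLI implements `/goal`; `run_until_goal`, `take_turn`, `is_achieved`, and the `max_turns` cap are all hypothetical names invented for the sketch.

```python
def run_until_goal(goal, take_turn, is_achieved, max_turns=50):
    """Keep taking turns toward one fixed goal until it is achieved or the cap is hit."""
    state = {"goal": goal, "turns": []}
    for _ in range(max_turns):
        if is_achieved(state):
            break
        # The goal stays in the state, so every turn sees it alongside the history.
        state["turns"].append(take_turn(state))
    return state


# Toy stand-ins for an agent turn and a completion check (assumptions).
def take_turn(state):
    return f"step {len(state['turns']) + 1} toward: {state['goal']}"


def is_achieved(state):
    return len(state["turns"]) >= 3


final = run_until_goal("make the tests pass", take_turn, is_achieved)
print(len(final["turns"]))  # 3
```

The cap on turns is a design choice for the sketch: a loop that never stops on its own needs some outer limit in case the completion check never passes.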

You can now keep codex going for days. With GPT-5.5 it will build an entire OS kernel for you if you ask, or find critical bugs in a codebase, or optimize your database schemas, or… the options are endless.