KAUST Vision CAIR group

@KAUSTVisionCAIR

KAUST VISION CAIR (Computer Vision, Core AI Research) group led by Prof. @moElhoseiny. @KAUST_News https://t.co/wpPoM4iiYj…

@CVPR @HaoSuLabUCSD @tolga_birdal 📣 Call for Papers: As part of C3DV, we invite researchers to submit papers on topics related to 3D compositional vision. 📅 Non-archival papers deadline: May 15th. bit.ly/4c2BsQD 🧵 2/4

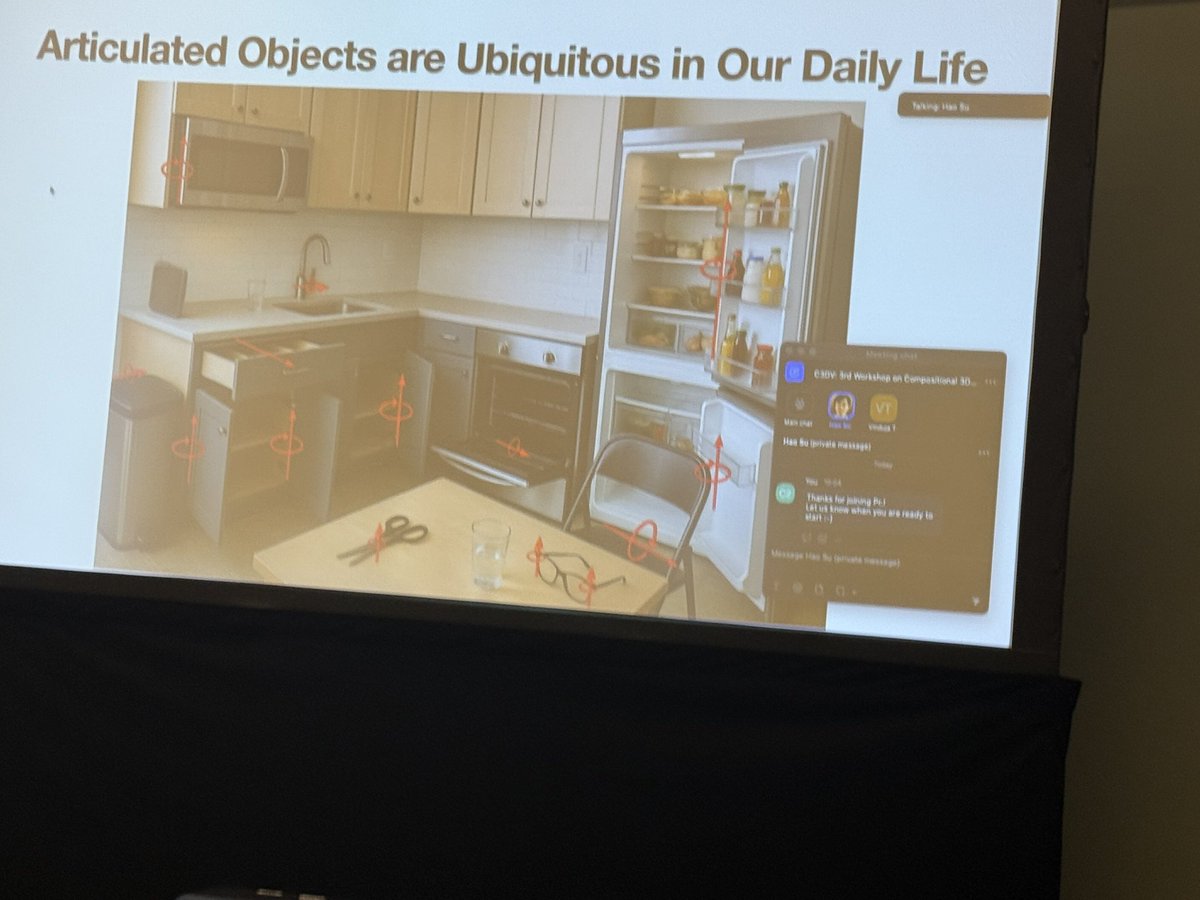

Join us for the Workshop on Compositional 3D Vision (C3DV) #CVPR2025! @CVPR 🏆 Challenges: bit.ly/4c8DfDS 📢 Call for Papers: bit.ly/4c2BsQD C3DV will feature two exciting challenges and a fantastic lineup of speakers! 🧵👇

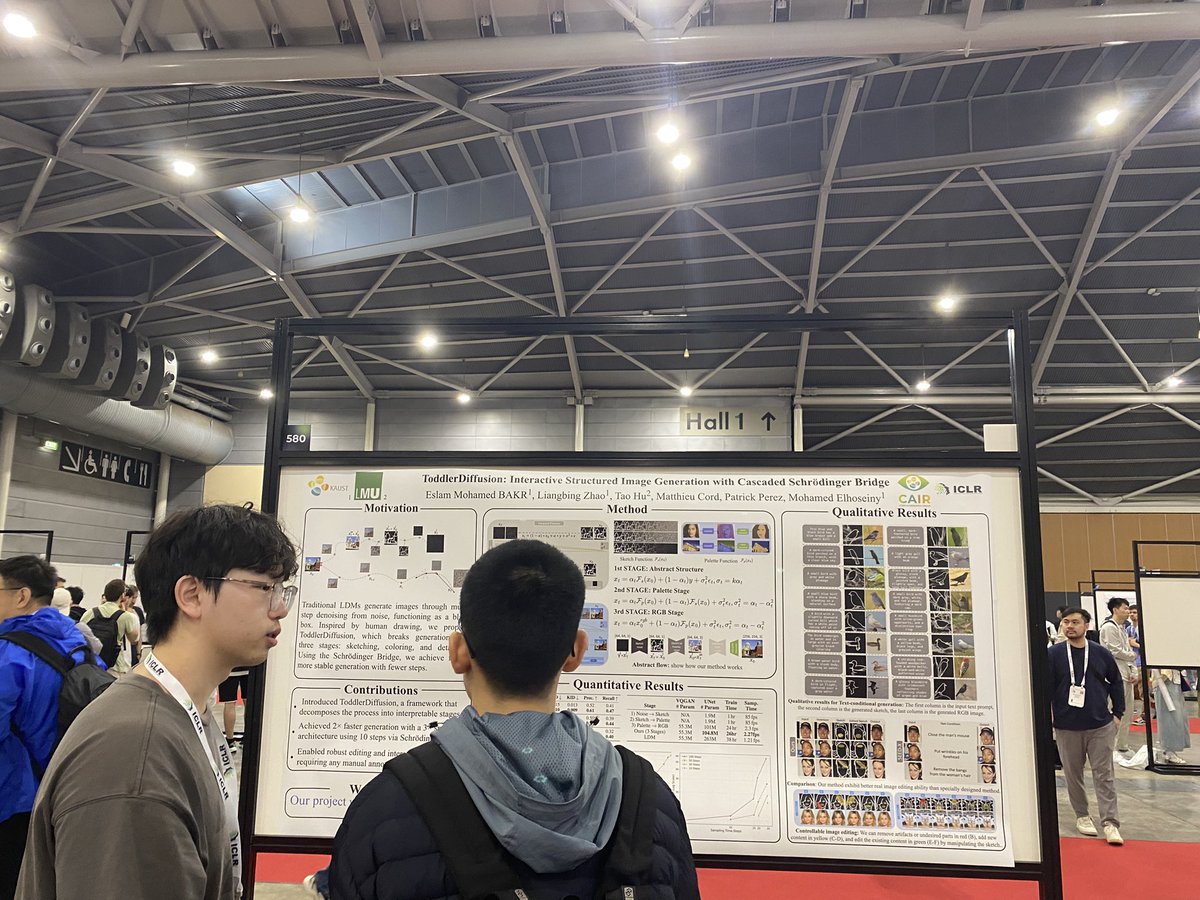

#ICLR 2025 🚀 Excited to share that three papers have been accepted at ICLR 2025! 🎉 Huge thanks to my incredibly talented students and collaborators for their dedication and hard work—this wouldn't have been possible without you!

🚨VideoLLM from Meta!🚨 LongVU: Spatiotemporal Adaptive Compression for Long Video-Language Understanding

📝Paper: huggingface.co/papers/2410.17…

🧑🏻💻Code: github.com/Vision-CAIR/Lo…

🚀Project (Demo): vision-cair.github.io/LongVU

We propose LongVU, a video LLM with a spatiotemporal adaptive compression mechanism designed for real-world hour-long video understanding. LongVU adaptively reduces the number of video tokens by leveraging (1) DINOv2 feature similarity across frames, (2) cross-modal text-frame similarity, and (3) temporal frame similarity.

1. High quality on video-based QA: 67.6% on EgoSchema, 66.9% on MVBench, 65.4% on MLVU and 59.5% on VideoMME long
2. +5% accuracy boost on average across various video understanding benchmarks compared to LLaVA-OneVision and VideoChat2
3. Our edge model, LongVU-3B, also outperformed 4B counterparts such as VideoChat2(Phi-3) and Phi-3.5-vision-instruct by a large margin.

With: @xiaoqian_shen @liuzhuang1234 @Hu_Hsu @garvinchen2 @klightlm @zechunliu @balakrishnan_vr @Fanyi_Xiao @hyunwoojkim @bilgeesra @raghuraman @moElhoseiny @vikasc

Announcing our hybrid panel: Computer Vision in the Real World! 🌿 🗓️ 09/30 at 11:50 am CEST 📍 Attend in person at ECCV or virtually Explore the challenges in real-world CV deployment for biodiversity and conservation. #AIforConservation #CV4E