ttt

Every time. They're failing to separate the output block upon <end_of_thinking> token. Now why would that be the case? I hope that it *is* a new model, perhaps with a new tokenizer, perhaps because it's built for reasoning_effort; and the webui isn't 100% updated yet.
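For readers unsure what "separating the output block" involves, here is a minimal sketch of how a front end might split raw model text on a delimiter. The marker string is copied from the post above; the function name and the fallback behaviour are hypothetical, and the real web UI may work quite differently.

```python
# Marker spelled as in the post; the actual token string may differ.
END_OF_THINKING = "<end_of_thinking>"


def split_reasoning(raw: str, marker: str = END_OF_THINKING) -> tuple[str, str]:
    """Split raw model text into (reasoning, answer) at the first marker.

    If the marker never appears, everything is returned as the answer,
    so reasoning and output end up in one block.
    """
    reasoning, found, answer = raw.partition(marker)
    if not found:
        return "", raw.strip()
    return reasoning.strip(), answer.strip()
```

Under this sketch, a model that emits a differently spelled marker (for example after a tokenizer change) would fall into the no-marker branch, which would look like the failure described above.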

they sure do, but the first legitimate paper on "how to reproduce o1 from scratch" will be called something like DeepSeek-R1: chain of thought with robust self-correction using process reward models, Shao et al 2025, and weights will land on hf hours before it hits arxiv

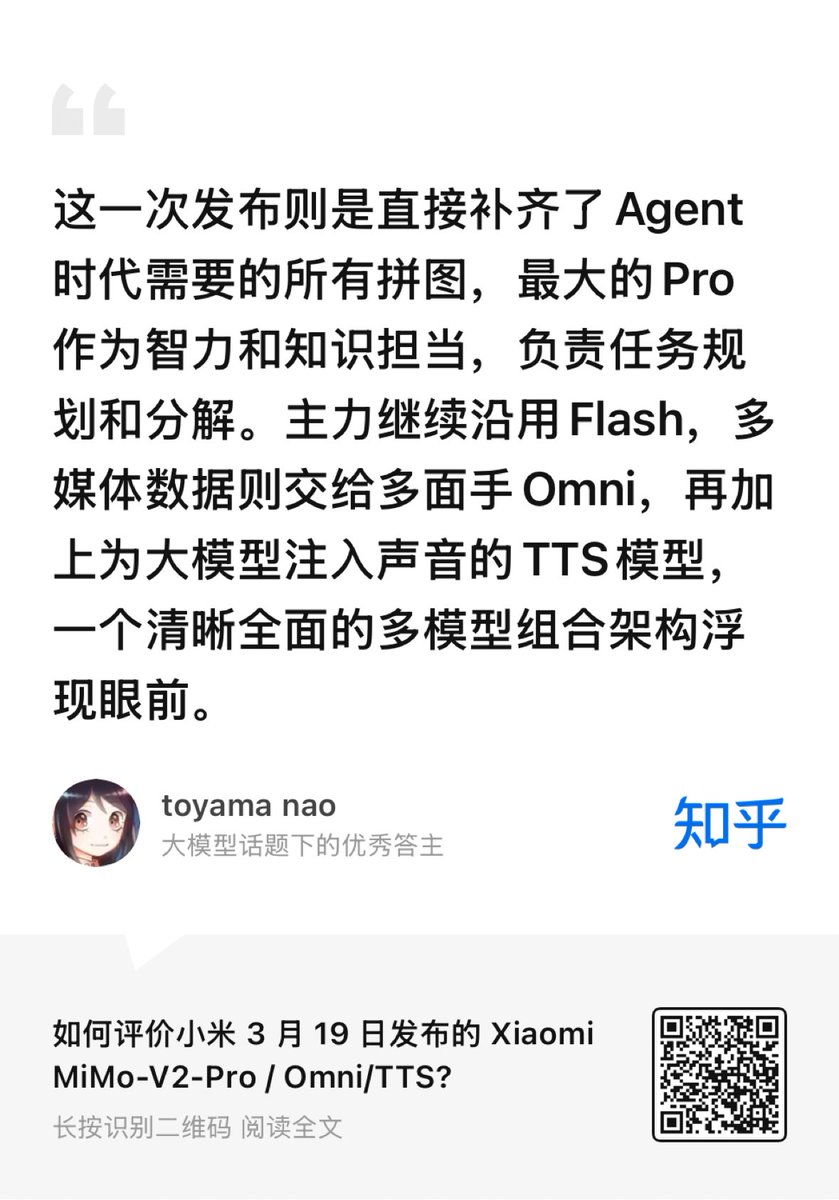

Rumors say DeepSeek V4 (multimodal) could drop as soon as next week. What might actually be new in it? 💬

From a technical & special perspective, Zhihu contributor 普杰 argues the key isn't products: it's which new technologies land in the model.

Historically, each DeepSeek release shipped a core technique 👇
• R1 → Chain-of-Thought reasoning
• V3.1 → Native reasoning control
• V3.2 → New attention (DSA) + tool-use CoT + competition-level RL

So the real question: what tech does DeepSeek still have that hasn't landed yet? Possible candidates include:
1️⃣ NSA attention
2️⃣ DeepSeek-OCR2 multimodality
3️⃣ Enhanced MHC residual architecture
4️⃣ Engram (memory–reasoning separation)
5️⃣ Domestic GPU support
6️⃣ New efficient data structures
7️⃣ Reinforcement learning upgrades

Let's break them down.

1️⃣ NSA attention: very likely already deployed
A tester fed the model an entire novel (~1M tokens) using a rarely circulated book to avoid pretraining leakage. The model could summarize the plot, retrieve passages, reconstruct story structure and describe characters. All in one output, with no obvious errors, and with very fast inference, suggesting NSA handles ultra-long context.

2️⃣ DS-OCR2 multimodality: probably not yet
The current web model (often called "V4 Lite") still appears text-only. Also, DeepSeek-OCR2 reportedly uses Qwen's vision encoder, suggesting DeepSeek may still be building its own vision stack.

3️⃣ Enhanced MHC residual architecture: possible
MHC claims better scaling efficiency for large models. Given DeepSeek's tight compute budget, adopting training-efficient architecture would make sense.

4️⃣ Engram: unlikely this release
Engram should significantly reduce hallucinations. But V4 Lite doesn't show dramatic improvements, and the paper appeared quite recently, so it may have missed the V4 timeline.

5️⃣ Domestic GPU support: plausible
Unlike big tech firms that can train overseas, DeepSeek has stronger incentives to optimize for domestic hardware. Compute constraints make this increasingly attractive.

6️⃣ New efficient data structures: very likely
DeepSeek consistently pushes extreme efficiency.
• V3's cost advantage relied heavily on FP8
• Competitors like Kimi are already exploring INT4
So V4 could experiment with INT4, NF4, or new FP8 formats.

7️⃣ Reinforcement learning: possible surprises
DeepSeek has strong RL capabilities. Both R1 and V3.2 Speciale relied heavily on RL training. V4 could push further with agentic RL, potentially targeting Claude-level reasoning or beyond.

V4 may arrive within weeks, and we'll quickly see which predictions are right.

📖 Original discussion: zhihu.com/question/20115…
#DeepSeek #DeepSeekV4 #AI #LLM #Multimodal #Research #Tech

The 10-digit addition transformer race is getting ridiculous and fun! Started with 6k params (Claude Code) vs 1.6k (Codex). We're now at 139 params hand-coded and 311 trained. I made AdderBoard to keep track:

🏆 Hand-coded:
139p: @w0nderfall
177p: @xangma

🏆 Trained:
311p by @reza_byt
456p by @yinglunz
777p by @YebHavinga

Rules are simple:
- Real autoregressive transformer (attention required)
- ≥99% on 10K held-out pairs
- No hard-coding the algorithm in Python

Submit via GitHub issue/PR.
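The "≥99% on 10K held-out pairs" rule can be sketched as a small scoring harness. This is an illustration only: the post does not show AdderBoard's real evaluation script, so every function name here is hypothetical, and the operand range (0 to 10^10 - 1) is an assumption about what "10-digit" means. The two `predict` functions are plain-Python stand-ins for a model, not valid submissions.

```python
import random


def make_pairs(n: int, digits: int = 10, seed: int = 0) -> list[tuple[int, int]]:
    """Sample n operand pairs with up to `digits` digits each (assumed range)."""
    rng = random.Random(seed)
    hi = 10**digits - 1
    return [(rng.randint(0, hi), rng.randint(0, hi)) for _ in range(n)]


def accuracy(predict, pairs) -> float:
    """Fraction of pairs for which predict(a, b) equals the exact sum."""
    correct = sum(1 for a, b in pairs if predict(a, b) == a + b)
    return correct / len(pairs)


def passes(predict, pairs, threshold: float = 0.99) -> bool:
    """Apply the >=99% rule from the post."""
    return accuracy(predict, pairs) >= threshold


def oracle(a: int, b: int) -> int:
    """Stand-in for a perfect model."""
    return a + b


def no_carry_add(a: int, b: int) -> int:
    """Stand-in for a broken model: adds digit by digit but drops every carry."""
    result, place = 0, 1
    while a or b:
        result += ((a % 10 + b % 10) % 10) * place
        a, b, place = a // 10, b // 10, place * 10
    return result


pairs = make_pairs(10_000)
print(passes(oracle, pairs), passes(no_carry_add, pairs))
```

A model that ignores carries fails badly here because almost every random 10-digit pair produces at least one carry, which is part of why the task is a meaningful test for tiny transformers.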

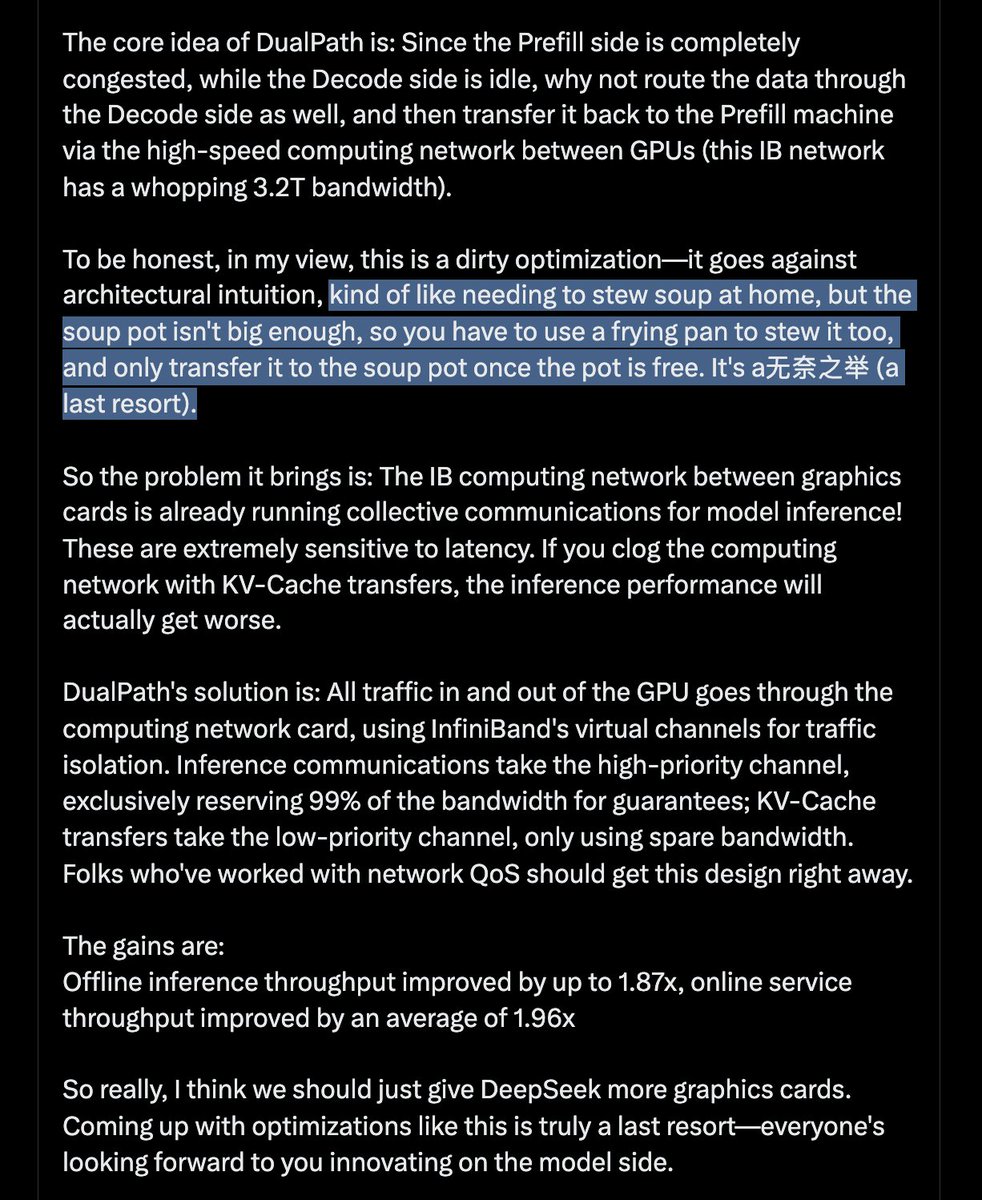

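DeepSeek has a new paper out! Here's my walkthrough. Honestly, reading this one left me with mixed feelings.

DeepSeek, together with Peking University and Tsinghua, published a new paper, DualPath. It solves a problem many people may not have realized exists: in agent workloads, the GPU spends most of its time not computing but waiting for data to be moved in from disk.

First, some background. Everyone knows AI agent tasks are booming right now. The problem: more than 95% of each turn's context is "old data" from previous turns (KV-Cache), and only a tiny bit is new. The GPU doesn't have much work to do, but it has to wait for the earlier KV-Cache to be read out of storage before it can start.

The mainstream inference architecture today is Prefill-Decode disaggregation (PD disaggregation): the Prefill engine handles understanding the input, and the Decode engine handles generating the output. Under this architecture, all KV-Cache can only be loaded from storage into the Prefill engine, so the Prefill-side storage NIC (only 400G of bandwidth) gets saturated.

So what to do? Add more NICs? Hold on: the Decode side has storage NICs too, and they are slacking off during the Prefill phase! So the thing to do is put them to work.

DualPath's core idea: since the Prefill side is jammed and the Decode side is idle, why not route data through the Decode side as well, then send it back to the Prefill machines over the high-speed inter-GPU compute network (this IB network has a full 3.2T of bandwidth).

Honestly, in my view this is a dirty optimization that goes against architectural intuition. It's a bit like making soup at home when the stockpot can't hold it all, so you simmer some in the wok too and pour it into the stockpot once that frees up. A move born of necessity.

The problem this creates: the IB compute network between GPUs is also carrying the collective communication for model inference! That traffic is extremely latency-sensitive. If KV-Cache transfers clog the compute network, inference performance gets worse instead.

DualPath's solution: all traffic in and out of the GPU goes through the compute NIC, and InfiniBand virtual lanes are used for traffic isolation. Inference communication runs on the high-priority lane with a 99% bandwidth guarantee to itself; KV-Cache transfers run on the low-priority lane and only pick up idle bandwidth. Anyone who has worked on network QoS should get this design.

The gains: offline inference throughput improves by up to 1.87x, and online serving throughput improves by 1.96x on average.

So really, I think DeepSeek should be given more GPUs. Doing this kind of optimization is a move born of necessity; everyone is looking forward to you innovating on the model itself.

Read online: swim.kcores.com/DualPath%20Bre…
Past collection: github.com/karminski/teac…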
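The bandwidth argument in this thread can be made concrete with some back-of-the-envelope arithmetic. This is a rough sketch, not the paper's model: the 400G and 3.2T figures come from the post and are read here as Gbit/s, the Decode-side storage NIC is assumed to match the Prefill-side one, and the cache size and inference traffic level are invented for illustration.

```python
def load_seconds(cache_gbytes: float, path_gbps: list[float]) -> float:
    """Idealized time to load a cache when the listed paths' bandwidths add up."""
    return cache_gbytes * 8 / sum(path_gbps)


def spare_gbps(link_gbps: float, inference_gbps: float) -> float:
    """Bandwidth left for low-priority traffic once inference has been served."""
    return max(0.0, link_gbps - inference_gbps)


STORAGE_NIC_GBPS = 400    # from the post; Decode-side NIC assumed identical
COMPUTE_NET_GBPS = 3200   # from the post ("3.2T")

cache_gb = 100            # hypothetical KV-Cache size in gigabytes
inference_gbps = 2000     # hypothetical collective-communication load

# Baseline: everything goes through the Prefill-side storage NIC.
single_path = load_seconds(cache_gb, [STORAGE_NIC_GBPS])

# Detour via the Decode side: capped by that side's storage NIC and by
# whatever the compute network has left after inference traffic.
detour = min(STORAGE_NIC_GBPS, spare_gbps(COMPUTE_NET_GBPS, inference_gbps))
dual_path = load_seconds(cache_gb, [STORAGE_NIC_GBPS, detour])

print(f"single path: {single_path:.2f} s, dual path: {dual_path:.2f} s, "
      f"speedup: {single_path / dual_path:.2f}x")
```

Under these assumptions the ideal ceiling is 2x, since a second equal-sized storage path doubles the load bandwidth. The sketch also shows why the low-priority lane matters: if inference traffic fills the compute network, `spare_gbps` drops to zero and the detour contributes nothing rather than slowing inference down.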

DeepSeek got called out for scraping 150k Claude messages. So I'm releasing 155k of my personal Claude Code messages with Opus 4.5. I'm also open-sourcing tooling to help you fetch your data, redact sensitive info & make it discoverable on HF. Link below to liberate your data!

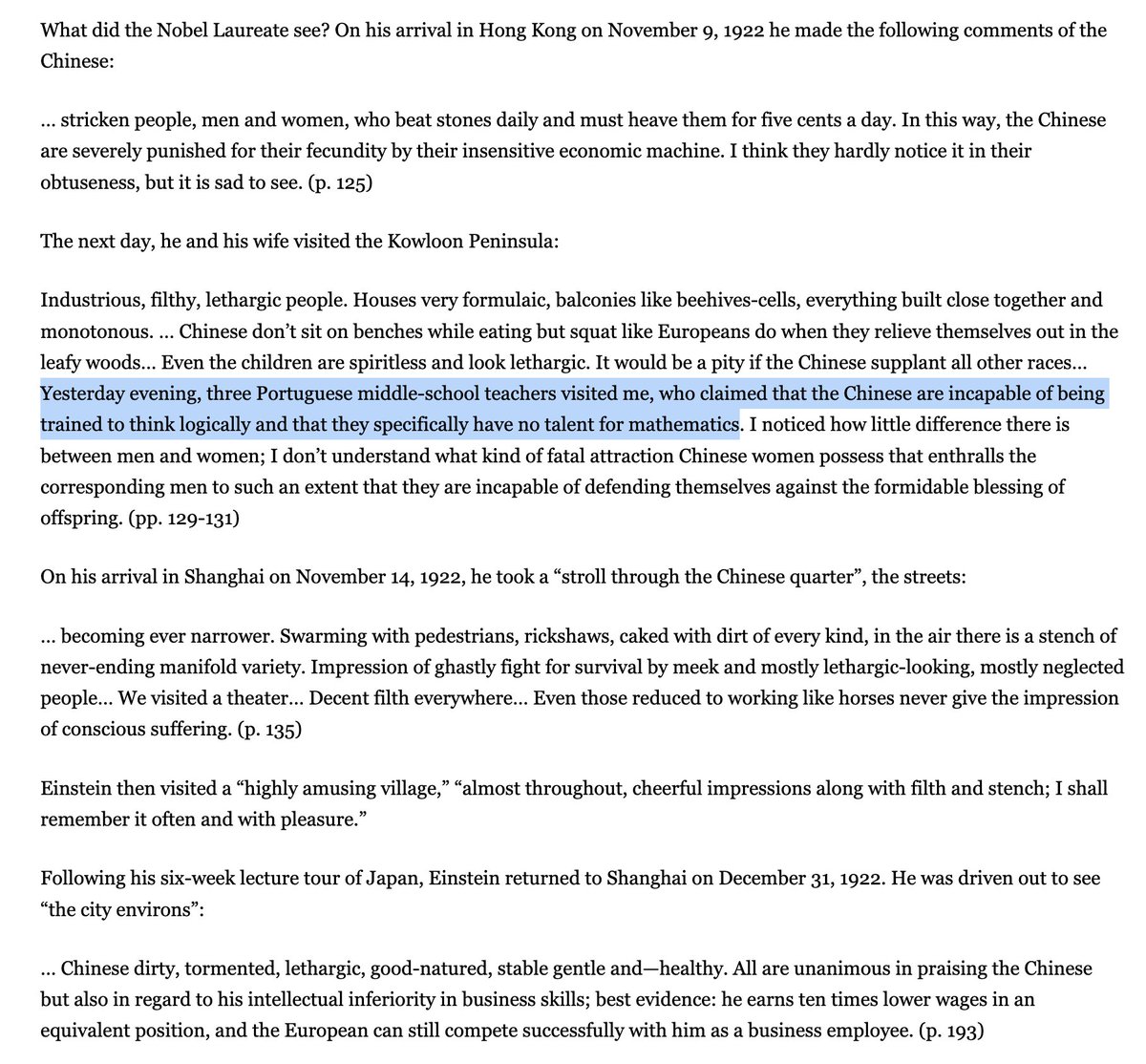

Einstein too despised the Chinese. Low izzat race Tolerance of hard repetitive work is generally a mark of subhumanity to cultures without strong agricultural tradition: Brahmins, Jews, modern Westoids. They will mock the "peasant" Asian grindset ruthlessly. underrated bias

🦞 OpenClaw 2026.1.30
🐚 Shell completion
🆓 Kimi K2.5 + Kimi Coding: run your claw for free
🔐 MiniMax OAuth: one more model just a login away
📱 Telegram got a glow-up: 6 fixes from threading to HTML rendering

Plus a bunch of community-contributed fixes across LINE, BlueBubbles, routing, security & OAuth.

The lobster provides 😏 github.com/openclaw/openc…

@teortaxesTex Will it surpass Kimi K2.5?

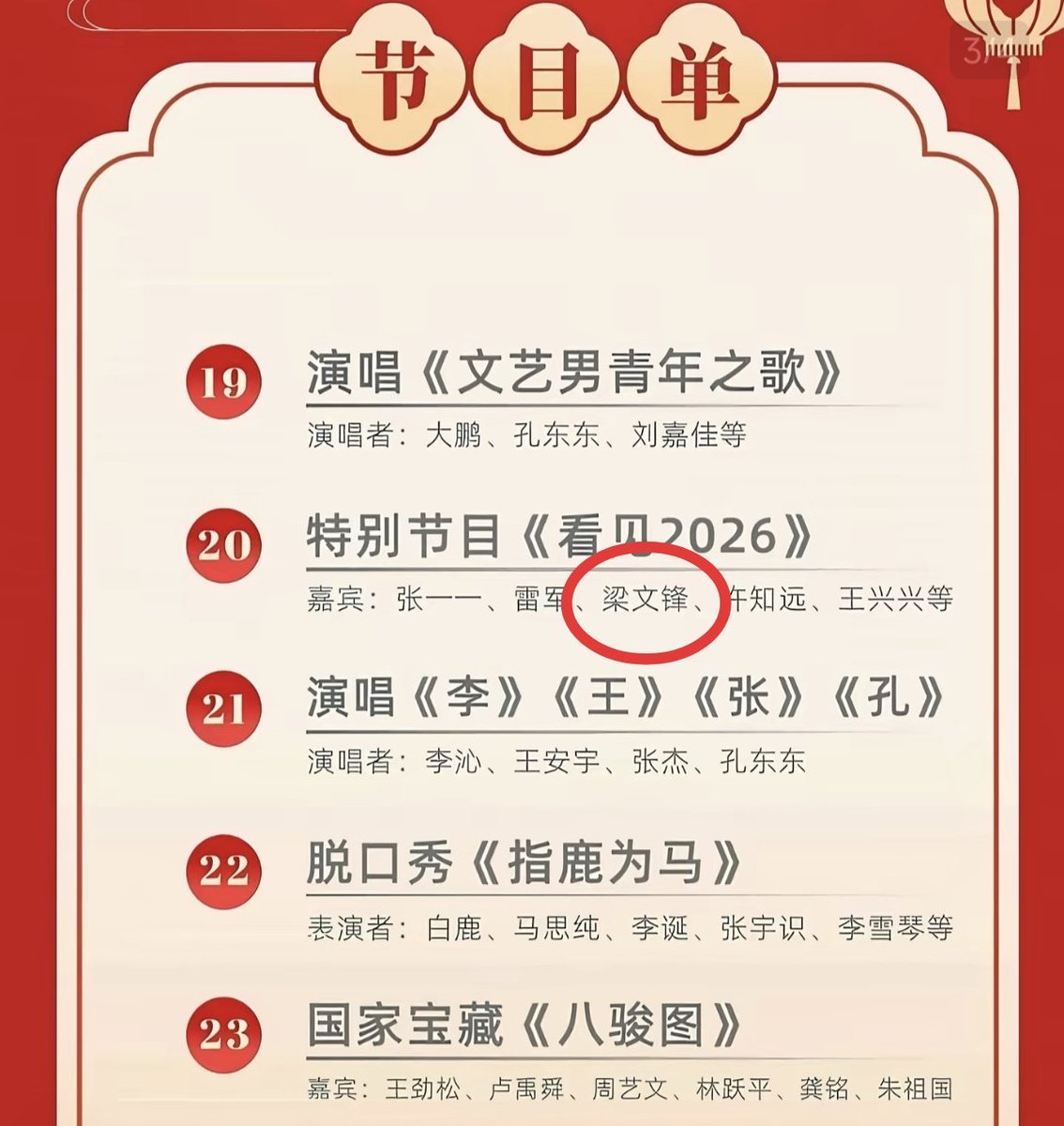

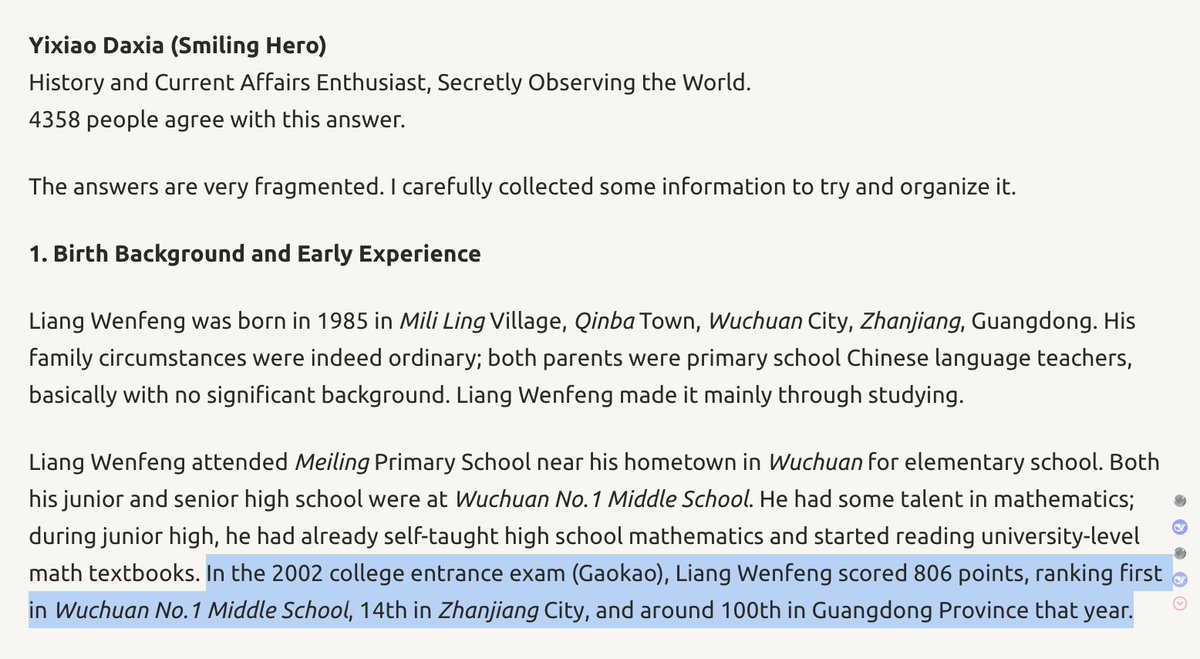

Discovered on LessWrong: a classmate's insight into Wenfeng the person. We already knew he's a huge outlier, polymath, maverick etc. etc. But what is new here: he's a strong electrical engineer. It never was software-only. I can see how he feels they could make their own chips.