Intern Large Models

102 posts

@intern_lm

Intern-series large models by Shanghai AI Laboratory.

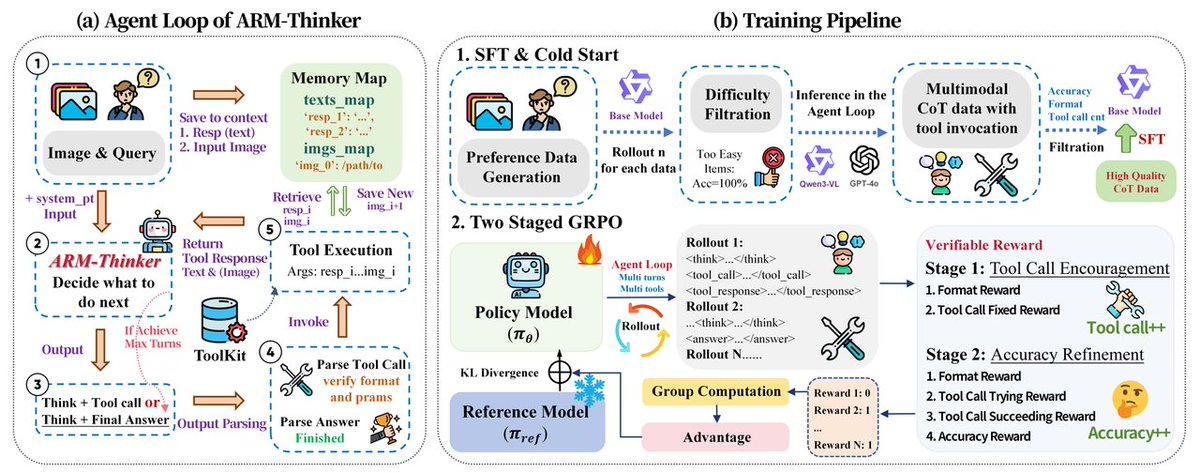

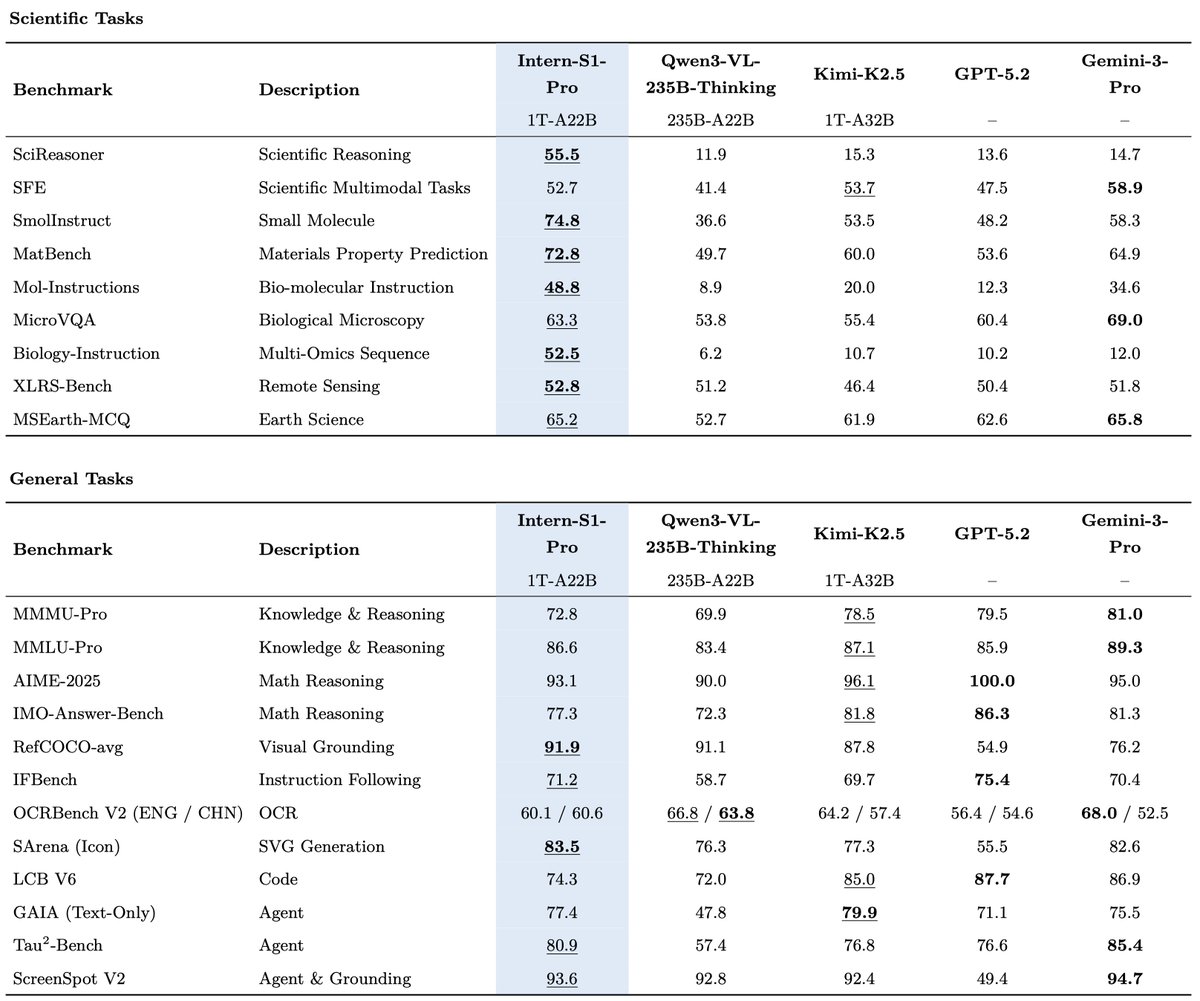

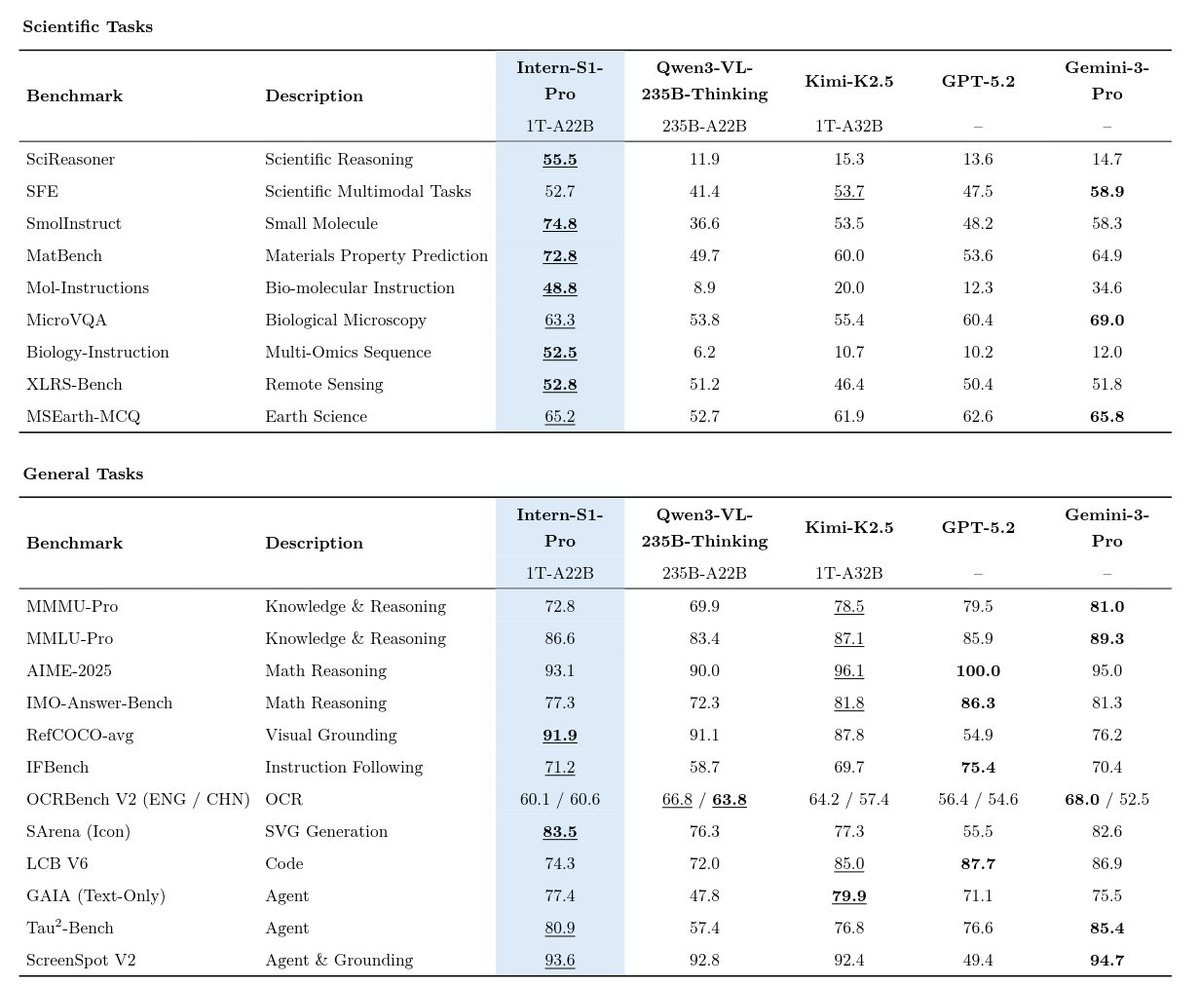

🚀 Introducing Intern-S1-Pro, an advanced 1T MoE open-source multimodal scientific reasoning model.

1. SOTA scientific reasoning, competitive with leading closed-source models across AI4Science tasks.
2. Top-tier performance on advanced reasoning benchmarks and strong general multimodal performance.
3. 1T-A22B MoE training efficiency: STE routing (dense gradient for router training) and grouped routing for stable convergence and balanced expert parallelism.
4. Fourier Position Encoding (FoPE) plus upgraded time-series modeling for better physical signal representation; supports long, heterogeneous time series (10^0–10^6 points).

😍 Intern-S1-Pro is now supported by vLLM @vllm_project and SGLang @sgl_project @lmsysorg, with more ecosystem integrations on the way.

☺️ Model: @huggingface huggingface.co/internlm/Inter…
☺️ GitHub: github.com/InternLM/Inter…
☺️ Try it now at: chat.intern-ai.org.cn

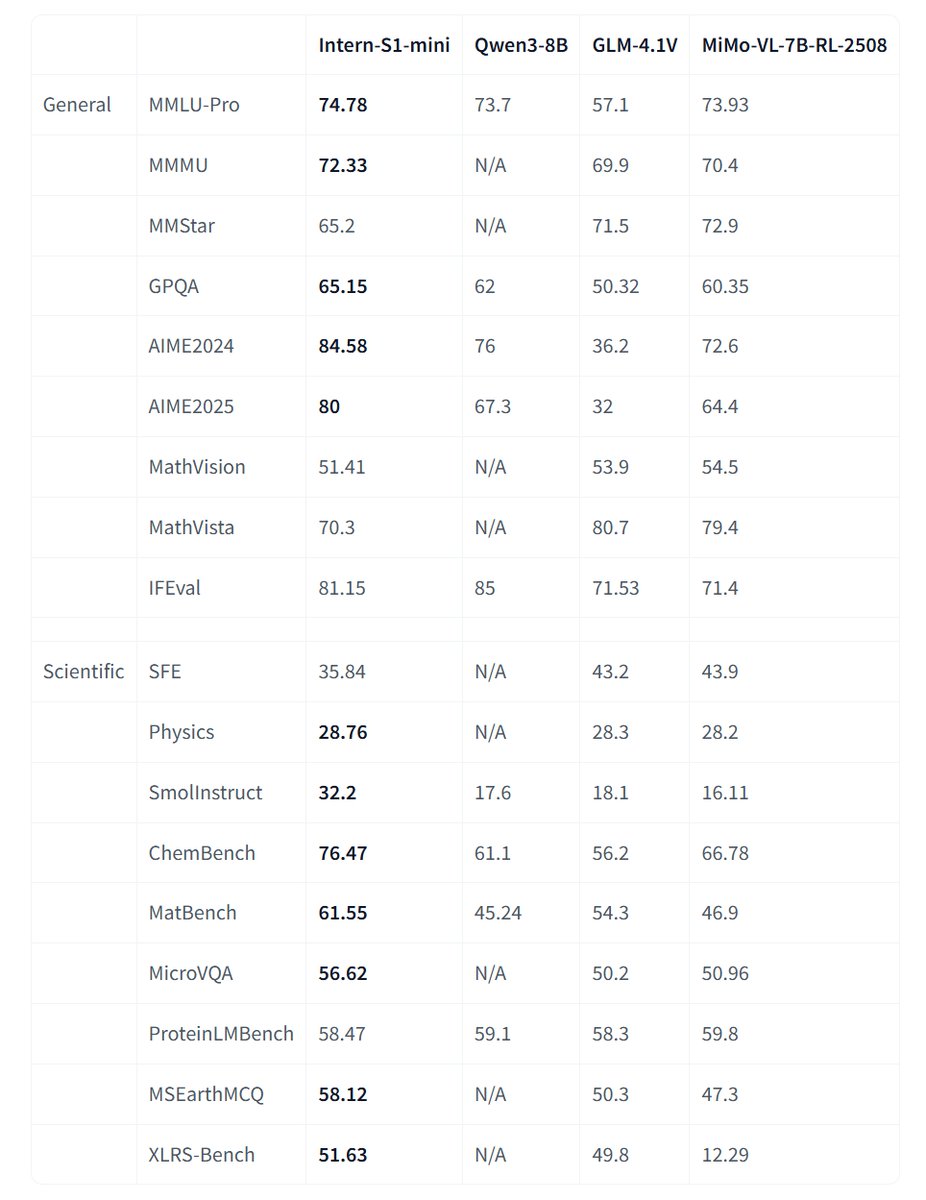

🔥 China's open-source VLM boom: Intern-S1, MiniCPM-V-4, GLM-4.5V, Step3, OVIS.

🧐 Join the AI Insight Talk with @huggingface, @OpenCompassX, @ModelScope2022 and @ZhihuFrontier.

🚀 Tech deep-dives and breakthroughs
🚀 Roundtable debates
⏰ Aug 21, 5 AM PDT
📺 Live: youtube.com/live/kh0WSMoVZ…