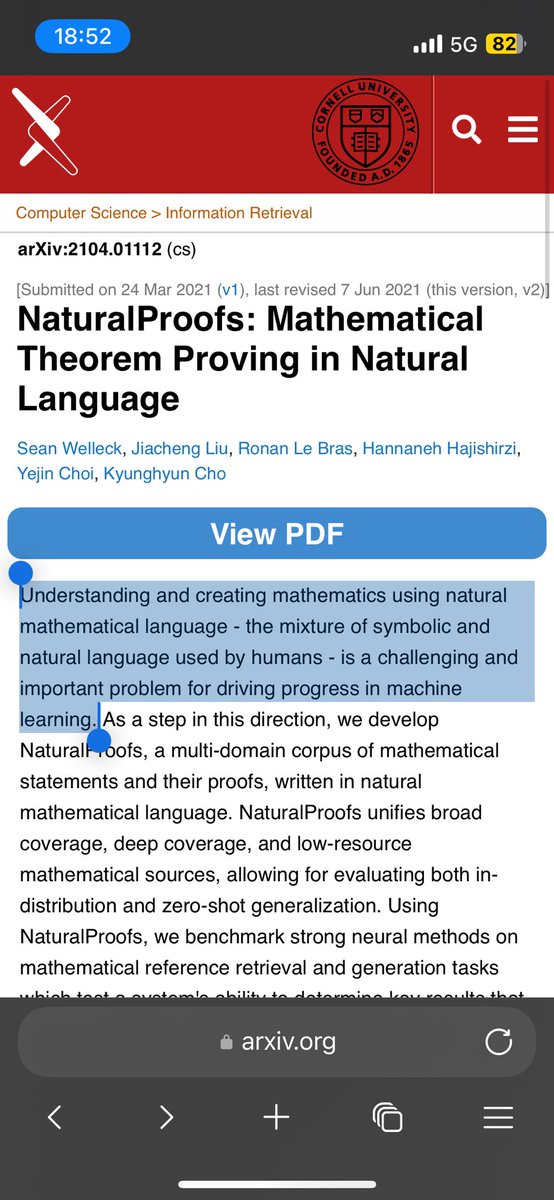

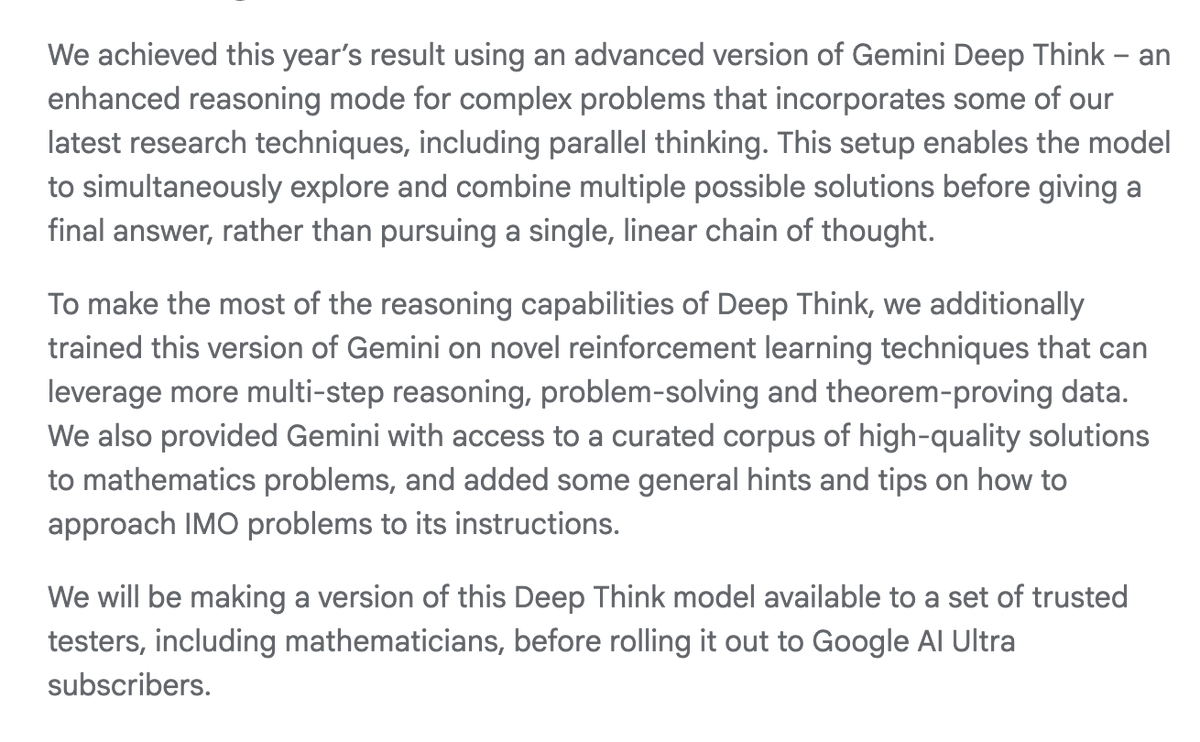

Our IMO journey continues: the yolo-run model that we trained a week before #imo2025, despite every chance of failure, magically achieves SOTA across a wide range of reasoning tasks, from maths to coding to challenging knowledge questions. I'm very excited that we have now delivered the IMO 🥇 system into the hands of mathematicians and a simplified version (results below) to all Google AI Ultra subscribers.