Andreas Kirsch 🇺🇦

@BlackHC

My opinions only here. 👨‍🔬 RS DeepMind, Midjourney 1y 🧑‍🎓 DPhil AIMS 4.5y 🧙‍♂️ RE DeepMind 1y 📺 SWE Google 3y 🎓 TUM 👤 @nwspk

You gotta be shitting me

Holy fucking shit. Finally found the name for it. I'm going to cry. I've never been able to explain this to anyone; they never know what I'm talking about.

The Israeli/US murder of Kharazi at his house, along with his wife, was a truly despicable act: killing a reformist diplomat who served as FM for Khatami, helped develop his “Concert of Civilizations” idea, and was reportedly helping mediate the current situation.

Self-Distilled RLVR paper: huggingface.co/papers/2604.03…

Reporter: Deliberate attacks on civilian infrastructure violate the Geneva Conventions and international law. Trump: Who are you with? Reporter: I'm with the New York Times. Trump: Failing New York Times. Circulation is way down. Reporter: Are you concerned that your threat to bomb power plants and bridges amount to war crimes? Trump: No, not at all. These are disturbed people. Quiet. You no longer have credibility. You’re fake.

For those who don't know, I was an NSF postdoc with @SchmidhuberAI's PhD advisor (Schulten) back in the 90s, 1 of 2 in the country. And my PhD groupmate recently won the Nobel prize for AlphaFold. So I have some qualifications here to say 𝐲𝐞𝐚𝐡 𝐭𝐡𝐢𝐬 𝐢𝐬 𝐩𝐫𝐞𝐭𝐭𝐲 𝐚𝐜𝐜𝐮𝐫𝐚𝐭𝐞.

The core learning principle behind JEPA is predicting one representation from another in latent space. And this was already explicitly formulated in the early 1990s PMAX work. PMAX does not merely hint at this idea; it sets up the same structure: two related inputs are encoded, and a predictor learns to map one latent representation to the other, while the encoder is trained to make this prediction possible without collapsing the representation. That is exactly the defining mechanism of JEPA.

When you strip away modern terminology and architectures, both are instances of the same objective: learn representations by maximizing cross-view predictability under constraints that preserve information. What JEPA adds is not a new theoretical framework. It's just larger models, better architectures, and scaling. Of course, we could not do that in the 90s.

In that sense, Jürgen Schmidhuber made the real and original conceptual breakthrough: non-generative, latent-to-latent predictive learning.

This is typical of @ylecun's work; it's mostly derivative of others' ideas, scaled up and promoted. In contrast, @SchmidhuberAI really did pioneer a lot of these ideas. The JEPA work should have cited him. Politics >> Integrity.

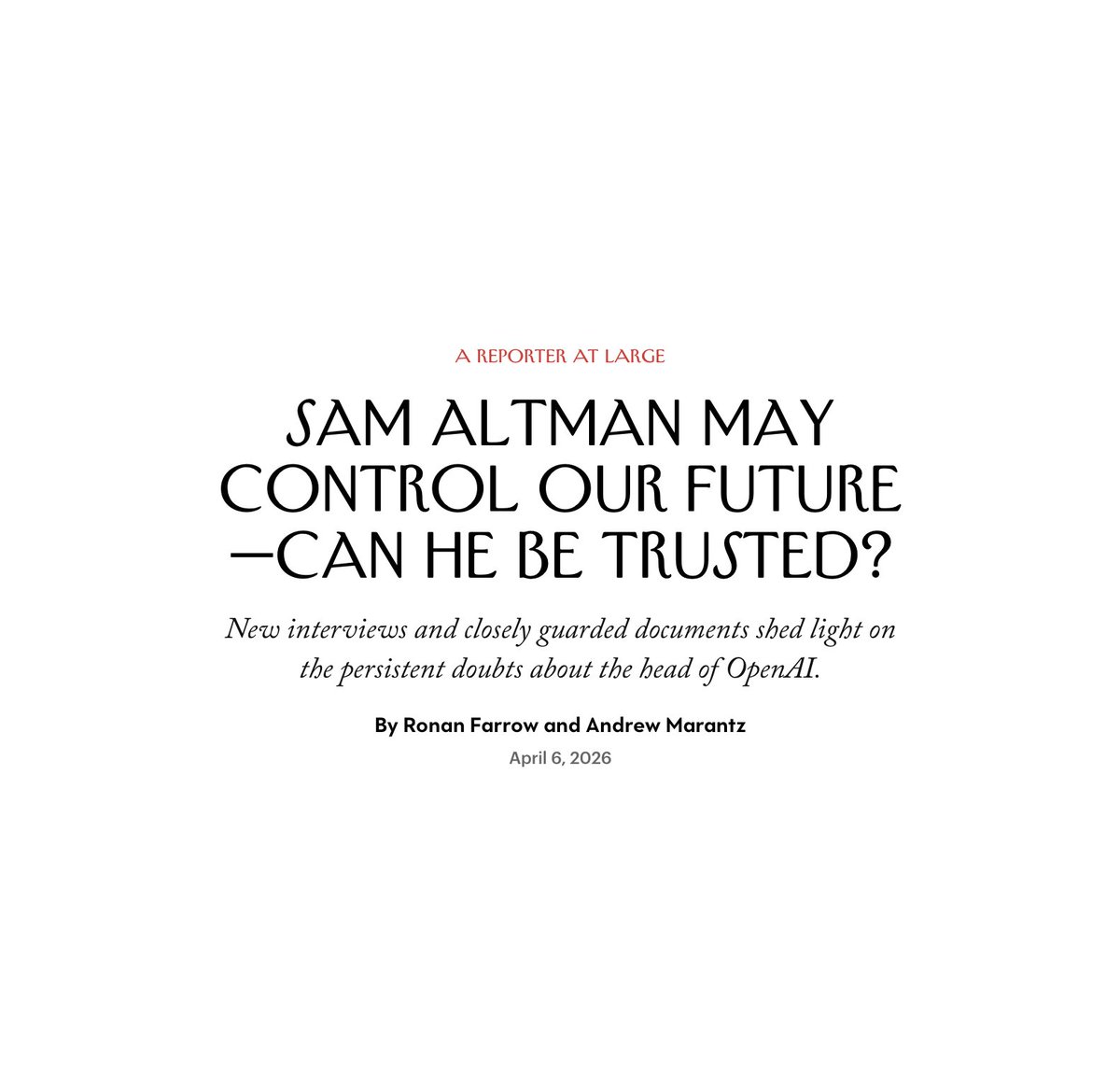

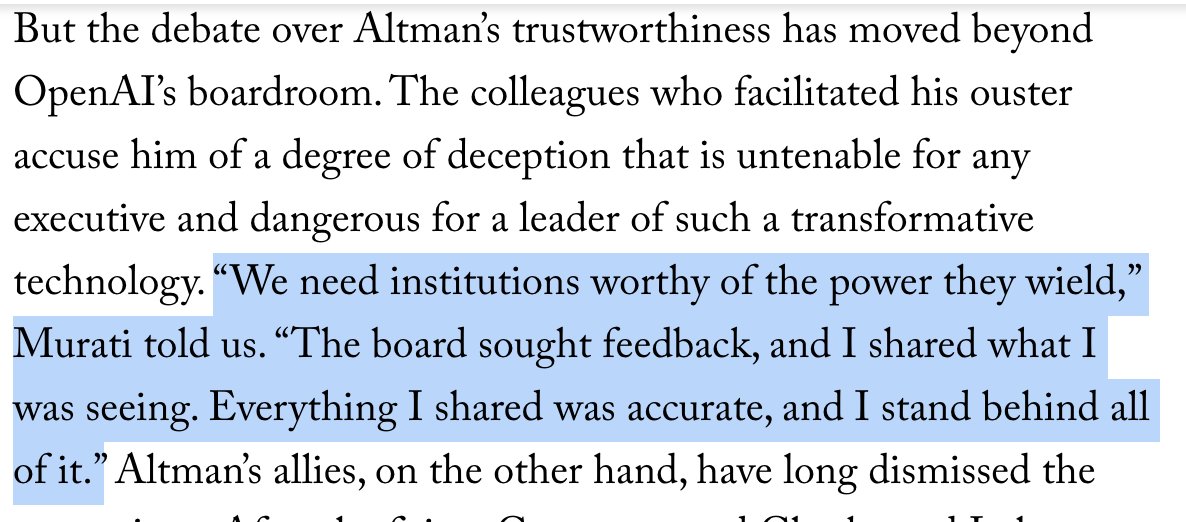

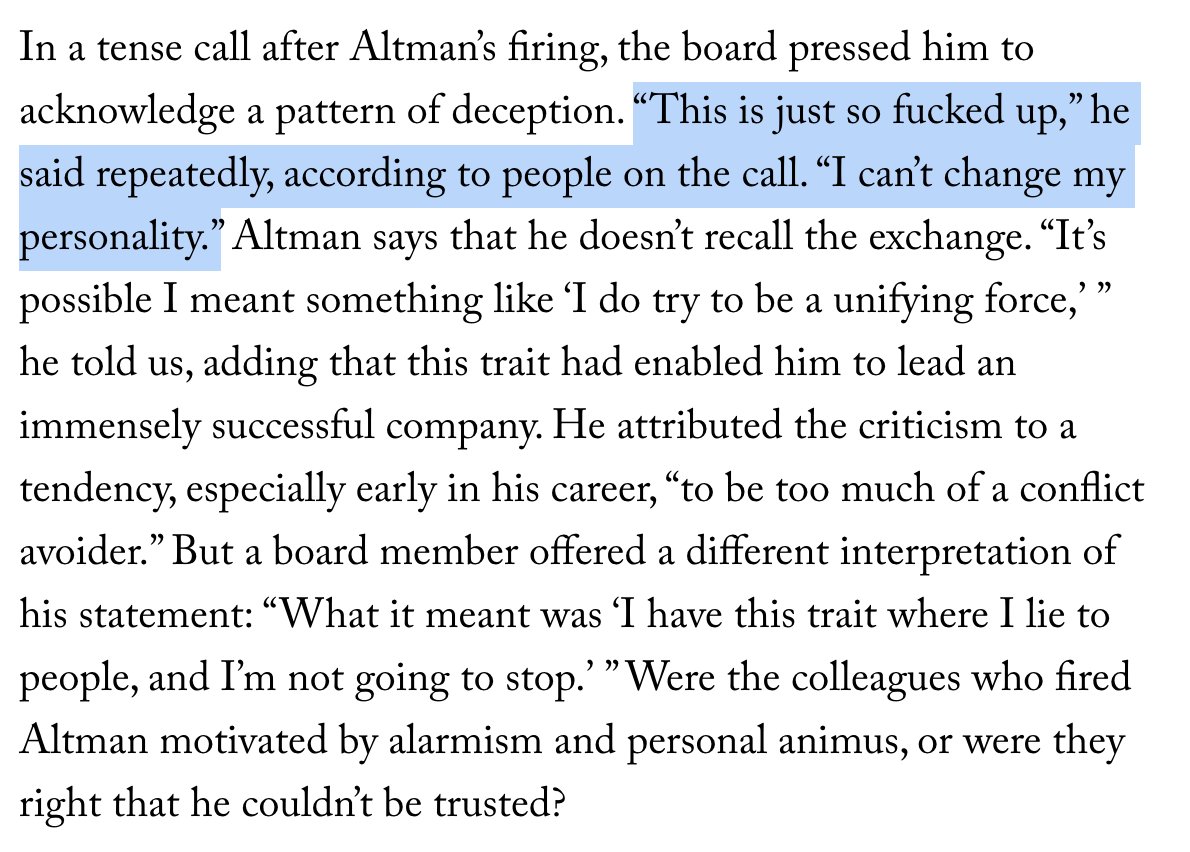
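The JEPA/PMAX post describes the shared objective as: encode two related views, predict one latent from the other, and keep the encoder from collapsing. A small loss function can make that concrete. This is a hypothetical illustration, not PMAX's or JEPA's actual formulation: the linear encoder and predictor, all function names, and the VICReg-style variance hinge used as the anti-collapse term are assumptions (the hinge is borrowed from VICReg and is only one of several anti-collapse devices). The sketch only evaluates the objective; it does not train anything.

```python
import math


def encode(x, w):
    # Hypothetical linear encoder: z = W x (w is a list of rows).
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def predict(z, p):
    # Hypothetical linear predictor mapping a context latent to a target latent.
    return [sum(pi * zi for pi, zi in zip(row, z)) for row in p]


def prediction_loss(z_pred, z_tgt):
    # Mean squared error between predicted and target latents,
    # averaged over batch items and latent dimensions.
    sq = [(a - b) ** 2 for zp, zt in zip(z_pred, z_tgt) for a, b in zip(zp, zt)]
    return sum(sq) / len(sq)


def variance_penalty(zs, gamma=1.0, eps=1e-4):
    # VICReg-style hinge (an assumed anti-collapse term): penalize every latent
    # dimension whose standard deviation across the batch falls below gamma.
    batch, dims = len(zs), len(zs[0])
    total = 0.0
    for d in range(dims):
        col = [z[d] for z in zs]
        mean = sum(col) / batch
        var = sum((c - mean) ** 2 for c in col) / (batch - 1)
        total += max(0.0, gamma - math.sqrt(var + eps))
    return total / dims


def latent_prediction_loss(x_ctx, x_tgt, w_ctx, w_tgt, p, lam=1.0):
    # Encode both views, predict the target latent from the context latent,
    # and add the anti-collapse penalty on both sets of latents.
    z_ctx = [encode(x, w_ctx) for x in x_ctx]
    z_tgt = [encode(x, w_tgt) for x in x_tgt]
    z_pred = [predict(z, p) for z in z_ctx]
    return prediction_loss(z_pred, z_tgt) + lam * (
        variance_penalty(z_ctx) + variance_penalty(z_tgt)
    )


# Toy batch: the second "view" is the first one shifted by 0.1.
X = [[2.0, 0.0], [-2.0, 0.0], [0.0, 2.0], [0.0, -2.0]]
X_view = [[a + 0.1, b + 0.1] for a, b in X]
IDENTITY = [[1.0, 0.0], [0.0, 1.0]]
ZEROS = [[0.0, 0.0], [0.0, 0.0]]

collapsed = latent_prediction_loss(X, X_view, ZEROS, ZEROS, IDENTITY)
informative = latent_prediction_loss(X, X_view, IDENTITY, IDENTITY, IDENTITY)
print(f"collapsed encoder:   {collapsed:.4f}")
print(f"informative encoder: {informative:.4f}")
```

With these toy inputs, the collapsed (all-zero) encoder reaches zero prediction error but pays the full variance penalty, so its total loss ends up higher than that of the informative identity encoder. That gap is why some constraint against collapse has to be part of the objective.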

New interviews and closely guarded documents, some of which have never been publicly disclosed, shed light on the persistent doubts about the OpenAI C.E.O. Sam Altman. @AndrewMarantz and @RonanFarrow report. newyorkermag.visitlink.me/ejw-Ob

NEWS: Massive budget cuts for US science proposed again by Trump administration. "It's an extinction-level event for science." The US government is proposing massive cuts to almost every branch of science, from NASA to the National Institutes of Health. NSF would completely eliminate the social, economic and behavioral sciences directorate. This would decimate the world's leading scientific system. nature.com/articles/d4158…

Realized the door I'm running in front of every day is Anthropic's office. Let's see attendance on a Saturday.

1/ As compute continues to grow and simulators continue to improve, it is becoming feasible to train RL agents for billions or trillions of timesteps. However, this is only useful if agents can continue learning over such long training horizons, which is far from given 👇