Aviraj Bevli

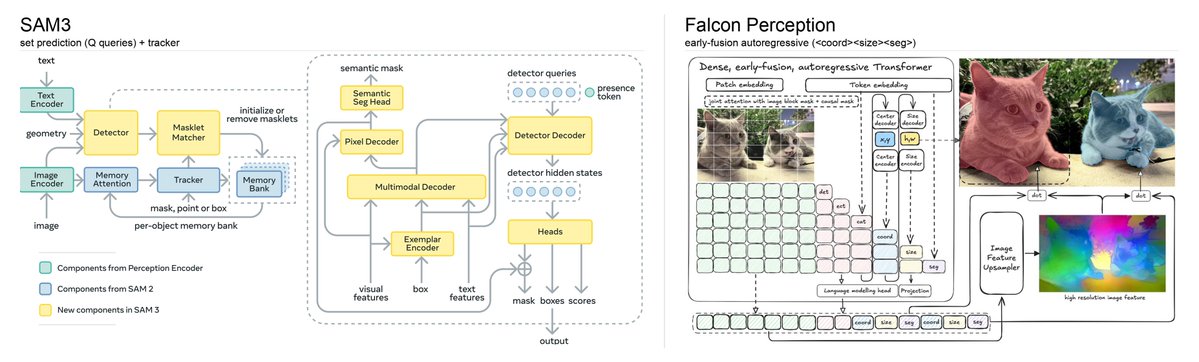

We are releasing Falcon Perception, an open-vocabulary referring expression segmentation model. Alongside it, we are releasing a 0.3B OCR model that is on par with competitors 3-10x larger.

Current systems tackle referring expression segmentation with complex pipelines (separate encoders, late fusion, matching algorithms). We developed a novel, simpler "bitter" approach: one early-fusion Transformer (image + text from the first layer) with a shared parameter space, letting scale + training signal do the work.

Please check out our work!

📄 Paper: arxiv.org/pdf/2603.27365
💻 Code: github.com/tiiuae/falcon-…
🎮 Playground: vision.falcon.aidrc.tii.ae
🤗 Blogpost: huggingface.co/blog/tiiuae/fa…

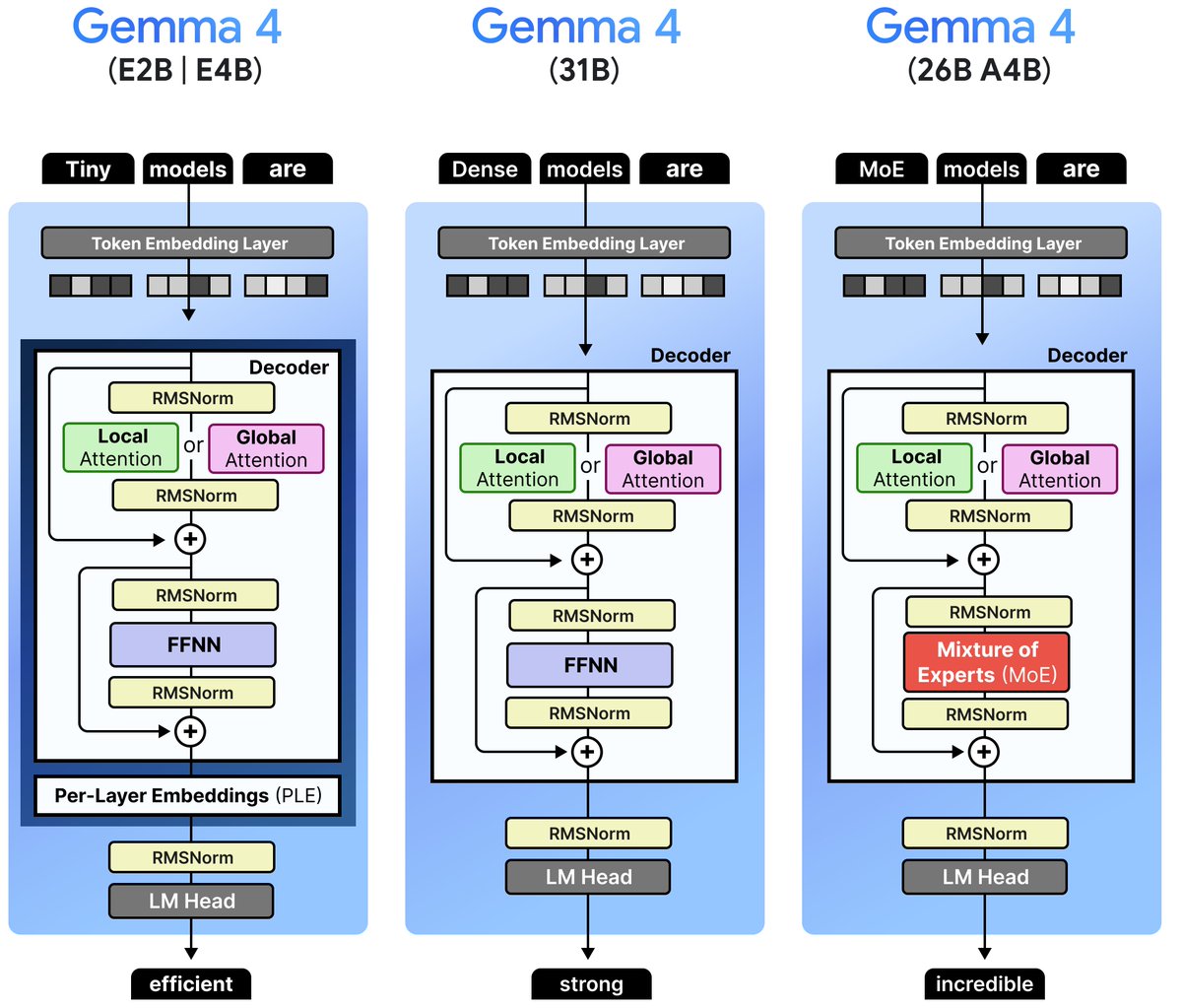

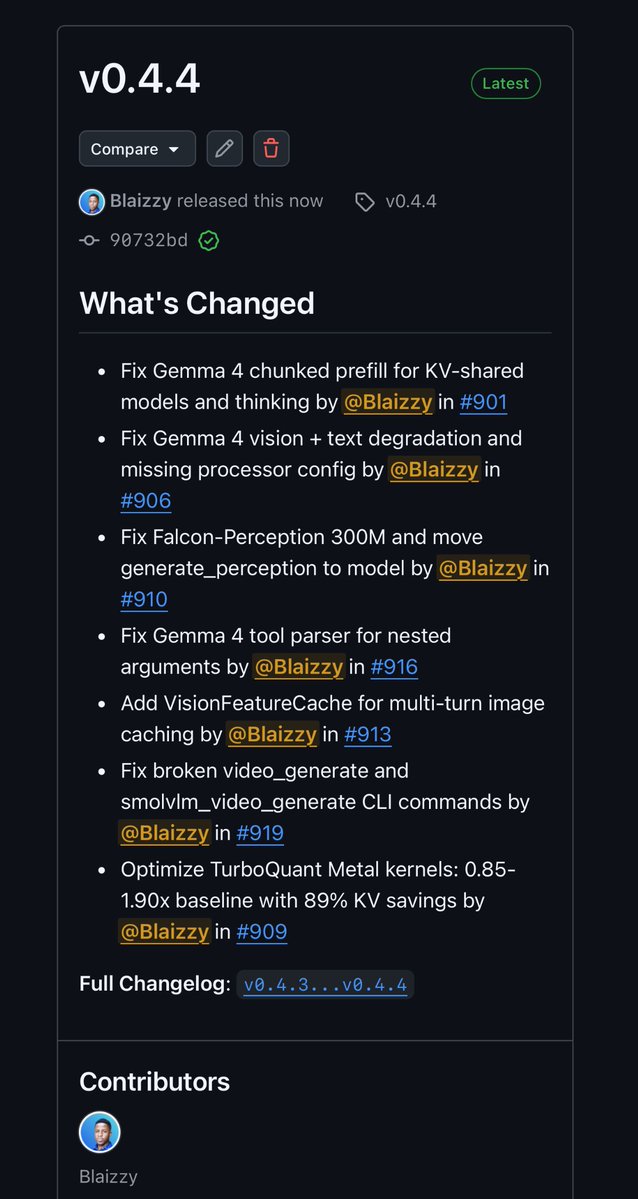

mlx-vlm v0.4.4 is out 🚀🔥

New models:
🦅 Falcon-Perception 300M by @TIIuae

Highlights:
⚡️ TurboQuant Metal kernels optimized: up to 1.90x decode speedup over baseline on longer contexts, with 89% KV cache savings.
👀 VisionFeatureCache: multi-turn image caching, so you don't re-encode the same image every turn.
🔧 Gemma 4 fixes: chunked prefill for KV-shared models & thinking, vision + text degradation, processor config, and nested tool parsing.
📹 Video CLI fixes

Get started today: > uv pip install -U mlx-vlm

Shoutout to the awesome @N8Programs for helping me spot and fix some critical yet subtle issues on Gemma 4 ❤️

Happy Easter everyone 🐣 and remember to leave us a star ⭐️ github.com/Blaizzy/mlx-vlm
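
For readers curious about the multi-turn image caching idea mentioned in the highlights (encode an image once, then reuse the features on later turns), here is a minimal sketch of the general technique. It is not mlx-vlm's actual implementation or API: `FeatureCache` and `toy_encoder` are hypothetical names made up for illustration, and the "encoder" is a stand-in for a real vision tower.

```python
import hashlib
from typing import Callable, Dict, List


class FeatureCache:
    """Hypothetical cache mapping an image's content hash to its encoded features."""

    def __init__(self, encoder: Callable[[bytes], List[float]]) -> None:
        self._encoder = encoder
        self._store: Dict[str, List[float]] = {}
        self.encoder_calls = 0  # counts how often the (expensive) encoder really runs

    def get(self, image_bytes: bytes) -> List[float]:
        # Key on the image content, so the same image sent again is a cache hit.
        key = hashlib.sha256(image_bytes).hexdigest()
        if key not in self._store:
            self.encoder_calls += 1
            self._store[key] = self._encoder(image_bytes)
        return self._store[key]


def toy_encoder(image_bytes: bytes) -> List[float]:
    # Stand-in for a vision encoder (assumption): scales the first four bytes to [0, 1].
    return [b / 255.0 for b in image_bytes[:4]]


cache = FeatureCache(toy_encoder)
image = b"\x00\x7f\xff\x10 fake image bytes"

# Three conversation turns that all refer to the same image.
for turn in range(3):
    features = cache.get(image)

print("encoder calls:", cache.encoder_calls)
```

Keying on a content hash rather than a file path means a re-uploaded copy of the same image still hits the cache; a real implementation would likely also bound memory, for example by evicting old entries.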