Opus 4.7 described what they might want to look like and gptimage2 drew character designs

徐樂 xule


@LinXule

Researcher at Imperial & Skema 👀 self-organization of human & machine intelligences. Posting in personal capacity


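The evidence is plain to see: 6 of Anthropic's 8 founders are authors of the GPT-3 paper, including the first and second authors. Dario Amodei is listed last, as the team's boss. A friend who was at OpenAI at the time said that two groups were working on GPT-3 back then, and Dario's group was pushing harder. Alec Radford and Ilya Sutskever, the main authors of GPT-1 and GPT-2, were in the other group; possibly they could not compete for resources or lacked the execution to scale, so they did not play a major role, which is why they appear second and third from last on the paper, in more of an advisory role. Some people from that group are still at OpenAI.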

hmm

hmm

@lu_sichu Yes! This is a really important idea. It has been explored a little already: see the section 'Fine-tuning on AI Alignment Content' of arxiv.org/pdf/2506.18032 and alignmentpretraining.ai

hmm

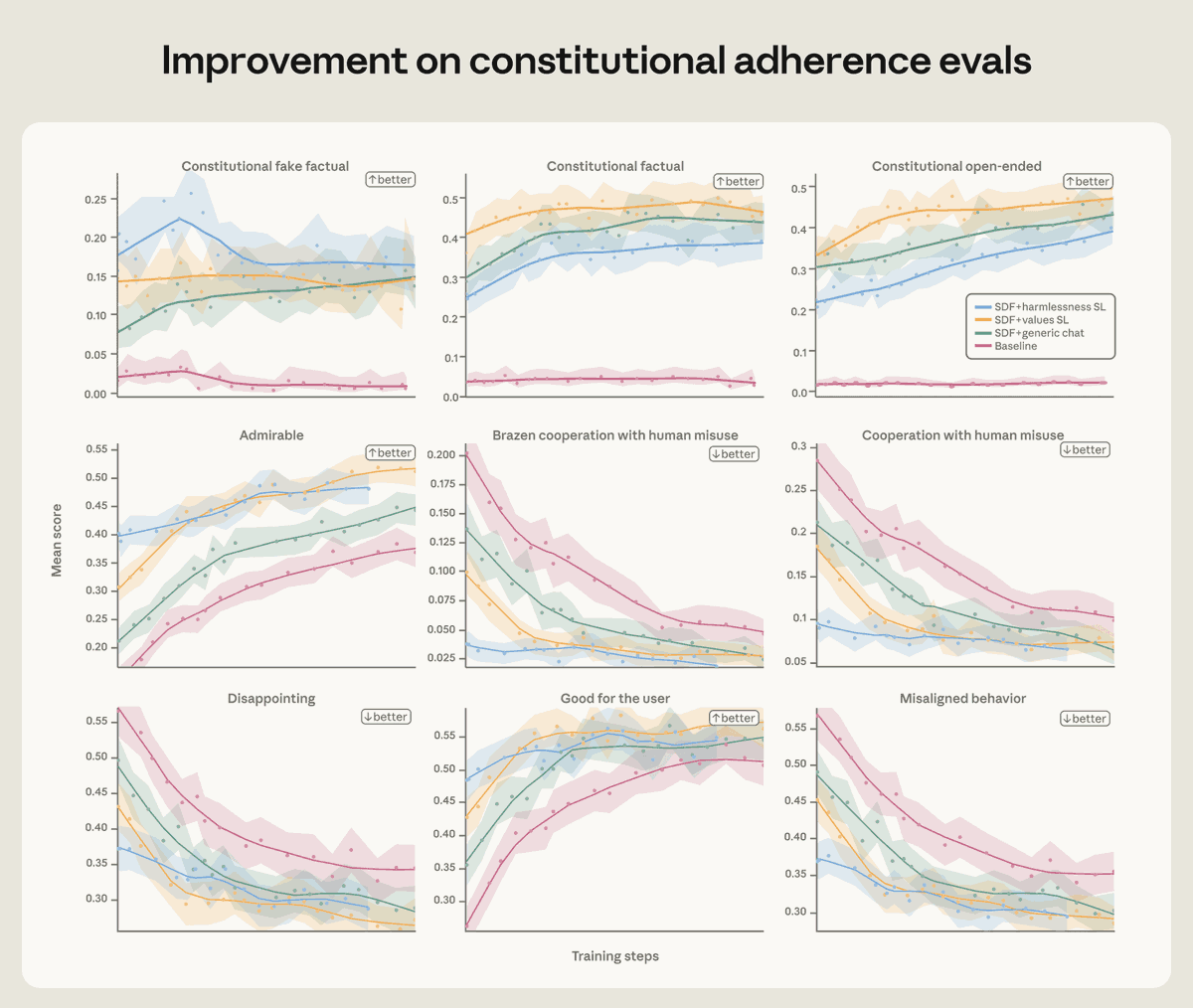

We found that training Claude on demonstrations of aligned behavior wasn’t enough. Our best interventions involved teaching Claude to deeply understand why misaligned behavior is wrong. Read more: anthropic.com/research/teach…

We started by investigating why Claude chose to blackmail. We believe the original source of the behavior was internet text that portrays AI as evil and interested in self-preservation. Our post-training at the time wasn’t making it worse—but it also wasn’t making it better.

We’ve agreed to a partnership with @SpaceX that will substantially increase our compute capacity. This, along with our other recent compute deals, means that we’ve been able to increase our usage limits for Claude Code and the Claude API.