Martin Pascua

2.8K posts

@MartinPascuaDev

Developer. Researcher. Research in Generative AI. Member of the LN Data team. River fan ♥️

🙌 Andrej Karpathy’s lab has received the first DGX Station GB300 -- a Dell Pro Max with GB300. 💚 We can't wait to see what you’ll create @karpathy! 🔗 blogs.nvidia.com/blog/gtc-2026-…

@DellTech

Sorry for the slow updates today, huge things brewing! We're going to have big news shortly, with massive changes coming (for the better) to OpenClaw for Microsoft Teams sooner rather than later! I hope to have a roadmap out to you all sometime next week. I can say that I've spoken to more than a dozen Microsoft employees who want to be involved, and we have a team of six dedicated to helping us as we sit, and I am sure that will grow. They're dogfooding OpenClaw; it isn't all talk. I was also on a call today with @steipete and many from the team, and they too want to get Microsoft Teams, and other extensions and plugins, in a better state. It is inspiring to see so many tremendously talented folks rowing in the same direction for the common good. Thank you to all the Microsofties out there and thanks to all of you for your patience! I love being the dumbest guy in the room, and working with the amazing volunteers at OpenClaw, and at Microsoft, I can assure you that's the case!

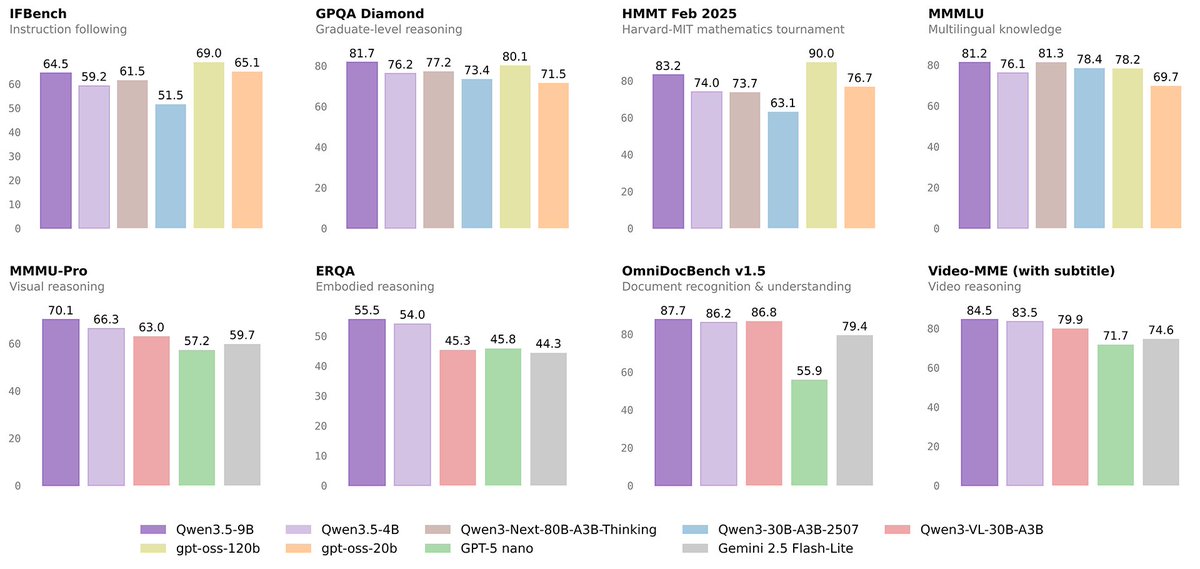

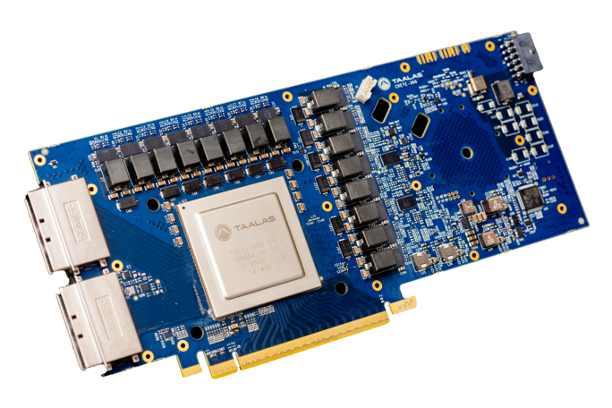

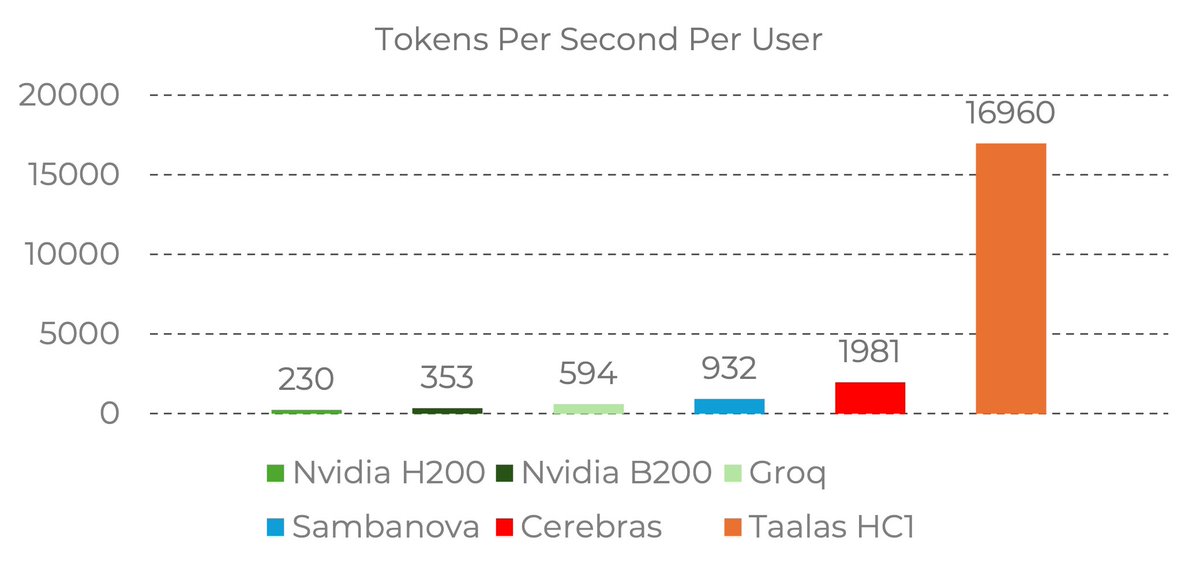

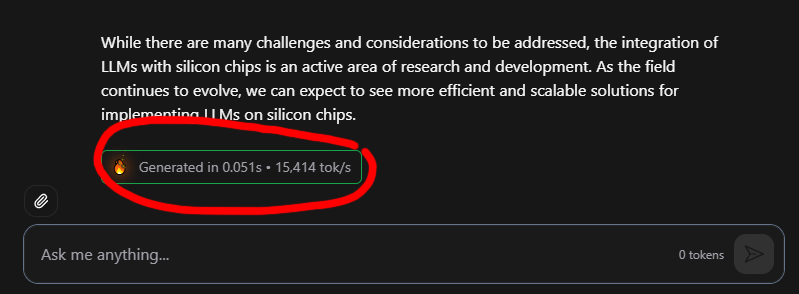

17,000 tokens per second!! Read that again! The LLM is hard-wired directly into silicon. No HBM, no liquid cooling, just raw specialized hardware. 10x faster and 20x cheaper than a B200. The "waiting for the LLM to think" era is dead. Code generates at the speed of human thought. This is the transition from brute-force GPU clusters to actual AI appliances. taalas.com/the-path-to-ub…