Mathurin Dorel

12.7K posts

@MathSRIsh

Bioinformatician, Cellular Biologist, Techbio Founder. Collecting data in a complex world @[email protected] @mathsrish.bsky.social Also #boardgames

🇰🇪 EXPOSED: France got humiliatingly kicked out of the Sahel for decades of neo-colonial plunder, military bases, and resource extraction. Now Macron is in Nairobi, smiling with Ruto at State House as they secretly sign 11 new “instruments” between Kenya and France. No public details. No debate in Parliament. No explanation to suffering Kenyans paying killer taxes. This isn’t “partnership.” This is Françafrique 2.0 — hunting for new hosts after West Africa said “enough.” Kenya is NOT for sale, Africa is NOT for sale. What exactly did Ruto sign away? Our sovereignty? Our resources? Our future? Kenyans deserve full transparency NOW.

FinalDose is building the first programmable drug platform - a single smart drug molecule that finds diseased cells by their DNA and destroys them. They're starting with all cancers. Congrats on the launch, @Jeffliu6068Liu, @sklin_lite, and @liyaohuang2! ycombinator.com/launches/QKj-f…

@MathSRIsh pmc.ncbi.nlm.nih.gov/articles/PMC12… 1-2k proteins was state of the art maybe 10 years ago

🐠 Everything we know about biology has been built on an incomplete picture. DNA tells us what a cell might do. Proteins tell us what it’s actually doing. Pumpkinseed announced their $20M Series A today (led by Future Ventures and NfX) to build the platform that reads proteins directly, for the first time.

Proteomics has always faced a fundamental constraint: you can only measure what you already know to look for. The current workhorse, mass spectrometry, requires matching protein fragments against reference databases. If a protein isn't in the database, or doesn't ionize reliably, it's invisible. Other approaches rely on fluorescent labels or antibody-based affinity methods, which introduce their own biases and blind spots. The result is a field that has spent decades generating an increasingly detailed map of a small, well-lit corner of the proteome, while biology’s most important data layer remains hidden.

This isn't a sensitivity problem. It's a category problem. Existing tools were never designed to read proteins directly de novo. They were designed to find what researchers already suspected was there. Pumpkinseed is built to find everything else.

And proteomics is harder than most people outside the field appreciate. When we account for post-translational modifications, non-canonical amino acids, and glycan decorations, there are roughly a thousand distinct chemical monomers in the proteomic alphabet, compared to the four bases of DNA.

deSIPHR (de novo Sequencing and Identification of Proteins with High-throughput Raman spectroscopy) is Pumpkinseed's proprietary nanophotonic chip platform, fabricated with semiconductor manufacturing. With over 100 million sensors per square centimeter, it reads proteins, known or unknown, letter by letter (amino acid by amino acid), without a reference catalog of proteins, and at high throughput. The result is direct, high-resolution proteomic data, including post-translational modifications, non-canonical amino acids, and single-cell detail, that mass spectrometry-based approaches cannot match.

What is Raman spectroscopy? Rather than tagging or fragmenting proteins, Raman spectroscopy reads the molecular vibrations of individual molecules. Each amino acid vibrates at a characteristic frequency, producing a unique physical signature that deSIPHR detects directly. This is physics reading biology in the most literal sense. With conventional Raman spectroscopy, only about one in ten million photons interacts with a molecule usefully, far too weak for single-molecule work. Pumpkinseed's answer is a silicon photonic chip patterned with a billion sensors per wafer. Those sensors concentrate light into volumes smaller than a single protein, amplifying Raman scattering efficiency over 10 million-fold.

And their future ventures?

"The longer-term ambition is the virtual cell, a computational model that simulates not just how proteins fold but how they interact, respond to drugs, and behave under perturbation inside a living system. AlphaFold demonstrated what structural AI can do once a sequence is known. The gap that cannot be closed is determining the sequence itself from biological samples, particularly for proteins carrying modifications absent from existing databases. Pumpkinseed is designed to supply that input layer.

'If the Human Genome Project was the data infrastructure that enabled genomic medicine, we believe the high-resolution proteomic dataset Pumpkinseed is building could be the analogous foundation for AI-driven biological discovery,' co-founder Dr. Jen Dionne says. 'In our vision, the molecular signatures driving disease, aging, and ecosystem health become fully legible. Medicine shifts from reactive to proactive. Optimal healthspan moves from aspiration to achievable reality.'"

Source: synbiobeta.com/read/pumpkinse…
• The biology mining company: Pumpkinseed.Bio
• Today’s News: pumpkinseed.bio/news/pumpkinse…
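A quick back-of-the-envelope check on two of the figures quoted in this post, as a minimal Python sketch. The function names are hypothetical and the interpretation is an assumption: the per-symbol information figure is only an upper bound that treats all monomers as equally likely, and the implied patterned area assumes the "per square centimeter" and "per wafer" sensor counts describe the same sensor density, which the post does not state.

```python
import math

# Figures as quoted in the post (rounded, marketing-level numbers).
DNA_ALPHABET = 4             # "the four bases of DNA"
PROTEIN_ALPHABET = 1000      # "roughly a thousand distinct chemical monomers"
SENSORS_PER_CM2 = 100e6      # "over 100 million sensors per square centimeter"
SENSORS_PER_WAFER = 1e9      # "a billion sensors per wafer"


def bits_per_symbol(alphabet_size: int) -> float:
    """Upper bound on information per symbol, assuming equiprobable symbols."""
    return math.log2(alphabet_size)


def implied_patterned_area_cm2(per_wafer: float, per_cm2: float) -> float:
    """Patterned area implied if both sensor counts share one density (assumption)."""
    return per_wafer / per_cm2


if __name__ == "__main__":
    dna_bits = bits_per_symbol(DNA_ALPHABET)
    protein_bits = bits_per_symbol(PROTEIN_ALPHABET)
    area = implied_patterned_area_cm2(SENSORS_PER_WAFER, SENSORS_PER_CM2)
    print(f"DNA: {dna_bits:.2f} bits per base (upper bound)")
    print(f"Protein: {protein_bits:.2f} bits per monomer (upper bound)")
    print(f"Implied patterned area: {area:.1f} cm^2 per wafer")
```

Under these assumptions, each position in the proteomic alphabet can carry roughly five times as much information as a DNA base, which is one way to read the post's claim that proteomics is the harder reading problem.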

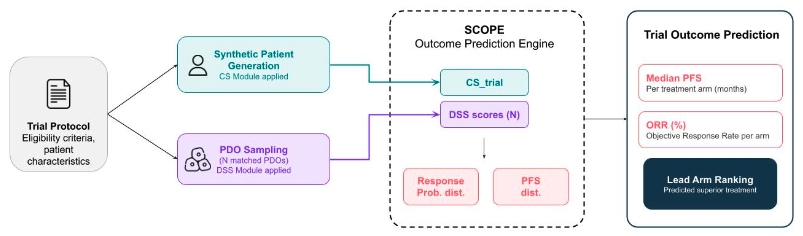

Introducing SCOPE (Screening-to-Clinical Outcome Prediction Engine): a translational platform integrating patient-derived organoid (PDO) drug screening with clinical prognostic features to forecast arm-level efficacy in oncology trials.

Is there a legit complete IND publicly available on the internet anywhere? Like the 1k page document? It would be really instructive to see what these look like.

this isn’t to say they aren’t important but there’s a *lot* of extremely interesting types of biological data outside of unconditional protein structures, sequences, and small molecules. it is good to leave the PDB bubble sometimes and explore what else is possible