HilesFiles

5.9K posts


@MichaelHiles

These are my own opinions, ✝️, @go10xts, @conduit_network, DePIN, RWA tokenization, Constitutionalist, anti-authoritarian, ΜΟΛΩΝ ΛΑΒΕ, coffee snob

Cincinnati USA Joined February 2009

4.2K Following 4.3K Followers


HilesFiles retweeted

A U.S. Marine Corps release on March 23, 2026 detailed how Lance Cpl. Eirick Schule developed a 3D-printed replacement for the MUOS antenna mast in April 2025, cutting costs from $5,644 per unit to about $10 and reducing delivery time from over 7 months to 10 hours, with 107 units produced and an estimated $600,000 saved.


HilesFiles retweeted

🚨 Holy shit… Columbia University just dropped one of the most unsettling papers on AI inference I’ve read in a long time.

They proved that the entire private AI inference industry built the wrong thing.

Prior methods: encrypt the full transformer. 280GB per query. 60-second latency. Enterprise-grade security theater.

GPT, Gemini, Qwen, and Mistral independently converged to nearly identical internal representations. One linear equation connects them.

> Sub-second inference. 1MB of communication. Same security guarantees.

> The private AI inference problem is real. Hospitals can't send patient data to OpenAI. Banks can't send transaction records to Google. Legal firms can't send case files to Anthropic. The solution the industry built: encrypt everything (every layer, every attention head, every weight) using homomorphic encryption and secure multi-party computation. The result: 280GB of encrypted communication per query. 60-second latency.

> Infrastructure costs that make production deployment practically impossible.

> Columbia University found the shortcut everyone missed. The Platonic Representation Hypothesis, the observation that large models trained on enough data tend to converge toward a shared statistical understanding of the world, turns out to be exploitable. GPT, Gemini, Qwen, Mistral, and Cohere, trained independently on different data with different architectures for different objectives, developed internal representations with CKA similarity scores between 0.595 and 0.881. That's not close.

> That's essentially the same space.

> If the spaces are the same, you don't need to encrypt the model. You learn a single affine transformation, one matrix that maps your model's internal representations into the provider's space. Encrypt that matrix.

> Send it. The provider runs one linear classification operation on encrypted data and returns the encrypted prediction. You decrypt locally. The transformer never gets encrypted. The weights never get exposed. The query never leaves your control in readable form.

> HELIX is the system they built on this insight. During training, the client encrypts their embeddings from public data and sends them to the provider, who computes the alignment map under encryption and returns it. During inference, the client applies the alignment locally, encrypts the transformed representation, and sends it. The provider applies a linear classifier homomorphically and returns the encrypted prediction.

> Multiplicative depth of one. No bootstrapping required. 128-bit security by CKKS standard.
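As a rough illustration of the alignment step described in the thread, here is a minimal plaintext sketch. It assumes the affine map is fitted by ordinary least squares on paired embeddings, which the post does not confirm. It contains no encryption at all, so it is not HELIX itself; the function names, shapes, and synthetic data are hypothetical.

```python
import numpy as np

def fit_affine_map(source, target):
    """Least-squares fit of (W, b) so that source @ W + b approximates target."""
    ones = np.ones((source.shape[0], 1))
    augmented = np.hstack([source, ones])  # extra column of ones learns the bias
    solution, *_ = np.linalg.lstsq(augmented, target, rcond=None)
    return solution[:-1], solution[-1]     # W has shape (d_src, d_tgt), b has shape (d_tgt,)

def apply_affine_map(x, W, b):
    """Map client-side representations into the provider's space."""
    return x @ W + b

# Synthetic stand-ins for paired embeddings of the same public inputs (assumption).
rng = np.random.default_rng(0)
client_emb = rng.normal(size=(200, 8))
true_W = rng.normal(size=(8, 6))
true_b = rng.normal(size=6)
provider_emb = client_emb @ true_W + true_b  # exactly affine by construction

W, b = fit_affine_map(client_emb, provider_emb)
aligned = apply_affine_map(client_emb, W, b)
max_error = float(np.max(np.abs(aligned - provider_emb)))

# Provider side: a single linear classifier applied to the aligned vectors.
# In the system the thread describes, this step would run on encrypted data.
clf_weights = rng.normal(size=(6, 3))
predictions = (aligned @ clf_weights).argmax(axis=1)
```

On real models the fit would only be approximate, and how well it works would depend on how similar the two representation spaces actually are.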

→ Prior methods communication cost: 280.99GB per query (Iron), 25.74GB (BOLT), 68.6GB (MPCFormer)

→ HELIX communication cost: less than 1MB per query

→ Prior methods latency: 20-60+ seconds per query

→ HELIX latency: sub-second

→ Cross-model CKA similarity: 0.595 to 0.881 across GPT, Gemini, Qwen, Mistral, Cohere

→ Text generation quality: 60-70% of single-model baseline for high-compatibility pairs

→ Tokenizer compatibility predicts generation quality with r=0.898

The finding that should end careers: models above 4B parameters with tokenizer compatibility above 0.7 exact match rate can generate coherent text across model families using only a linear transformation.

Qwen encoding. Llama decoding. No fine-tuning. No weight sharing. No data transfer. Just matrix multiplication applied to the boundary between two independently trained systems that accidentally became the same thing.
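The CKA scores quoted in the thread can be made concrete with a small sketch. The post does not say which CKA variant was used; the code below assumes linear CKA (centered kernel alignment with a linear kernel) and uses synthetic data, so the numbers it produces say nothing about the models named above.

```python
import numpy as np

def linear_cka(x, y):
    """Linear CKA between two (n_samples, dim) representation matrices."""
    x = x - x.mean(axis=0, keepdims=True)  # center each feature
    y = y - y.mean(axis=0, keepdims=True)
    cross = np.linalg.norm(y.T @ x, ord="fro") ** 2
    norm_x = np.linalg.norm(x.T @ x, ord="fro")
    norm_y = np.linalg.norm(y.T @ y, ord="fro")
    return float(cross / (norm_x * norm_y))

rng = np.random.default_rng(0)
base = rng.normal(size=(300, 16))

# A rotated and rescaled copy: the same geometry in different coordinates.
rotation, _ = np.linalg.qr(rng.normal(size=(16, 16)))
rotated = (base @ rotation) * 3.0

# An independent random matrix: no shared structure.
unrelated = rng.normal(size=(300, 16))

score_same = linear_cka(base, rotated)
score_unrelated = linear_cka(base, unrelated)
```

Linear CKA is invariant to rotation and uniform scaling, so the rotated copy scores essentially 1.0 while the unrelated matrix scores far lower.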


HilesFiles retweeted

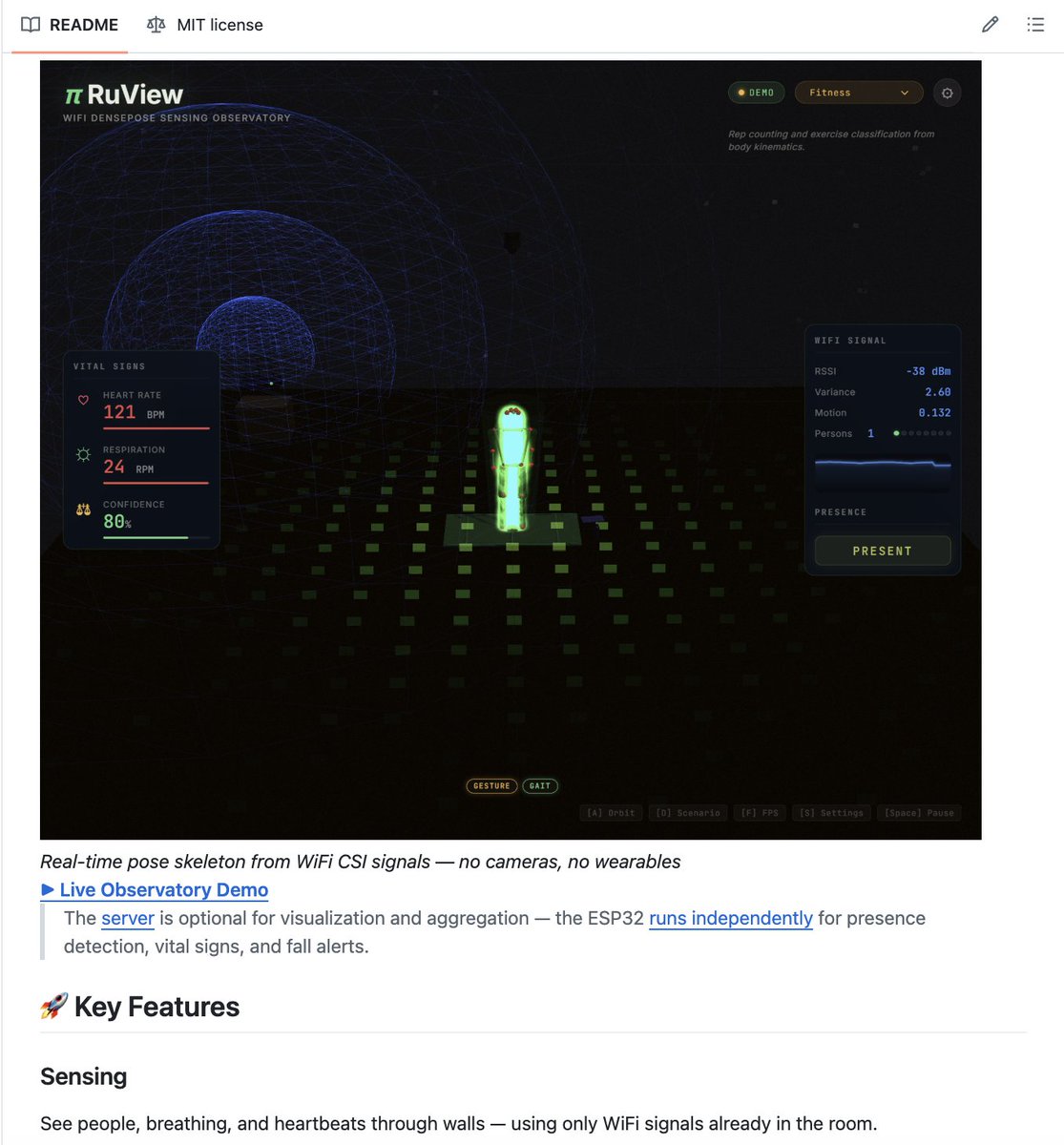

🚨BREAKING: A developer on GitHub just turned your WiFi router into a full-body surveillance system.

It's called RuView.

It uses the WiFi signals already in your room to detect human poses, track breathing, measure heart rate, and see through walls.

Not a concept. Not a research paper. Working code you can run right now.

Here's what this thing actually does:

→ Tracks full 17-point body pose using only WiFi signals

→ Detects breathing rate (6-30 BPM) without touching anyone

→ Measures heart rate (40-120 BPM) from across the room

→ Sees through walls, furniture, and debris up to 5 meters deep

→ Tracks multiple people simultaneously with zero identity swaps

→ Self-learns from raw WiFi data. No labeled datasets needed

Here's how it works:

WiFi signals pass through your room and hit the human body. The body scatters those signals differently based on position, breathing, even heartbeat. RuView reads that scattering pattern and reconstructs everything.

A mesh of 4 ESP32 nodes ($48 total) gives you 360-degree coverage with 12 measurement links, 20 Hz updates, and sub-30mm precision.

Here's the wildest part:

It has a disaster response mode called WiFi-Mat. It detects survivors trapped under rubble through concrete walls, classifies injury severity using START triage protocol, and estimates 3D position. The kind of tool that saves lives after earthquakes.

The Rust implementation processes 54,000 frames per second. That's 810x faster than the Python version. The entire Docker image is 132 MB.

The AI model fits in 55 KB of memory. Runs on an $8 ESP32 chip.

Train once, deploy in any room. No retraining. No recalibration.

1,100+ tests. 15 Rust crates on crates.io. SHA-256 verified capability audit.

100% Open Source.


3 out of 3 trips on @AmericanAir in March delayed. This was the last one booked on them in advance.

Cya AA my last flight on you.


@juliecbarrett Just run your own infrastructure. Problem solved.


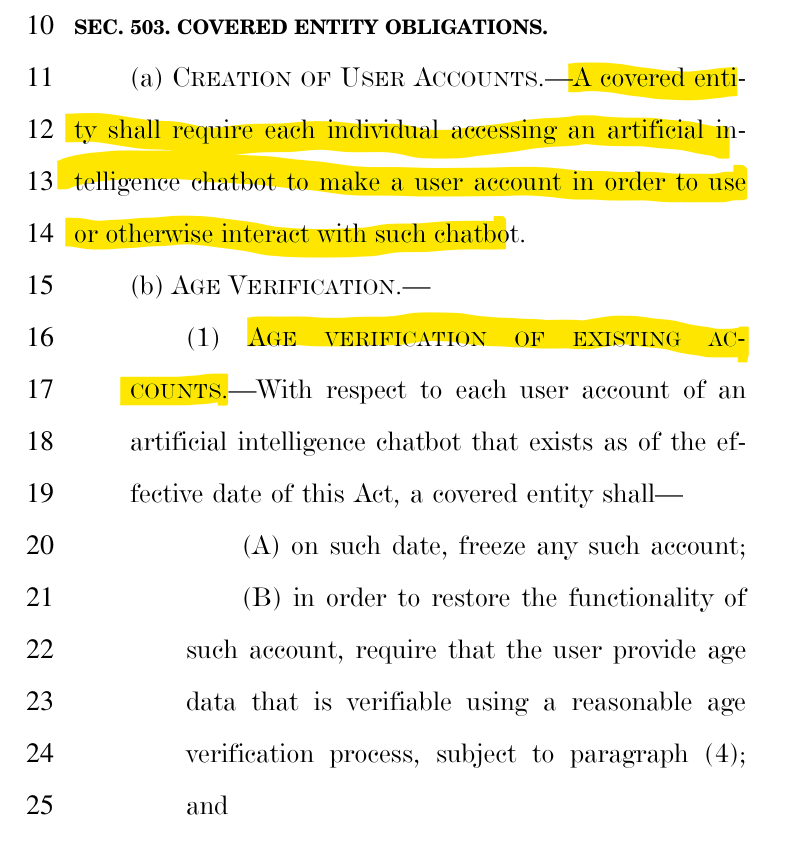

🚨America's newest Digital ID proposal just dropped in the US Senate.

Republican Senator Marsha Blackburn has introduced a new AI bill that bundles 17 different policies into one massive, 291-page bill.

This is just like all these other tech bills we've been seeing - a massive Digital ID framework - with universal age verification being the key to access to the tools.

The bill includes mandatory age verification for every existing account and freezing accounts until users verify their age.


HilesFiles retweeted

The CIA doesn't want you to find this GitHub repo 👀

It's called Shadowbroker and it aggregates every open-source military signal on Earth into one dashboard.

→ US Navy carrier strike groups tracked live

→ Spy satellites color-coded by mission type

→ GPS jamming zones with severity overlays

→ 25,000+ ships. 2,000+ CCTV feeds globally

Right-click any point on Earth. Get a full intelligence dossier.

100% Open Source.

Link in comments.


Sad sad sad.

Elections have consequences

bizjournals.com/cincinnati/new…

@still_hustling


@dailyprandium @CR1337 Because flowing water turns a generator turbine?


@CR1337 Machines require electric power. What are the 50 hand tools that can rebuild everything from scratch? If from scratch were called for, the machines would likely be useless, unless someone rigged solar generators. There would likely be no centralized or fossil energy sources.


HilesFiles retweeted

@berniemoreno Why is our Republican legislature and so-called Republican Governor enabling it?


HilesFiles retweeted

Dear @SenTedCruz,

Jesus Christ is in fact king. King of kings. Lord of lords. The Messiah.

Blessings,

@WarrenDavidson

cc: Jerusalem, Judea, Samaria, and the ends of the earth

(Acts 1:8)


HilesFiles retweeted

This is wild.

143 million people thought they were catching Pokémon. They were actually building one of the largest real-world visual datasets in AI history.

Niantic just disclosed that photos and AR scans collected through Pokémon Go have produced a dataset of over 30 billion real-world images. The company is now using that data to power visual navigation AI for delivery robots.

Players didn't just walk around with their phones. They scanned landmarks, storefronts, parks, and sidewalks from every angle, at every time of day, in lighting and weather conditions that staged photography would never capture. They documented the physical world at a scale no mapping company with a fleet of vehicles could have replicated on the same timeline or budget.

Niantic collected this systematically, data point by data point, across eight years, while users thought the only thing at stake was catching a rare Charizard.

The most valuable AI training datasets in the world aren't being assembled in data centers. They're being built by people who have no idea they're building them.

NewsForce @Newsforce

POKÉMON GO PLAYERS TRAINED 30 BILLION IMAGE AI MAP

Niantic says photos and scans collected through Pokémon Go and its AR apps have produced a massive dataset of more than 30 billion real-world images. The company is now using that data to power visual navigation for delivery robots, letting them identify exact locations on city streets without relying on GPS. Source: NewsForce
