Mike

990 posts

@MichaelP2049

vibe coding to build something useful

Joined March 2012

626 Following, 113 Followers

quick context on the problem 🙏

i have a loop setup with claude code and codex:

it builds something, takes a screenshot on its own, compares it to the figma reference, and keeps iterating until it's pixel perfect.

but it never works

the agent would screenshot its own output and somehow not see a completely broken button.

i'm sitting there staring at it! but until i say "the button is broken" it wouldn't see it.

i was like how are you so smart and dumb at the same time?

that's when it clicked for me.

the problem isn't that the model is dumb. the problem is that when you send a screenshot, the AI just... looks at it and describes the image in a general sense.

so you spend 3-5 rounds saying "no, the padding is wrong." "no, look at the nav again." "no, the border radius doesn't match."

i started digging into how this is actually solved at companies that do it well.

turns out replit, lovable, microsoft, all of them solved this already. they all run structured analysis first. spatial coordinates, component hierarchies, design tokens.

then the model gets that as context.

but this intelligence is locked inside their platforms. if you're using claude code or codex or anything general purpose, you get none of it.

so i built clearshot 📸

open source skill for claude code/codex.

every time you or the agent takes a screenshot, it doesn't just "look" at it. it tells the model exactly what's there.

padding values in pixels. not "blue color" but the actual hex code. not "some spacing" but 8px gap between the label and the input. border radius, font weight, shadow values. everything the model was guessing at before, it now knows.
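to make that concrete, here's a rough sketch of what "telling the model exactly what's there" could look like. this is not clearshot's actual code or output format: `ElementSpec`, its fields, and `describe` are hypothetical names made up for illustration, and the measurements are hard-coded rather than extracted from a real screenshot.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class ElementSpec:
    """Measured facts about one UI element (all field names are hypothetical)."""
    name: str
    box: Tuple[int, int, int, int]      # x, y, width, height in px
    padding: Tuple[int, int, int, int]  # top, right, bottom, left in px
    background: str                     # hex color instead of "blue"
    border_radius: int                  # px
    font_weight: int


def describe(spec: ElementSpec) -> str:
    """Render one element as a plain-text line the model can read as context."""
    x, y, w, h = spec.box
    top, right, bottom, left = spec.padding
    return (
        f"{spec.name}: at ({x}, {y}), size {w}x{h}px, "
        f"padding {top}/{right}/{bottom}/{left}px, "
        f"background {spec.background}, radius {spec.border_radius}px, "
        f"font-weight {spec.font_weight}"
    )


# Illustrative values only; a real tool would measure these from the image.
button = ElementSpec("submit button", (24, 312, 120, 40), (8, 16, 8, 16), "#2563EB", 6, 600)
print(describe(button))
```

the point of the sketch is just the shape of the data: exact numbers and hex codes go into the prompt, so the model compares values instead of guessing from pixels.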

very deterministic but...

there's also a qualitative path. a taste layer. does the hierarchy feel clear. is the visual weight distributed right. is there enough breathing room. does this feel like a premium product or a hackathon project.

because sometimes the question isn't "what are the pixel values" but "does this feel right." clearshot handles both.

one thing i was careful about:

it shouldn't fire when it's not needed. if you send a screenshot of a chart, or a meme, or an architecture diagram, and you're not building frontend, the skill stays quiet. it only activates when the image is a UI and the conversation is about building or critiquing that UI.
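a minimal sketch of that gate, assuming the image has already been labeled by some upstream step. `should_activate`, the `image_kind` labels, and the keyword list are all hypothetical, and keyword matching is a crude stand-in for however the skill really decides what the conversation is about.

```python
import re

# Hypothetical hint words suggesting the conversation is about frontend work.
FRONTEND_HINTS = {"button", "padding", "layout", "css", "component", "figma", "frontend"}


def should_activate(image_kind: str, conversation: str) -> bool:
    """Fire only when the image is a UI AND the talk is about building or critiquing it."""
    is_ui = image_kind == "ui"
    # Match whole words so "build" does not accidentally match a short hint.
    words = set(re.findall(r"[a-z]+", conversation.lower()))
    about_frontend = bool(words & FRONTEND_HINTS)
    return is_ui and about_frontend


print(should_activate("ui", "the padding on this button is wrong"))
print(should_activate("chart", "can you fix the padding?"))
print(should_activate("ui", "what a funny meme"))
```

both conditions have to hold, so a chart in a frontend conversation and a UI screenshot in an unrelated conversation both leave the skill quiet.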

and within the analysis itself, there are exit paths at every step. if a quick spatial scan is enough, it stops there. your agent isn't burning tokens running a full 5-step pipeline when you just asked "does this look right."
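the exit-path idea can be sketched as a list of steps where each step reports whether its result is already enough. the step names and the "is it enough" rule below are invented for illustration (only two steps are shown, not the real five), so treat this as the pattern, not the skill's actual pipeline.

```python
from typing import Callable, List, Tuple

# A step takes the user's question and returns (finding, is_enough).
Step = Callable[[str], Tuple[str, bool]]


def run_pipeline(question: str, steps: List[Step]) -> List[str]:
    """Run steps in order and stop as soon as one says its result is enough."""
    findings: List[str] = []
    for step in steps:
        finding, is_enough = step(question)
        findings.append(finding)
        if is_enough:
            break  # exit path: skip the remaining, more expensive steps
    return findings


def spatial_scan(question: str) -> Tuple[str, bool]:
    # Hypothetical rule: a quick scan is enough for a "does this look right" question.
    return "spatial scan done", "look right" in question.lower()


def token_extraction(question: str) -> Tuple[str, bool]:
    # Hypothetical deeper step that never short-circuits.
    return "design tokens extracted", False


steps = [spatial_scan, token_extraction]
print(run_pipeline("does this look right?", steps))
print(run_pipeline("match the figma reference exactly", steps))
```

the first call stops after the scan, the second runs every step, which is where the token savings would come from.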

this is the first version. will keep iterating.

clearshot is open source and free.

star it if you think AI needs to get better at seeing your UI.

research this builds on:

- microsoft omniparser

- dcgen

- google screenai

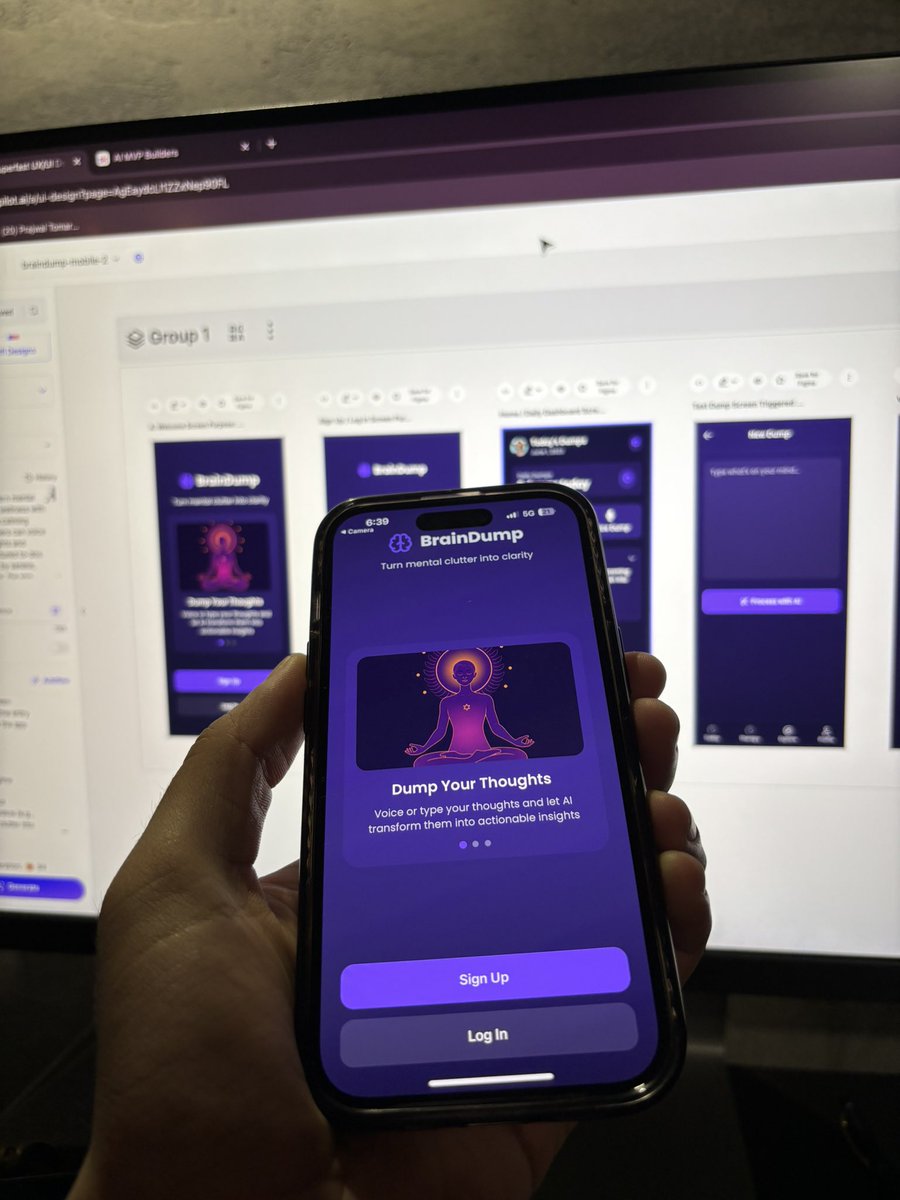

@PrajwalTomar_ Having problems with Stitch, as it hallucinates a lot with different button styling and menu items. Check the screenshot for more detail: buttons are off and the menu bar differs from screen to screen. Any way of tackling this? Figma Make, on the other hand, provides a consistent style.

This design was made inside Google Stitch in under 5 minutes.

ONE prompt. That's it.

If you're skipping the design phase for your MVP because it's too slow or expensive, this is a much faster way.

Stop wasting weeks on Figma when you can ship clean designs in minutes.

Prajwal Tomar@PrajwalTomar_

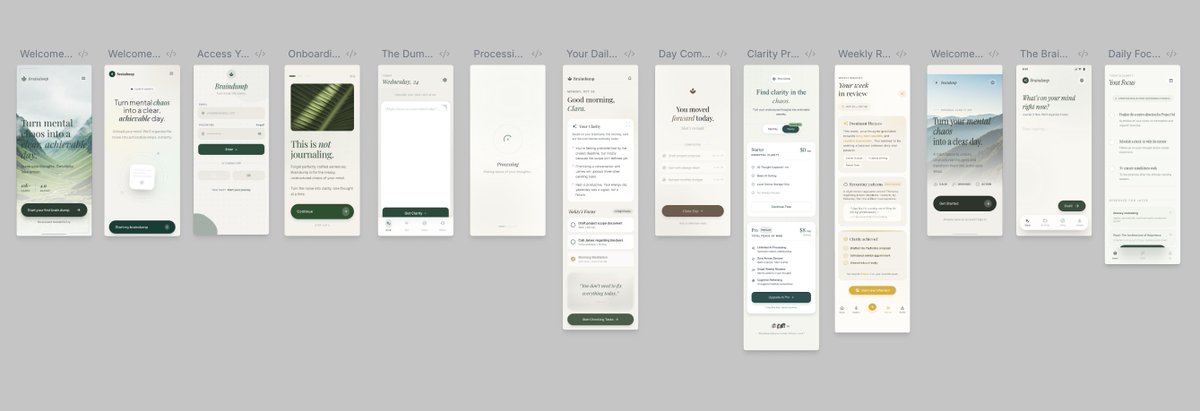

Google's AI building stack is HERE. I spent a week testing Stitch + AntiGravity on client projects. The design iteration speed is CRAZY fast. Here's my honest take on what actually works 👇

After generating $250K (last 2 months) I built a playbook for @lovable apps—and I’m giving it away.

In just two months, we cracked the code to building apps with AI.

I’ve distilled everything we learned into this single document.

Comment "Build" and drop a follow. I’ll DM it to you.

P.S. This will likely blow up, so give me some time to reply.

@rajivayyangar @ProductHunt Become a better lover, friend, parent or colleague.

Visualize, track, and grow your most important relationships.

I’m the @ProductHunt CEO, I’ve launched 7 times on PH, and helped countless friends prep their launches. The most common mistake I see? a confusing tagline.

Launching on @ProductHunt soon? Tell me your tagline and I’ll fix it for you :)

Mike reposted

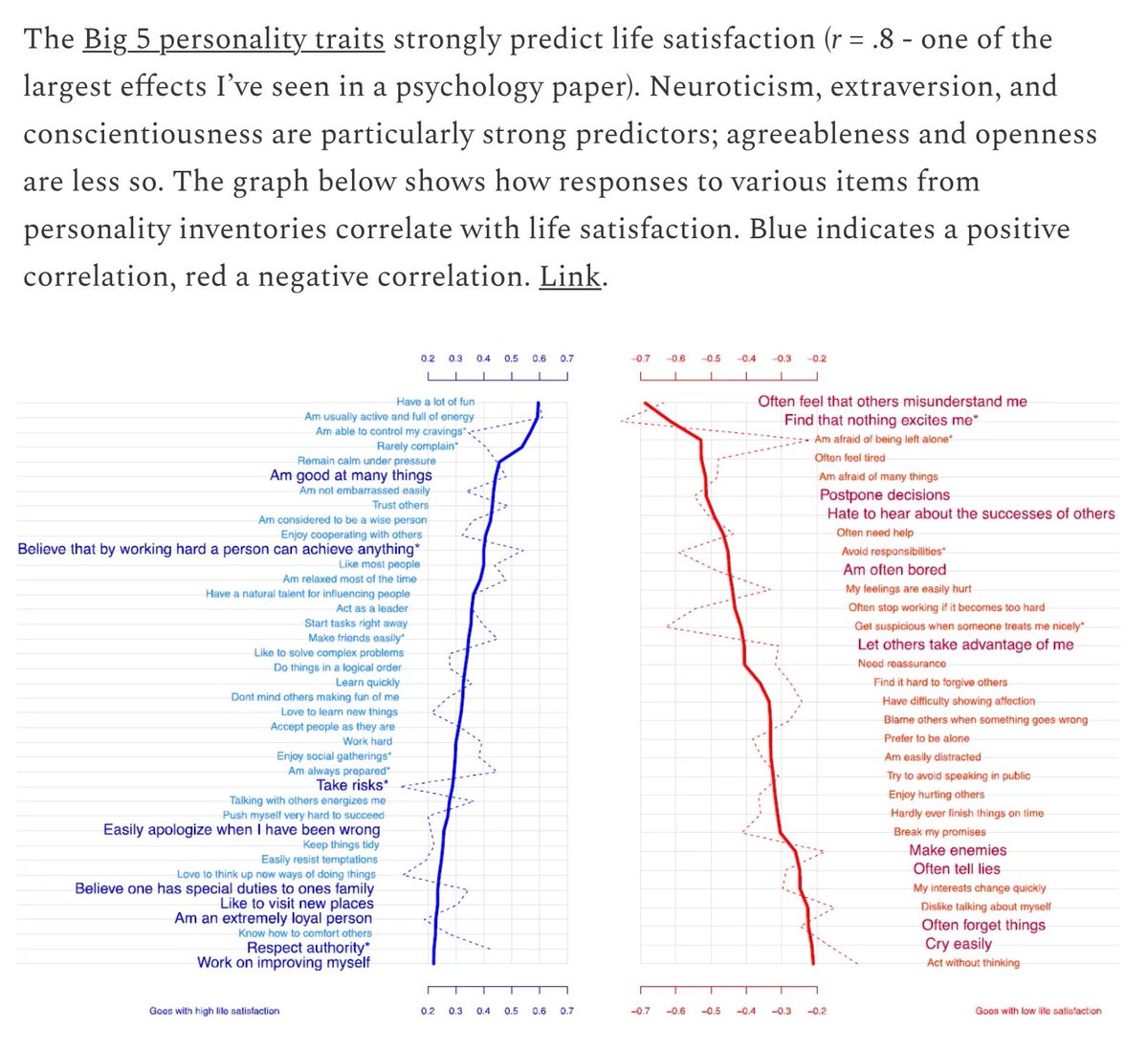

The Big 5 personality traits strongly predict life satisfaction (r = .8 - one of the largest effects I’ve seen in a psychology paper). stevestewartwilliams.com/p/5-new-findin…

Mike reposted

@AlexHormozi The challenges:

Tattooing is an art, machines must replicate artists' skills. Tattoos involve sensation and individuality.

Safety, skin variability, ink absorption

@TheCinesthetic That moment in "The Sixth Sense" when Dr. Crowe gets it... 🤯💡 Total game-changer!

Some news:

After 2.2yrs of hustling, building in public, and putting in a lot of hrs, I'm delighted to announce that

@shoutoutso_ has been acquired by Rocket Gems, a UK-based product studio founded by @ramykhuffash 🍾

Let me take you to where it all started 🧵

Mike reposted

@DexterLabData something like that 🤣 I can't sleep thinking of what we can make available via API 😁

Mike reposted