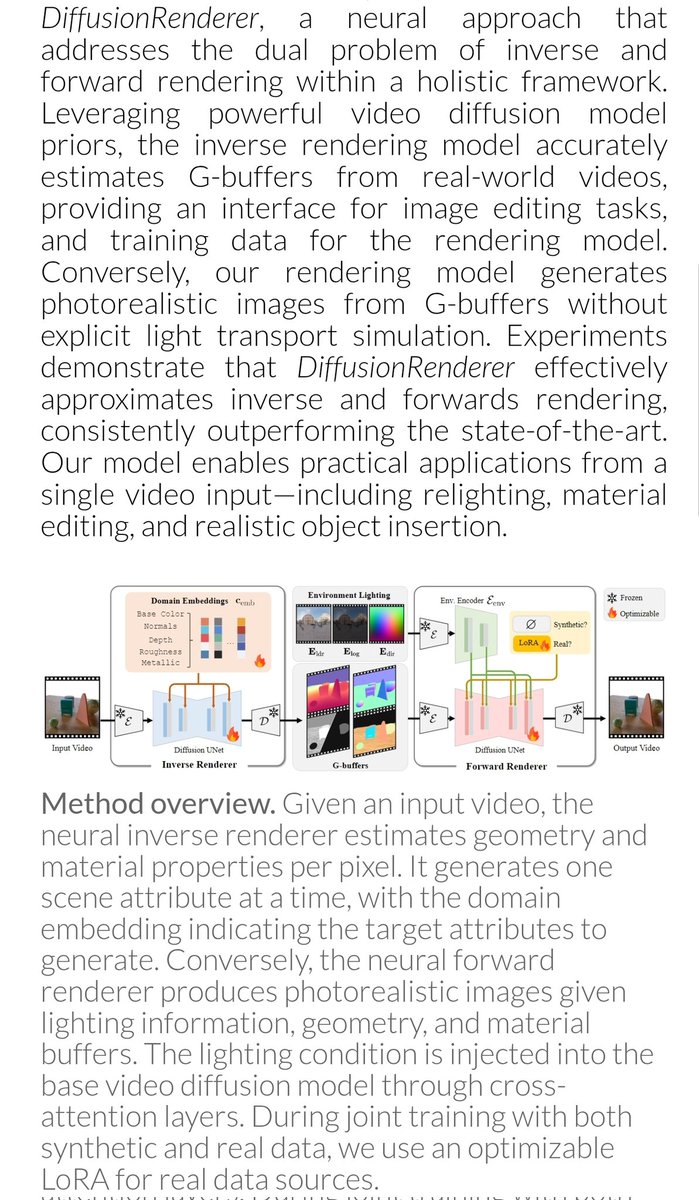

🚨 CG Paper, EG 2025

As scenes & lighting in games grow in complexity, we introduce Neural Incident Radiance Cache (NIRC), a real-time, online-trainable cache that:

🚄 Costs just ~1 ms per neural sample at 1080p
☘️ Decreases Monte Carlo (MC) variance
🥳 Saves on bounces

youtube.com/watch?v=Y791Sl…