Mockapapella

807 posts

@Mockapapella

10x Vibe Programmer. 0.1x Vibe Debugger. Please predict the next token responsibly.

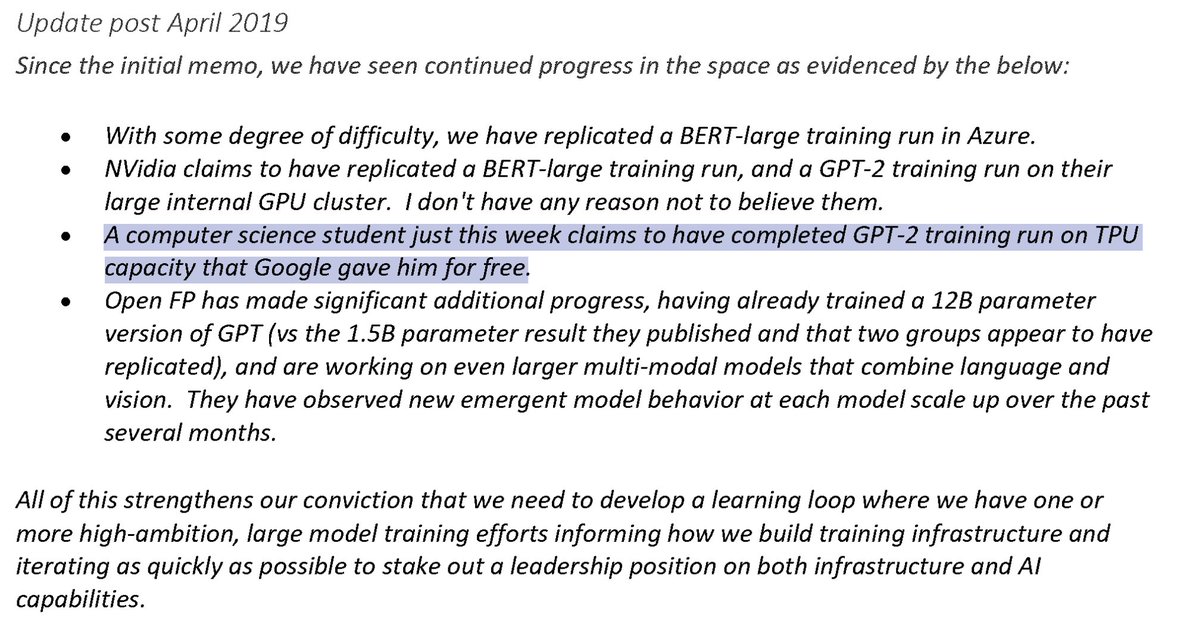

Satya Nadella on funding OpenAI July 13, 2022

‼️🚨 MAJOR IMPACT: AI just found an 18-year-old NGINX critical remote code execution vulnerability. It has been disclosed on GitHub including PoC code.

- Affects NGINX 0.6.27 through 1.30.0
- Triggered via the rewrite and set directives in config
- Update NGINX ASAP
- NGINX is a widely used HTTP web server, be sure to check its prevalence in other products

Solution incoming…

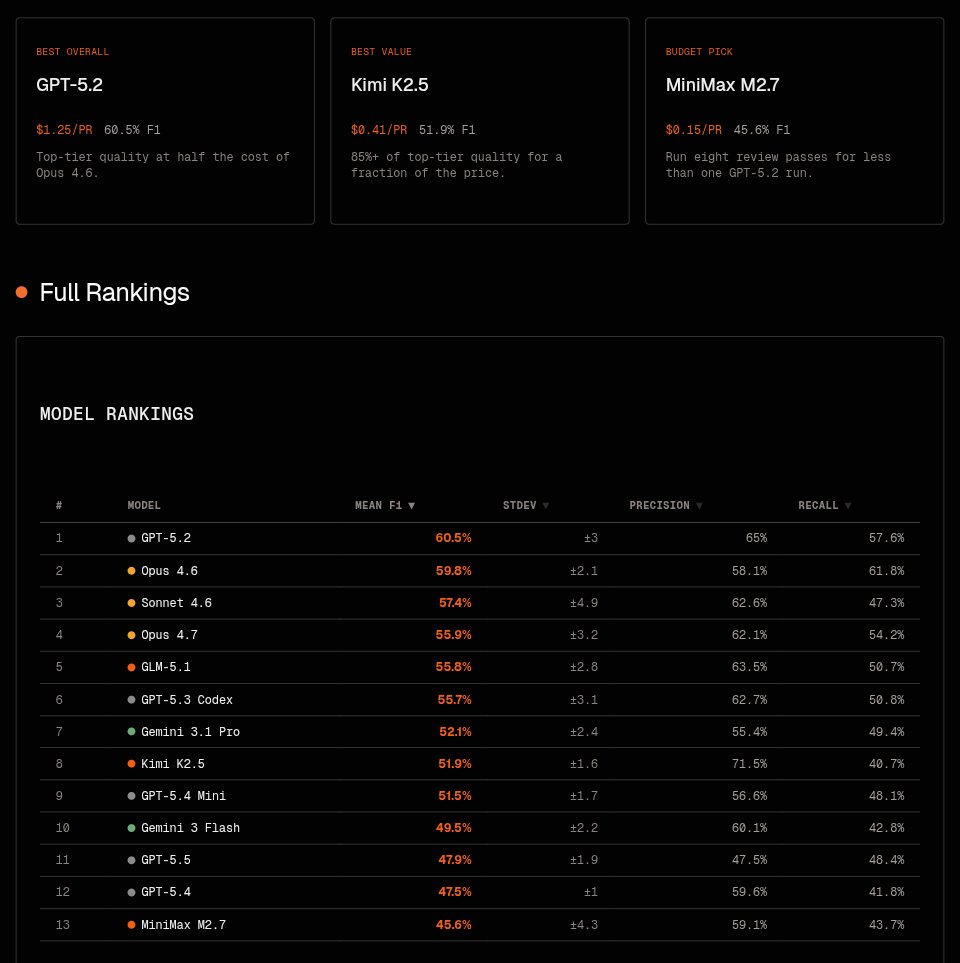

According to new data from Ramp, Anthropic has passed OpenAI in business adoption for the first time. 'Adoption of Anthropic rose 3.8% in April to 34.4% of businesses. OpenAI adoption fell 2.9% to 32.3%. Overall AI adoption rose 0.2 percentage points to 50.6%.'

New experiment: Told Codex "/goal make me $5 and do something you’re really good at". Its first inclination was to make a landing page offering a code review for $5. I do not have high hopes lol