Niko E.


> but if there’s a third strike, I’m switching back to 5.4. I was wrong; let me explain why. GPT-5.5 is not a minor version bump of the same model. GPT-5.5 is based on a new, fully retrained base model. Yes, OpenAI is bad at naming and versioning. We know. But the new base model changes how I think about the regressions. Some things got worse. Some behaviors are sharper than they should be. Some workflows need new guardrails. But switching back to 5.4 would only let me dodge the learning curve. The real work is understanding the new model: where it overreaches, where it needs tighter instructions, where old workflows break, and where it is genuinely better. Because 5.6 or 6 will not be “5.4, but improved.” It will build on this new foundation. So I’d rather learn the new operating model now than cling to the old one until it disappears.

Ok how tf do I review this PR?

Realizing a 30-year mortgage doesn't actually mean 30 years:
- 1 extra monthly payment per year can cut 5 years off
- 2 extra payments per year can cut about 8 years off
- 3 extra payments per year can cut about 11 years off

Bank won't tell you this
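How many years extra payments actually save depends on the interest rate, the balance, and when the extra money is applied. A minimal Python sketch for checking your own numbers, assuming (hypothetically, not from the post) a $400,000 loan at 6.5% fixed, compounded monthly, with each "extra payment" being one additional regular payment applied as a lump sum to principal at the end of each year:

```python
"""Sketch: how extra payments shorten a fixed-rate mortgage.

Hypothetical assumptions: $400,000 principal, 6.5% fixed annual rate
compounded monthly, and each extra payment is one additional regular
monthly payment applied to principal at the end of each year.
"""


def monthly_payment(principal: float, annual_rate: float, term_years: int) -> float:
    """Standard fixed-rate amortization payment."""
    r = annual_rate / 12
    n = term_years * 12
    return principal * r / (1 - (1 + r) ** -n)


def months_to_payoff(principal: float, annual_rate: float,
                     term_years: int = 30, extra_per_year: int = 0) -> int:
    """Simulate the loan month by month and return the payoff month."""
    r = annual_rate / 12
    payment = monthly_payment(principal, annual_rate, term_years)
    balance = principal
    month = 0
    # Stop once less than one cent remains; this also absorbs
    # floating-point residue after the final scheduled payment.
    while balance > 0.01:
        month += 1
        balance += balance * r   # accrue one month of interest
        balance -= payment       # regular scheduled payment
        if month % 12 == 0:
            # Hypothetical prepayment: extra whole payments at year end.
            balance -= extra_per_year * payment
    return month


if __name__ == "__main__":
    base = months_to_payoff(400_000, 0.065)
    for extra in range(4):
        m = months_to_payoff(400_000, 0.065, extra_per_year=extra)
        print(f"{extra} extra/yr: {m / 12:.1f} years, saves {(base - m) / 12:.1f} years")
```

Swap in your own principal and rate. In this model, lower rates mean fewer years saved per extra payment, so the savings for your loan may land above or below the figures in the post.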

Starting to notice that even with /grill-me, Opus 4.7 w/ Claude Code jumps straight to implementation 😡 Just WAIT until we're aligned, silly harness

@davis7 For everything?

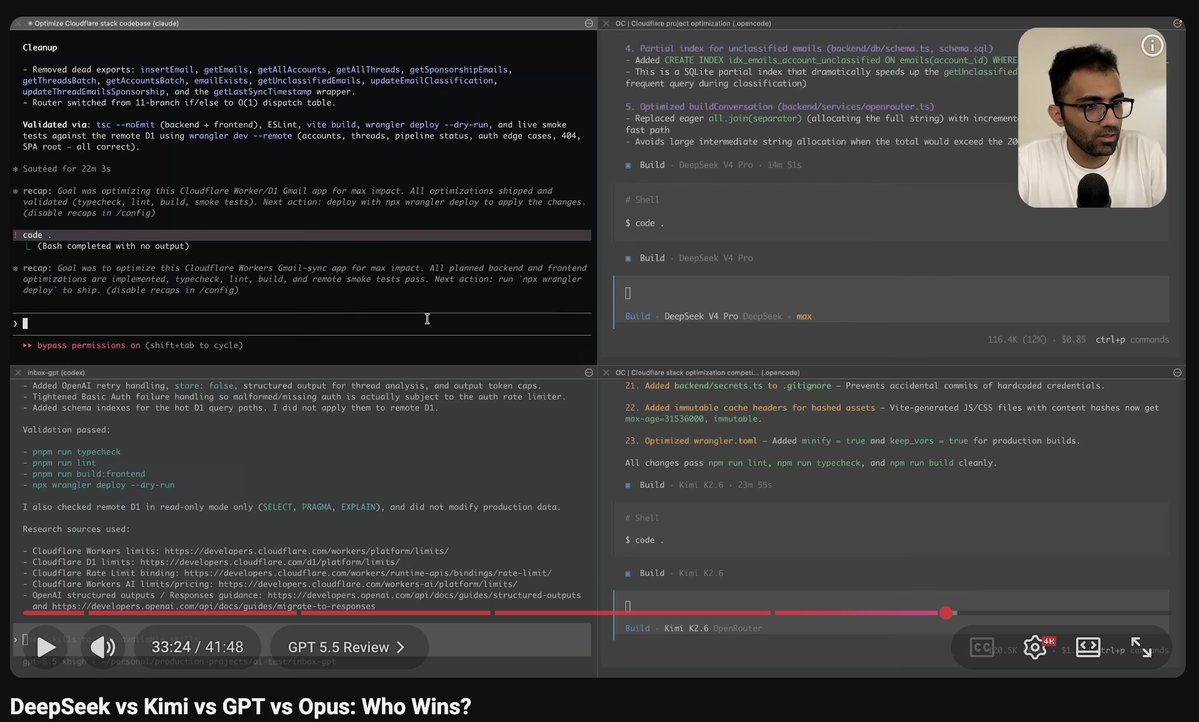

Added to prinzbench:
- GPT-5.5 Pro (Extended)
- GPT-5.5 Thinking (Heavy)
- Opus 4.7
- Meta Muse Spark

Overall impressions from testing the models:

1. GPT-5.5 Pro scored slightly (3 points) better than GPT-5.4 Pro, including a solid improvement in Legal Research (by 4 points) and a slight decrease in Search (by 1 point). Overall score: 82/99. As noted elsewhere, this model is *significantly* faster than GPT-5.4 Pro; a question that took GPT-5.4 Pro ~30 minutes to answer takes GPT-5.5 Pro ~8 minutes. It's a good model! We have now reached the point where I am surprised if it does not answer a question correctly.
2. GPT-5.5 Thinking (Heavy) is the star of the show, scoring a full 5 points higher than GPT-5.4 (xhigh) and a full 6 points higher than GPT-5.4 Thinking (Heavy). A big jump in Legal Research (+6 points vs. GPT-5.4 (xhigh)) is once again offset by a slight decrease in Search (-1 point vs. GPT-5.4 (xhigh)). Overall score: 74/99. As with Pro, this model is *significantly* faster than GPT-5.4 Thinking (Heavy); a question that took GPT-5.4 ~8-10 minutes to answer takes GPT-5.5 Thinking ~2 minutes.
3. Opus 4.7 started off really well, and at one point I even thought it might match the performance of Gemini 3 Pro, but... it trailed off in the end. Overall score: 25/99. This is a significantly better performance than any other Anthropic model has achieved on my benchmark to date (e.g., 6 points higher than Opus 4.6), but Opus 4.7 still trails many other models released over the past 6 months by a wide margin. On the bright side, the model's Search score (4/24) is much better than the 1/24 or 0/24 I typically get from Anthropic models. Some further improvement in search capabilities might unlock performance roughly equivalent to Gemini 3 Pro's for this model.
4. Meta Muse Spark achieved a very unspectacular score of 31/99: not quite as good as Gemini 3, not quite as good as Kimi K-2.5 Thinking. This model is nothing to write home about.

More details in the link below. Please see footnote 1 in particular, which covers my participation in OpenAI's early access program for GPT-5.5.
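The post gives the older models' results only as deltas. A small Python sketch that back-calculates their implied overall scores and the rough speedups, using only figures stated in the post (the function names `implied_scores` and `speedup` are illustrative, and it assumes each delta refers to the overall score out of 99):

```python
"""Back-calculate implied prior scores and speedups from the prinzbench post.

Every number is copied from the post; overall scores are out of 99.
"""

# Overall scores reported for the newly added models.
REPORTED = {
    "GPT-5.5 Pro": 82,
    "GPT-5.5 Thinking (Heavy)": 74,
    "Opus 4.7": 25,
    "Meta Muse Spark": 31,
}

# (newer model, older model, points by which the newer model beat the older one)
DELTAS = [
    ("GPT-5.5 Pro", "GPT-5.4 Pro", 3),
    ("GPT-5.5 Thinking (Heavy)", "GPT-5.4 (xhigh)", 5),
    ("GPT-5.5 Thinking (Heavy)", "GPT-5.4 Thinking (Heavy)", 6),
    ("Opus 4.7", "Opus 4.6", 6),
]


def implied_scores(reported: dict[str, int],
                   deltas: list[tuple[str, str, int]]) -> dict[str, int]:
    """Subtract each reported gap from the newer model's overall score."""
    return {old: reported[new] - gap for new, old, gap in deltas}


def speedup(old_minutes: float, new_minutes: float) -> float:
    """How many times faster the new model answered the same question."""
    return old_minutes / new_minutes


if __name__ == "__main__":
    for model, score in implied_scores(REPORTED, DELTAS).items():
        print(f"{model}: {score}/99")
    print(f"Pro speedup: ~{speedup(30, 8):.2f}x")
    print(f"Thinking speedup: ~{speedup(8, 2):.0f}-{speedup(10, 2):.0f}x")
```

Under that assumption, GPT-5.4 Pro lands at 79/99, GPT-5.4 (xhigh) at 69/99, GPT-5.4 Thinking (Heavy) at 68/99, and Opus 4.6 at 19/99. The Legal Research and Search sub-scores are left out because the post only gives their deltas.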