Pratyay Banerjee (নীল)

8K posts

@Neilblaze007

I live in the shadows, but I watch everything.

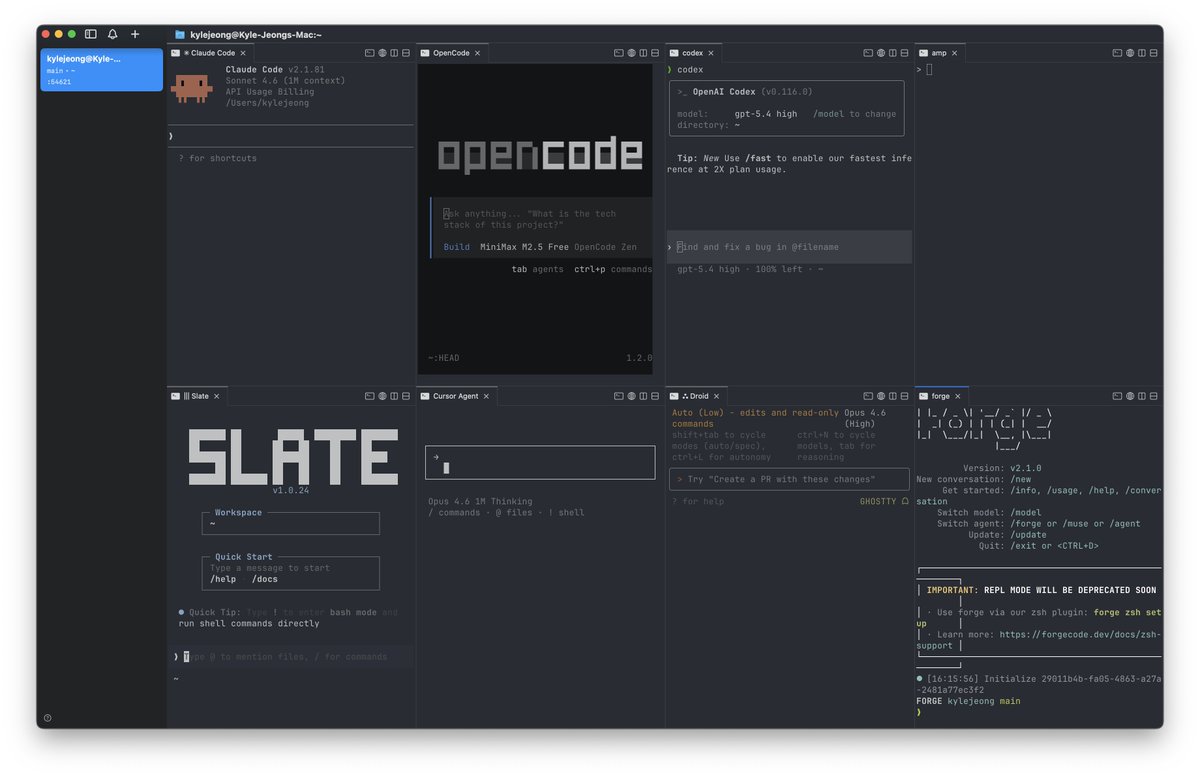

he's a 10 but he only runs 1 claude code session at a time

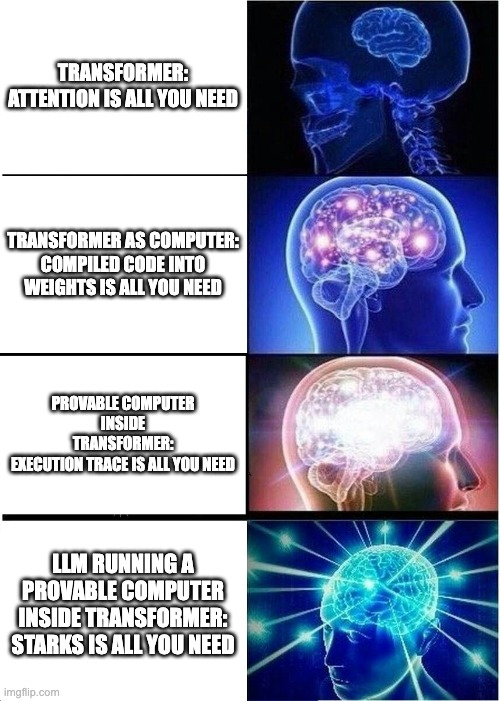

Can LLMs be PROVABLE computers? Percepta showed that a transformer can BE a computer. Compiled weights, deterministic execution, 30k tokens/sec. But nobody asked the obvious follow-up: how do you know it computed correctly? So I built the verification layer. A STARK that proves it 👇

I got a 1T-parameter model running locally on my MacBook Pro. LLM: Kimi K2.5, 1,026,408,232,448 params (~1.026T). Hardware: M2 Max MacBook Pro (2023) w/ 96GB unified memory. Running on MLX with a flash-style SSD streaming path + local patching. This is an experimental setup and I haven’t optimized speed yet, but it’s stable enough that I’ve started testing it in an autoresearch-style loop. #LocalAI #MLX #MoE

You can now enable Claude to use your computer to complete tasks. It opens your apps, navigates your browser, fills in spreadsheets—anything you'd do sitting at your desk. Research preview in Claude Cowork and Claude Code, macOS only.

"Just read the chain of thought" is one of our best safety techniques. Why does it work? Because models can only think opaquely for a short time, long thinking must be transparent. Can we quantify this? Yes! In our new paper, we show how to measure "time" for arbitrary networks.