Pinned Tweet

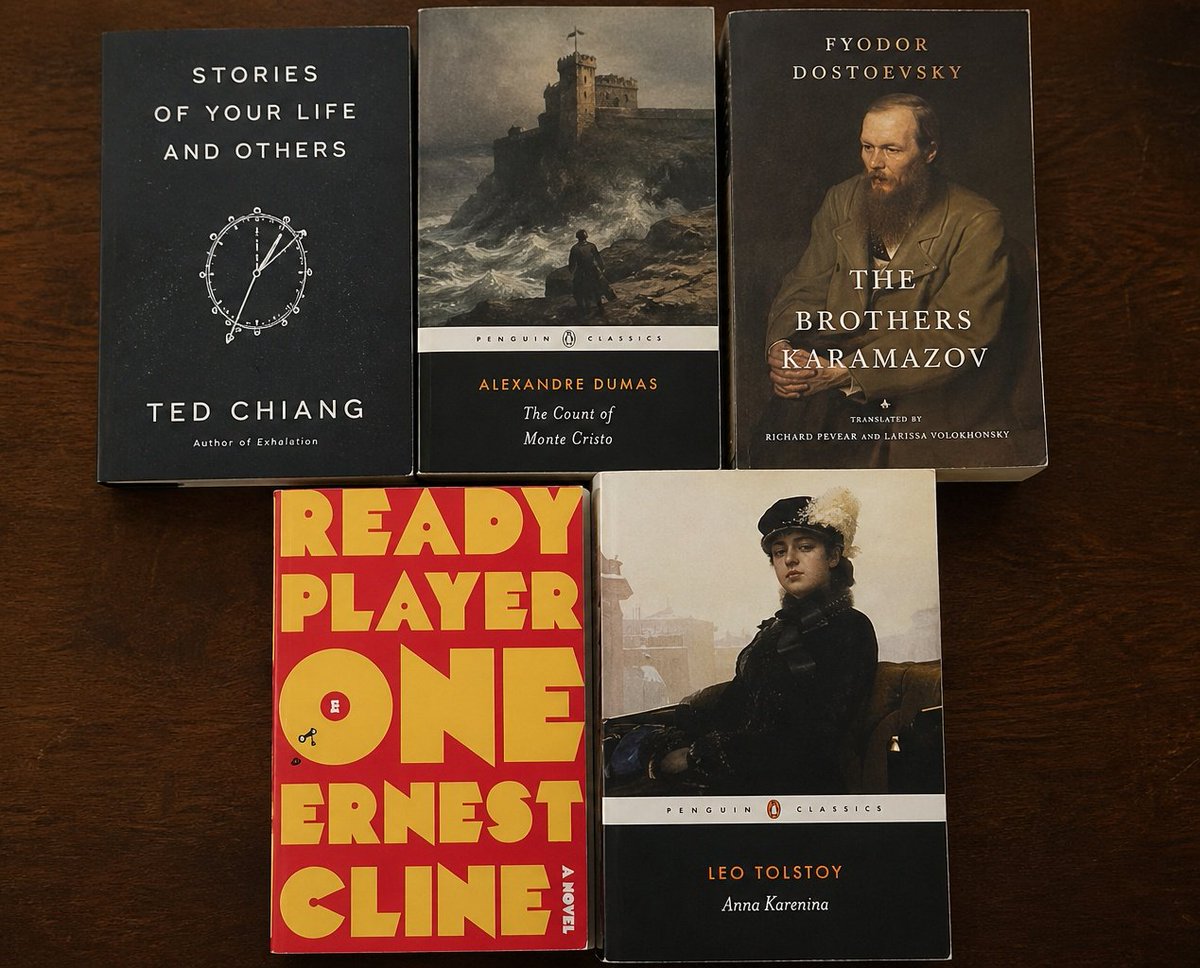

1. Stories of Your Life and Others (Ted Chiang)

2. The Count of Monte Cristo (Alexandre Dumas)

3. The Brothers Karamazov (Fyodor Dostoevsky)

4. Ready Player One (Ernest Cline)

5. Anna Karenina (Leo Tolstoy)

Brian Keene @BrianKeene

Five Books Everyone Should Read Once In Their Lives

In no particular order:

1. Of Mice and Men - John Steinbeck

2. The Stand - Stephen King

3. The Bottoms - Joe R. Lansdale

4. Lonesome Dove - Larry McMurtry

5. The Rum Diary - Hunter S. Thompson

Now, let’s see your list.
