Truth

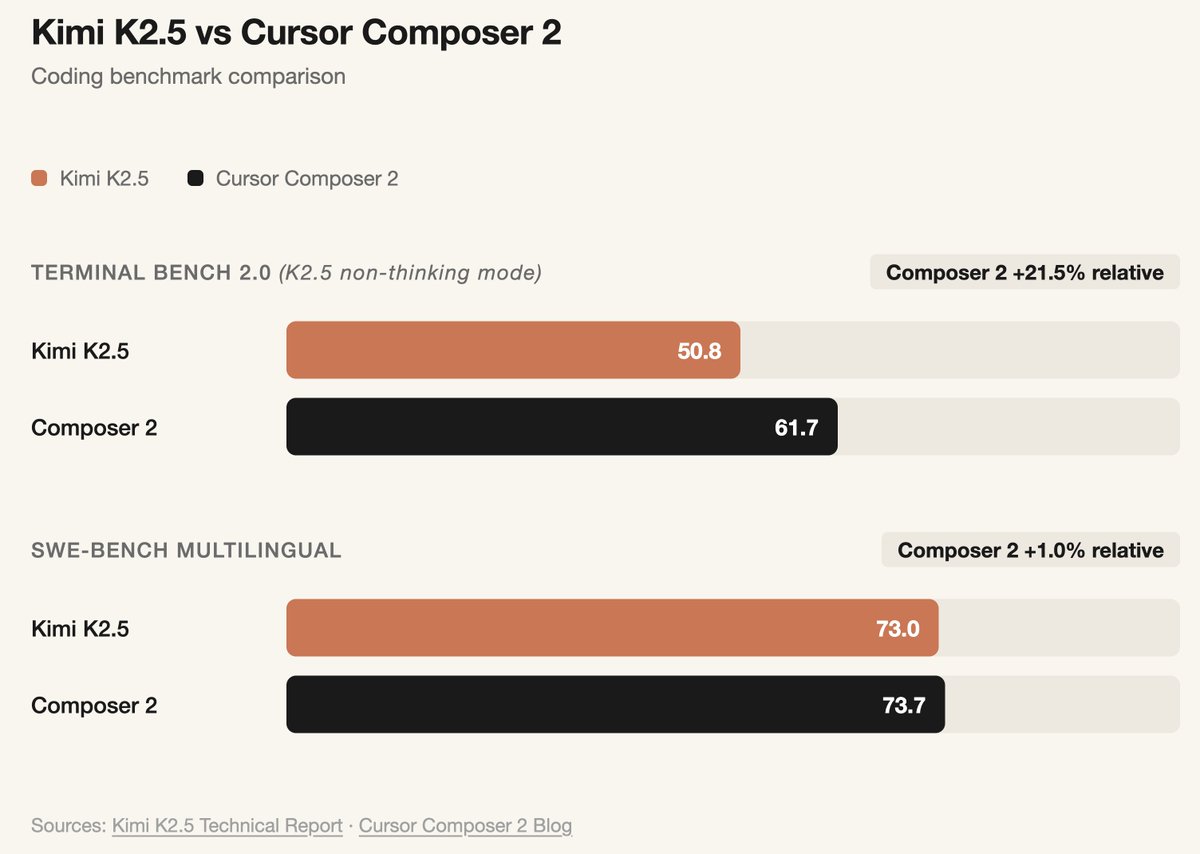

Congrats to the @cursor_ai team on the launch of Composer 2! We are proud to see Kimi-k2.5 provide the foundation. Seeing our model integrated effectively through Cursor's continued pretraining & high-compute RL training is the open model ecosystem we love to support. Note: Cursor accesses Kimi-k2.5 via Fireworks' hosted RL and inference platform as part of an authorized commercial partnership.

Was messing with the OpenAI base URL in Cursor and caught this: accounts/anysphere/models/kimi-k2p5-rl-0317-s515-fast. So Composer 2 is just Kimi K2.5 with RL. At least rename the model ID.

I’m extremely excited about working together with @cursor_ai and @Kimi_Moonshot to push cutting-edge quality and inference performance – Kimi-k2.5 served as the foundation for Cursor's continued pretraining & high-compute RL, powered by @FireworksAI_HQ RL infra & ultra-fast inference stack, delivering frontier coding performance.

Looks like it’s confirmed: Cursor’s new model is based on Kimi! It reinforces a couple of things:

- open-source keeps being the greatest competition enabler
- another validation for Chinese open-source, which is now the biggest force shaping the global AI stack
- the frontier is no longer just about who trains from scratch, but who adapts, fine-tunes, and productizes fastest (seeing the same thing with OpenClaw, for example).

ok, hear me out on this one..

Yep, Composer 2 started from an open-source base! We will do full pretraining in the future. Only ~1/4 of the compute spent on the final model came from the base; the rest is from our training. This is why evals are very different. And yes, we are following the license through our inference partner terms.