OpenGraph Labs 🧤

@OpenGraph_Labs

Building multimodal data infrastructure

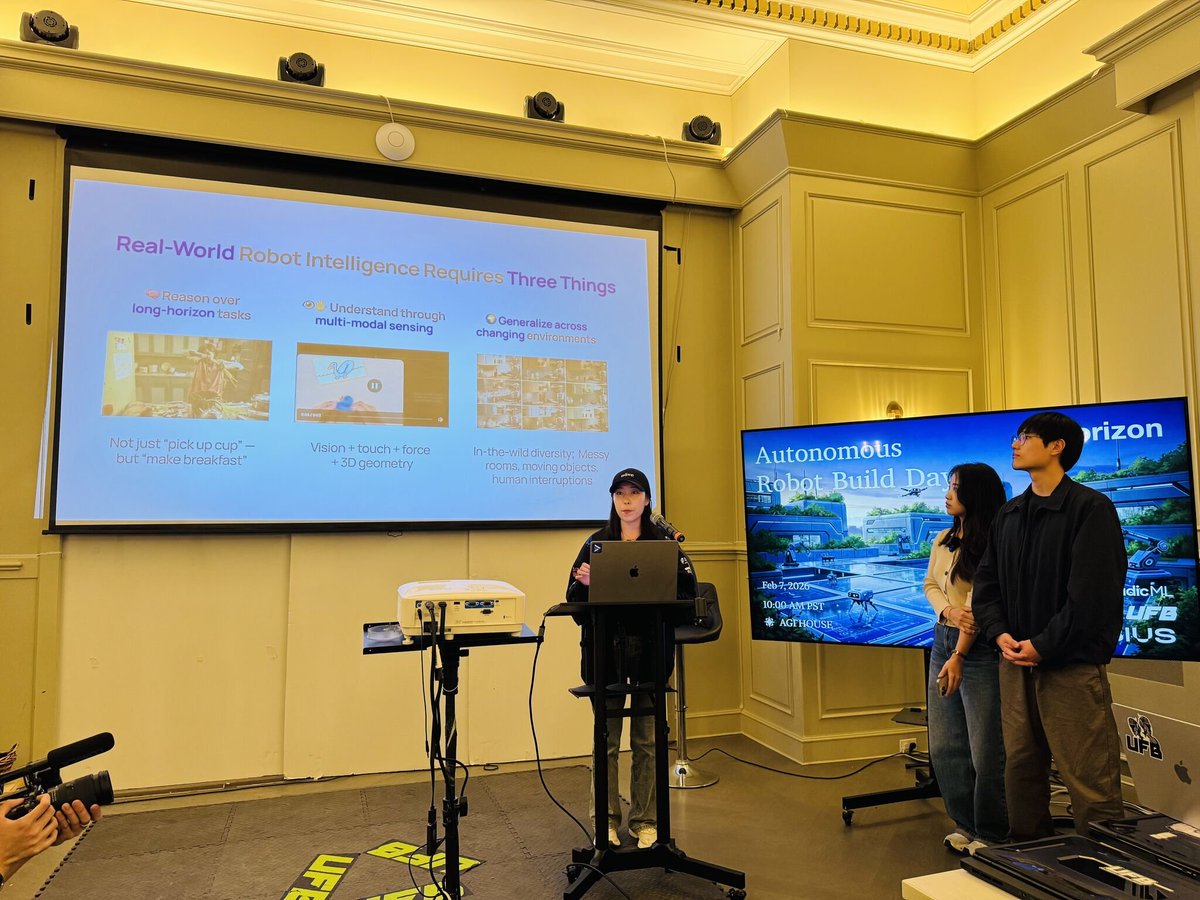

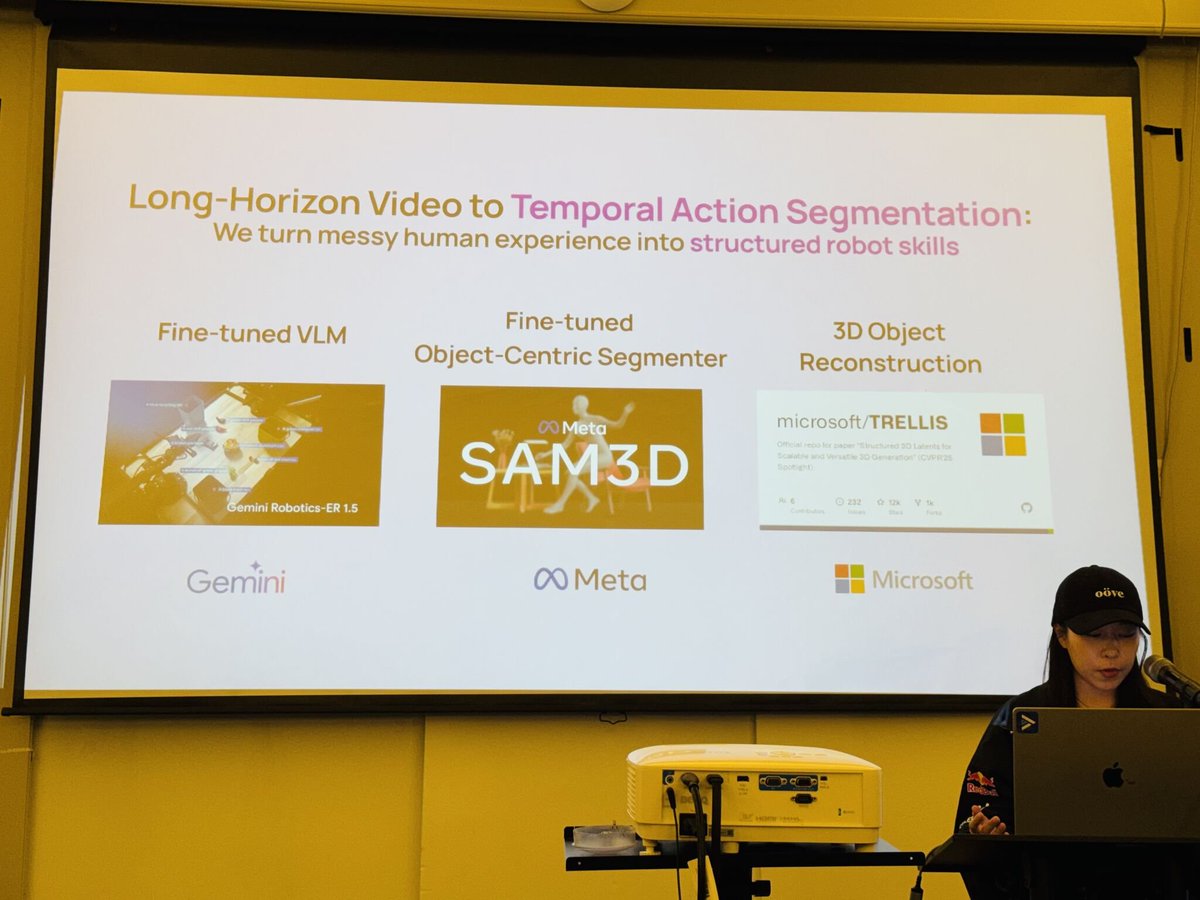

Robotics today looks a lot like NLP in 2005. We hand-code physics simulations the same way linguists hand-coded grammar rules. And it doesn't scale. A new class of models — world models — learns physics from video instead. The early results are striking. The gaps are real. Here's what you need to know. → bvp.com/atlas/can-worl… cc: @TaliaGold, @bhavikvnagda, @gracejhma

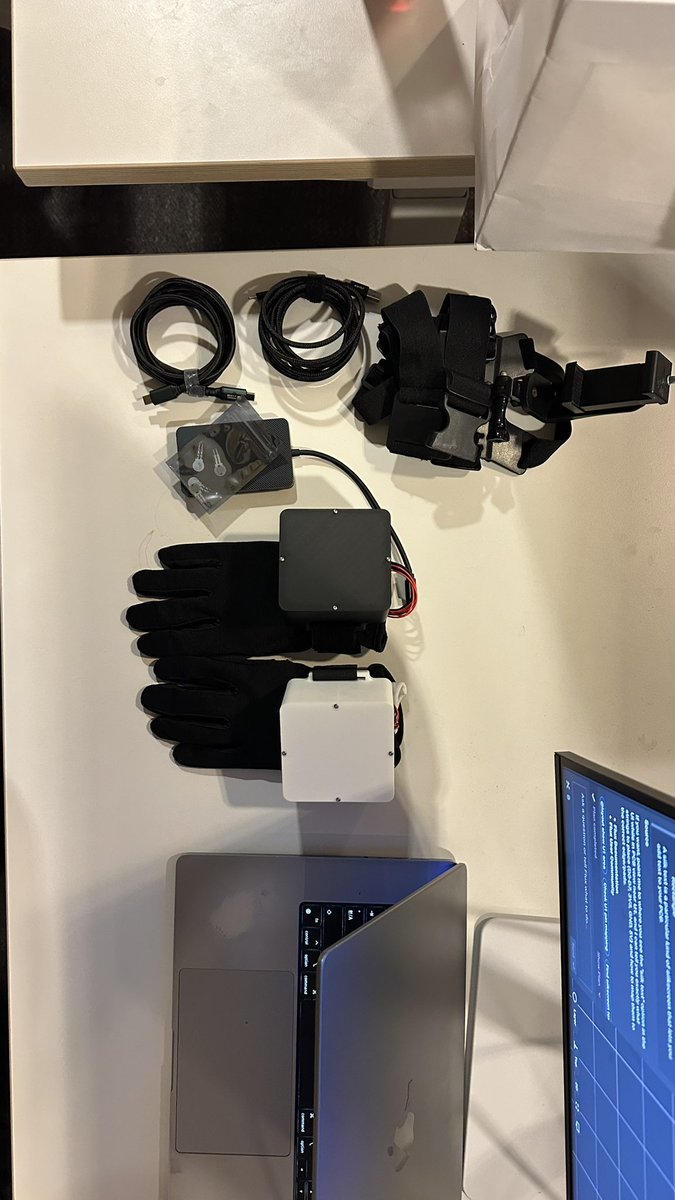

Data can’t just be outsourced 🤯 To iterate fast, robotics teams must own their data infrastructure. Introducing SyncField: turnkey data infrastructure for in-the-wild data collection (best for UMI-style & embodied human). #Robotics #UMI #DataCollection

Dexterous hands vary widely, and so do tactile modalities. 🖐️🌈 Our vision for tactile human-to-robot transfer: 🔓 not tied to specific hardware; ♻️ reuse human tactile demos across embodiments. Presenting TactAlign: cross-sensor tactile alignment for cross-embodiment policy transfer.