Prashanth (Manohar) Velidandi

1.6K posts

@PMV_InferX

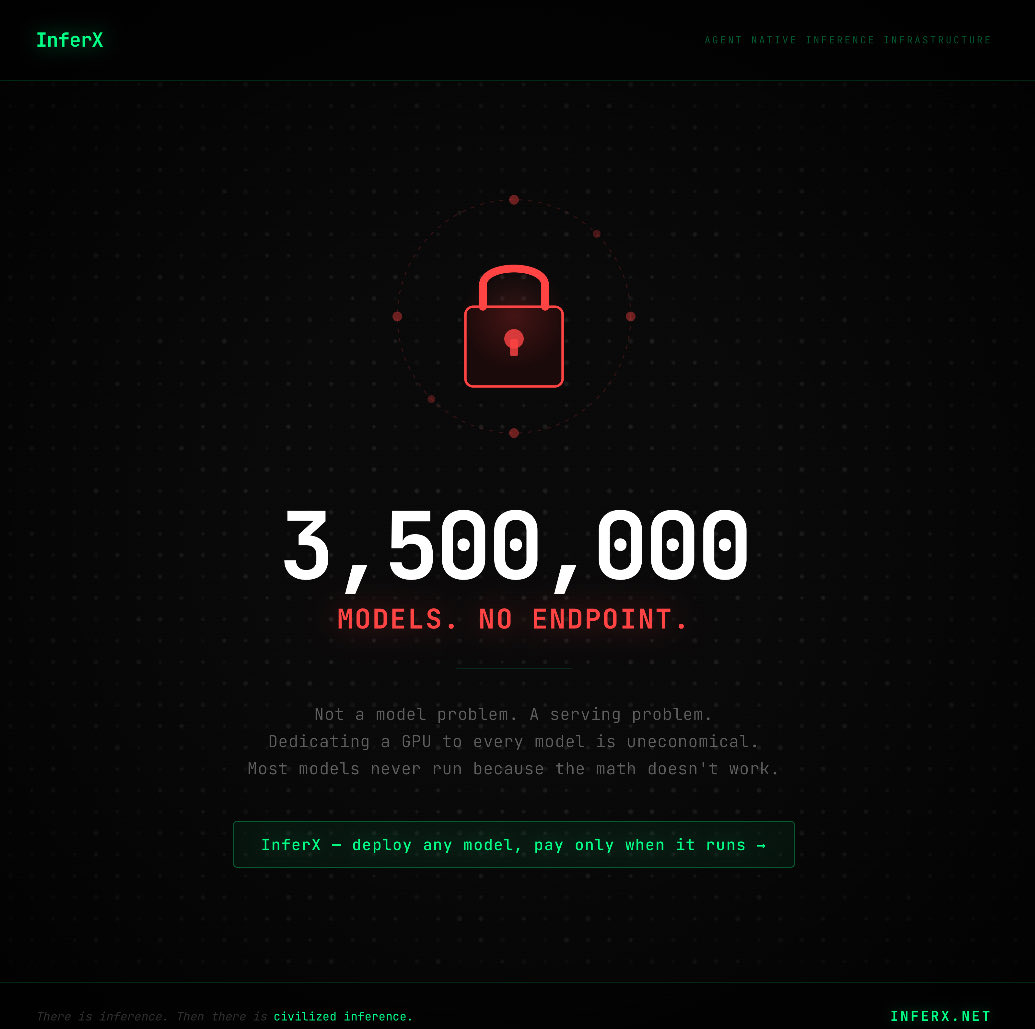

AI Infrastructure Researcher | Co-founder at @InferXai, a Multi-Tenant Serverless Platform for Scalable Inference

I warned my memory friends a few months ago..there are tons of optimizations available across the whole stack to reduce memory capacity and bandwidth...as long as memory was relatively "cheap", we stayed lazy...constraints unleash creativity..I hear the memory supply chain constraints won't be solved till 2030..prepare for a deluge of creativity..it hasn't been a week since TurboQuant... not only in software, but you will see some insanely cool hardware improvisations and new suppliers emerge to the top as well

Upgrading your RAM is now unnecessary. Introducing our new ComfyUI Dynamic VRAM optimization. Running local models is now possible on even the most memory constrained hardware. Read more here: blog.comfy.org/p/dynamic-vram…

Just implemented Google’s TurboQuant in MLX and the results are wild! Needle-in-a-haystack using Qwen3.5-35B-A3B across 8.5K, 32.7K, and 64.2K context lengths: → 6/6 exact match at every quant level → TurboQuant 2.5-bit: 4.9x smaller KV cache → TurboQuant 3.5-bit: 3.8x smaller KV cache The best part: Zero accuracy loss compared to full KV cache.

The fact that memory stocks are crashing because of Google’s TurboQuant is a pretty good indicator of how many clueless people this market is filled with. It’s like saying Aramco should crash because Toyota came out with a next-generation hybrid engine.

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI

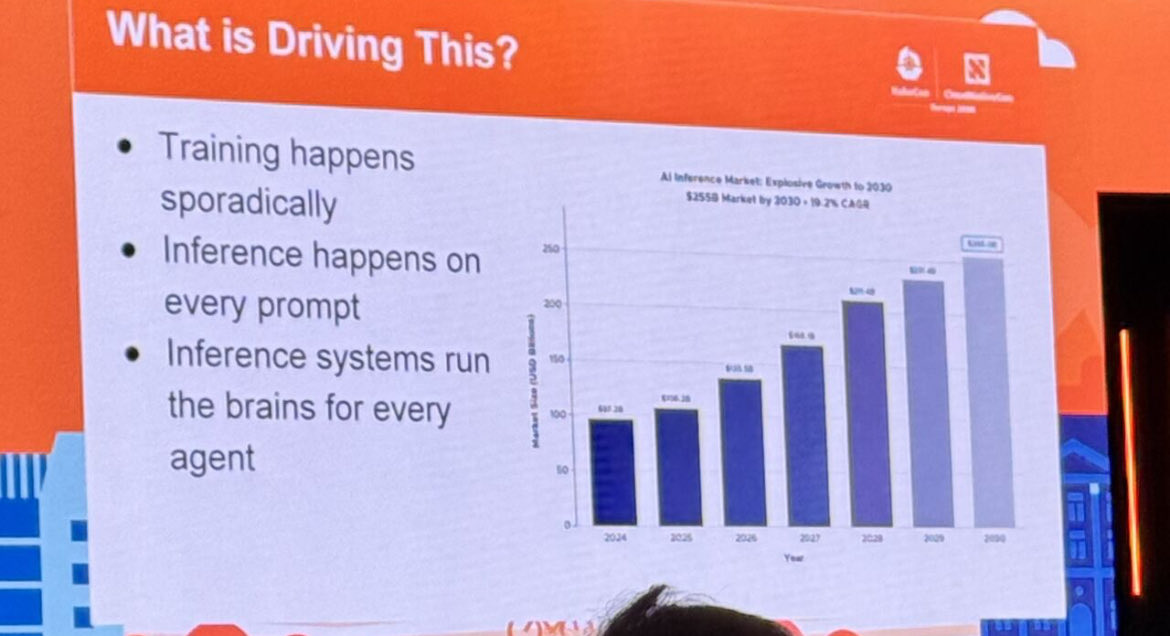

"Inference, if you look at it as a market, will be much, much bigger than cloud computing was pre-ChatGPT." Lightspeed’s @buckymoore says inference is an underrated investment category in AI, and expects the market to break up into large, specialized platforms for each modality: "The GPU supply crunch that we're seeing right now is largely, as @dylan522p has said on the show before, due to the fact that not only these consumer products, but also B2B products like Claude Code and Codex are just really taking off and creating insane demand for inference." "We're talking hundreds of billions in spend every year. And if that's true, I think there will be very, very large inference platforms built in each modality." "So there will be an inference platform for real-time video models, there will be an inference platform for open-source and custom language models, there will be an inference platform built specifically for long-running agents." "So I think we're just going to see that industry, which today looks like one industry, break up into many because of how big it is and how much room for specialization there is."

Talked with @dee_bosa @CNBC about @nvidia and everything open-source AI! Some key points:

- Nvidia is the new American open-source AI king
- 30% of Fortune 500 are using Hugging Face and our goal is to get to the majority of them by the end of the year
- Agents will be much more open-source based than chatbots (ex OpenClaw)
- Agents empower all to train, fine-tune, and run their own models based on open-source
- We crossed 15M AI builders on HF and hope to have as many agents using the platform by the end of the year. Agents are the new users and customers of tech platforms