perfectMan👨‍🌾🌍🌐

29K posts

@Perfectoparah

Agripreneur || Fabulist || Web3-IoT enthusiast || Sagittarius || Dancing farmer || Advisor to nothing || Real OG @get_optimum || stewpid @ 19

OPTIMUM building the missing layer for Web3

@get_optimum aims to solve one of the fundamental problems in blockchain: the lack of an efficient memory layer.

Currently, data propagation in blockchain networks still faces several challenges, such as:
- relatively slow data propagation
- high bandwidth usage
- high network latency

These issues can limit network performance and make data distribution less efficient.

To address this, Optimum is building a decentralized memory layer that functions similarly to RAM in traditional computers, enabling faster and more efficient data access and movement within blockchain systems.

To achieve this vision, Optimum leverages its core technology, RLNC (Random Linear Network Coding). This technology helps:
- Accelerate data propagation
- Reduce network load
- Improve bandwidth efficiency
- Enhance overall scalability

Optimum also introduces two main components:
> mump2p → a protocol designed to accelerate the distribution of transactions, blocks, and block data across nodes
> deRAM → a data access layer that functions like RAM, enabling fast and real-time data usage

CC: @MurielMedard @blockchainjeff @CryptoSundayz @PostMaklone
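For anyone wondering what RLNC looks like in practice, here is a minimal textbook sketch in Python. It is not Optimum's implementation: the function names (`encode`, `decode`, `demo`) and the sample chunks are made up for illustration, and it works over GF(2) (plain XOR) for simplicity, whereas practical systems typically use a larger field such as GF(2^8). The idea it shows: a sender emits random linear combinations of the original chunks, and a receiver can rebuild the data from any set of coded packets whose coefficient vectors are linearly independent.

```python
import random

def encode(chunks, rng):
    """Return (coeffs, payload): one random XOR combination of equal-length chunks."""
    k = len(chunks)
    coeffs = [rng.randint(0, 1) for _ in range(k)]
    if not any(coeffs):
        # Avoid the useless all-zero combination.
        coeffs[rng.randrange(k)] = 1
    payload = bytes(len(chunks[0]))
    for c, chunk in zip(coeffs, chunks):
        if c:
            payload = bytes(a ^ b for a, b in zip(payload, chunk))
    return coeffs, payload

def decode(packets, k):
    """Gauss-Jordan elimination over GF(2).

    Returns the k original chunks, or None if the received packets
    do not yet span all k dimensions.
    """
    # Work on copies so the caller's packets are left untouched.
    rows = [(list(c), bytearray(p)) for c, p in packets]
    for col in range(k):
        pivot = next((i for i in range(col, len(rows)) if rows[i][0][col]), None)
        if pivot is None:
            return None  # rank too low, need more packets
        rows[col], rows[pivot] = rows[pivot], rows[col]
        pc, pp = rows[col]
        for i, (c, p) in enumerate(rows):
            if i != col and c[col]:
                for j in range(k):
                    c[j] ^= pc[j]
                for j in range(len(p)):
                    p[j] ^= pp[j]
    # The first k rows now carry an identity coefficient matrix.
    return [bytes(rows[i][1]) for i in range(k)]

def demo(seed=0):
    """Keep receiving coded packets until the original chunks can be decoded."""
    rng = random.Random(seed)
    chunks = [b"blk0", b"blk1", b"blk2", b"blk3"]  # hypothetical data
    received = []
    while True:
        received.append(encode(chunks, rng))
        decoded = decode(received, len(chunks))
        if decoded is not None:
            return chunks, decoded, len(received)
```

Calling `demo()` returns the original chunks, the decoded chunks, and how many coded packets were needed. The receiver never has to ask for a specific missing chunk: any sufficiently independent set of packets works, which is the property that makes this style of coding attractive for propagation across many peers.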

I'm a very simple person. If I mistakenly see anyone crying on my TL after reading the terms and conditions, I'll simply mute or block them

i think most people still misunderstand what privacy is about. they think it only matters when someone is hiding something

but not everything private is suspicious.
- some things are just personal
- some things deserve space
- some things do not need to be exposed just to be useful

for example, medical data should be useful for care without becoming exposed to systems that do not deserve to see it

that is why privacy means more to me than secrecy
- it is dignity
- it is control
- it is respect

with all that, @Arcium feels important from here because the real shift is not only about encrypting data but it's also about making computation possible without turning the user inside out first

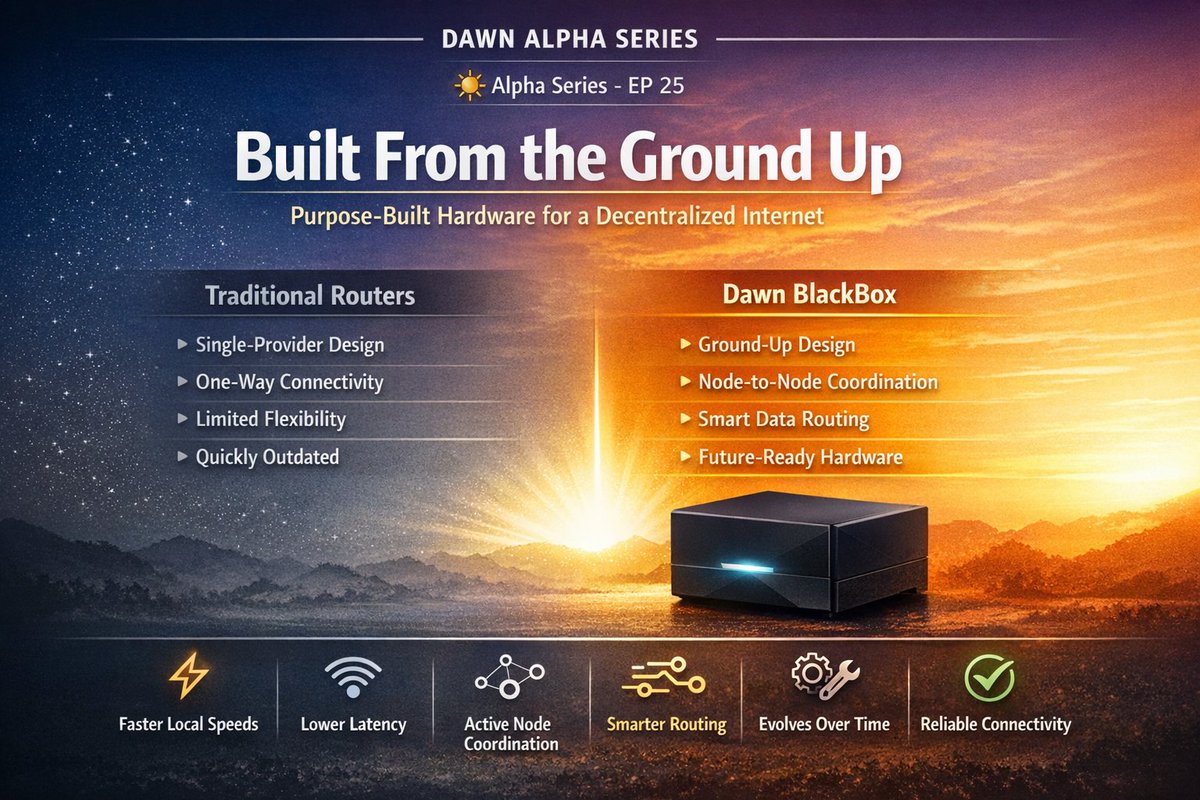

DAWN ALPHA SERIES ☀️

Alpha Series - EP 24: Most internet traffic travels through multiple providers before reaching its destination. @dawninternet's decentralized network model helps move data through shorter, more efficient paths, closer to where networks exchange traffic (IXPs).

The result: faster speeds, lower delays, and a more resilient internet.

Because of 100k oo😂😂