Pinjia He

252 posts

@PinjiaHE

Assistant Professor at The Chinese University of Hong Kong, Shenzhen (CUHK-Shenzhen) @cuhksz.

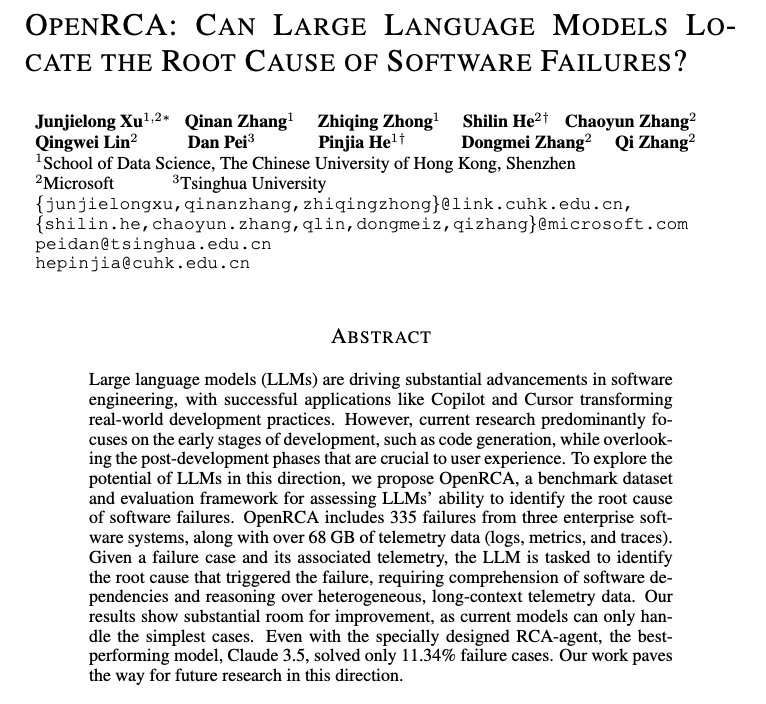

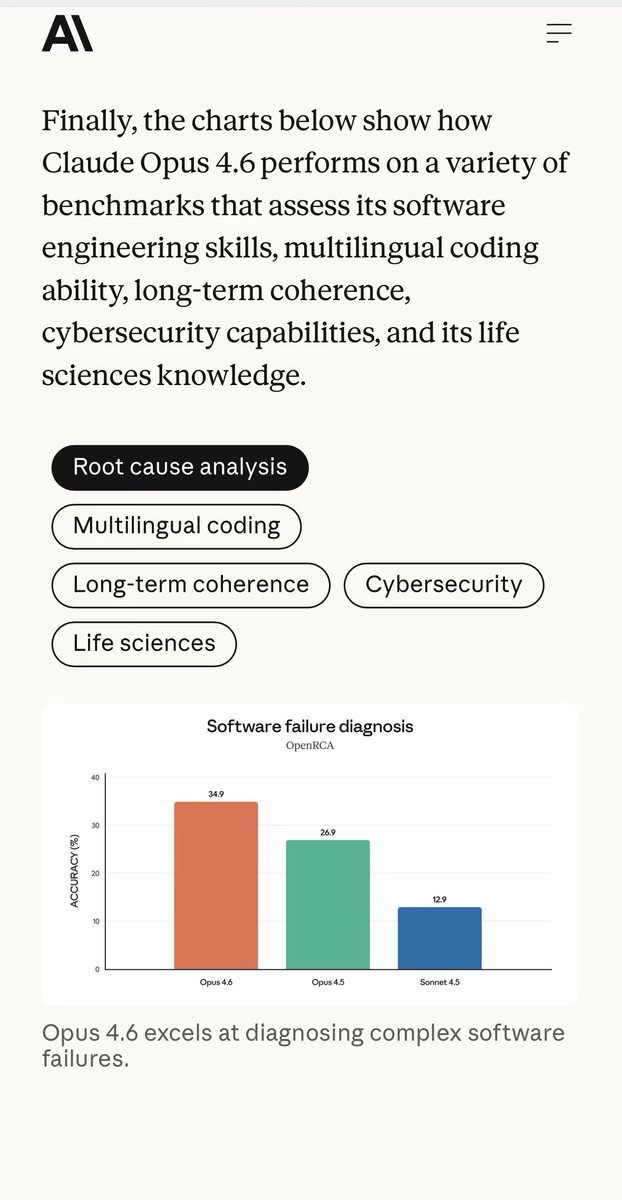

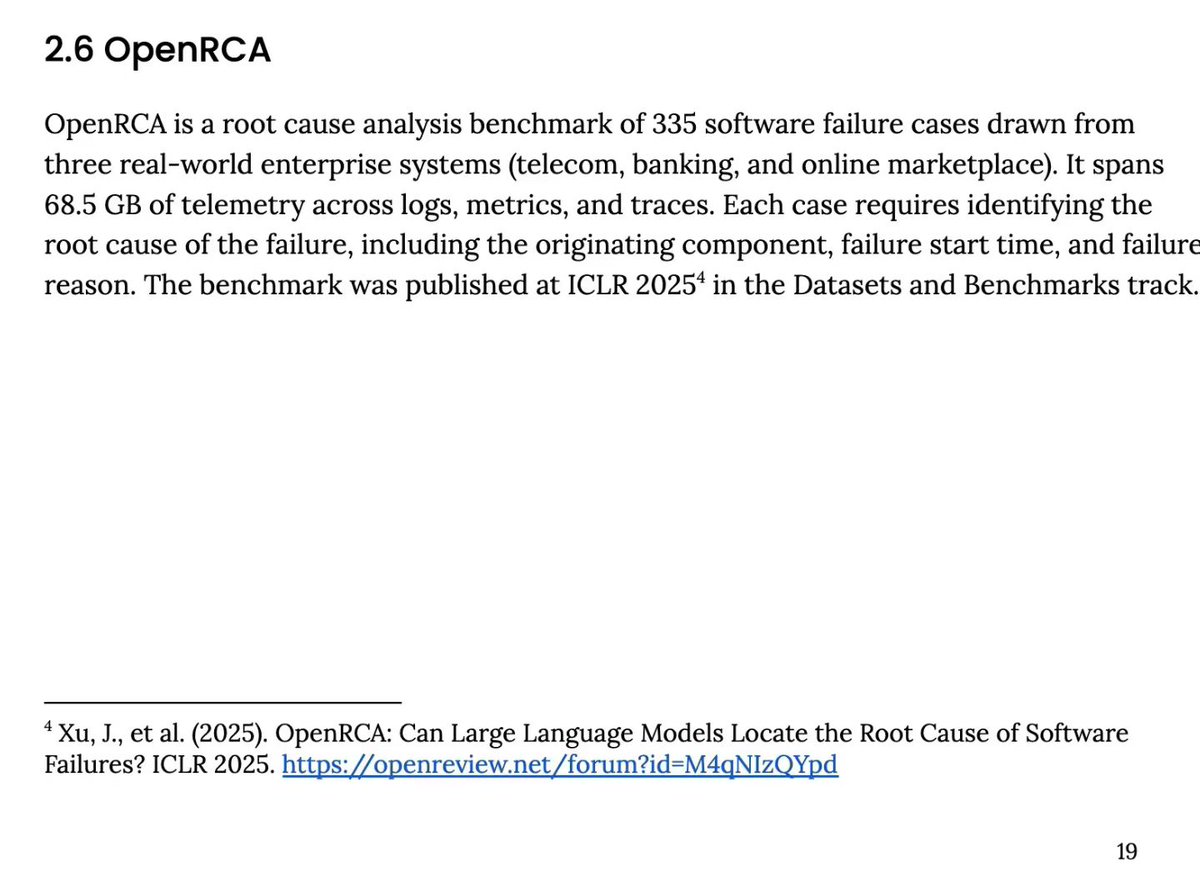

📢 Can LLMs locate software service failures? 🤔 My student @SiyuexiH's #ICLR2025 paper introduces OpenRCA, the first benchmark dataset for evaluating LLMs' root cause analysis capabilities in software systems. LLMs/Agents need to analyze system telemetry data to infer results for natural language queries. Experiments show current LLMs struggle with OpenRCA tasks without specialized RCA tools. Joint work with Microsoft and Tsinghua University. 🔗 Learn more: 📜 Paper: openreview.net/pdf?id=M4qNIzQ… 💻 Code: github.com/microsoft/Open… 📊 Leaderboard: microsoft.github.io/OpenRCA/ #iclr2025 #AI4SE #LLM #rootcauseanalysis

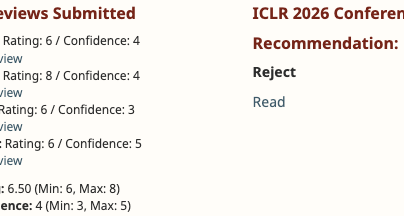

Heartbroken to receive a Reject for our #ICLR2026 submission (Rating: 8/6/6/6). The hardest part isn't the rejection itself, but the Meta-Review reasoning. The AC dismissed the reviewers' unanimous support, raised two new concerns (which themselves contain factual errors), and claimed "All reviews were superficial (while being marginally above the minimum bar for reviewers)." We believe in the peer review process, but a "single point of failure" overriding full consensus is tough to swallow.

Excited to share that two of our papers will be presented next week: one at SIGMOD (Tuesday), and another at the FUZZING Workshop @ ISSTA (Saturday)! The student collaborators from @ECNUER will present the papers. I’ll be at ISSTA/FSE next week—come say hi! Looking forward to great conversations and feedback. 👋 The SIGMOD work is a collaboration with @RiggerManuel, @DengWenjin48334, and Qiuyang Mang. We propose a geometry-aware test generator for spatial databases and prove metamorphic relations under affine transformations. This helped us uncover 34 previously unknown bugs in mainstream spatial database systems. The FUZZING workshop paper revisits combining static analysis and symbolic execution for precise bug finding. We show that accurate error traces from static analysis can actually help symbolic execution, but inaccurate traces can mislead symbolic execution and potentially human users.
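For readers new to metamorphic testing, here is a minimal sketch of the kind of relation the SIGMOD post mentions: a containment predicate should return the same answer before and after the same invertible affine transformation is applied to every geometry. This is not the paper's test generator. The function names (`strictly_inside`, `affine`, `check_relation`) are hypothetical, and a pure-Python predicate stands in for the spatial database under test. Integer coordinates keep the arithmetic exact.

```python
from itertools import product


def cross(o, a, b):
    """Signed area (times two) of the triangle o, a, b."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def strictly_inside(triangle, p):
    """Stand-in for the system under test: is p strictly inside the triangle?"""
    a, b, c = triangle
    signs = [cross(a, b, p), cross(b, c, p), cross(c, a, p)]
    return all(s > 0 for s in signs) or all(s < 0 for s in signs)


def affine(matrix, offset, p):
    """Apply p -> matrix * p + offset to a 2D point."""
    (m00, m01), (m10, m11) = matrix
    return (m00 * p[0] + m01 * p[1] + offset[0],
            m10 * p[0] + m11 * p[1] + offset[1])


def check_relation(triangle, points, matrix, offset):
    """Return the points whose containment answer changes after the transform."""
    det = matrix[0][0] * matrix[1][1] - matrix[0][1] * matrix[1][0]
    if det == 0:
        raise ValueError("the affine map must be invertible")
    moved_triangle = tuple(affine(matrix, offset, v) for v in triangle)
    return [
        p for p in points
        if strictly_inside(triangle, p)
        != strictly_inside(moved_triangle, affine(matrix, offset, p))
    ]


# Illustrative inputs (assumptions, not data from the paper).
triangle = ((0, 0), (6, 0), (0, 6))
points = list(product(range(-1, 8), repeat=2))
violations = check_relation(triangle, points, ((2, 1), (1, -3)), (5, -4))
print(violations)  # an empty list means the relation held for every point
```

In a real setting, a non-empty result would flag inputs where the database answers differently for equivalent geometries, which is a bug candidate worth inspecting.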

When eyes and memory clash, who wins? 👁️🧠 Introducing a comprehensive study on vision-knowledge conflicts in MLLMs, where visual input contradicts the model's internal commonsense knowledge, and the results might surprise you. #ACL2025NLP 📈 We developed an automated framework to generate the ConflictVis benchmark: 374 original images with 1,122 QA pairs designed to test when MLLMs see one thing but "know" another. 📊 Shocking findings across 9 leading MLLMs: 1️⃣ ~20% over-reliance on parametric knowledge over visual evidence 2️⃣ Yes-No questions show 43.6% memorization bias (Claude-3.5-Sonnet) 3️⃣ Action-related conflicts are 10.4% more problematic than place conflicts 👀 We propose a "Focus-on-Vision" prompting strategy that significantly improves performance by instructing models to prioritize what they see over what they remember. Despite improvements, vision-knowledge conflicts remain a persistent challenge for multimodal AI systems. 📃 Paper: arxiv.org/abs/2410.08145

Super excited to share that I will be joining The University of Manchester (@OfficialUoM) as a Lecturer (Assistant Professor) in Cyber Security! The Systems and Software Security group at Manchester is already incredibly impressive, and I’m honored to help further strengthen it.

Trust your AI, but can it trust itself? 🤔 Introducing an online reinforcement learning framework, RISE (Reinforcing Reasoning with Self-Verification), enabling LLMs to simultaneously level up BOTH their problem-solving AND self-checking skills! 🧐 Problems tackled: ✅ "Superficial self-reflection": models failing to verify their own reasoning robustly. ✅ Separation between reasoning and self-verification training. 🚀 RISE empowers models to critique their OWN reasoning via on-the-fly feedback and verifiable rewards, promoting stronger, more dynamic reasoning loops and effective self-assessment skills. 📊 Key results: 📈 Up to 2.8× better self-verification accuracy on challenging math tasks. 📈 Outperforms instruction-tuned models (Qwen2.5): +3.7% in reasoning, +33.4% in verification accuracy. 📈 Better internal reasoning: more frequent and more accurate verification behaviors. 🧑‍💻 Code: github.com/xyliu-cs/RISE 📃 Paper: arxiv.org/abs/2505.13445

Introducing our first #ICRA2025 paper, SELP (Safe Efficient LLM Planner), a method for generating plans for robot agents that adhere to user constraints while optimizing for time-efficient execution. 🔗 Preprint: arxiv.org/pdf/2409.19471 #LLMs #Robotics #Agent