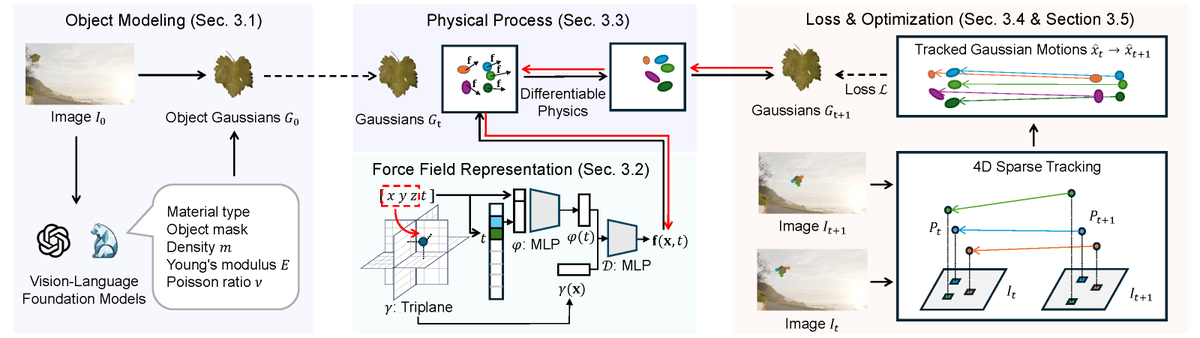

Introducing Ψ₀ (psi-lab.ai/Psi0), an open foundation model for universal humanoid loco-manipulation.

🏆 Outperforms GR00T N1.6 by 40%+ in overall success rate
📉 Uses only ~10% of the pre-training data
📦 Fully open-source: model, data, code, and deployment pipeline

1/10