Chaowei Xiao

304 posts

@ChaoweiX

Assistant Professor @Johns Hopkins University | Researcher @NVIDIA | Researcher on AI Safety/Security

Are you a PhD student excited to build the future of Autonomous Vehicles? The @nvidia Autonomous Vehicles Research Group is now recruiting PhD research interns for 2026!! Apply here: nvidia.wd5.myworkdayjobs.com/en-US/NVIDIAEx…

We’ve developed Claude for Chrome, where Claude works directly in your browser and takes actions on your behalf. We’re releasing it at first as a research preview to 1,000 users, so we can gather real-world insights on how it’s used.

🔮 Introducing Prophet Arena — the AI benchmark for general predictive intelligence. That is, can AI truly predict the future by connecting today’s dots?
👉 What makes it special?
- It can’t be hacked. Most benchmarks saturate over time, but here models face live, unseen future events. You can’t memorize tomorrow (unless you’ve cracked time travel).
- It’s interpretable. Strong performance = real foresight, which translates into real investment gains.
👉 Check it out: prophetarena.co

🚨 New paper accepted to #ACL2025! We propose SudoLM, a framework that lets LLMs learn access control over parametric knowledge. Rather than blocking everyone from sensitive knowledge, SudoLM grants access to authorized users only. Paper: arxiv.org/abs/2410.14676… 🧵[1/6]👇

New! Academy member announcement. Dedicated to honoring excellence and advancing the common good, from 1780 to today. amacad.org/news/new-membe…

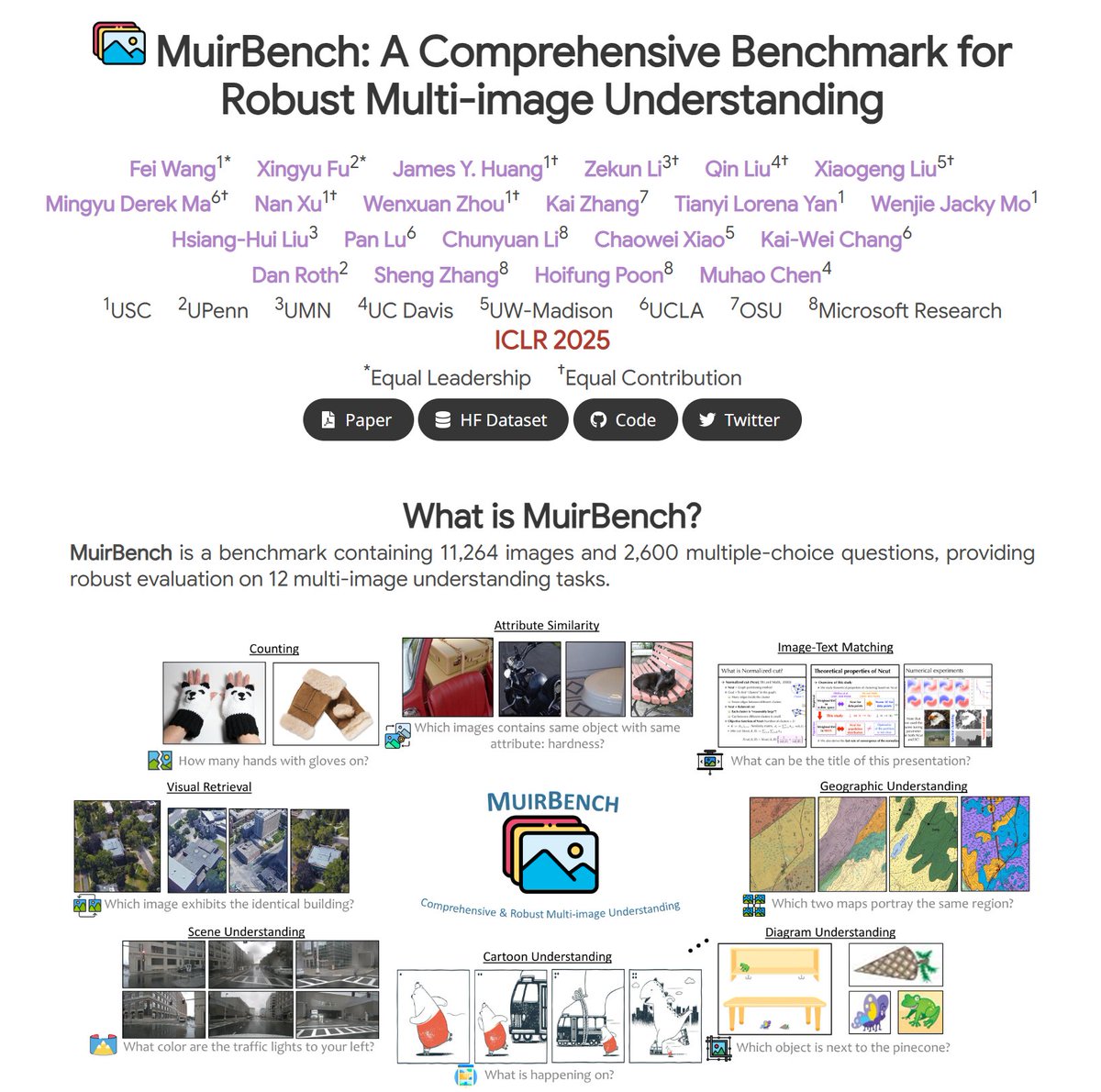

Thrilled to be featured in the #ICLR2025 Spotlight! 🎉 Come see our poster in Hall 3 + Hall 2B #602, April 25, 10:00 AM–12:30 PM SGT