Fernando

1.4K posts

@Principal_ADE

Building story-based software development. Ex-Google, ex-Airbnb. Instead of reconstructing what happened from thousands of log lines, you read a story.

Joined June 2025

222 Following, 222 Followers

Pinned Tweet

@garrytan posted yesterday on X that markdown is becoming as important as code because it's a language of intent.

He's right. But intent without verification is just a README nobody reads.

For the past year my co-founders and I have been building the answer. We call it story-based telemetry. The artifact it creates is the OTEL Behavioral Manifest — intent you can actually verify in production.

I wrote about it here.

linkedin.com/pulse/otel-beh…

@aidenybai Eye function is affected by blood sugar levels in the muscles that control the lenses

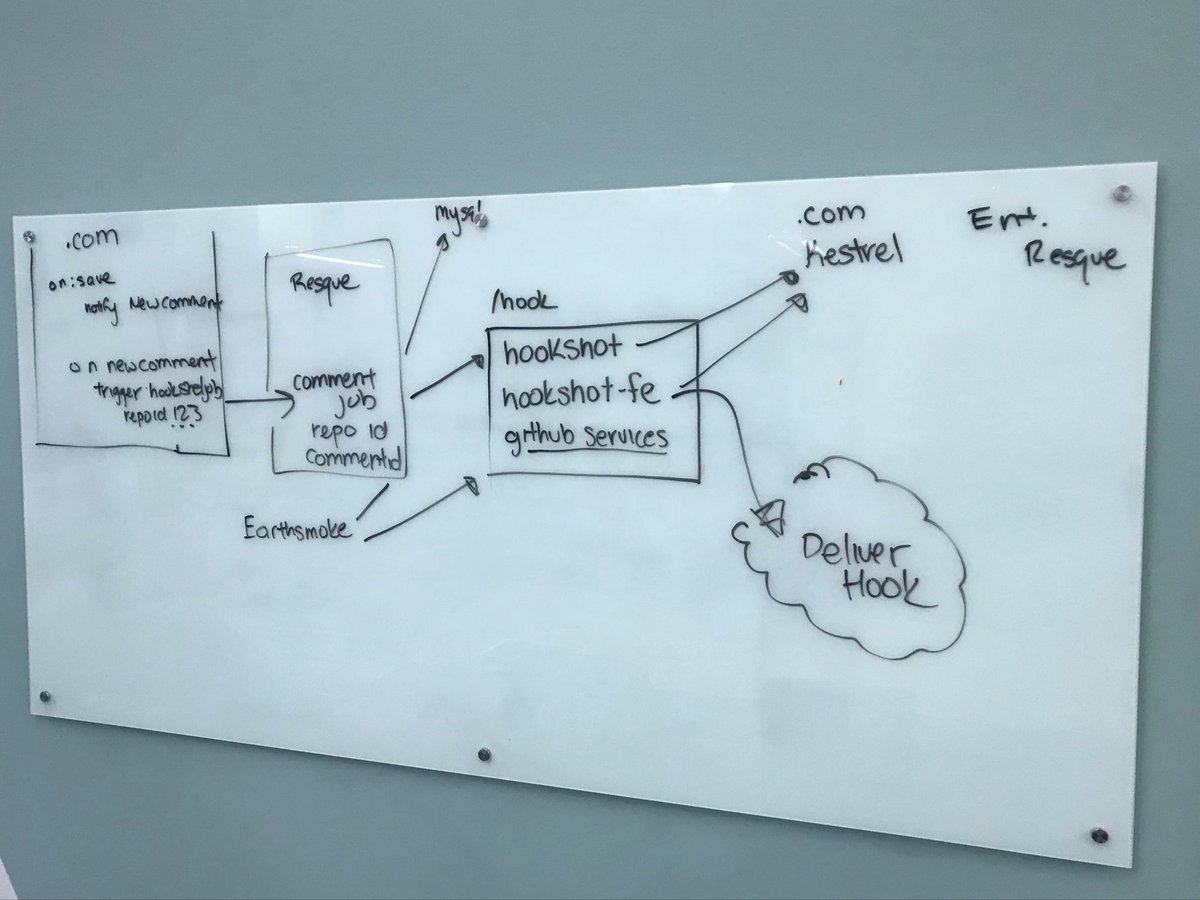

@kitlangton this is good signal for our feature set x.com/Principal_ADE/…

Fernando@Principal_ADE

Making Progress on Guided Code Review with Otel Verified Plans

Fernando@Principal_ADE

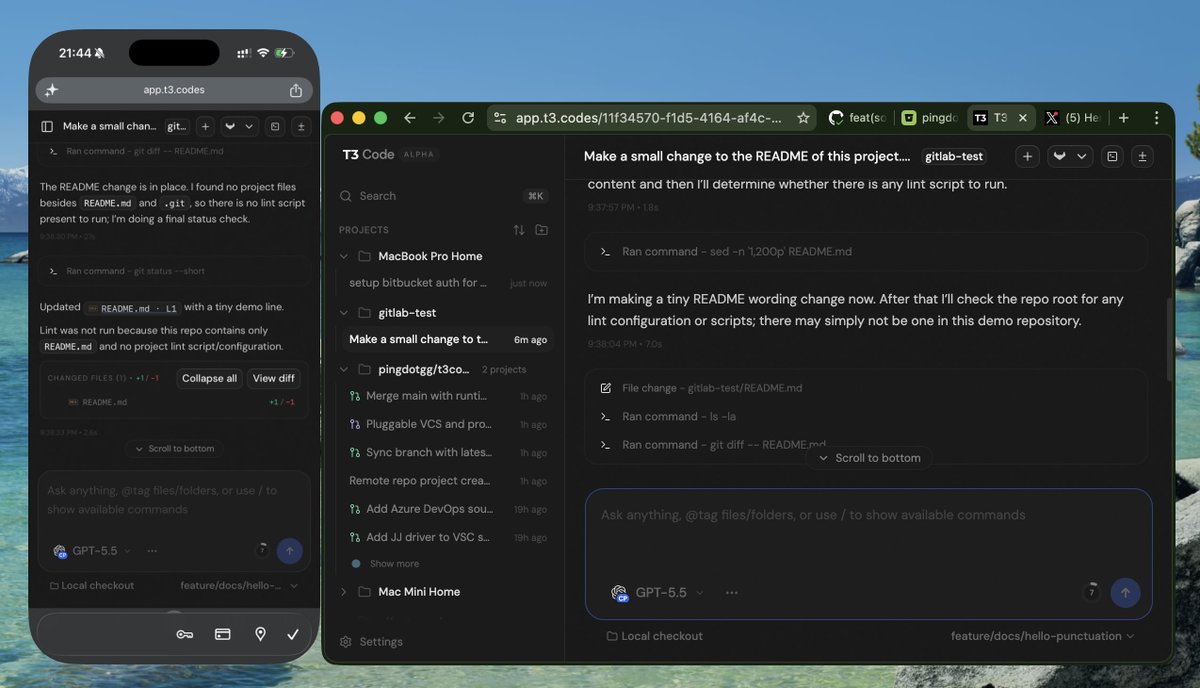

Not much of @theo's code from T3Code day 1 remains, but the core architecture, in which T3 Code is just a websocket server, still stands and is so fun to build on top of. Who really needs cloud runners when you can just have all your devices meshed and access any agent on any machine from anywhere?

@andrewbrown Might benefit from a 2-dimensional layout of the repo x.com/principal_ade/…

Fernando@Principal_ADE

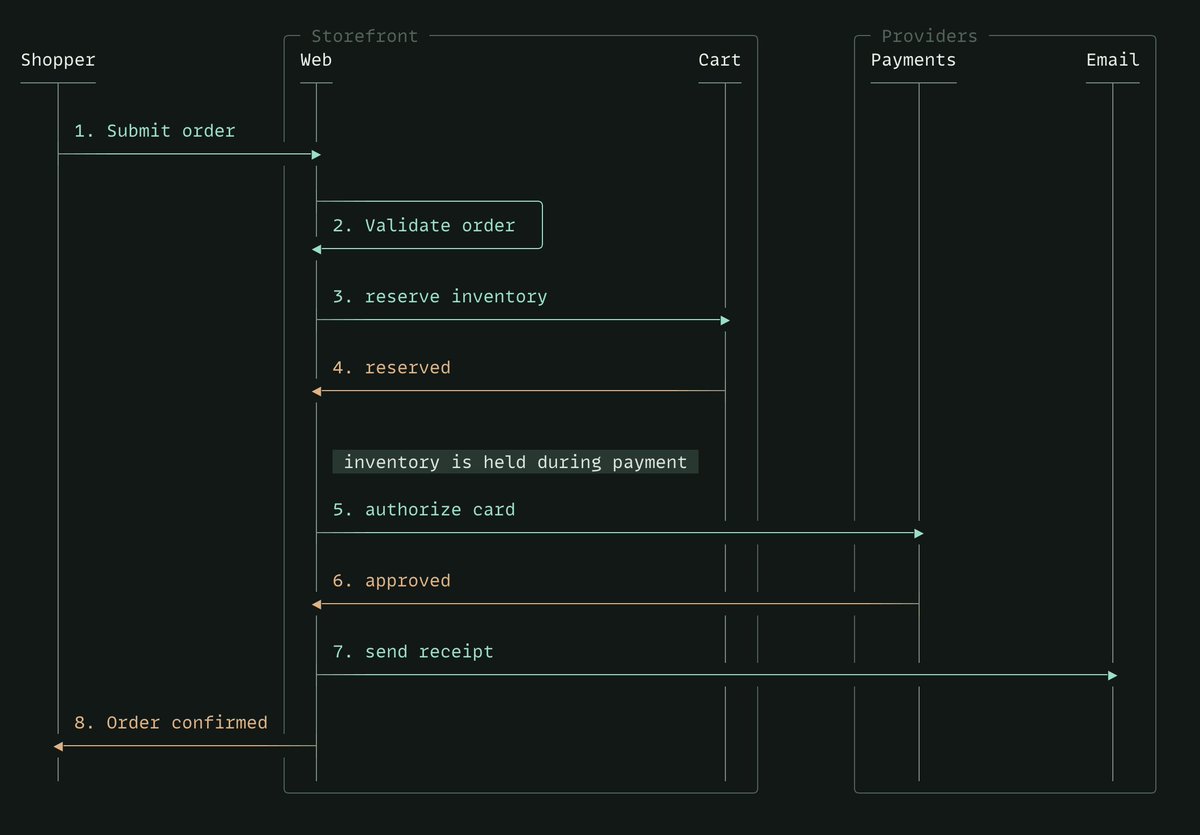

Sequence Diagram + Spatial Understanding of where it's implemented

@thdxr we think the next GitHub should have live activity for open source projects, and honestly we are close x.com/Principal_ADE/…

Fernando@Principal_ADE

big day for the t3code peeps @shivamhwp @jullerino

@adocomplete Kind of, but we mostly do UI experimentation with regard to repos. x.com/Principal_ADE/…

Fernando@Principal_ADE

Making Progress on Guided Code Review with Otel Verified Plans

@DanielLockyer Gotta save money to subsidize those tokens somehow

Does Anthropic just have zero functioning support channels these days?

Richie - oss/acc@richiemcilroy

the Anthropic OSS program for Claude is fun, because I now get the Max plan for $0/mo, saving $200/mo but I’m still being charged $200/mo and support won’t reply great success

@sudobunni oh! We are working on something! It's pretty alpha, so you will have to bear with us. You can check out our web version here: app.principal-ade.com. The app is a lot better.

@ThePrimeagen @sama You have to tell him it will increase revenue

Dear @sama

Please refer to GPT as gipidy (jipidy). It's much nicer to say that way

@DhravyaShah @conductor_build In a few weeks you’ll be saying that about our innovation x.com/principal_ade/…

Fernando@Principal_ADE

Making Progress on Guided Code Review with Otel Verified Plans

.@conductor_build is the best app ever made. i was completely wrong before. this is the new way to code.

@ian_dot_so Innovation is definitely happening x.com/principal_ade/…

Fernando@Principal_ADE

Making Progress on Guided Code Review with Otel Verified Plans
