Red Hat AI

@RedHat_AI

Accelerating AI innovation with open platforms and community. The future of AI is open.

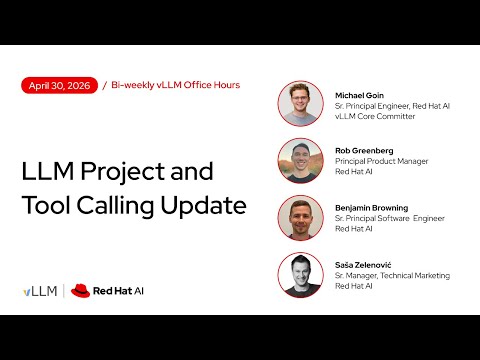

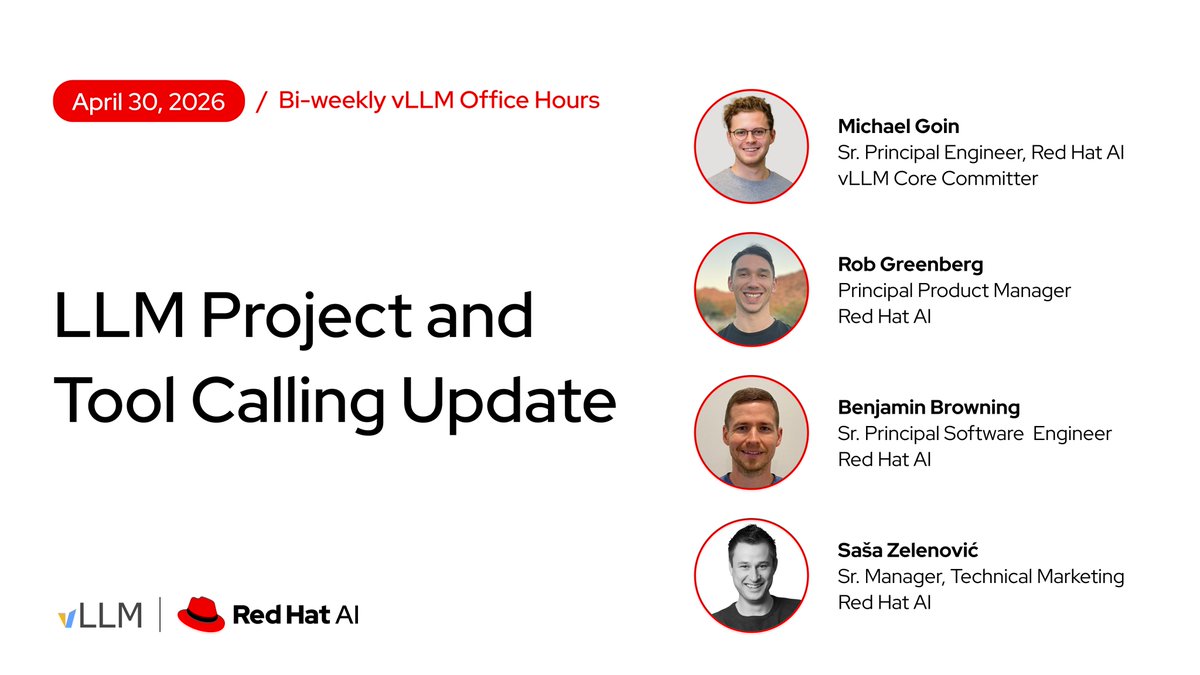

vLLM Office Hours is tomorrow 🗓️ @mgoin_ is dropping the latest project updates, including what's new in v0.20.0. Then we'll cover the current state of tool calling in @vllm_project and what's coming next. Get a calendar invite: red.ht/office-hours Watch on YouTube Live: youtube.com/live/N4qRxarKY…