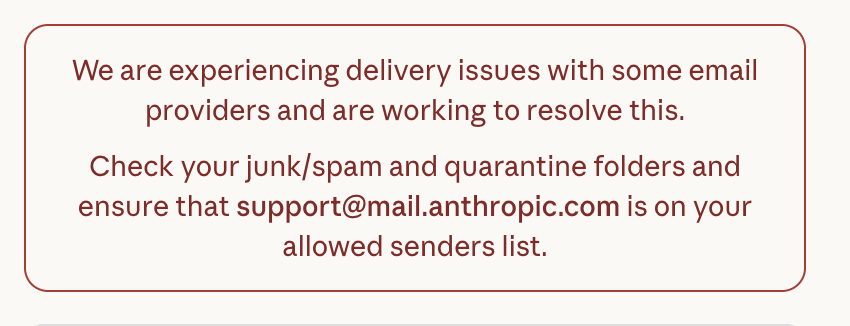

What's up @AnthropicAI #anthropic #claudeai - I'm having a hard time getting into my account - and when I do, I get the "Taking longer than usual..." message and nothing happens, even when I try again and again and again shortly after! #CustomerService

Sheila B

@RealSheilaB

Technology Consultant | Technical Due Diligence | Artificial Intelligence Auditor | Views are my own, RT are not Endorsements

Today, we’re launching Aya, a new open-source, massively multilingual LLM & dataset to help support under-represented languages. Aya outperforms existing open-source models and covers 101 different languages, more than double the number covered by previous models. cohere.com/research/aya