just setting up my twttr

Riyadh AI

168 posts

@Riyadh_AI_

#AI #AGI | #ArtificialIntelligence will mark the transition after the Modern era. —Riyadh (on #X since 2008)—


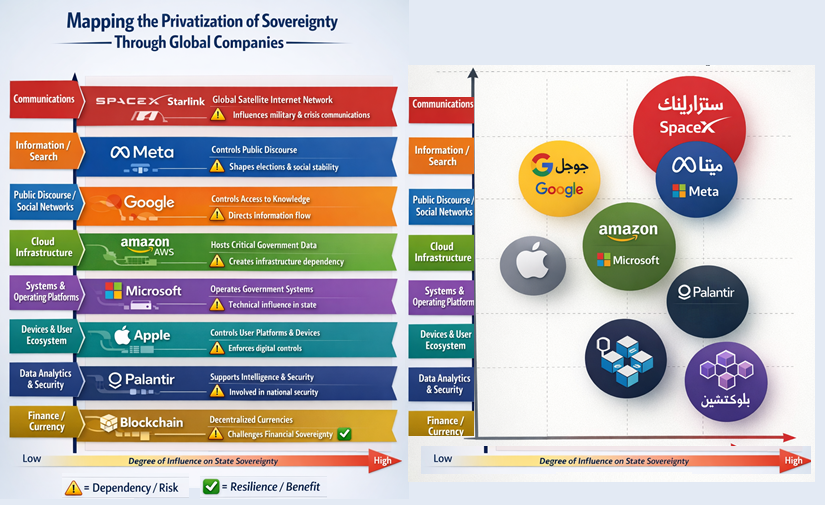

**1️⃣ Are We Witnessing the Privatization of Sovereignty? #Musk Plans to Expand From 9,357 Satellites to Nearly 17,000: Is #ElonMusk Deciding Who Executes Military Operations and Who Wins?**

Telecommunications infrastructure is no longer a purely technical matter; it has gradually become a strategic component of geopolitical power. In this context, #SpaceX’s #Starlink network, led by Elon Musk, has emerged as a new paradigm, raising a fundamental question: are certain aspects of sovereignty shifting from the state to private actors?

The reality suggests that Starlink does not “decide the outcome” of wars in a definitive sense, yet in some contexts it has become an influential factor in operational balances, especially where conventional communication infrastructure is damaged or disrupted. In Ukraine, for example, the network played a critical role in supporting both military and civilian communications, from drone operations to the continuity of essential services. However, its influence varies from theater to theater: what applies to a high-intensity conventional war cannot be directly extrapolated to stable states or low-intensity conflicts.

Starlink’s rapid expansion, which aims to nearly double its constellation to about 17,000 satellites, strengthens its global presence, particularly given the limited competition from players such as Eutelsat’s OneWeb and Amazon’s Project Kuiper. With millions of users across dozens of markets, the network approaches a quasi-monopoly in some regions, giving it weight beyond conventional commercial influence.

Yet overemphasizing individual influence can be misleading. Despite Elon Musk’s dominant media presence, SpaceX’s decisions are not made in a vacuum; they intersect with government pressure, contractual obligations, and complex legal considerations. Nonetheless, there have been instances showing how a technical decision, such as imposing geographic service restrictions, can directly affect events on the ground, as seen in conflict zones linked to Russia.
Starlink’s impact, however, is not limited to military or security applications. The network has also supported civilian communications during disasters, provided alternatives in regions with weak infrastructure, and, to some extent, reduced government monopolies over information flows. These positive dimensions are essential to understanding the full picture and keep the phenomenon from being reduced to a “threat narrative.”

The challenges remain significant. Various reports, of varying reliability, have noted the use of Starlink terminals by diverse actors, including armed groups in the African Sahel and cyber actors during internet blackouts in Iran. Questions have also arisen about informal transfers of equipment into certain countries. While these cases require careful analysis, they illustrate how difficult it is to control cross-border technology managed by a private company.

The deeper issue is both legal and political. There is currently no clear international framework defining when interference with a commercial satellite network constitutes a use of force, no regulations governing the transfer of such technology in conflict settings, and no effective accountability mechanisms when private corporate decisions affect sensitive geopolitical balances.

Thus, we are not facing a “complete privatization of sovereignty,” but some tools of sovereignty are clearly being redistributed. States that fail to treat commercial space infrastructure as a strategic dependency may find themselves, in a crisis, bound by decisions made by actors outside their direct control. The question is no longer whether sovereignty will be fully privatized, but rather: to what extent can states redefine sovereignty in a world where authority intersects with technology and corporate decisions are intertwined with national interests?

#Iran #IranWar #IranRevolution2026 #Israeli #USA #Russia #Ukraine

**🤖 When is human–AI collaboration better? 🤝 And when is each better working alone?**

This is what a major MIT Sloan study revealed after analyzing over 100 studies and 370 experimental results.

🎯 Summary in two lines: Human–AI collaboration is not always better. But it becomes very powerful when used in the right place and in the right way.

📊 In this thread you’ll find:
- Clear comparisons between the performance of humans only, AI only, and human–AI collaboration
- When collaboration succeeds or fails
- What these findings mean for managers and decision-makers

🔍 Surprise finding: In some tasks, humans and AI working together perform worse than AI alone; in others, they perform worse than humans alone.

📘 The thread ends with comprehensive recommendations tailored by age group and profession.

👇 Details in the following tweets…

- 🌏 This is a translated summary of the article I relied on.
- 📊 The tables attached to each tweet are distilled from the study.
- 🛠️ Any additional insights are my own interpretations.

**🤖 When humans and AI work together 🤝 and when each performs better alone**

📌 Why this matters

Combining humans and AI seems intuitively powerful, but recent research shows collaboration isn’t always better. In some tasks, AI outperforms humans; in others, humans outperform AI; and only in specific cases is collaboration the best option. This was revealed by a major MIT Sloan study based on over 100 studies and 370 experimental results.

📊 Key findings from the study

🔹 1) Collaboration isn’t always better than solo performance

AI alone outperformed in tasks like:
- ✔️ Detecting fake hotel reviews
- ✔️ Demand forecasting
- ✔️ Diagnosing medical conditions

Humans alone outperformed in tasks like:
- ✔️ Classifying bird images (requires contextual expertise)
- ✔️ Tasks needing emotional or situational understanding

In many cases, collaboration performed worse than the best solo performer.

🔹 2) Why does collaboration sometimes fail?

Humans often don’t know when to trust AI and when to rely on their own judgment.
This leads to mixed decisions that are worse than those of AI alone or humans alone.

🔹 3) When does collaboration succeed?

Collaboration works when:
- Each party does what it does best
- Tasks are clearly divided
- The process is designed for interaction, not replacement

📌 Example (a bird image classification task):
- Humans alone: 81% accuracy
- AI alone: 73%
- Collaboration: 90%

🎨 Generative AI: the strongest collaboration cases

The study found that creative tasks (writing, design, ideas, visuals) show the most promising synergy between humans and AI. Why?
- AI generates dozens of ideas quickly
- Humans select, refine, and add meaning
- The process becomes iterative and interactive
- The result is better than either working alone

🧠 Why task-splitting isn’t enough

Success doesn’t depend only on:
- ❌ Who does what

But also on:
- ✔️ How information flows
- ✔️ How decisions are made
- ✔️ How the process is designed

Redesigning workflows matters more than just dividing tasks.

⚠️ Challenges that block synergy
- Overestimating AI’s capabilities
- Lack of transparency
- Biases
- No clear governance
- Absence of randomized testing (A/B testing) in organizations

🚀 Final takeaway

Human–AI collaboration isn’t always better. But it becomes very powerful when:
- Each party works within its strengths
- The process is iterative
- The task suits collaboration (especially creative tasks)
- There’s clear governance and calibrated trust

Generative AI offers the best synergy. Decision-making tasks are more sensitive and prone to failure.

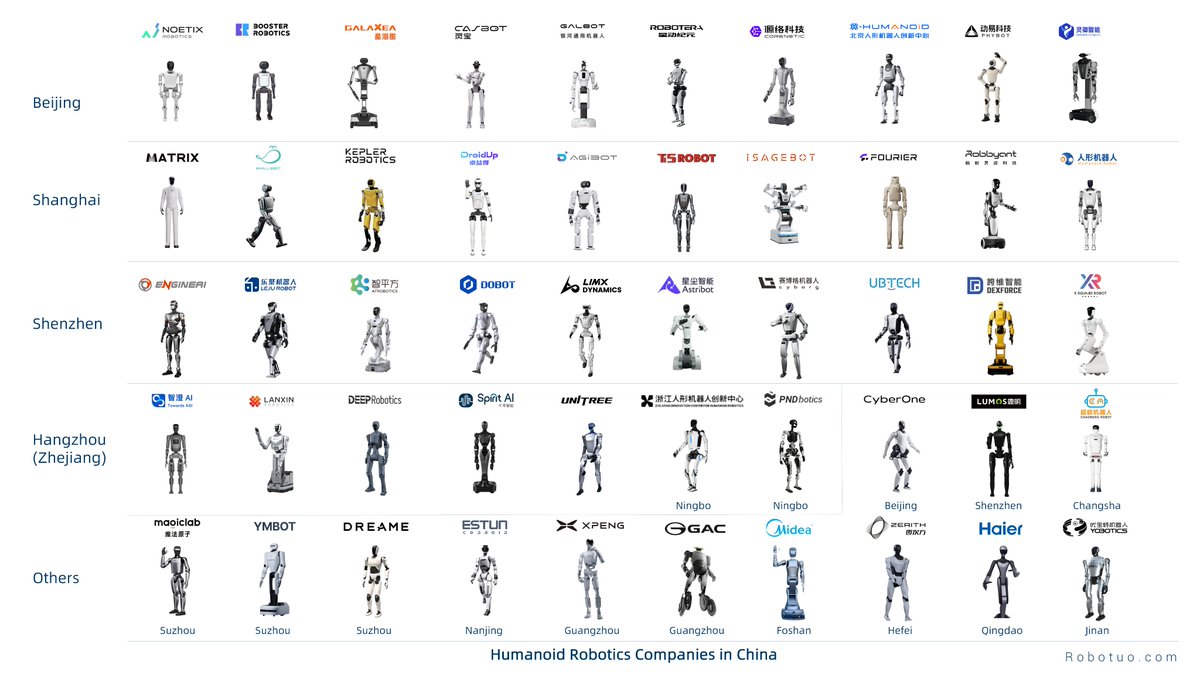

We now have humanoid robot maps for China’s four major cities: Beijing, Shanghai, Shenzhen and Hangzhou. It might feel overwhelming to see so many humanoids, but it’s exciting to see these robotics companies working hard to push humanity forward.

Grokipedia is coming...

OUT TODAY: new threat report from @OpenAI’s investigators, with disruptions of: Surveillance; Covert influence ops; Deceptive employment scheme; Cyber activity; Scams openai.com/global-affairs…