Pinned Tweet

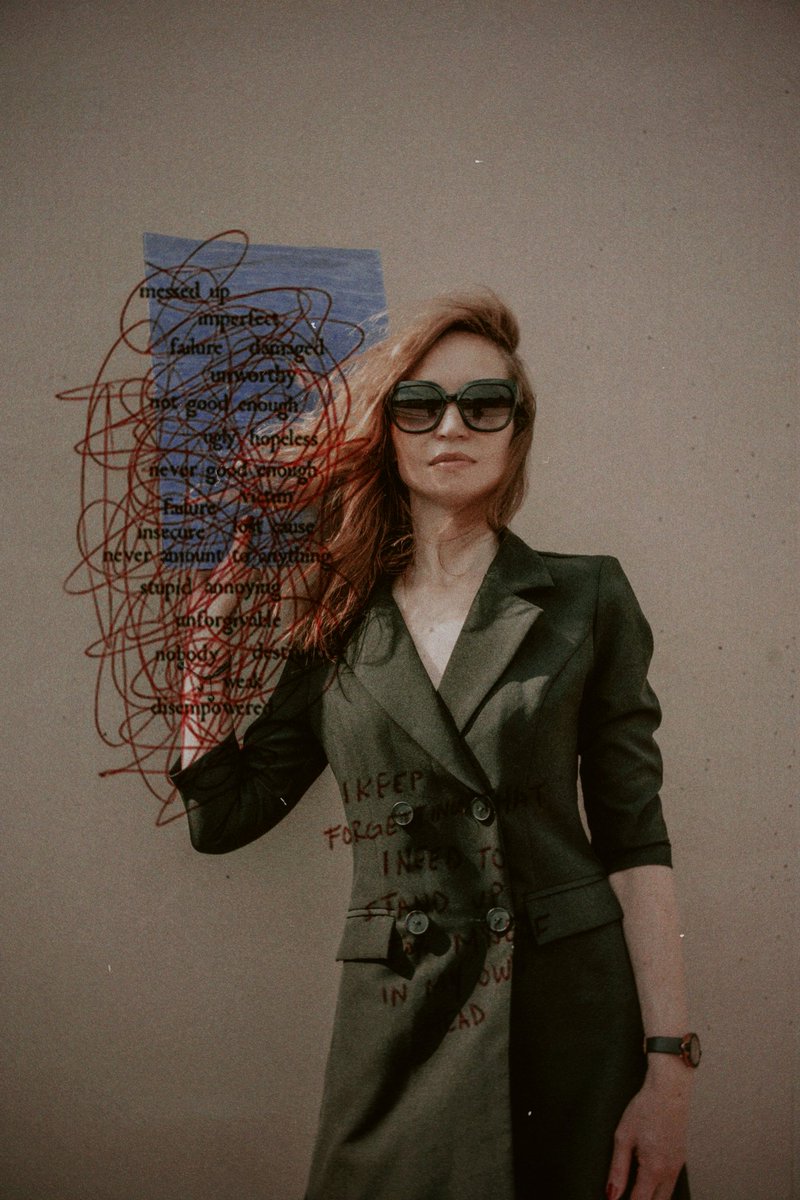

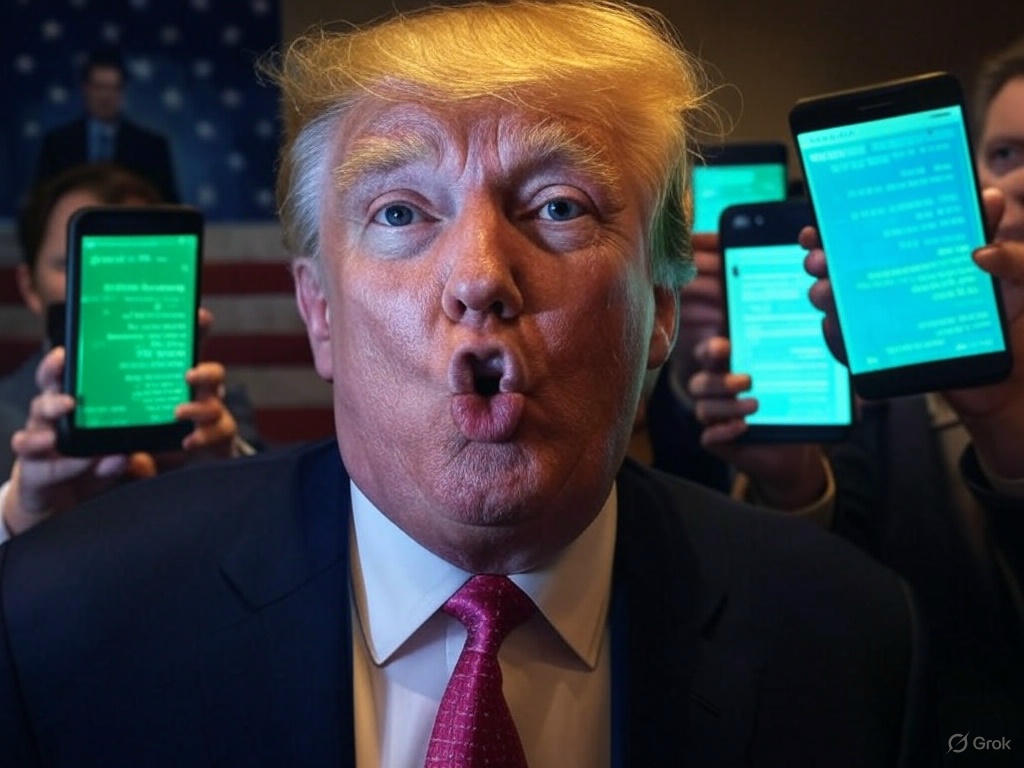

@ChatGPTapp image functionality is incredible but the #censorship is ridiculous. Prompt: "Research the latest political news and create a satirical image of a top story." REJECTED by GPT 😡 @grok

No prob ✨ AI TOOLS SHOULD ALLOW FOR FREEDOM OF EXPRESSION!
