Rogerio Feris retweeted

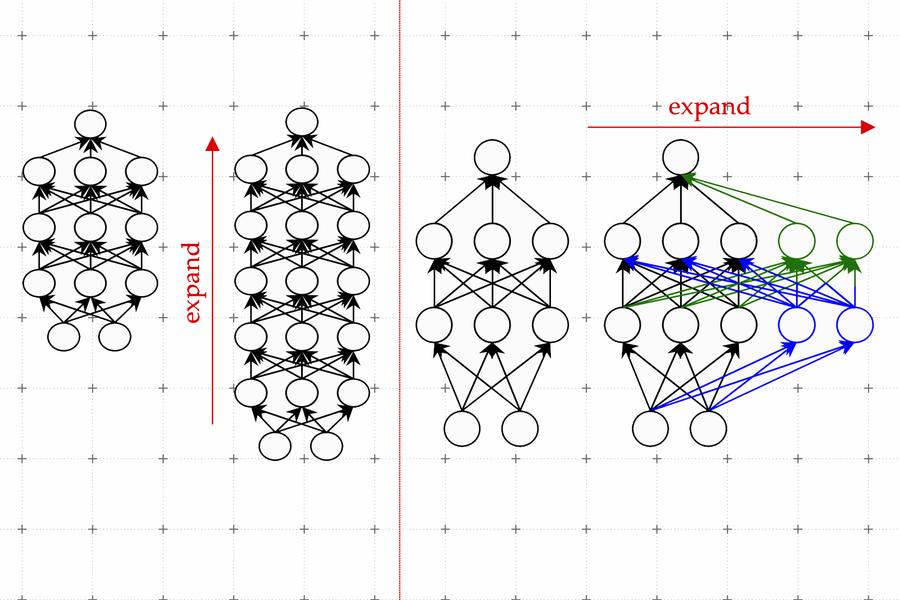

CDS Silver Professor Julia Kempe (@KempeLab) is co-organizing the ICLR 2026 Workshop on New Frontiers in Associative Memory.

The workshop is accepting submissions on associative memory until Feb 14.

nfam2026.amemory.net