RomainB

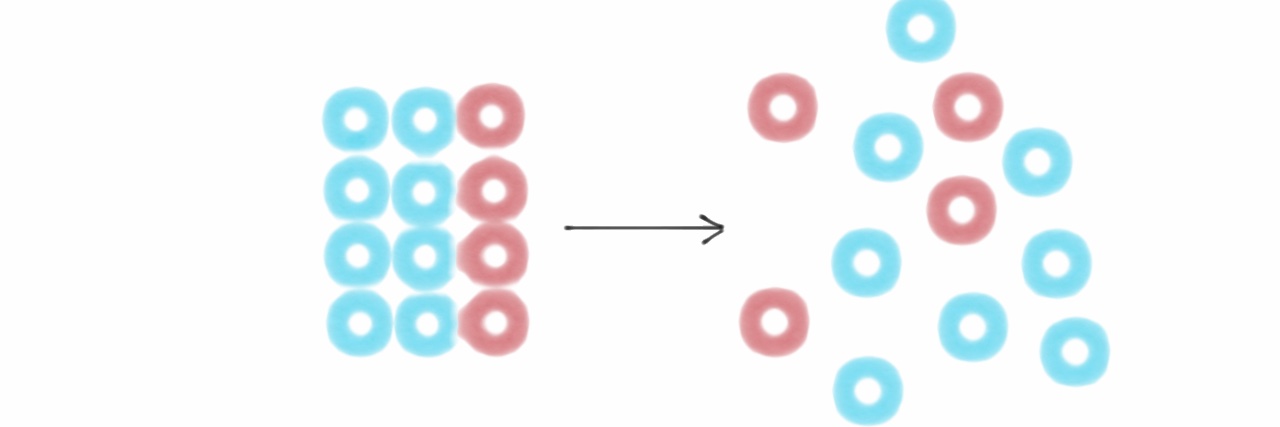

We showed a model colored squares for a few hours. It learned to use a computer better than models trained on thousands of real screenshots.

AI denialists are sure sounding a lot like Flat Earthers right now. "AI isn't intelligent." "It's a prediction machine." Yet I can give an AI engine 100,000 lines of code and ask it to tell me what that code does. In plain English, it describes all the functionality of code it has NEVER seen before. That's not prediction. That's intelligence.

Stupidly late realization on why LLMs are so good at reasoning: human reasoning capability is bottlenecked by language! It's not that languages are good at reasoning; reasoning ended up being defined by language first and foremost. The medium truly shapes the message.

Dario Amodei says pre-training sits somewhere between learning and evolution. Humans inherit priors shaped over millions of years. LLMs start as random weights and distill trillions of tokens into those priors. We describe them using human learning metaphors. But the analogy only goes so far.

🚨 Official: The RB22 is here.

Introducing Cowork: Claude Code for the rest of your work. Cowork lets you complete non-technical tasks much like how developers use Claude Code.