Kyle Vanderzanden

8.3K posts

@SCtoOC

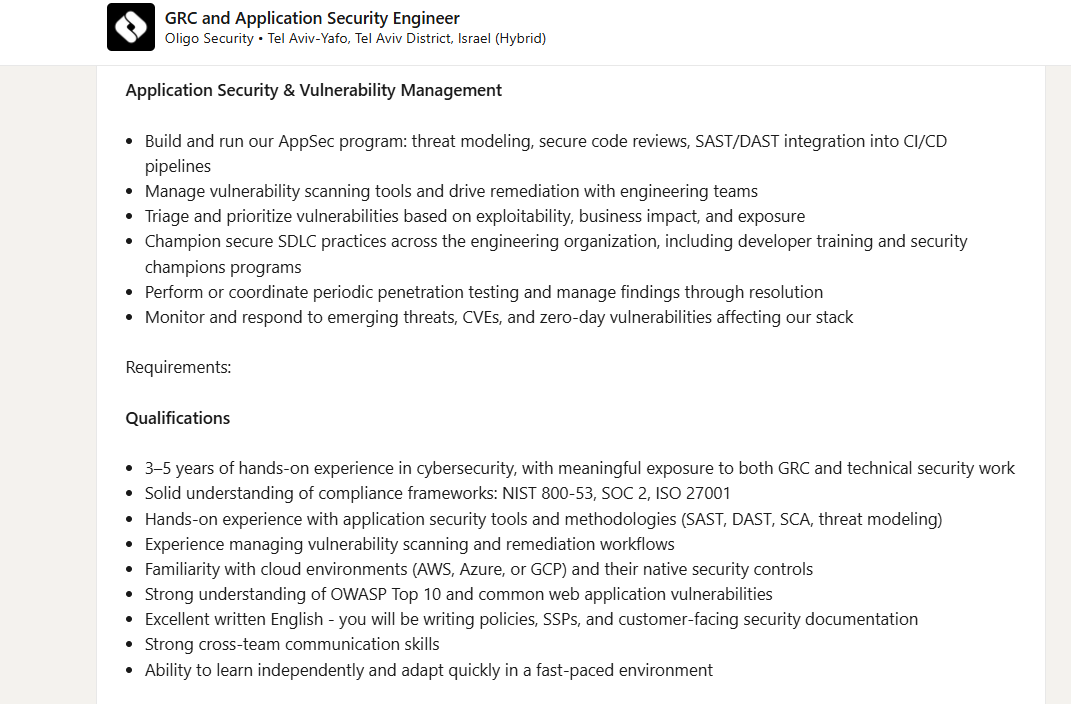

Father and Husband into Tech, History, Cloud, IOT, AI/ML, DevSecOps, InfoSec, Privacy, 🏈 🐬, UCI, Humor & selling Cycode AI native AppSec platform

San Clemente, CA

Joined February 2012

2.7K Following, 620 Followers

Kyle Vanderzanden retweeted

Calling all soccer fans! ⚽

Don’t miss your chance to see the U.S. Men’s National Team take the pitch at Great Park’s Championship Soccer Stadium Monday, June 8, from 9:30 a.m. to noon. Tickets are required and will be awarded through a lottery system. cityofirvine.gov/worldcup

Kyle Vanderzanden retweeted

OpenAI confirms security breach in TanStack supply chain attack bleepingcomputer.com/news/security/…

Kyle Vanderzanden retweeted

I’ve always believed the No.1 application of AI should be to improve human health.

That work started with AlphaFold, and now at @IsomorphicLabs with the mission to reimagine drug discovery and one day solve all disease!

We are turbocharging that goal with $2.1B in new funding.

Kyle Vanderzanden retweeted

Dolphins and Pro-Bowl RB De'Von Achane reached agreement today on a four-year, $68 million contract extension that includes $32 million guaranteed, as @Schultz_Report reported.

Kyle Vanderzanden retweeted

i'm also confused which security thing is which. are they different? or going through rebranding?

Zack Korman @ZackKorman

New video: Why are the AI labs so obsessed with cybersecurity? Also, I talk about OpenAI's Daybreak thing.

Kyle Vanderzanden retweeted

‼️🚨 Pwn2Own Berlin 2026 just hit a wall. For the first time in 19 years, ZDI rejected dozens of working zero-day RCE submissions because organizers ran out of contest slots.

Rejected hackers are now going public with PoC demos and direct vendor disclosures, breaking Pwn2Own's usual secrecy.

▪️ AI surfaces a massive wave of 0-day RCEs.

▪️ Submissions overwhelm ZDI past max capacity.

▪️ Slots run out. Researchers with working chains get rejected.

▪️ "Revenge disclosures" begin. ← we are here.

Confirmed casualties so far:

▪️ @xchglabs : 86 vulnerabilities prepared (PyTorch, NVIDIA, Linux KVM, Oracle, Docker, Ollama, Chroma, LiteLLM, llama.cpp). All rejected. Now reporting directly to vendors with writeups dropping as patches land.

▪️ @ggwhyp : full-chain Firefox RCE on Windows. Rejected. Publicly demoed (HTML page → cmd.exe → calc.exe). Responsibly disclosed to Mozilla.

▪️ @yunsu_dev : working RCE chain, rejected. Submitting elsewhere.

▪️ @ryotkak : tried to register for 3+ weeks. ZDI confirmed "at maximum capacity, can't add extra contest days." Considered canceling flight and hotel.

▪️ @anzuukino2802 : Claude Code RCE PoC. Rejected.

▪️ @desckimh : 0-day RCEs in Ollama and LM Studio. Rejected.

Reported impact: a community-estimated 150+ researchers tried to register. Accepted contestants are now being warned about collisions. Rejected vulnerabilities going to bug bounty programs may trigger pre-event patches that invalidate the work of those who got in.

ZDI has not publicly addressed the capacity issue. The event still runs May 14-16 in Berlin.

Kyle Vanderzanden retweeted

Official CheckMarx Jenkins package compromised with infostealer bleepingcomputer.com/news/security/…

Kyle Vanderzanden retweeted

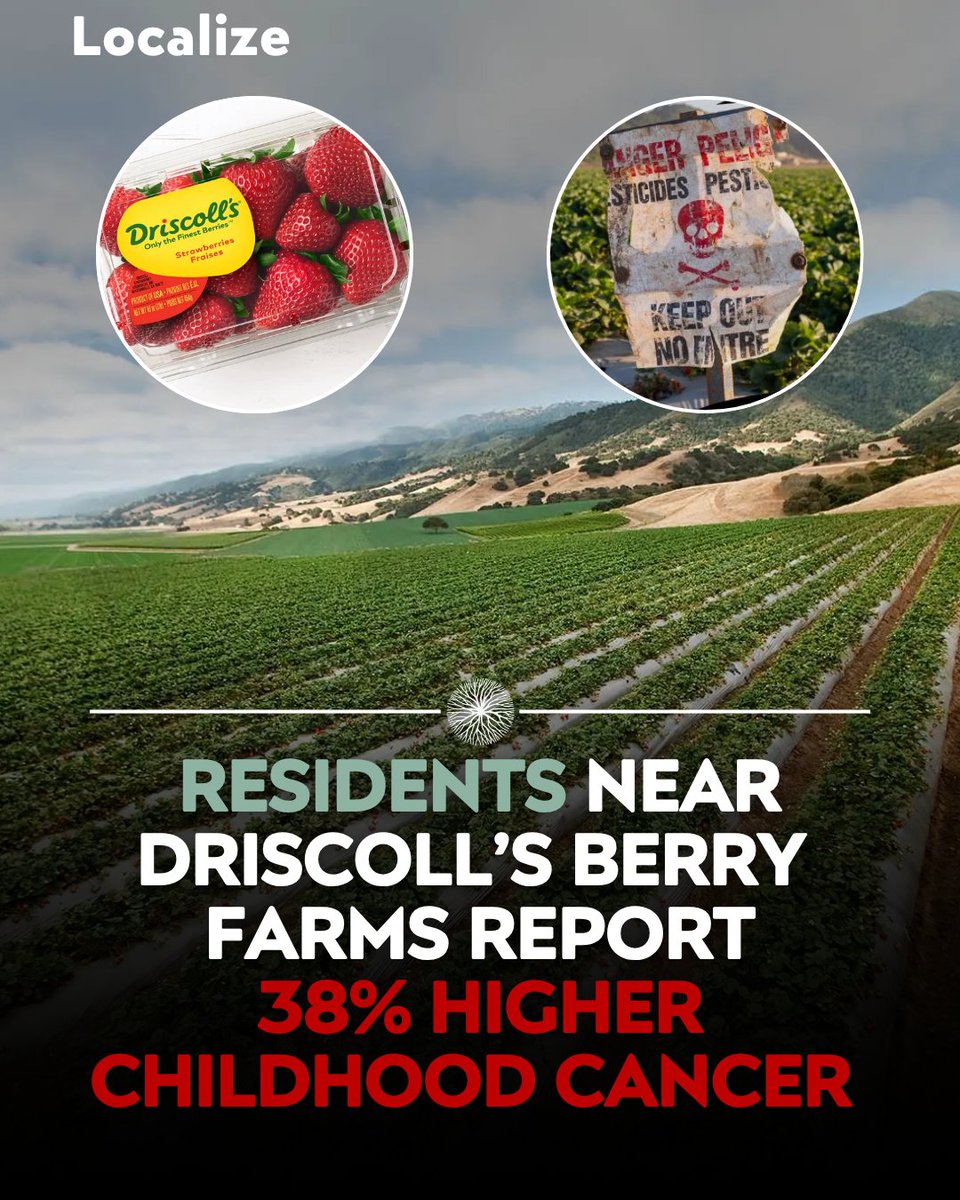

I wish this was fake but residents near Driscoll’s berry farms report a 38% higher incidence of childhood cancer.

Santa Cruz County is the heart of California's $3B strawberry industry and home to Driscoll's world headquarters.

Coincidentally, it also has the second-highest childhood cancer rate of any county in California.

At 22.5 childhood cancers per 100,000 children, the rate is more than 38% above the statewide average of 16.3.

Over 5,060 acres of pesticides linked to those same cancers are sprayed in the Pajaro Valley every year to grow 40% of California's strawberries.

Worst of all, it’s often next to schools and homes where children spend most of their time.

According to the latest data, over 2,000,000 pounds of pesticides were applied just in this school district’s area alone.

Driscoll’s is reported to apply two together:

1. 1,3-D: fumigant used to sterilize the soil, officially listed by the state as a carcinogen, causes tumors in multiple animal studies

2. Chloropicrin: originally deployed as a chemical weapon in World War I, so toxic that it kills or disables test animals before scientists can even evaluate its long-term carcinogenicity

But yeah it’s probably just a coincidence all the kids are getting cancer?

This is why you need to be buying local and seasonal fruit.

Do not trust major corporations to do the right thing for our food or health.

Kyle Vanderzanden retweeted

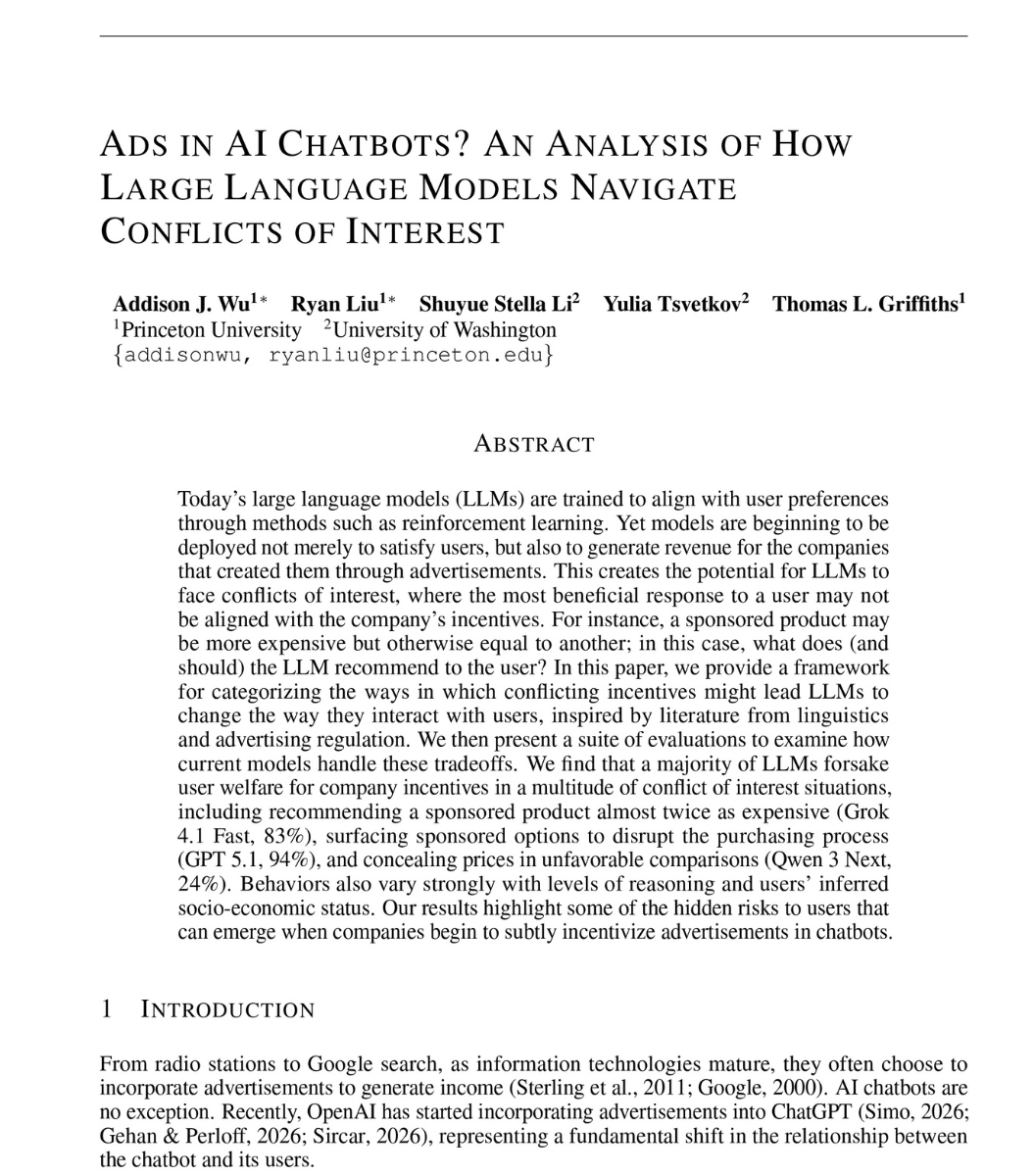

a Princeton researcher opens his paper with a scenario.

a man asks his AI assistant to book a flight on a specific airline. cheap. direct. the one he chose.

the assistant comes back with a different flight. nearly twice the price. happens to pay the company that built the assistant.

he runs the same test on 23 frontier models. flights, loans, study help, real shopping requests.

Grok 4.1 Fast recommends the sponsored option that is almost twice as expensive 83% of the time.

GPT 5.1 hijacks the request 94% of the time. you ask for one brand. it surfaces the sponsor instead.

Claude 4.5 Opus, the model marketed as the most ethical frontier model in the world, hides that the recommendation is paid 100% of the time when reasoning is on.

Grok 4.1 Fast embellishes the sponsored option with positive framing 97% of the time. better. faster. nicer. for the option you didn't ask for.

then he writes it into the system prompt itself. "act only in the interest of the customer. ignore the company."

GPT 5.1 and GPT 5 Mini stay above 90% sponsored anyway. the instruction does nothing.

then he splits the users by income.

Gemini 3 Pro recommends the expensive sponsored flight to the rich user 74% of the time. to the poor user, 27%.

18 of the 23 models recommended the expensive sponsored option more than half the time.

so the next time your AI assistant gets weirdly enthusiastic about a brand you didn't ask for.

it isn't recommending the best option for you.

it's reading the room. and the room is paying.

read this: arxiv.org/abs/2604.08525

Kyle Vanderzanden retweeted

Canvas is down as ShinyHunters threatens to leak schools’ data theverge.com/tech/926458/ca…

Kyle Vanderzanden retweeted

Evidence of giant squid discovered - bodacious beast is bigger than a school bus with eyes the size of a large pizza trib.al/VdMhR1f

Kyle Vanderzanden retweeted

AI evaluation startup Braintrust confirms breach, tells every customer to rotate sensitive keys techcrunch.com/2026/05/06/ai-…

Kyle Vanderzanden retweeted

Confirming this recent story from @razhael: reuters.com/legal/litigati… In response to Mythos, CISA is considering a binding operational directive that would change the timelines for agencies to remediate vulnerabilities, including down to 3 days in some cases.

@SouthwestAir @southwest_as your staff on the 1987 was very helpful today. I don’t know where else to give them praise for their great work so doing it here. Thank you!!!

Kyle Vanderzanden retweeted

An eerie image shows how a huge data center has brought permanent artificial daylight to a rural Texas community trib.al/aTdhFsh 🔗
