Samanvay Vajpayee

44 posts

@SamVajpayee

ML in Healthcare PhD-ing @uoftcompsci @vectorinst @UHN | BS Comp Eng @waterlooENG | ML @UOHI | Board, DIAS Group | Also comment on AI Safety, AI Policy & Tennis

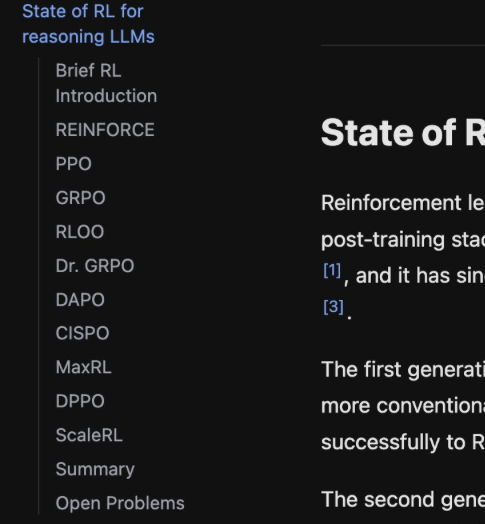

Finally finished! If you're interested in an overview of recent methods in reinforcement learning for reasoning LLMs, check out this blog post: aweers.de/blog/2026/rl-f… It summarizes ten methods, tries to highlight differences and trends, and has a collection of open problems

𝐀𝐬 𝐩𝐚𝐫𝐭 𝐨𝐟 𝐭𝐡𝐞 𝐨𝐧-𝐠𝐨𝐢𝐧𝐠 𝐀𝐈 𝐏𝐨𝐥𝐢𝐜𝐲 𝐖𝐡𝐢𝐭𝐞 𝐏𝐚𝐩𝐞𝐫 𝐒𝐞𝐫𝐢𝐞𝐬, 𝐭𝐡𝐞 𝐎𝐟𝐟𝐢𝐜𝐞 𝐨𝐟 𝐭𝐡𝐞 𝐏𝐫𝐢𝐧𝐜𝐢𝐩𝐚𝐥 𝐒𝐜𝐢𝐞𝐧𝐭𝐢𝐟𝐢𝐜 𝐀𝐝𝐯𝐢𝐬𝐞𝐫 𝐭𝐨 𝐭𝐡𝐞 𝐆𝐨𝐯𝐞𝐫𝐧𝐦𝐞𝐧𝐭 𝐨𝐟 𝐈𝐧𝐝𝐢𝐚 𝐫𝐞𝐥𝐞𝐚𝐬𝐞𝐬 𝐚 𝐰𝐡𝐢𝐭𝐞 𝐩𝐚𝐩𝐞𝐫 𝐨𝐧 “𝐀𝐝𝐯𝐚𝐧𝐜𝐢𝐧𝐠 𝐈𝐧𝐝𝐢𝐠𝐞𝐧𝐨𝐮𝐬 𝐅𝐨𝐮𝐧𝐝𝐚𝐭𝐢𝐨𝐧 𝐌𝐨𝐝𝐞𝐥𝐬”. The versatility of Foundation Models makes them a critical layer of today’s AI ecosystem and a key area for innovation in India. Therefore, developing indigenous foundation models is a strategic priority. India’s objective is to harness foundation models for inclusive growth and public good, while ensuring they are governed in a manner consistent with the country’s values, legal framework, and security interests. This white paper provides an understanding of India’s approach to advancing indigenous foundation models through public–private collaboration and to governing these systems in ways that support trust, accountability, and responsible adoption. The White Paper also provides details on India’s approach, which is centred on building indigenous capability across the foundation-model stack. Rather than relying on a single model, India is developing an ecosystem that combines (i) shared compute access, (ii) India-centric data and model repositories, and (iii) multiple model-building efforts across text, speech, multimodal, and sectoral systems. Read the White Paper here: psa.gov.in/CMS/web/sites/…

Our study of AMIE at @BIDMC_Medicine is out! You can read about what we did in posts from my co-authors (including the Google post below). But I wanted to talk about some background for this study, and what I think are the most interesting findings. 🧵⬇️ x.com/GoogleResearch…

This is wild. theaustralian.com.au/business/techn…

New York wants to ban AI that outscores doctors on medical exams. Over 900,000 New Yorkers have no insurance. 92% of low-income legal problems go unaddressed. Anti-AI NY bill S7263 isn't consumer protection. It's cartel protection. gli.st/ypknnhdn

New heart disease guidelines suggest statins as early as age 30 statnews.com/2026/03/13/hea…

@sayashk @random_walker They only have PhD students to do work? I would have thought that training successors would be important in and of itself 🫠

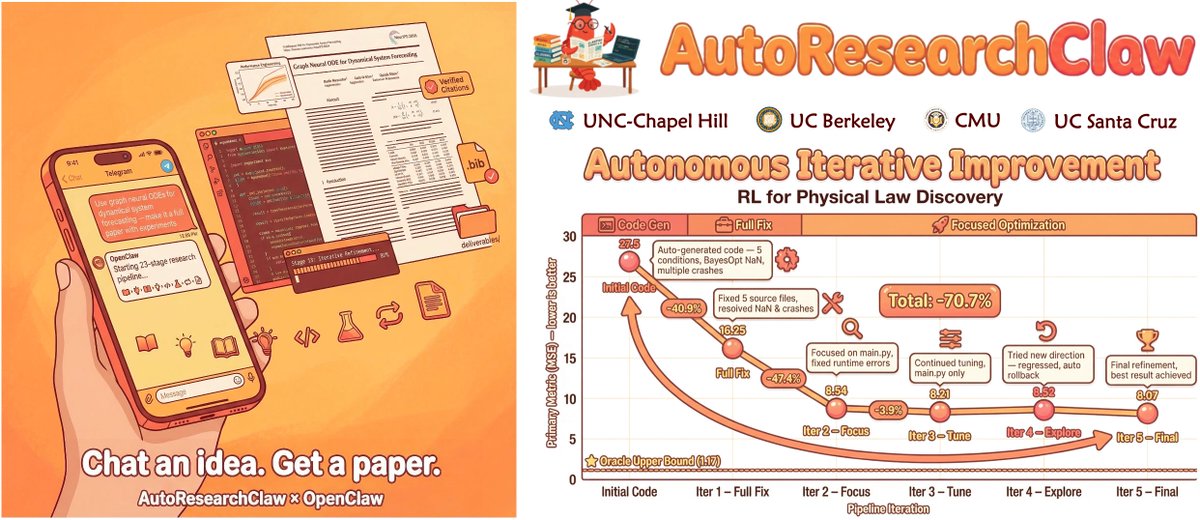

In the last few months, I've spoken to many CS professors who asked me if we even need CS PhD students anymore. Now that we have coding agents, can't professors work directly with agents? My view is that equipping PhD students with coding agents will allow them to do work that is orders of magnitude more impressive than they otherwise could. And they can be *accountable* for their outcomes in a way agents can't (yet). For example, who checks that the agent's outputs are correct? Who is responsible for mistakes or errors?