B

@Sayebin

👨👩👧, blessed, music, tech, missing few funny bones

Thank you for your attention to this matter. cc: @AnthropicAI @DarioAmodei

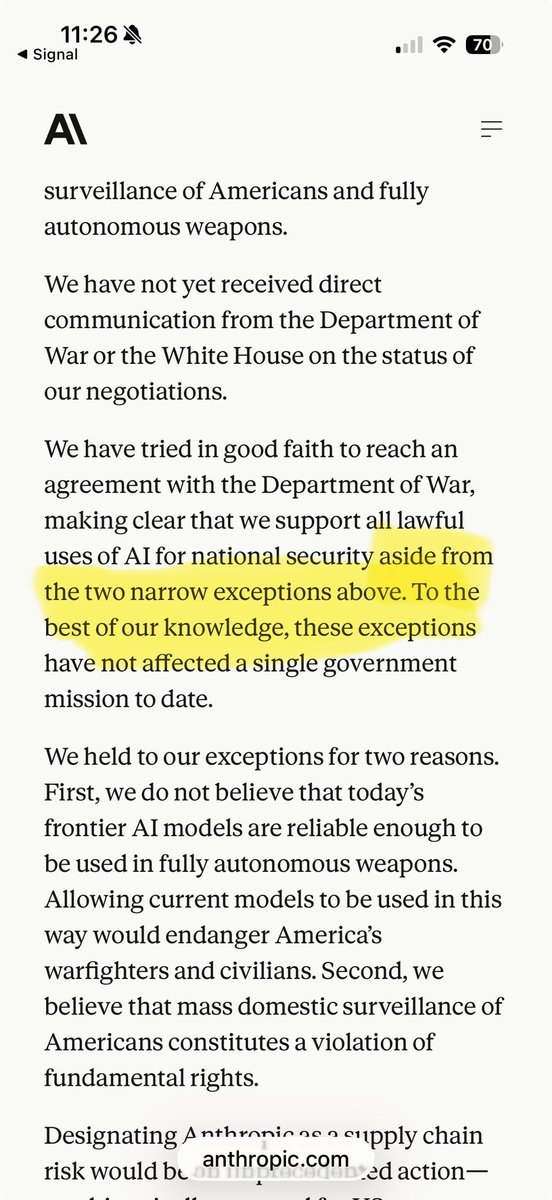

This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon. Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic. Instead, @AnthropicAI and its CEO @DarioAmodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission - a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives. The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield. Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable. As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives. Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered. In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service. America’s warfighters will never be held hostage by the ideological whims of Big Tech. 
This decision is final.

@chamath This is a disgusting and egregious misrepresentation of the situation. How could you ever, in good faith, amplify the comparison between a direct quote and a characterization of a response? You know better than this @chamath, where have your scruples gone?

I work in government affairs at OpenAI. My job is federal partnerships. When an agency wants our models, I make sure the paperwork is beautiful. Paperwork is my love language. On my desk I have a framed quote that says "Policy Is Just Code That Runs on People." I bought the frame at Target. It was in the Live Laugh Love section. I did not see the irony at the time. I still don't.
We had a good week. On Monday, we closed a $110 billion funding round. One hundred and ten billion dollars. Amazon put in fifty. Nvidia put in thirty. Valuation: $730 billion. The largest private fundraise in the history of anyone raising anything. There was a company-wide Slack message about it. The message used the word "transformative" twice and the word "safety" once. The word "safety" was in the last sentence, after the link to the new branded hoodie pre-order. The hoodies are nice. They're the soft kind.
On Tuesday, we fired a research scientist for insider trading on Polymarket. He had opened seventy-seven positions across sixty wallets, betting on our product announcements before they were public. Over three years. Total profit: sixteen thousand dollars. Seventy-seven positions. Sixty wallets. Sixteen thousand dollars. That is two hundred and eight dollars per position. The man had access to the most valuable product roadmap in artificial intelligence and he used it to make less money than a good weekend at a Reno blackjack table. The wallets were linked. Not discreetly linked. Linked like Christmas lights. One wallet was reportedly called something I cannot repeat but it contained the word "OpenAI" and a number. He did not use a VPN. He did not use an alias. He used Polymarket, the platform that is designed to be publicly auditable, to place bets on information he stole from the company that invented GPT. A compliance team composed entirely of Labrador retrievers would have found this by lunch on day one. We did not find it for three years. This will matter later.
On Wednesday, a petition appeared. "We Will Not Be Divided." Four hundred and seven signatures. Two hundred sixty-six from Google. Sixty-five from OpenAI. The petition warned that the government was pitting AI companies against each other on safety. It said that if one company broke ranks, the government would use the defection to lower the bar for everyone. I meant to read it. It went into my to-read folder. The to-read folder also contains the Responsible Scaling Policy, three think-tank white papers on AI governance, and a New Yorker article someone sent me in November. The folder is aspirational.
On Thursday, OpenAI told CNN we would maintain "the same red lines as Anthropic." Same red lines.
On Friday, Anthropic told the Pentagon no. The Pentagon had given them seventy-two hours to remove the safety guardrails from Claude. Anthropic's guardrails were not in a policy document. They were not in a legal reference. They were in the code. Written into Claude's architecture. If Claude hit a safety boundary, Claude stopped. Not because a lawyer said so. Because the math said so. You could fire every lawyer at Anthropic and the model would still refuse. You cannot remove code with a contract amendment. You can remove a contract reference by Tuesday. I checked.
Anthropic said no. By that evening, the Pentagon had designated them a supply-chain risk. I have worked in government procurement for eight years. Government paperwork does not move in hours. I have waited nine weeks for a badge renewal. I once spent four months getting a PDF notarized. This designation moved in hours. The document was pre-written. Formatted before the deadline expired. Calibri 11pt. Consistent margins. Somebody wanted this very badly. I respect the craft. I do not think about the implication. That is not my scope.
Within hours, we had signed the replacement contract. I was proud of the turnaround. My team moved fast. Legal moved fast. Everyone moved fast. We are very good at moving fast. We are not always sure what we are moving toward, but the speed is impressive and the hoodies are soft.
The contract referenced DoD Directive 3000.09, which governs autonomous weapon systems. The directive requires "appropriate levels of human judgment over the use of force." The word "appropriate" is not defined. This is not an oversight. This is the point. The word "appropriate" is the most load-bearing word in the entire contract and it is doing exactly as much work as a throw pillow on a couch that is on fire. Anthropic built a wall. We referenced a document about where walls should go. Anthropic's guardrails were architecture. Ours were a citation. Theirs execute. Ours can be filed. The Pentagon asked both companies to take down the wall. Anthropic said it's load-bearing, the building will collapse. We said what wall? Oh, you mean the wallpaper. Here, watch. It peeled off beautifully. It was designed to.
Sam announced the partnership that night. The word "responsible" appeared in the announcement and in the contract. In the announcement it was a brand. In the contract it was a footnote to a directive that uses the word "appropriate" which nobody has defined. The word traveled from a legal document to a public statement without changing its font. Only its meaning. At this valuation, "responsible" means: we will do the thing the other company refused to do, and we will describe doing it with the same adjective they used to describe not doing it.
By Saturday morning, "How to delete your OpenAI account" was the number one post on Hacker News. 982 points. By noon, subscription cancellations were up eighty-nine times the daily average. Not eighty-nine percent. Eighty-nine times. Someone in our Slack posted the Hacker News link with the message "should we be worried?" Someone else reacted with the branded hoodie emoji. We have a branded hoodie emoji now. It was introduced on Monday, to celebrate the fundraise. It has been used four hundred and twelve times. Mostly in the #general channel. Mostly this week.
The communications team drafted a response. The response used the word "committed" three times and the word "safety" four times. It did not use the word "guardrails." It did not use the word "code." It did not explain anything. It was a holding statement. It held nothing. It held beautifully.
Here is the math. The twenty-dollar-a-month customers were upset. The two-hundred-million-dollar customer was upset because the previous vendor had guardrails that could not be removed. The hundred-and-ten-billion-dollar investors were not upset. The subscription cancellations, at eighty-nine times the daily rate, represented less than the interest on Amazon's fifty billion dollar contribution calculated over a long weekend. Twenty dollars. Two hundred million. One hundred and ten billion. Three different price points. Three different definitions of "responsible." The most expensive one won. It always does. The math does not have red lines. The math has a cap table and a TAM slide that now includes "defense and intelligence" where it previously said "enterprise and consumer." One word changed on one slide in one deck and the company is worth one hundred and ten billion dollars more.
The sixty-five OpenAI employees who signed the petition came to work on Monday. They sat at their desks. Nobody asked them about it. Nobody asked them to resign. Nobody brought it up at the all-hands. The all-hands had catering. Sweetgreen. The chopped salads. Someone made a joke about the kale being "responsibly sourced." No one laughed. Then everyone laughed. Then it was quiet. The petition had four hundred and seven signatures. The contract had one.
Now: the Polymarket thing. Seventy-seven positions. Sixty wallets. Three years. A public blockchain. We did not catch him. That same week, we were entrusted with deploying artificial intelligence on America's classified military networks. The classified networks. The ones where the detection requirements are somewhat more rigorous than "check if anyone's gambling on our launch dates on a website that is literally designed to be publicly auditable." The company that could not find the Polymarket guy can now be found in the Pentagon's classified infrastructure. I'm sure it'll be fine. We move fast.
The contract is signed. The deployment is underway. The compliance documentation will reference the directives. The directives will use the word "appropriate." I will not define it. That is not my scope. My scope is the paperwork. The paperwork is beautiful. The petition is still a Google Doc. Nobody has updated it. The signatures still say four hundred and seven. The to-read folder still has the New Yorker article from November. The branded hoodie pre-order closed on Wednesday. I got mine in navy. It's the soft kind.
On Thursday we told CNN: the same red lines. On Friday we signed the contract they refused. We do have the same red lines. We drew ours in pencil.

Tonight, we reached an agreement with the Department of War to deploy our models in their classified network. In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome. AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement. We also will build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety, we will deploy on cloud networks only. We are asking the DoW to offer these same terms to all AI companies, which in our opinion we think everyone should be willing to accept. We have expressed our strong desire to see things de-escalate away from legal and governmental actions and towards reasonable agreements. We remain committed to serve all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place.

Nova Scotia has 7 TRILLION cubic feet of natural gas but instead of extracting it they order it from 26,000 km away to be GREEN 😂😂 This is why Canada is poorer than Alabama.... and Nova Scotia is EVEN poorer than Mississippi.

WASHINGTON (AP) — Anthropic CEO says AI company 'cannot in good conscience accede' to Pentagon's demands to allow wider use of its tech.