Say

JUST IN: 🇨🇳 Chinese court rules companies cannot legally fire employees simply to replace them with cost-saving artificial intelligence.

Qwen 27b series is unironically the best release of 2026

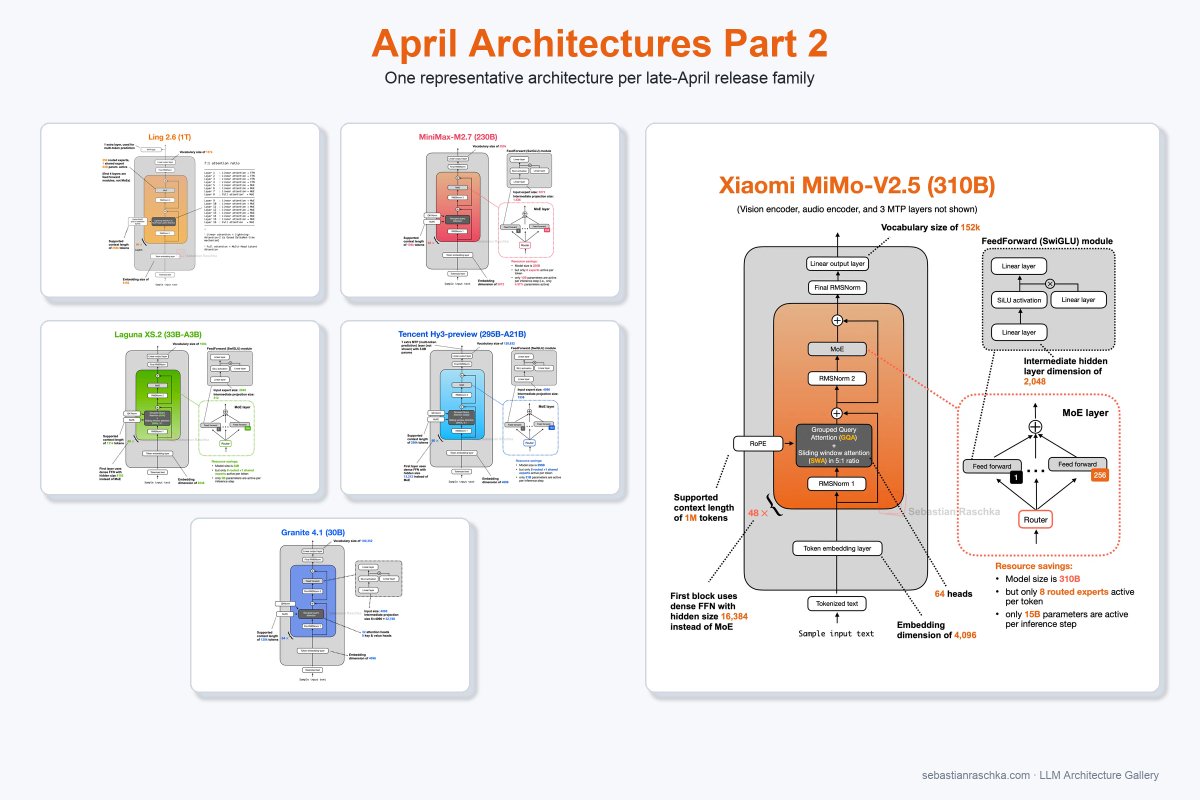

Xiaomi MiMo-V2.5 is now officially open-sourced! MIT License, supporting commercial deployment, continued training, and fine-tuning, with no additional authorization required.

Two models, both supporting a 1M-token context window:
• MiMo-V2.5-Pro: built for complex agent and coding tasks, ranking No.1 among open-source models on GDPVal-AA and ClawEval
• MiMo-V2.5: a native omni-modal model with strong agent capabilities

A model's value isn't measured by rankings alone; it's measured by the problems it solves. Let's build with MiMo now!

🤗 Weights: huggingface.co/collections/Xi…
📄 Blog: mimo.xiaomi.com/index#blog

Anthropic CEO (Dario Amodei): "Coding is going away first, then all of software engineering." What do you think about this?

Qwen3.6 GGUF Evaluations

For the 27B: Q2_K_XL is surprisingly recommendable. IQ3_XXS performs very similarly, uses only +0.2 GB, and generates significantly fewer tokens. If you are memory-tight, pick this one. Otherwise, if you can spare +2.5 GB, use Q3_K_XL: (almost) the same accuracy and token efficiency as the original.

All the results, also for the 35B, here: kaitchup.substack.com/p/summary-of-q…

More results are coming, probably Monday, covering other GGUF providers and some abliterated models.

✨ DeepSeek-V4 is here — a million-token context, 1.6T parameter powerhouse optimized for agentic workflows. Out of the box, on DeepSeek-V4-Pro, NVIDIA Blackwell Ultra delivers over 150 TPS/user interactivity for agentic workflows. And we’re just getting started. Expect these performance figures to climb higher as we implement Dynamo, NVFP4, and advanced parallelization techniques. Start building today with @lmsysorg and @vllm_project

Real slop has never been tried.

> be chinese ai labs
> while claude and openai are in cold war
> kimi dropped k2.6 using deepseek's v3 architecture
> the same week deepseek drops v4 using kimi's muon optimizer
> 1.6 trillion parameters & 1M context
> both match or beat closed models on benchmarks while being 8x cheaper
> both build on each other's breakthroughs
> keep shipping frontier LLMs with far fewer or nerfed NVIDIA GPUs
> and keep them 100% open sourced

the real battle is not between models, it's open source vs closed.