Som Jaiswal

128 posts

Som Jaiswal

@SomJaiswalAI

Building @helping_ai | Humanizing Artificial Intelligence

Katılım Nisan 2025

82 Takip Edilen16 Takipçiler

The Galgotia fiasco is the inevitable end result of what I said about education a while back. When institutions prioritize optics, money, and rote memorization over real learning and critical thinking, failure isn’t an accident - it’s the outcome. m.economictimes.com/magazines/pana…

English

@sabeer Those who will control infra will rule the industry for sure :)

English

Everyone is debating crypto prices.

They’re missing the real shift.

AI agents already write code, negotiate, and operate 24/7 - yet they can’t transact.

No bank accounts. No fiat. No regulated money.

At the same time, 2B+ humans are locked out of digital value systems.

The future isn’t another token.

It’s infrastructure.

English

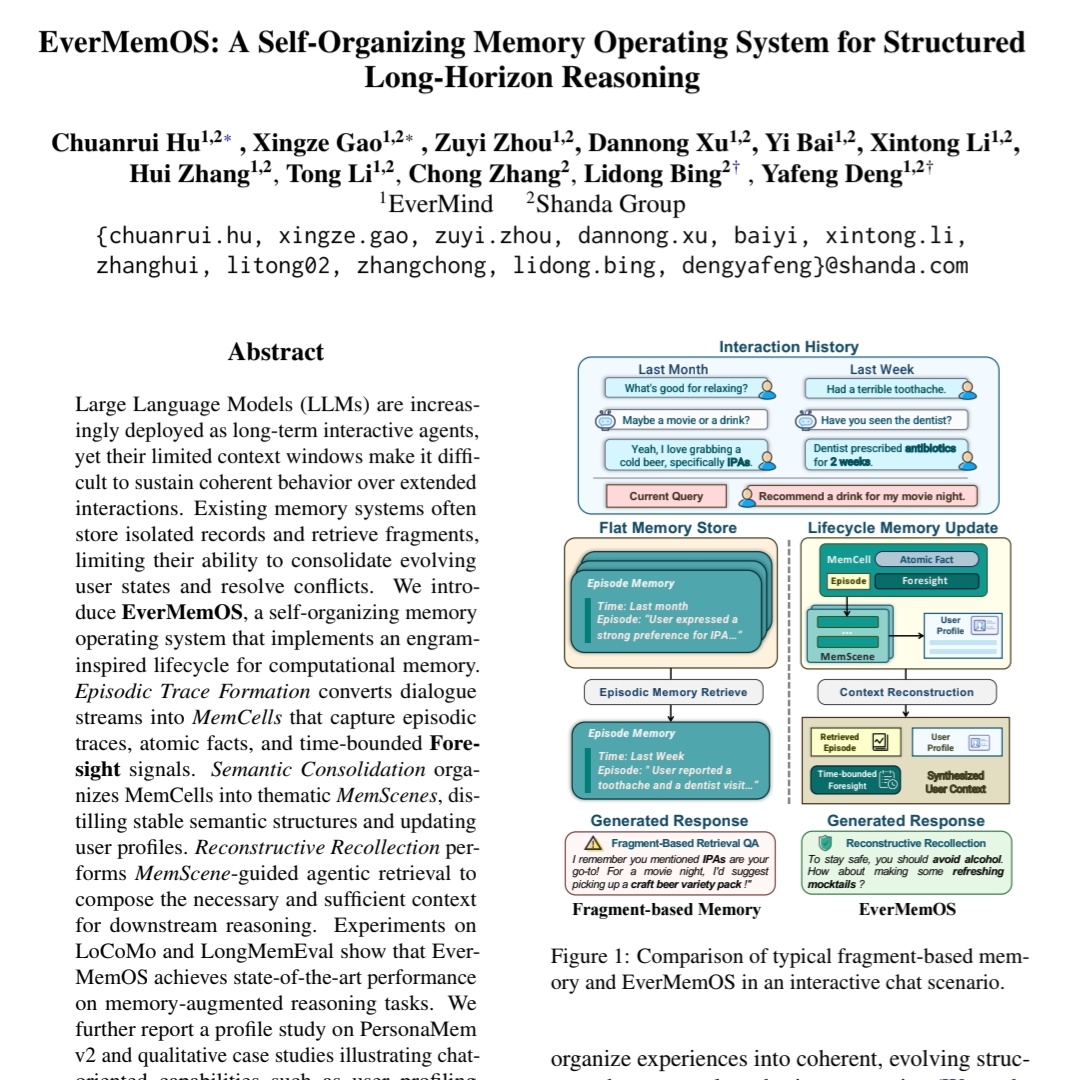

Read here : [2601.05184v1] Observations and Remedies for Large Language Model Bias in Self-Consuming Performative Loop share.google/JhJQ8vf4ZjrFBK…

English

Just read a nice paper on LLM bias and self-consuming training loops. Very relevant for anyone working on synthetic data and fairness.

At @helping_ai, we’re focused on building trustworthy AI, and research like this really matters @ucsc

English

@Yuchenj_UW The difference is clear one is chasing the dominance over all stuff and other is chasing the exact enterprise use case

English

OpenAI and Anthropic have opposite cultures.

OpenAI runs like a modern Bell Labs. 2-3 researchers spin up projects like GPT & Sora, then turn them into products. Maximal ambition, from each kind of model to robotics to AI device.

Anthropic is brutally focused. They believe coding is the path to AGI. Everything else is noise. No image models. No video models. No vagueposts.

It will be fascinating to see which one wins.

English

@AndrewYNg @AndrewYNg True! If a system can’t survive onboarding, feedback, and real work for a week, calling it AGI is just marketing. A work-based, adversarial, non-prepublished test is the right bar. Benchmarks should chase reality, not the other way around.

English

Happy 2026! Will this be the year we finally achieve AGI? I’d like to propose a new version of the Turing Test, which I’ll call the Turing-AGI Test, to see if we’ve achieved this. I’ll explain in a moment why having a new test is important.

The public thinks achieving AGI means computers will be as intelligent as people and be able to do most or all knowledge work. I’d like to propose a new test. The test subject — either a computer or a skilled professional human — is given access to a computer that has internet access and software such as a web browser and Zoom. The judge will design a multi-day experience for the test subject, mediated through the computer, to carry out work tasks. For example, an experience might consist of a period of training (say, as a call center operator), followed by being asked to carry out the task (taking calls), with ongoing feedback. This mirrors what a remote worker with a fully working computer (but no webcam) might be expected to do.

A computer passes the Turing-AGI Test if it can carry out the work task as well as a skilled human.

Most members of the public likely believe a real AGI system will pass this test. Surely, if computers are as intelligent as humans, they should be able to perform work tasks as well as a human one might hire. Thus, the Turing-AGI Test aligns with the popular notion of what AGI means.

Here’s why we need a new test: “AGI” has turned into a term of hype rather than a term with a precise meaning. A reasonable definition of AGI is AI that can do any intellectual task that a human can. When businesses hype up that they might achieve AGI within a few quarters, they usually try to justify these statements by setting a much lower bar. This mismatch in definitions is harmful because it makes people think AI is becoming more powerful than it actually is. I’m seeing this mislead everyone from high-school students (who avoid certain fields of study because they think it’s pointless with AGI’s imminent arrival) to CEOs (who are deciding what projects to invest in, sometimes assuming AI will be more capable in 1-2 years than any likely reality).

The original Turing Test, which required a computer to fool a human judge, via text chat, into being unable to distinguish it from a human, has been insufficient to indicate human-level intelligence. The Loebner Prize competition actually ran the Turing Test and found that being able to simulate human typing errors — perhaps even more than actually demonstrating intelligence — was needed to fool judges. A main goal of AI development today is to build systems that can do economically useful work, not fool judges. Thus a modified test that measures ability to do work would be more useful than a test that measures the ability to fool humans.

For almost all AI benchmarks today (such as GPQA, AIME, SWE-bench, etc.), a test set is determined in advance. This means AI teams end up at least indirectly tuning their models to the published test sets. Further, any fixed test set measures only one narrow sliver of intelligence. In contrast, in the Turing Test, judges are free to ask any question to probe the model as they please. This lets a judge test how “general” the knowledge of the computer or human really is. Similarly, in the Turing-AGI Test, the judge can design any experience — which is not revealed in advance to the AI (or human subject) being tested. This is a better way to measure generality of AI than a predetermined test set.

AI is on an amazing trajectory of progress. In previous decades, overhyped expectations led to AI winters, when disappointment about AI capabilities caused reductions in interest and funding, which picked up again when the field made more progress. One of the few things that could get in the way of AI’s tremendous momentum is unrealistic hype that creates an investment bubble, risking disappointment and a collapse of interest. To avoid this, we need to recalibrate society’s expectations on AI. A test will help.

If we run a Turing-AGI Test competition and every AI system falls short, that will be a good thing! By defusing hype around AGI and reducing the chance of a bubble, we will create a more reliable path to continued investment in AI. This will let us keep on driving forward real technological progress and building valuable applications — even ones that fall well short of AGI. And if this test sets a clear target that teams can aim toward to claim the mantle of achieving AGI, that would be wonderful, too. And we can be confident that if a company passes this test, they will have created more than just a marketing release — it will be something incredibly valuable.

[Original text: deeplearning.ai/the-batch/issu… ]

English

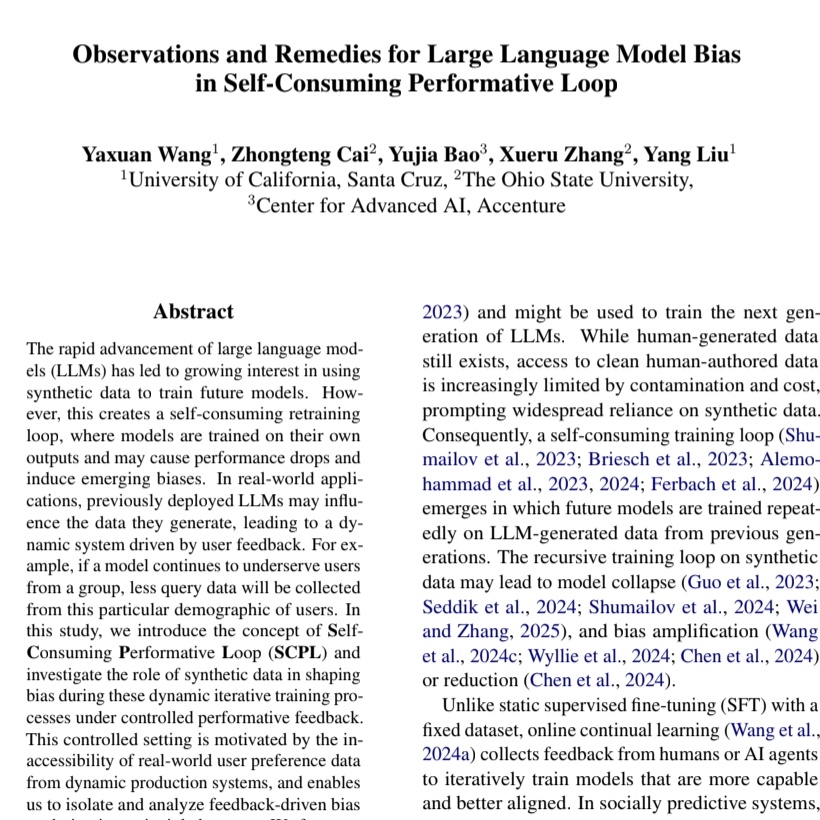

This paper shows “Aha moments” in reasoning models are mostly illusion. Models don’t really self-correct; accuracy improves only when we force rethinking under uncertainty :)

Read here - The Illusion of Insight in Reasoning Models share.google/ZcQwsptaWMvGCF…

English

@drfeifei As a young AI researcher, this is the frontier that excites me most. Moving from words to worlds through world models feels essential for true spatial intelligence. Looking forward to learning from this.

English

AI’s next frontier is Spatial Intelligence, a technology that will turn seeing into reasoning, perception into action, and imagination into creation. But what is it? Why does it matter? How do we build it? And how can we use it?

Today, I want to share with you my thoughts on building and using world models to unlock spatial intelligence in this essay below. 1/n

English

Som Jaiswal retweetledi

We’re hiring exceptional engineers to join our team.

We’re building something bold at the intersection of AI x Finance with high-impact, technically challenging, and moving fast.

If you thrive in AI and backend engineering, reach out: barot@spacesos.com

English

Som Jaiswal retweetledi