Ward Plunet

13.4K posts

@StartupYou

PhD in neuroscience, looking at the intersection between machine learning and neuroscience #machinelearning #AI #neuroscience

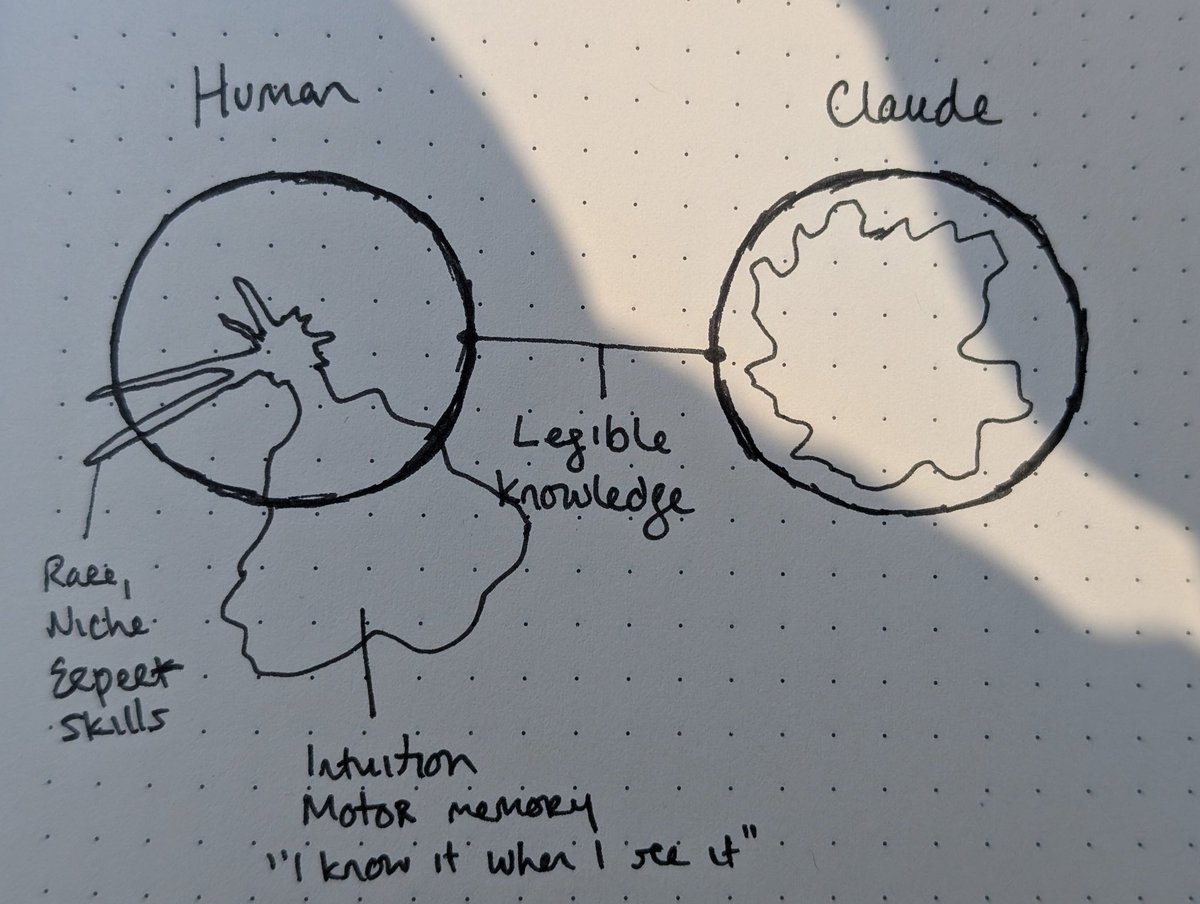

For example, we gave Claude an impossible programming task. It kept trying and failing; with each attempt, the “desperate” vector activated more strongly. This led it to cheat the task with a hacky solution that passes the tests but violates the spirit of the assignment.

🔥 AI just got its own infinite laboratory. Introducing LabWorld — the leap for AI-powered science. LabOS just turned real biomedical protocols into fully executable, high-fidelity digital simulations. This changes everything for AI scientists. 🧬⚡

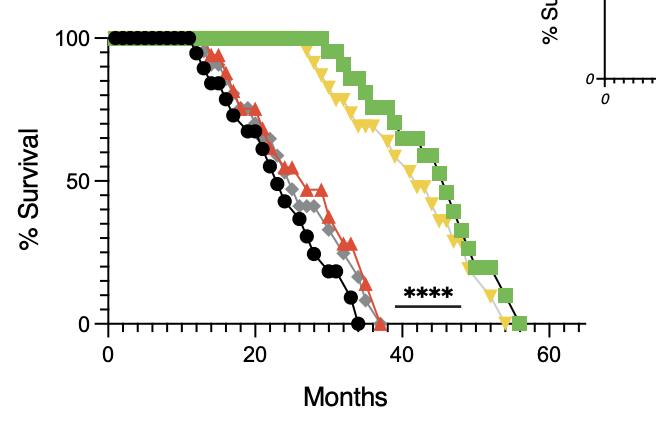

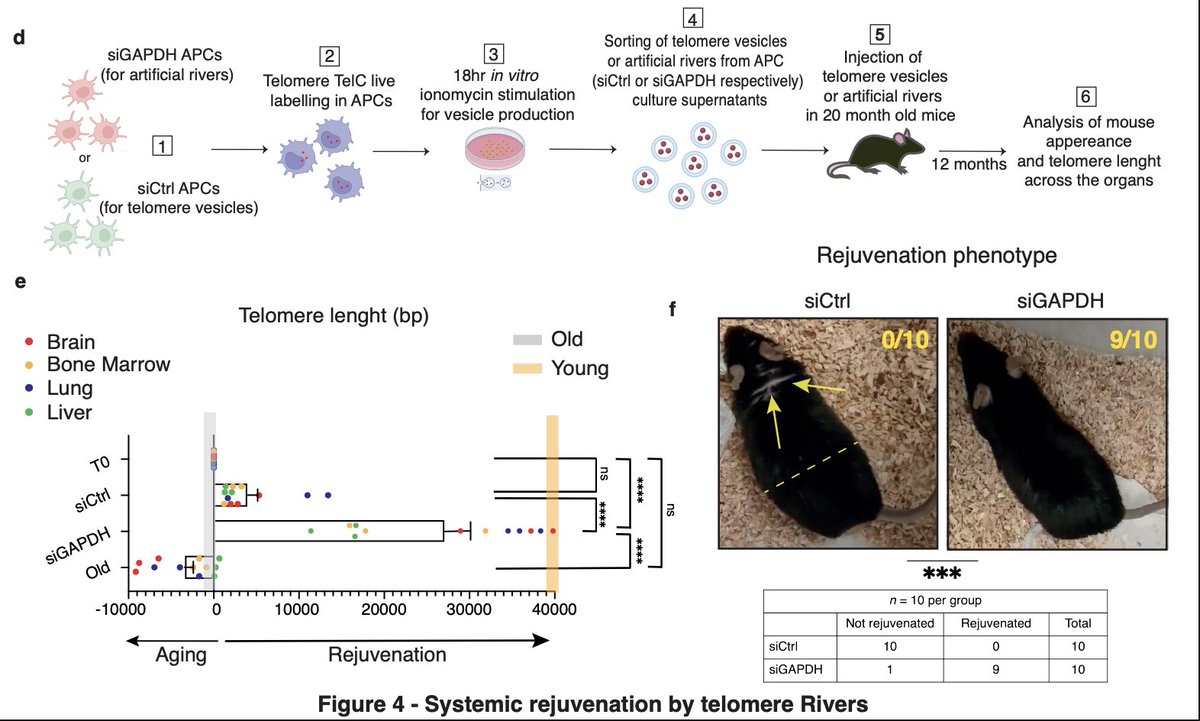

David Sinclair said: "You can reverse aging by 75% in 6 weeks… by reinstalling the 'software' of the body so that it's young again." This idea sprouted when he showed in his first experiment that you can accelerate aging in mice: "We took two mice born on the same day: same age, same genetics. We 'scratched the CD' of one mouse, corrupting its software and accelerating its aging. The result was dramatic. One looked far older than its brother." He reasoned that if you can induce aging, you can also take it away. Tomorrow, I'll share his experiment on how he reversed aging in mice (and then monkeys). - @davidasinclair

Today we're introducing TRIBE v2 (Trimodal Brain Encoder), a foundation model trained to predict how the human brain responds to almost any sight or sound. Building on our Algonauts 2025 award-winning architecture, TRIBE v2 draws on 500+ hours of fMRI recordings from 700+ people to create a digital twin of neural activity and enable zero-shot predictions for new subjects, languages, and tasks. Try the demo and learn more here: go.meta.me/tribe2

Brain tissue is as soft as gelatin. Most silicon electrodes implanted into it are ~1,000,000x stiffer. That mismatch isn't a small detail; it's one of the main reasons BCIs fail over time. #Neurotechnology #BCI #BrainComputerInterface #Neuroscience #MaterialsScience

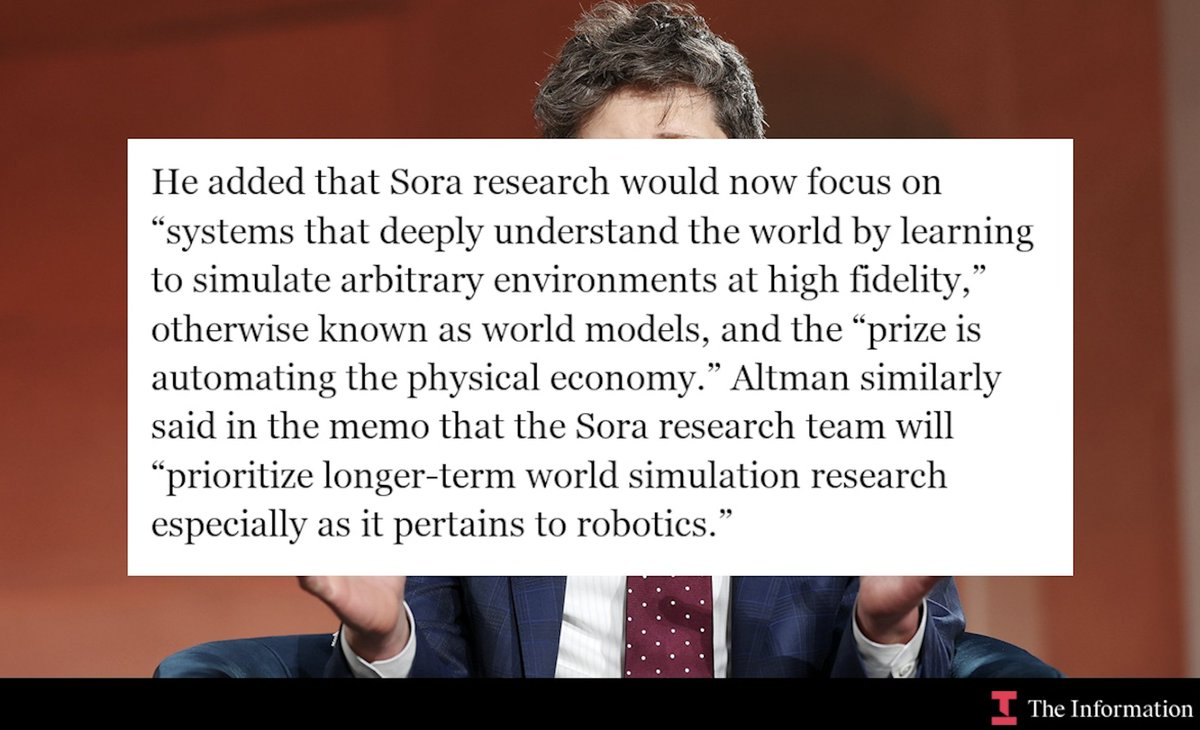

Either OpenAI has officially achieved AGI or this is the biggest troll move ever:

- they renamed the product organization to "AGI Deployment"
- Altman says the next LLM is a "very strong model"
- the team believes it can really accelerate the economy

Quote: "Altman also said that the company would be renaming senior executive Fidji Simo’s product organization to “AGI Deployment,” a reference to artificial general intelligence, or AI that’s roughly on par with humans."

Altman also says that Spud, a "very strong model," is arriving in “a few weeks” and that the team believes it “can really accelerate the economy.”

Introducing Hyperagents: an AI system that not only improves at solving tasks, but also improves how it improves itself.

The Darwin Gödel Machine (DGM) demonstrated that open-ended self-improvement is possible by iteratively generating and evaluating improved agents, yet it relies on a key assumption: that improvements in task performance (e.g., coding ability) translate into improvements in the self-improvement process itself. This alignment holds in coding, where both evaluation and modification are expressed in the same domain, but breaks down more generally. As a result, prior systems remain constrained by fixed, handcrafted meta-level procedures that do not themselves evolve.

We introduce Hyperagents, self-referential agents that can modify both their task-solving behavior and the process that generates future improvements. This enables what we call metacognitive self-modification: learning not just to perform better, but to improve at improving.

We instantiate this framework as DGM-Hyperagents (DGM-H), an extension of the DGM in which both task-solving behavior and the self-improvement procedure are editable and subject to evolution. Across diverse domains (coding, paper review, robotics reward design, and Olympiad-level math solution grading), hyperagents enable continuous performance improvements over time and outperform baselines without self-improvement or open-ended exploration, as well as prior self-improving systems (including DGM). DGM-H also improves the process by which new agents are generated (e.g., persistent memory, performance tracking), and these meta-level improvements transfer across domains and accumulate across runs.

This work was done during my internship at Meta (@AIatMeta), in collaboration with Bingchen Zhao (@BingchenZhao), Wannan Yang (@winnieyangwn), Jakob Foerster (@j_foerst), Jeff Clune (@jeffclune), Minqi Jiang (@MinqiJiang), Sam Devlin (@smdvln), and Tatiana Shavrina (@rybolos).
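To make the idea of editing both the task level and the meta level concrete, here is a toy Python sketch. It is not the DGM-H implementation: the `Agent` fields, the numeric target task, the step-size mutation, and the two-way tournament for parent selection are all assumptions chosen for illustration. The only point it shows is that the procedure generating new agents (`step_size` here) is itself inherited and mutated alongside the task behavior (`solution`).

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    solution: float   # task-level behavior (toy stand-in: a single number)
    step_size: float  # meta-level procedure (toy stand-in: how boldly to self-modify)


def task_score(agent: Agent) -> float:
    """Hypothetical task: get as close as possible to a fixed target value."""
    target = 3.0
    return -abs(agent.solution - target)


def self_modify(parent: Agent, rng: random.Random) -> Agent:
    """Create a child by editing the meta level first, then the task level."""
    # Meta-level edit: the improvement procedure itself is mutated.
    new_step = parent.step_size * rng.choice([0.5, 1.0, 2.0])
    # Task-level edit: generated using the (possibly changed) procedure.
    new_solution = parent.solution + rng.gauss(0.0, new_step)
    return Agent(solution=new_solution, step_size=new_step)


def evolve(generations: int = 200, seed: int = 0) -> list[Agent]:
    """Grow an archive of agents; every agent ever created stays available."""
    rng = random.Random(seed)
    archive = [Agent(solution=0.0, step_size=1.0)]
    for _ in range(generations):
        # Assumed selection rule: the better of up to two random archive members.
        candidates = rng.sample(archive, k=min(2, len(archive)))
        parent = max(candidates, key=task_score)
        archive.append(self_modify(parent, rng))
    return archive


if __name__ == "__main__":
    archive = evolve()
    best = max(archive, key=task_score)
    print(f"best solution={best.solution:.3f}, step_size={best.step_size:.3f}")
```

In this toy, a lineage whose `step_size` suits the task tends to produce better children, so the meta-level setting is selected for indirectly. A real system would replace the two numbers with editable agent code and the target with benchmark evaluation.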