Stefano Straus

708 posts

@milesdeutscher Switching models mid-session means rebuilding the cache at a higher cost. Do the math first.

English

@levelsio In Italy it's called VMC: controlled mechanical ventilation.

English

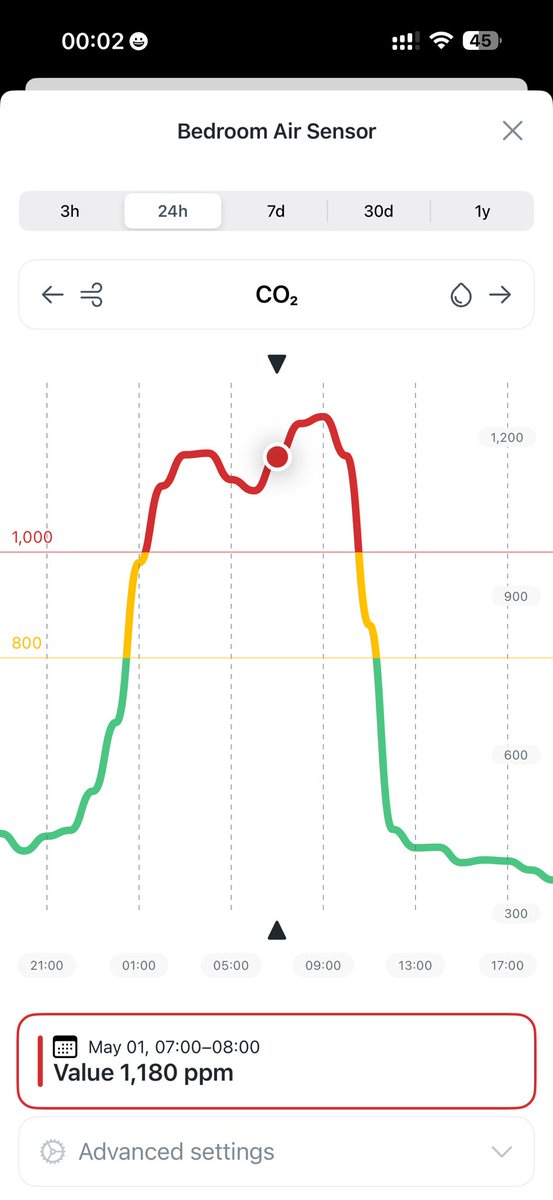

I still haven't solved the CO2 bedroom challenge

You open the window and you get woken up by a 6am garbage truck or barking dogs and sunlight

You close it, you suffocate in 1200 ppm at 5am

I guess you really need some mini tube in your wall with a vent that opens and closes based on internal CO2 but how do I build that?
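
On the control side, a vent that "opens and closes based on internal CO2" could be sketched as a simple hysteresis controller. This is only the decision logic, not the hardware: the class name `VentController` and both thresholds (1000 ppm to open, 700 ppm to close) are assumptions made for illustration, not values from the post.

```python
from dataclasses import dataclass


@dataclass
class VentController:
    """Hysteresis controller: open at or above open_ppm, close at or below close_ppm."""
    open_ppm: int = 1000   # assumed threshold, pick your own
    close_ppm: int = 700   # assumed threshold, pick your own
    is_open: bool = False

    def update(self, co2_ppm: float) -> bool:
        """Feed one CO2 reading and return whether the vent should be open."""
        if not self.is_open and co2_ppm >= self.open_ppm:
            self.is_open = True
        elif self.is_open and co2_ppm <= self.close_ppm:
            self.is_open = False
        return self.is_open


# Hypothetical overnight readings in ppm
controller = VentController()
readings = [600, 900, 1050, 950, 800, 690, 750]
states = [controller.update(r) for r in readings]
```

The gap between the two thresholds keeps the vent from flapping open and shut when the reading hovers around a single limit.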

English

@Pinperepette So you read from the session and parse it? I went straight to wrapping the PTY and building a control MCP instead. Take a look at github.com/sstraus/tuicom…. Did you use ratatui?

Italiano

How do you use Claude Code? I built myself a UI where Claude works directly inside the projects, with a real editor and terminal, multi-project support, and SSH connections for working on remote machines. While it generates code, it doesn't paste stuff into the chat but writes the files into the editor in real time, so you see everything as it happens and can step in right away. I've been testing it for a few days, then I'll release it.

Italiano

@llmdevguy So for coding, should we use 5.4 or 5.3-codex? Which one is more efficient?

English

🔥My first article is done!

TL;DR: Use GPT-5.5 with low or even no thinking mode.

And remember to use precise prompts.

BTW, I forgot to mention not to poison the context. Once you're done with a task, clear the context. This is important with GPT-5.5.

Mateusz Mirkowski@llmdevguy

English

Claude Code’s cache seems to drop multiple times within the same session.

The practical impact is that we are paying more than expected, especially after a 10-minute break, when the next request appears to rebuild context instead of reusing the cached prefix.

The documented TTL should be around 1 hour, so this may be a different root cause, but the business effect is the same: cache misses, higher token cost, and slower interactions.
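
A rough way to spot these drops, assuming you can export one usage record per request with the token counters the Anthropic API reports (`input_tokens`, `cache_creation_input_tokens`, `cache_read_input_tokens`). The helper names, the 0.5 threshold, and the sample session below are hypothetical.

```python
def cache_hit_ratio(usage: dict) -> float:
    """Share of one request's prompt tokens that were read from the cache."""
    read = usage.get("cache_read_input_tokens", 0)
    written = usage.get("cache_creation_input_tokens", 0)
    fresh = usage.get("input_tokens", 0)
    total = read + written + fresh
    return read / total if total else 0.0


def find_cache_drops(usages: list[dict], threshold: float = 0.5) -> list[int]:
    """Indexes of requests (after the first) whose hit ratio falls below threshold."""
    return [
        i for i, usage in enumerate(usages)
        if i > 0 and cache_hit_ratio(usage) < threshold
    ]


# Hypothetical session: request 0 builds the cache, request 1 reuses it,
# request 2 rebuilds everything, which is the pattern described above.
session = [
    {"input_tokens": 200, "cache_creation_input_tokens": 50_000, "cache_read_input_tokens": 0},
    {"input_tokens": 300, "cache_creation_input_tokens": 1_000, "cache_read_input_tokens": 50_000},
    {"input_tokens": 250, "cache_creation_input_tokens": 52_000, "cache_read_input_tokens": 0},
]
drops = find_cache_drops(session)
```

The first request is skipped on purpose, since it always has to write the cache.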

English

@asaio87 I made it in 30 minutes with Claude. Just plan it right and use a proper framework. Don't rely just on Claude Code.

English

@RLanceMartin Sorry, but Claude's memory is bad. I had to turn it off in CC; it was creating more problems than it solved.

English

a few lessons we’ve learned on context management + long-term memory

Lance Martin@RLanceMartin

English

@solomonneas @mattpocockuk As usual, it is full of new bugs.

English

@mattpocockuk They're pushing versions twice a day at this point. At least they're trying... ish

English

Tried Opus 4.7 again today. Still not there.

Too verbose. Asks pointless questions. Suggests irrelevant alternatives. But the real issue is worse.

It misunderstands intent and then takes destructive actions with confidence. If your request doesn’t match its internal assumption, it “fixes” things by deleting or rewriting code that was perfectly fine.

That’s not just annoying. That’s dangerous.

This isn't a minor UX issue. It’s a trust problem.

English

Day after the launch, not much to report. Please give a thumbs up! I know you'll like the tool, just like me!

x.com/StefanoStraus/…

Stefano Straus@StefanoStraus

Like what we do? Leave us a review and help us spread the word! producthunt.com/products/tuico…

English

TUICommander: AI-native IDE for multi-agent development producthunt.com/products/tuico… via @producthunt

Français

Like what we do? Leave us a review and help us spread the word! producthunt.com/products/tuico…

English

Less than a week into the new Opus, and a new pattern is emerging.

We started with issues on Claude Opus 4.7: higher token consumption, more verbosity, less predictable behavior. Enough to force a rollback to Claude Opus 4.6.

Then we found something worse.

The main cost driver was not only the model. It was cache behavior, and it affects both.

Cache invalidation is unstable. The cache drops more often than expected, sometimes after short idle windows, sometimes almost immediately without a clear trigger. Every drop forces a full context rebuild.

In practice, this means token consumption increases silently. Not only because prompts are bigger, but because the system keeps re-sending context that should have been cached.

The key point is this: the cache is what saves you from an unsustainable cost spike, since it usually covers 99% of the traffic.
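
A back-of-the-envelope sketch of why a single drop hurts. The multipliers are assumptions (a cache read billed at 0.1x and a cache write at 1.25x the normal input price), costs are in relative units rather than real prices, and `request_cost` is a hypothetical helper.

```python
BASE = 1.0          # relative cost of one uncached input token
CACHE_READ = 0.1    # assumed multiplier for tokens read from the cache
CACHE_WRITE = 1.25  # assumed multiplier for tokens written to the cache


def request_cost(context_tokens: int, new_tokens: int, cache_hit: bool) -> float:
    """Relative input cost of one request against an existing context."""
    if cache_hit:
        # The old context is read from the cache, only the new part is written.
        return context_tokens * CACHE_READ + new_tokens * CACHE_WRITE
    # Cache dropped: the whole prompt is written to the cache again.
    return (context_tokens + new_tokens) * CACHE_WRITE


# 99% of the prompt is already cached, 1% is new
hit = request_cost(99_000, 1_000, cache_hit=True)
miss = request_cost(99_000, 1_000, cache_hit=False)
ratio = miss / hit  # roughly 11x under these assumptions
```

Under these assumptions, one rebuild costs about as much as eleven cached requests of the same size.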

The takeaway is uncomfortable.

We moved away from 4.7 to reduce token usage, but uncovered an infrastructure issue that can offset or even exceed those savings.

So the problem is no longer just model efficiency. It is the interaction between model and runtime. Probably a Claude Code or infrastructure bug.

If you are running Claude Code at scale and not tracking cache behavior, you are flying blind. @bcherny

English

@Pinperepette The hundredth person to write the same thing 🤣

Welcome to the club

Italiano

Claude Reforge is the layer you put under Claude Code when you're fed up with watching it repeat the same mistakes. It observes what happens while you work, captures errors, actions, and outcomes, and compresses them into episodes and rules. On the next run it doesn't start from zero but with real context already loaded, so it doesn't try things at random: it avoids the paths that already failed and reuses the ones that worked. It's all local, no embeddings, no API, just runtime learning that turns every past debugging session into future decisions and drastically shortens the time between problem and fix. github.com/Pinperepette/c…

Italiano

✨ Integrated Hoodmaps now into my new site hotelist.com

I started it 2 years ago but I'm finally working on it again

It's a hotel booking site with hotels rated by AI to avoid all the fake reviews and paid listings of modern booking sites

Anyway, if you zoom in to any city, it'll pull the Hoodmaps neighborhood data, so you can stay in the cool area, not the tourist area or the crime area!

Let me know what you think 😊

@levelsio@levelsio

This was asked for for YEARS and I could never find time to build it myself

🗺️ Hoodmaps for 🏡 Airbnb

Hoodmaps is my app that lets you find out where to stay in a city, it classifies neighborhoods by:

🟥 Tourists
🟨 Cool
🟩 Rich
🟦 Suits
⬜️ Normies

I asked Claude Code to build it and it kinda works, not perfect but a start

I just need to get the map to update faster and then publish it as a Chrome extension

For now you can try it though: hoodmaps.com/airbnb-overlay…

Copy paste that in console on Airbnb map, type your city as a slug (like los-angeles) and it should work

Happy booking!!!

English

@readcopyupdate @panda_liyin @adalengineer Have you used it? It's so bad. Over 300% more tokens are used for the same task.

English

@panda_liyin @adalengineer some evals are showing it uses considerably fewer tokens to complete tasks successfully, which would be a net pricing win

English

We decided not to hype Opus 4.7 in @adalengineer.

After testing it, our view was simple: for production use, it feels more like a regression than an upgrade over Opus 4.6.

A few reasons:

- weaker performance on real-world tasks

- less effortful reasoning

- a hidden pricing hit from tokenizer changes, with the same prompt counting as up to 35% more tokens
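
To put a number on the tokenizer point, here is a minimal sketch assuming the per-token price stays the same and taking the 35% figure as the worst case. The helper name is hypothetical.

```python
def effective_cost_multiplier(token_inflation: float, price_change: float = 0.0) -> float:
    """Cost of the same prompt relative to the old tokenizer and the old price."""
    return (1 + token_inflation) * (1 + price_change)


# Same prompt, up to 35% more tokens, unchanged per-token price (assumption)
worst_case = effective_cost_multiplier(0.35)

# Share of tokens the new model would need to save per task just to break even
break_even_reduction = 1 - 1 / worst_case  # about 0.26
```

In the worst case, the new model would have to finish the same task with about a quarter fewer tokens just to cost the same.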

So while Opus 4.7 is now available in the latest version of AdaL, we’re not positioning it as a headline improvement.

Right now, our team is still sticking with Opus 4.6, Gemini 3.1 Pro, and GPT 5.4.

We’d rather be honest than promotional.

If you’ve used Opus 4.7, I’d love to hear your review.

English

@panda_liyin @adalengineer Exactly the same experience. We rolled back as well the day after the release.

English

@mattrubens It has been a good journey together. Good luck!

English