Success

@SuccessVsdworld

Silence Over Noise. ML || DL || Quant

You all are overthinking your app ideas. This app makes your Mac moan when you slap it -> it made $5,000 in 3 days. Just ship it.

Share a piece of introspection about yourself

Open Lovable is live and now has 6,000+ GitHub stars 💜 💙 It's a Next.js app I built that instantly reimagines any website and generates full React apps in seconds. Powered by @firecrawl, @GroqInc, @e2b and more. Here's a complete breakdown of the project in 4 minutes👇

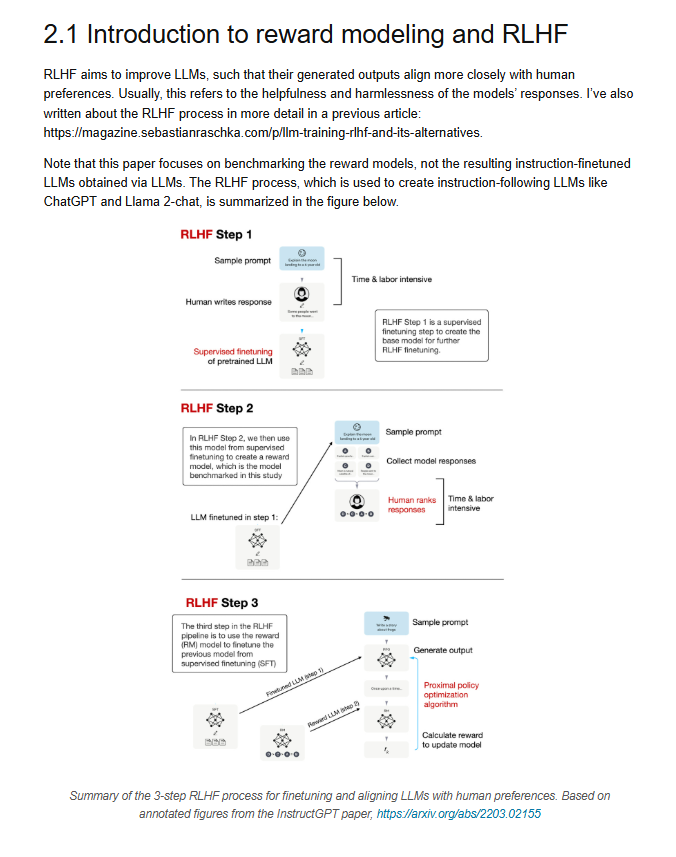

ML grind day 137/365🎯 (fine-tuning LLMs) > visualized how LoRA saves memory > looked at how quantization handles hardware limits > used QLoRA + PEFT to fine-tune on a normal GPU (not my code btw) > read a few pages of a book
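For anyone curious why LoRA and quantization make a normal GPU enough, here is a back-of-envelope sketch. It is not the code from the post; it assumes the standard LoRA setup (frozen weight W of shape d x k, trainable update B @ A with rank r), and the layer size, rank, and 7B model size are hypothetical numbers picked for illustration. The helper names are also hypothetical.

```python
def full_params(d: int, k: int) -> int:
    # Full fine-tuning trains every entry of the d x k weight matrix W.
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    # LoRA freezes W and trains a low-rank update B @ A,
    # where B has shape (d, r) and A has shape (r, k).
    return r * (d + k)


def weight_memory_gb(n_params: int, bits: int) -> float:
    # Memory for storing the weights alone at a given bit width
    # (ignores optimizer state, activations and quantization constants).
    return n_params * bits / 8 / 1e9


if __name__ == "__main__":
    d = k = 4096  # hypothetical layer size
    r = 8         # hypothetical LoRA rank
    print(full_params(d, k))     # 16777216
    print(lora_params(d, k, r))  # 65536

    n = 7_000_000_000  # hypothetical 7B-parameter model
    print(weight_memory_gb(n, 16))  # 14.0 (16-bit weights)
    print(weight_memory_gb(n, 4))   # 3.5  (4-bit weights, as in QLoRA)
```

With these numbers LoRA trains 256x fewer parameters for that layer, and storing the frozen base weights in 4-bit instead of 16-bit cuts weight memory by 4x, which is roughly the combination QLoRA relies on.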