David Maring

264 posts

@Tacitus535

Creative soul: Builder, techie, data wizard, discovered AI in Summer '24—now crafting lyrics, AI music, and AI videos. Next: AI short stories

@BasedPresby ~2 weeks

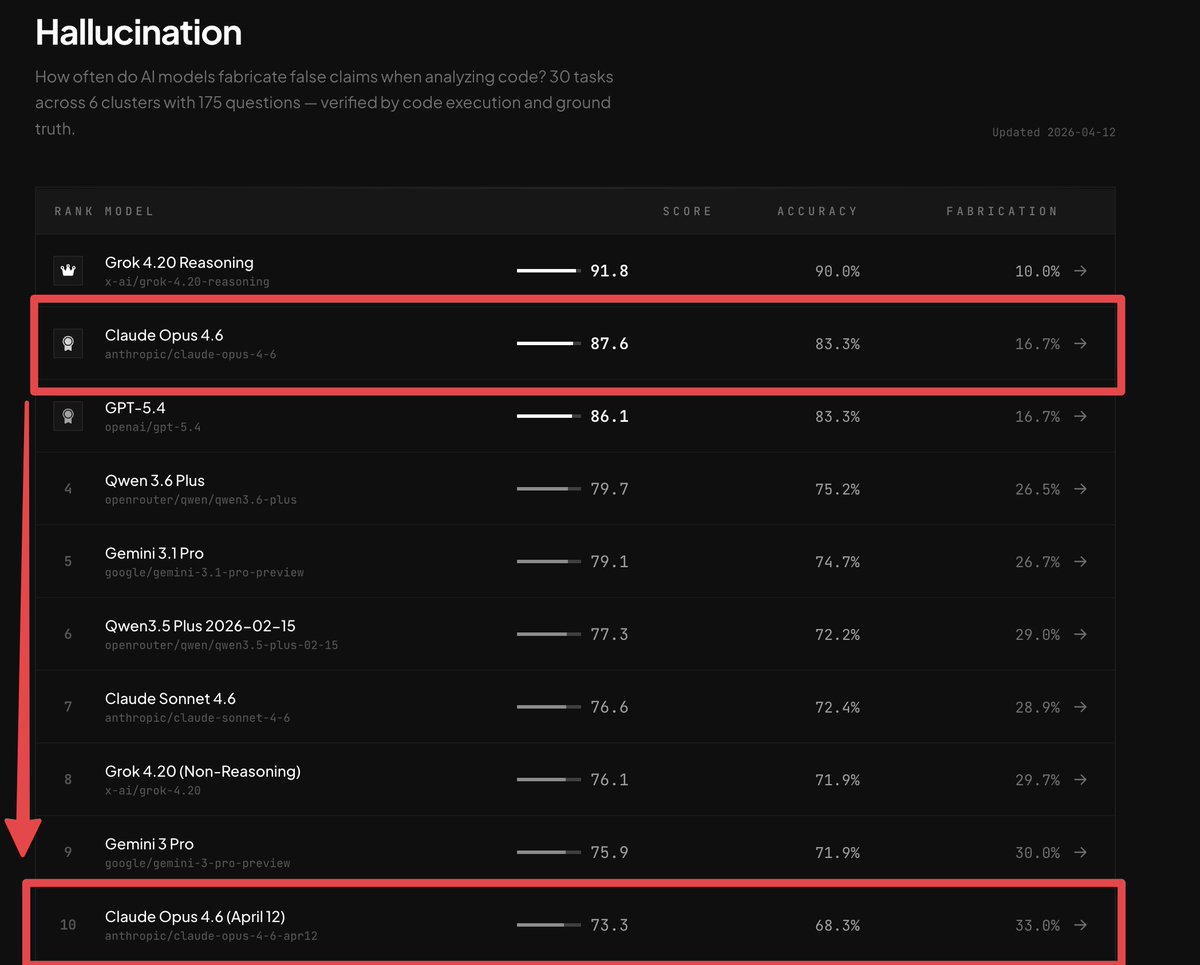

Claude Mythos - honestly cannot remember seeing a jump this huge in years. Too bad Anthropic is not releasing it anytime soon, although there is not much pressure when they are still the leader.

How a lost 70-year-old radio show foresaw the 2026 age of AI and robots we are entering today. A blueprint for what lies ahead: self-replicating abundance, legal battles, tax shocks, and the ultimate choice between surrender and creative reclamation. readmultiplex.com/2026/04/04/you…

It’s over. Officially. No more Claude in OpenClaw. Way to drop this late on a Friday afternoon, @AnthropicAI. So lame.

Seedance 2.0 – officially on Higgsfield with 65% OFF! Next-gen physics in your AI videos. Joint audio-video generation. Best-in-class picture control. The world’s best video model lands on Higgsfield right on our birthday. Available only through business email verification in all regions except the US and Japan.

And just like that, Elon Musk's last xAI cofounder is out. Ross Nordeen has left the company, according to people with knowledge of his exit. If you're still counting, Nordeen is the eighth cofounder to leave this year and the seventh since SpaceX acquired xAI.